Why the Memory Chip Supercycle Won’t Follow the Old Playbook

Key Takeaways

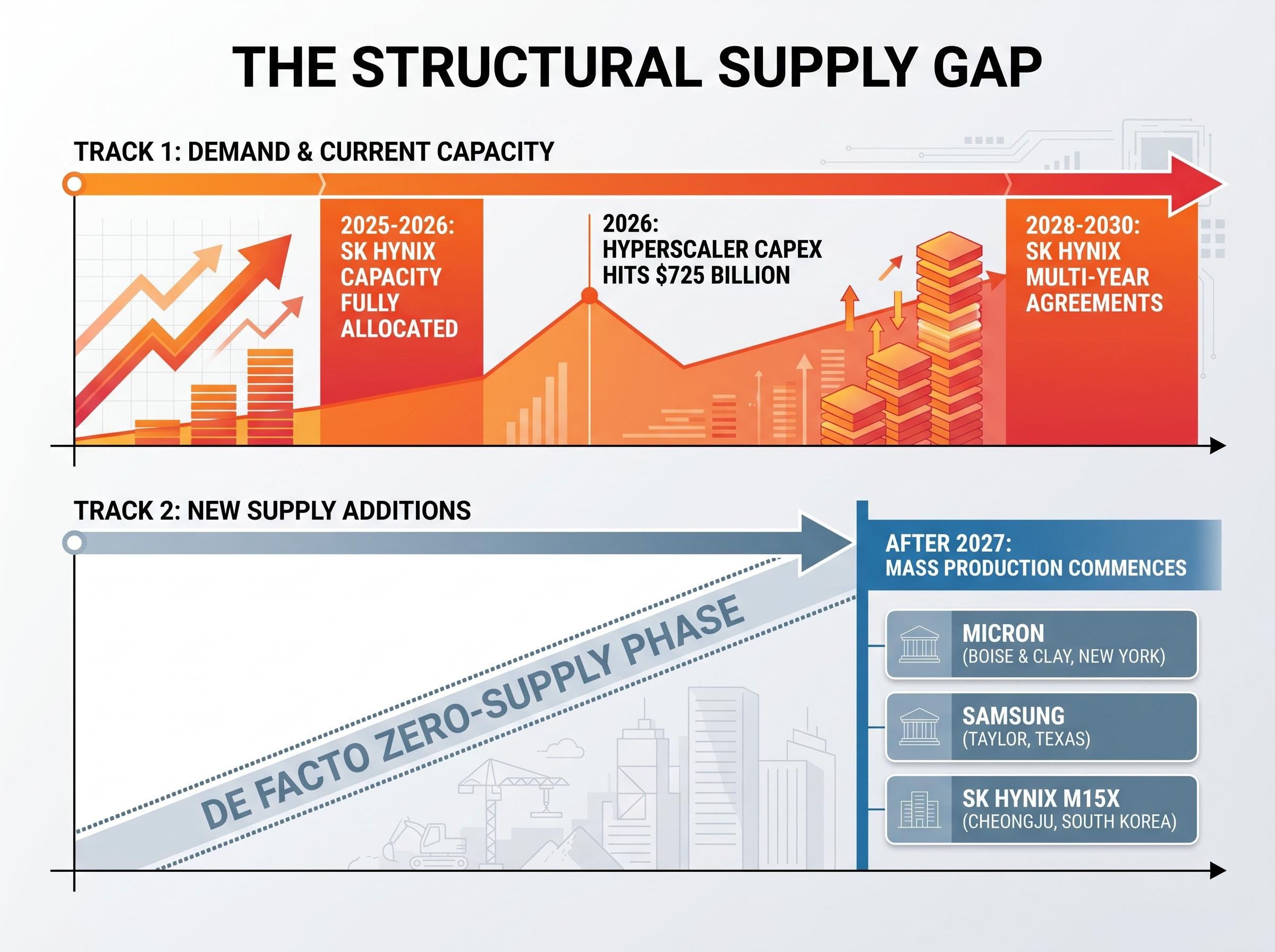

- Combined hyperscaler capex from the four largest U.S. cloud operators is projected to reach $725 billion in 2026, a 77% year-over-year increase, with projections surpassing $1 trillion in 2027.

- AI data centre operators now account for an estimated 70% of total memory shipment volumes, making the data centre the dominant demand centre for the entire memory industry.

- The memory industry is entering a de facto zero-supply phase in the near term, with new manufacturing lines not expected to reach mass production until after 2027, creating a structural supply vacuum.

- SK Hynix has shifted to foundry-style multi-year supply agreements extending through 2028-2030, marking a regime change in memory market structure that reinforces rather than shortens the cycle's duration.

- Agentic AI, AI smartphones and PCs, and automotive ADAS are broadening memory demand well beyond the hyperscaler tier, raising memory intensity across every layer of the hardware stack.

Combined capital expenditure from the four largest U.S. cloud operators is estimated to reach $725 billion in 2026, a 77% year-over-year increase, with projections surpassing $1 trillion in 2027. Memory is the primary bottleneck in turning that spend into usable compute. The memory chip supercycle now unfolding is structurally unlike any previous DRAM upcycle. Where past cycles turned on consumer electronics refresh events that eventually plateaued, the current one originates in hyperscaler data centres and is broadening across every device category.

What follows maps the demand-side forces driving this repricing, explains the mechanics of a supply vacuum that cannot be filled before 2027, and describes how agentic AI is extending the demand curve well beyond the data centre. The result is a macro framework for understanding why this cycle behaves differently from its predecessors, and why the standard cyclical playbook does not apply.

When AI infrastructure spending stops being optional

There was a period, not long ago, when hyperscaler AI spending was a line item managers could dial up or down. That period is over.

KB Securities characterises the shift directly:

AI capex has shifted from “an optional cost to an existential competitive requirement.”

The competitive logic is straightforward. Any cloud operator that falls behind in GPU cluster density, model training throughput, or inference capacity loses enterprise customers to rivals who invested earlier. The result is a spending contest with no natural ceiling, one that now carries economy-wide implications as AI infrastructure becomes the substrate for software, logistics, healthcare, and financial services.

The capex postures of the four largest operators reflect this:

- Meta Platforms raised its 2024 capex outlook to $35-40 billion (from a prior $30-37 billion range), explicitly tied to AI servers and data centres, with management confirming further increases in 2025 and 2026.

- Alphabet signalled 2024 capex would be “notably larger” than 2023, driven primarily by AI server and data centre investment.

- Amazon indicated AWS-related capex would grow year over year in 2025, with AI infrastructure as the primary driver.

- Microsoft described “significant” capital investment to expand Azure AI infrastructure, with spending continuing to increase through fiscal year 2025 and beyond.

KB Securities estimates that combined hyperscaler capex will reach $725 billion in 2026 and surpass $1 trillion in 2027. No individual company has published guidance matching that figure, but every publicly available signal points in the same direction: up, and accelerating.

The $130 billion that Amazon, Microsoft, Alphabet, and Meta collectively committed in Q1 2026 alone gives the annual projection its foundation. Held flat, that quarterly run rate annualises to roughly $520 billion, so the $725 billion full-year figure assumes quarterly spending keeps rising through the rest of the year, which is the direction every operator's guidance points.
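The arithmetic behind these capex figures can be checked with a short sketch. It uses only the numbers quoted above ($725 billion, 77% growth, $130 billion in Q1 2026). The assumption that each quarter grows at a constant rate over the previous one is purely illustrative, not something KB Securities or the operators have stated, and the function names are hypothetical.

```python
# Back-of-envelope check on the capex figures quoted in the article.
CAPEX_2026_BN = 725.0   # KB Securities estimate for 2026
YOY_GROWTH = 0.77       # stated year-over-year increase
Q1_2026_BN = 130.0      # combined Q1 2026 commitment


def implied_prior_year(total: float, growth: float) -> float:
    """Prior-year base implied by a total and its year-over-year growth rate."""
    return total / (1.0 + growth)


def full_year_total(q1: float, g: float, quarters: int = 4) -> float:
    """Full-year spend if each quarter grows by a constant rate g (assumption)."""
    return sum(q1 * (1.0 + g) ** i for i in range(quarters))


def required_quarterly_growth(q1: float, target: float, tol: float = 1e-9) -> float:
    """Bisection search for the constant sequential growth rate that hits target."""
    lo, hi = 0.0, 1.0  # search between 0% and 100% growth per quarter
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if full_year_total(q1, mid) < target:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


base_2025 = implied_prior_year(CAPEX_2026_BN, YOY_GROWTH)
flat_run_rate = full_year_total(Q1_2026_BN, 0.0)
g_needed = required_quarterly_growth(Q1_2026_BN, CAPEX_2026_BN)

print(f"Implied 2025 base: ${base_2025:.0f}bn")
print(f"Flat Q1 run rate annualised: ${flat_run_rate:.0f}bn")
print(f"Sequential growth needed for $725bn: {g_needed:.1%} per quarter")
```

Under these assumptions the implied 2025 base is about $410 billion, a flat Q1 run rate annualises to $520 billion, and reaching $725 billion would take sequential growth of a little under 23% per quarter.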


What the $1 trillion number actually buys, and why memory is the binding constraint

A trillion dollars in AI infrastructure spending translates into physical hardware: GPU clusters, networking fabric, power delivery systems, cooling infrastructure, and the memory stack that sits between compute and storage. Every AI inference query and every training run reads from and writes to memory. Without sufficient memory bandwidth and capacity, the GPUs sit idle.

That dependency explains why AI data centre operators now account for an estimated 70% of total memory shipment volumes, according to KB Securities. The data centre is no longer one demand category among many. It is the demand centre for the entire memory industry.

Within that demand, one product category is more severely constrained than any other: High Bandwidth Memory (HBM), a specialised form of DRAM that is bonded directly to GPU dies using advanced packaging. HBM provides the bandwidth that AI accelerators require for large model inference and training. It is produced by only three companies on Earth, and it requires a fundamentally different manufacturing process from standard DRAM.

The three suppliers who control the HBM stack

| Supplier | HBM capacity status | Primary customer relationships |
|---|---|---|
| SK Hynix | Dominant market share (~50%+); capacity fully allocated through at least 2025-2026 | Primary HBM supplier to Nvidia |
| Samsung | Ramping HBM3E qualification; catching up in market share | Qualifying with major GPU vendors for volume ramp through 2025 |
| Micron | Design wins confirmed; supply constrained relative to demand | HBM3E offerings aligned with leading GPU platforms |

All three suppliers use qualitative “sold out” and “full capacity” language in public disclosures, confirmed across coverage from Morgan Stanley, Goldman Sachs, and Jefferies. No incremental HBM supply is available outside already-allocated contracts.

Samsung’s HBM qualification gap relative to SK Hynix is the key operational variable shaping how market share evolves among the three suppliers. As of early May 2026, Samsung held below 30% of the HBM market versus SK Hynix’s approximately 62% share, and improving yields on advanced memory nodes is Samsung’s most critical near-term challenge.

Why new factories cannot solve a problem that exists right now

Building a new DRAM or HBM fabrication facility is a multi-year undertaking. The process involves equipment procurement (with its own supply constraints), site preparation, cleanroom construction, process qualification across dozens of manufacturing steps, and a slow volume ramp to commercial yield. From ground-breaking to meaningful output, the timeline typically spans four to five years.

The major fab investment programmes underway illustrate the gap:

- Micron, Boise and Clay, New York: Tens of billions committed. Management has explicitly stated that meaningful output from these US facilities will materialise in the latter half of the decade, not in the immediate 2025-2026 period.

- Samsung, Taylor, Texas: Advanced logic and memory complex. Recent 2025 coverage indicates schedule adjustments, with no confirmed DRAM or HBM production start dates.

- SK Hynix, M15X (Cheongju, South Korea): Targeted for mid-2020s ramp. High-volume HBM output timing has not been publicly specified.

None of these programmes contribute meaningful new HBM or DRAM supply before the latter half of the decade. KB Securities frames the near-term picture in stark terms:

The industry is entering a “de facto zero-supply phase” in the near term, with new memory manufacturing lines not expected to commence mass production until after 2027.

The implication is mechanical, not speculative. Demand is compounding now. Supply relief is structurally years away.

Understanding the memory supercycle: how this cycle differs from every previous one

A memory supercycle refers to a prolonged period of rising prices and tight supply that extends well beyond the typical boom-and-bust rhythm of the semiconductor memory market. Previous cycles followed a recognisable pattern: a new hardware category (PCs in the 1990s, smartphones in the 2010s) drove a demand surge, manufacturers expanded capacity, and prices eventually fell as supply caught up and consumer adoption plateaued.

The current cycle diverges on several structural dimensions:

- Demand driver: Previous cycles depended on discrete consumer product refresh events. The current cycle is driven by infrastructure investment from well-capitalised operators who have explicitly committed to multi-year spending regardless of economic conditions.

- Operator type: Consumer hardware OEMs operated on thin margins and adjusted procurement quickly. Hyperscalers operate on platform-economics logic and cannot afford capacity shortfalls.

- Contract structure: Previous cycles relied on spot and short-term contracts. The current cycle features multi-year supply agreements.

- Supply response lag: Previous cycles saw capacity additions within two to three years. Current fab timelines extend past 2027 before meaningful supply arrives.

Morgan Stanley characterises DRAM as being in an “early-stage, multi-year upcycle” driven by AI server demand. TrendForce data shows DRAM contract prices bottomed in 2023 and rose through 2024, with further increases projected into 2025 and continued strength in 2026. Goldman Sachs expects DRAM average selling prices to rise in 2025 and maintain strength into 2026.

Placing the current cycle against prior technology investment peaks provides scale. US IT spending reached 4.9% of GDP in Q1 2026, surpassing both the dot-com era peak of approximately 4.2% and the cloud buildout peak of approximately 3.8%. The comparison underscores why the infrastructure-driven demand dynamic has no close historical analogue.

TrendForce DRAM contract price data for Q1 2026 shows quarter-over-quarter increases across PC, server, and mobile DRAM segments, driven by sustained AI and data centre procurement. That corroborates the pattern of rising average selling prices that analysts at Morgan Stanley and Goldman Sachs had projected through the 2025-2026 period.

From spot contracts to foundry-style agreements

SK Hynix has transitioned to multi-year supply agreements extending through 2028-2030, structured on a foundry-style advance-order model, according to KB Securities. In a market historically dominated by spot and short-term contracts, this structural change carries two implications. It reduces earnings volatility for suppliers by locking in revenue visibility. And it provides demand certainty that extends the planning horizon for capacity investment, reinforcing the cycle’s duration rather than shortening it.

How memory demand is broadening across devices, vehicles, and AI workloads

Even if hyperscaler spending were to plateau tomorrow, the demand base has already diversified into channels that compound independently.

KB Securities projects that AI token consumption at leading cloud platforms will increase approximately threefold over the following six months and roughly sevenfold on a year-over-year basis:

AI token consumption at leading cloud platforms is projected to grow approximately sevenfold year over year, according to KB Securities estimates.
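The two token-growth figures above can be compared on a common basis by converting each to a constant monthly growth rate. The sketch below assumes smooth compounding, which is an illustrative simplification and not part of the KB Securities estimate; `monthly_rate` is a hypothetical helper name.

```python
def monthly_rate(multiple: float, months: int) -> float:
    """Constant monthly growth rate that compounds to `multiple` over `months`."""
    return multiple ** (1.0 / months) - 1.0


# Figures quoted in the article: ~3x over six months, ~7x year over year.
six_month_pace = monthly_rate(3.0, 6)
annual_pace = monthly_rate(7.0, 12)

# If the six-month pace were sustained for a full year, the multiple would be 3^2.
extrapolated_annual_multiple = 3.0 ** (12 / 6)

print(f"Monthly rate implied by 3x in six months: {six_month_pace:.1%}")
print(f"Monthly rate implied by 7x in twelve months: {annual_pace:.1%}")
print(f"3x per six months sustained for a year: {extrapolated_annual_multiple:.0f}x")
```

On this reading the threefold six-month figure implies roughly 20% monthly growth and the sevenfold annual figure roughly 18%, so the two are broadly consistent; sustaining the six-month pace for a full year would give ninefold rather than sevenfold growth. The difference may simply reflect different measurement windows.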

Three demand vectors outside the data centre are now contributing to the broadening:

- Agentic AI and edge workloads: Agentic AI refers to systems that chain tools, perform multi-step tasks, or operate continuously on behalf of users. These persistent, multi-step workloads require more continuous memory access than single-inference queries, pushing memory requirements higher at both the data centre and edge device level.

- AI smartphones and PCs: Running local AI models on-device requires higher DRAM and LPDDR configurations. Analysts expect AI PCs to normalise 12-16 GB DRAM configurations in mid-to-high-end devices, up from lower prior norms, raising average memory content per unit sold.

- Automotive AI: Advanced driver-assistance systems (ADAS) and autonomous driving functions require higher DRAM and NAND content per vehicle. As in-vehicle models grow more capable and run more locally, each car becomes another durable hardware platform pulling on memory supply.

Agentic AI workloads favour continuous memory access patterns over the burst-read profile of single-inference queries. AMD’s Q1 2026 procurement data identified this shift as the primary driver behind its decision to nearly double its server CPU total addressable market growth forecast, from 18% to 35% annually, a signal that memory intensity per compute cycle is rising across the hardware stack.
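To see what the change in AMD's forecast growth rate means in compounded terms, the sketch below applies both rates over a five-year horizon. The horizon is a hypothetical choice for illustration only; the source gives the annual rates (18% and 35%) but no forecast period.

```python
OLD_RATE = 0.18      # prior annual TAM growth forecast (from the article)
NEW_RATE = 0.35      # revised annual TAM growth forecast (from the article)
HORIZON_YEARS = 5    # hypothetical horizon, not stated in the source


def compounded_multiple(rate: float, years: int) -> float:
    """Size of the market relative to today after compounding at `rate`."""
    return (1.0 + rate) ** years


rate_ratio = NEW_RATE / OLD_RATE
old_multiple = compounded_multiple(OLD_RATE, HORIZON_YEARS)
new_multiple = compounded_multiple(NEW_RATE, HORIZON_YEARS)

print(f"Revised rate is {rate_ratio:.2f}x the prior rate")
print(f"Market multiple after {HORIZON_YEARS} years at 18%: {old_multiple:.2f}x")
print(f"Market multiple after {HORIZON_YEARS} years at 35%: {new_multiple:.2f}x")
```

The revised rate is about 1.94 times the prior one. Over the assumed five years, the market would grow to roughly 2.3 times its current size at 18% and roughly 4.5 times at 35%, so the gap between the two paths widens each year.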

KB Securities characterises the trend directly: agentic AI applications are anticipated to broaden memory demand beyond cloud server environments into on-device and physical AI use cases. The memory intensity of the economy is rising at every layer, not just at the hyperscaler tier.


The forces that could deepen or disrupt the cycle from here

The supply-demand imbalance has been established. The question is whether geopolitical and structural risk factors alter that balance, and in which direction.

Three risk scenarios warrant attention, and each carries a directional assessment:

- U.S. export controls on advanced GPUs and memory-adjacent equipment: Tightened progressively since 2022, these controls constrain Chinese AI buildout, potentially reducing one demand pocket. They simultaneously restrict the technology transfer that would allow new competitors to enter the supply side. The net effect is ambiguous on demand but clearly negative on supply diversification.

- Micron’s China exposure: Chinese cybersecurity reviews have previously targeted Micron, raising the risk of reduced market share in China. This reconfigures supply flows, potentially redirecting Micron volumes to non-Chinese buyers, without reducing global demand. The risk is to Micron’s revenue geography, not to the industry’s overall demand level.

- Geographic concentration of manufacturing: The majority of HBM and DRAM production is located in South Korea and Taiwan. South Korea’s position as a U.S. security ally with deep economic ties to China creates policy uncertainty around Korean firms serving Chinese customers. Any physical or geopolitical disruption to a major fab cluster would exacerbate rather than relieve tightness.

The asymmetry is consistent across scenarios. The geopolitical risks most likely to materialise reduce effective supply or redistribute demand without adding new capacity. Research across multiple sell-side sources supports the observation that these factors are skewed toward supply disruption or demand reconfiguration rather than supply surplus.

A supply-demand gap that compounding AI investment will not close on its own schedule

The analytical conclusion is structural, not sentiment-driven. Demand is already committed: hyperscaler capex reaching $725 billion in 2026, with no deceleration signal from any of the four largest operators. Supply cannot physically arrive before the latter half of the decade, a constraint imposed by fab construction timelines and equipment lead times, not by capital availability.

The demand picture operates on three layers. Hyperscaler capex is the primary engine. Agentic and on-device AI form the structural broadening layer, raising memory intensity across consumer and enterprise hardware. Automotive AI extends the demand curve into a long-duration hardware category whose replacement cycles run a decade or more.

The transition to multi-year supply agreements and foundry-style contracts marks a regime change in how the memory industry operates. Suppliers now have revenue visibility extending to 2028-2030. Buyers have committed capital on timelines that assume sustained tightness. The market structure itself has shifted to reflect the expectation that this cycle will not resolve on the timeline of any previous one.

Readers seeking to understand the implications at the individual company level may wish to examine SK Hynix and Samsung’s forward earnings profiles in the context of KB Securities’ average selling price projections, alongside Micron’s U.S. fab timeline relative to the post-2027 supply normalisation window.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions. Financial projections are subject to market conditions and various risk factors.

Frequently Asked Questions

What is a memory chip supercycle and how is it different from a normal DRAM cycle?

A memory chip supercycle is a prolonged period of rising prices and tight supply that extends well beyond the typical boom-and-bust rhythm of the memory market. Unlike previous cycles driven by consumer electronics refresh events, the current supercycle is fuelled by committed, multi-year hyperscaler infrastructure spending and a supply response lag that pushes meaningful new capacity past 2027.

Why can new memory chip factories not solve the current supply shortage quickly?

Building a new DRAM or HBM fabrication facility takes four to five years from ground-breaking to meaningful commercial output, covering equipment procurement, cleanroom construction, and process qualification. Major programmes from Micron, Samsung, and SK Hynix are all expected to deliver significant new supply only in the latter half of the decade, not during the 2025-2026 period of peak demand.

Which companies produce High Bandwidth Memory (HBM) for AI chips?

Only three companies produce HBM globally: SK Hynix, Samsung, and Micron. SK Hynix holds the dominant share of approximately 62%, with capacity fully allocated through at least 2025-2026, while Samsung and Micron are ramping and qualifying their HBM3E offerings with major GPU vendors.

How is agentic AI driving memory demand beyond data centres?

Agentic AI systems perform continuous, multi-step tasks that require persistent memory access rather than single-inference bursts, raising memory requirements at both the data centre and edge device level. This is broadening demand into AI smartphones, AI PCs normalising 12-16 GB DRAM configurations, and automotive ADAS platforms, extending the demand curve well beyond hyperscaler spending.

What do multi-year HBM supply agreements mean for the memory market structure?

SK Hynix has transitioned to foundry-style multi-year supply agreements extending through 2028-2030, locking in revenue visibility for suppliers and demand certainty for buyers. This structural shift reduces spot-market volatility and reinforces the cycle's duration, signalling that the market itself is pricing in sustained tightness rather than a near-term resolution.