Why Agentic AI Is Redrawing the AI Hardware Investment Map

Key Takeaways

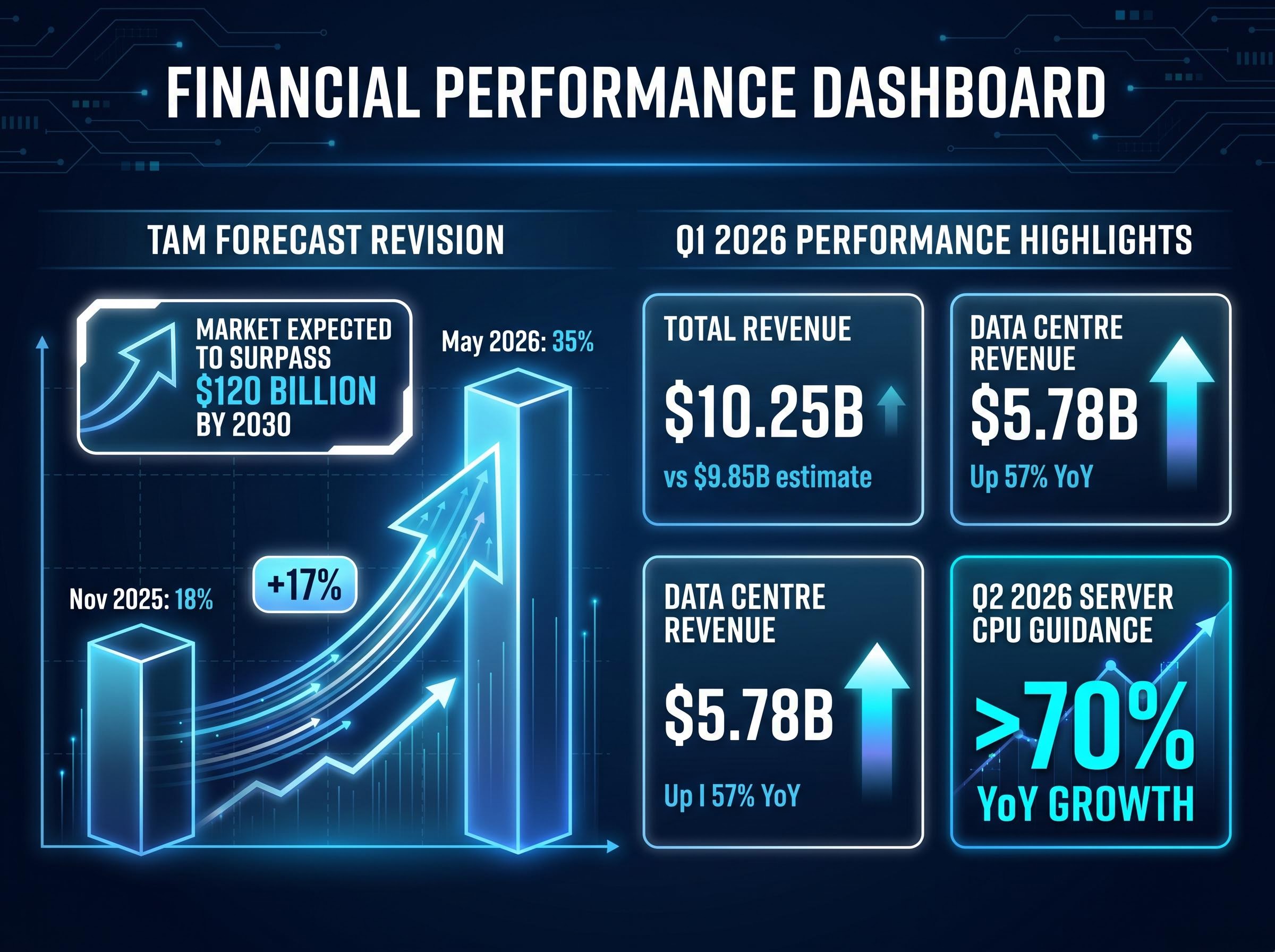

- AMD raised its server CPU total addressable market growth forecast from 18% to 35% annually, implying a market above $120 billion by 2030, driven by agentic AI workload demand identified in Q1 2026 procurement data.

- Q1 2026 results beat consensus on every headline metric, with data centre revenue of $5.78 billion representing 57% year-over-year growth and adjusted EPS of $1.37 exceeding the $1.27 estimate.

- Agentic AI workloads favour CPU architecture over GPUs for sequential reasoning and agent orchestration tasks, with industry estimates placing 35-45% of inference workloads as CPU-bound.

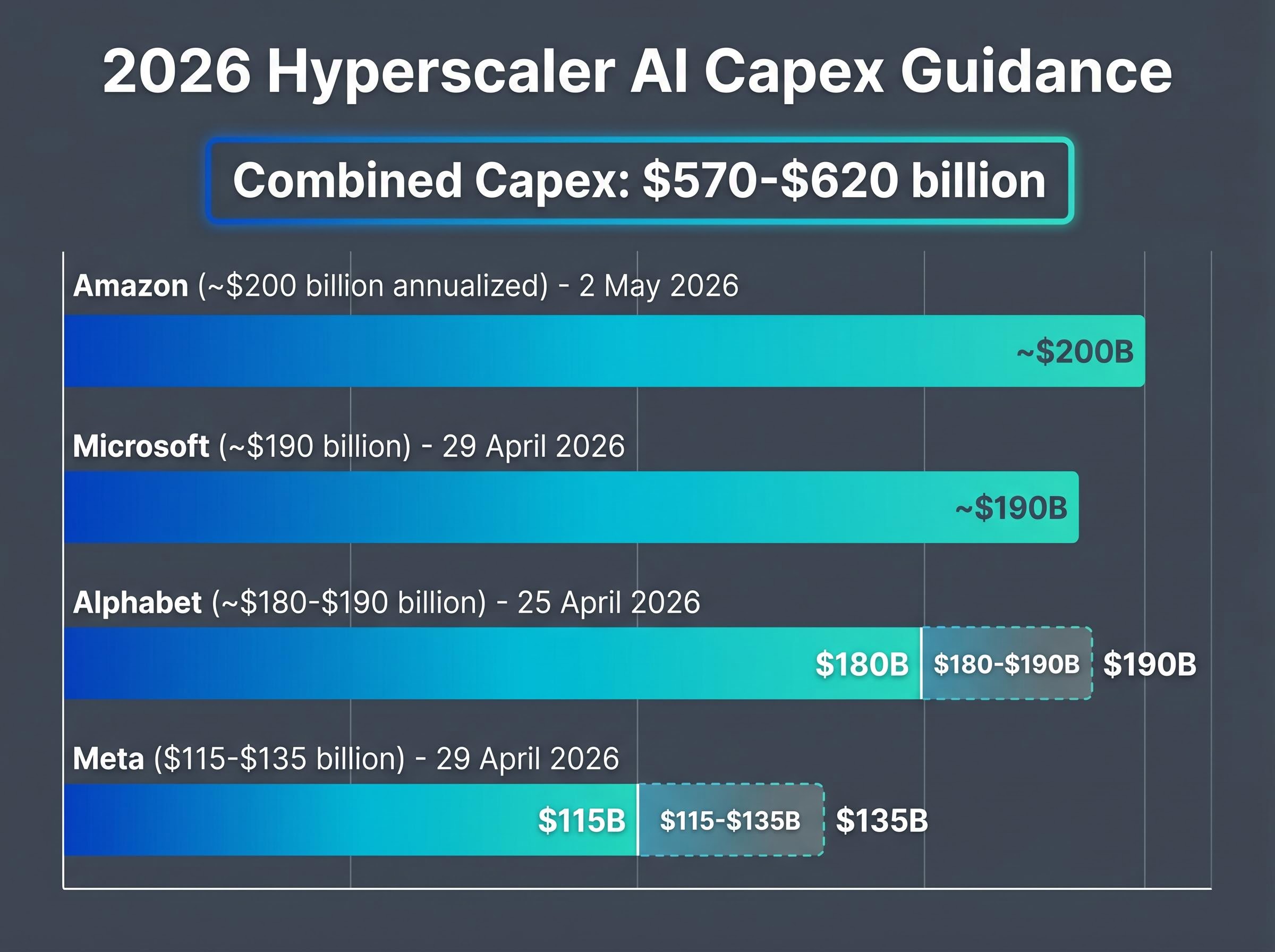

- Combined hyperscaler AI infrastructure capex across Meta, Microsoft, Alphabet, and Amazon is estimated at $570-$620 billion for 2026, with each company disclosing specific CPU infrastructure signals tied to agentic deployments.

- Key risks include China export restrictions affecting approximately 15% of AMD data centre revenue, potential gross margin compression from Intel's competitive pricing, and execution uncertainty on the Zen 6 architecture timeline.

AMD has nearly doubled its server CPU market growth forecast, and it was not a narrative exercise. On the Q1 2026 earnings call on 5 May 2026, the chipmaker raised its server CPU total addressable market (TAM) growth projection from 18% annual growth to 35%, with the market expected to surpass $120 billion by 2030. The revision sits inside a quarter that beat consensus on every headline metric: revenue of $10.25 billion against a $9.85 billion estimate, data centre revenue of $5.78 billion (up 57% year-over-year), and adjusted earnings per share of $1.37 versus $1.27 expected. The driver behind the revision is agentic AI, a class of autonomous, multi-step reasoning workloads that demands CPU architecture in ways the GPU-first investment thesis never anticipated. What follows is an analysis of what AMD’s forecast change implies for the CPU-versus-GPU demand split, how hyperscaler capital expenditure commitments validate the structural argument, and where the risks sit for investors evaluating the broader AI hardware investment opportunity.

The forecast revision that changes the AI hardware calculus

In November, AMD projected the server CPU TAM would grow at 18% annually. Six months later, that figure nearly doubled to 35%. For a chipmaker of AMD’s scale, a revision of that magnitude is not a guidance tweak; it is a restatement of the demand environment the company expects to operate in through the end of the decade.

The explanation lies in what became visible between those two dates. The November forecast preceded the first full quarter of EPYC shipments into agent-heavy cluster configurations at hyperscaler scale. Procurement data from Q1 2026 revealed that agentic AI deployments (autonomous systems performing sequential reasoning, tool use, and inter-agent coordination) were consuming CPU capacity at rates the prior model had not anticipated.

The causal sequence matters for how investors interpret credibility:

- November 2025: AMD issues 18% annual TAM growth forecast based on pre-agentic deployment data

- Q1 2026: First full quarter of EPYC shipments into agentic cluster configurations; procurement volumes exceed prior modelling

- May 2026: AMD revises TAM growth to 35%, implying a market above $120 billion by 2030

- Q2 2026 guidance: Server CPU revenue growth of greater than 70% year-over-year serves as the near-term validation point

AMD’s Q2 server CPU guidance of greater than 70% year-over-year growth is the figure that separates the 2030 projection from speculation. If that growth rate materialises, the 35% TAM CAGR begins to look conservative rather than aspirational.
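To make the gap between the two growth rates concrete, the sketch below compounds 18% and 35% over the same horizon. It is illustrative arithmetic only: the five-year horizon (2025 to 2030) is an assumption, since the base year is not stated here, and the implied base-year figure simply backs out what a market of exactly $120 billion in 2030 would require.

```python
# Illustrative arithmetic only, not AMD's model.
# ASSUMPTION: a five-year horizon (2025 -> 2030); the base year is not stated in the article.

def growth_multiple(annual_rate: float, years: int) -> float:
    """Cumulative multiple from compounding annual_rate for the given number of years."""
    return (1.0 + annual_rate) ** years

YEARS = 5  # assumed horizon

old_multiple = growth_multiple(0.18, YEARS)   # prior forecast
new_multiple = growth_multiple(0.35, YEARS)   # revised forecast
end_state_ratio = new_multiple / old_multiple

# Base-year market size ($bn) that would compound to exactly $120bn at 35%.
# The article says the market surpasses $120bn, so read this as a rough lower bound.
implied_base_bn = 120.0 / new_multiple

print(f"18% compounded for {YEARS} years: {old_multiple:.2f}x")
print(f"35% compounded for {YEARS} years: {new_multiple:.2f}x")
print(f"Revised end-state vs prior end-state: {end_state_ratio:.2f}x")
print(f"Implied base-year market: ${implied_base_bn:.1f}bn")
```

Under that assumed horizon, the revised rate produces an end-state market roughly twice the size implied by the prior rate, which is why the revision reads as a restatement rather than a tweak.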

The quarterly results provide the numerical foundation. Data centre revenue of $5.78 billion represents a 57% year-over-year increase. Total revenue of $10.25 billion and adjusted EPS of $1.37 both exceeded Wall Street estimates. The forecast revision was issued from a position of demonstrated execution, not projected ambition.
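The size of each beat can be checked directly from the reported figures. The short sketch below computes the percentage beats against consensus and backs out the prior-year data centre revenue implied by the 57% growth rate; the helper name `pct_diff` is hypothetical, and the prior-year figure is derived, not reported here.

```python
# Illustrative arithmetic using only the figures quoted in the article.

def pct_diff(actual: float, reference: float) -> float:
    """Percentage by which actual exceeds (or trails) reference."""
    return (actual - reference) / reference * 100.0

revenue_beat_pct = pct_diff(10.25, 9.85)   # revenue vs consensus, $bn
eps_beat_pct = pct_diff(1.37, 1.27)        # adjusted EPS vs consensus, $

# Derived, not reported: prior-year data centre revenue implied by 57% growth.
implied_prior_year_dc_bn = 5.78 / 1.57

print(f"Revenue beat: {revenue_beat_pct:.1f}%")
print(f"Adjusted EPS beat: {eps_beat_pct:.1f}%")
print(f"Implied prior-year data centre revenue: ${implied_prior_year_dc_bn:.2f}bn")
```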

The scale of hardware spending underpinning AMD’s TAM revision is part of a broader semiconductor supercycle in which approximately 75% of hyperscaler capex, estimated at $450 billion for 2026, flows directly to physical hardware and data centre construction rather than software licences or cloud services.


Why agentic AI needs CPUs, not just GPUs

The assumption underpinning most AI hardware investment since 2023 has been straightforward: GPU parallelism is the bottleneck, and whoever controls GPU supply controls the AI infrastructure cycle. Agentic AI complicates that assumption at the architectural level.

GPU cores are optimised for matrix operations, the mathematical backbone of model training and batch inference. They excel when thousands of identical calculations can run simultaneously. Agentic AI tasks do not fit that profile. Sequential reasoning, memory management, inter-agent coordination, and tool-use loops require decisions to happen in order, not in parallel. CPU thread architecture handles these workloads with greater efficiency because it is designed for exactly the kind of branching, conditional logic that agent orchestration demands.

Industry estimates place 35-45% of inference workloads as CPU-bound, depending on task type. The inference share of total AI compute is itself expanding, with some projections placing it in the 50-70% range of deployed AI workload compute by 2026. The directional consensus from Morgan Stanley, Bernstein, and IDC points to the CPU-to-GPU ratio in AI infrastructure shifting from roughly 1:2 in 2025 toward 1:1.5 by 2027.
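The ratio shift is easier to interpret as a share of the mix. The sketch below converts the two CPU-to-GPU ratios into CPU shares and into CPUs per 100 GPUs. It assumes the ratios count devices; the article does not say whether they refer to units or to spend, and `cpu_share` is a hypothetical helper.

```python
# Illustrative conversion of the quoted CPU-to-GPU ratios.
# ASSUMPTION: the ratios count devices (units), which the article does not specify.

def cpu_share(cpu_parts: float, gpu_parts: float) -> float:
    """CPU fraction of the combined CPU + GPU mix for a cpu:gpu ratio."""
    return cpu_parts / (cpu_parts + gpu_parts)

share_2025 = cpu_share(1.0, 2.0)   # ratio 1:2
share_2027 = cpu_share(1.0, 1.5)   # ratio 1:1.5

cpus_per_100_gpus_2025 = 100.0 * 1.0 / 2.0
cpus_per_100_gpus_2027 = 100.0 * 1.0 / 1.5

print(f"CPU share of mix, 2025: {share_2025:.1%}")
print(f"CPU share of mix, 2027: {share_2027:.1%}")
print(f"CPUs per 100 GPUs: {cpus_per_100_gpus_2025:.0f} -> {cpus_per_100_gpus_2027:.0f}")
```

On that reading, the CPU share of the mix moves from about one third to 40%, or about a third more CPUs for every GPU deployed.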

Microsoft’s Satya Nadella has noted that CPU utilisation has doubled in agent-heavy Azure deployments. Meta’s Mark Zuckerberg has characterised Llama 4 inference clusters as CPU-heavy for agent coordination. These are not theoretical observations; they describe procurement decisions already in motion.

| Attribute | GPU | CPU |
|---|---|---|
| Primary workload fit | Matrix operations, batch inference, model training | Sequential reasoning, agent orchestration, tool-use loops |
| Power draw | Approximately 700W (high-end accelerators) | Approximately 200W |
| Cost-per-token for agentic tasks | Higher for non-matrix workloads | Materially lower at scale for non-FLOPS-bound tasks |
| Sequential reasoning strength | Limited by parallel-first architecture | Designed for branching, conditional logic |

Power and cost economics favour CPUs at inference scale

The approximately 200W versus approximately 700W power differential between CPUs and high-end GPU accelerators carries direct consequences for data centre budgets. At hyperscaler scale, where power allocation is a binding constraint on rack density, CPU-heavy inference clusters consume roughly a third of the energy per unit for non-matrix workloads.
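The power comparison can be sketched as simple arithmetic. The example below compares device-level draw only, assuming continuous operation at the quoted wattage with no cooling or facility overhead. It says nothing about work done per watt, which depends on the workload, so treat it as a rough illustration and not an efficiency benchmark.

```python
# Illustrative device-level power arithmetic.
# ASSUMPTIONS: continuous operation at the quoted draw, no cooling or facility
# overhead, and no adjustment for throughput differences between devices.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years

def annual_kwh(watts: float) -> float:
    """Energy used in a year by a device drawing a constant number of watts."""
    return watts * HOURS_PER_YEAR / 1000.0

CPU_WATTS = 200.0   # approximate figure quoted in the article
GPU_WATTS = 700.0   # approximate figure for high-end accelerators

cpu_kwh = annual_kwh(CPU_WATTS)
gpu_kwh = annual_kwh(GPU_WATTS)
power_ratio = CPU_WATTS / GPU_WATTS

print(f"CPU: {cpu_kwh:,.0f} kWh per year")
print(f"GPU: {gpu_kwh:,.0f} kWh per year")
print(f"CPU draw as a share of GPU draw: {power_ratio:.0%}")
```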

Cost-per-token economics reinforce the procurement case. For agent loops that require sequential decisions rather than parallel matrix multiplication, CPUs deliver more compute per dollar spent on power and cooling. This is a procurement efficiency argument as much as an architectural one, and it explains why hyperscaler capex is flowing toward hybrid configurations rather than GPU-only builds.

Hyperscaler capex as the structural floor under AMD’s forecast

The spending commitments disclosed during Q1 2026 earnings season are, by any measure, extraordinary.

Combined hyperscaler AI infrastructure capex across Meta, Microsoft, Alphabet, and Amazon now sits in the range of approximately $570-$620 billion for full-year 2026, a figure that functions as a forward order book signal for the semiconductor supply chain.

| Company | 2026 Capex Guidance | AMD Product Integration | CPU Infrastructure Signal |
|---|---|---|---|
| Meta | $115-$135 billion (raised, 29 April 2026) | EPYC and Instinct for Llama 4 inference | Zuckerberg: CPU-heavy clusters for agent coordination |
| Microsoft | Approximately $190 billion (29 April 2026) | Azure inference workloads for Copilot agents | Nadella: CPU utilisation doubled in agent-heavy deployments |
| Alphabet | Approximately $180-$190 billion (25 April 2026) | EPYC in hybrid TPU-CPU configurations | Gemini agentic deployments incorporating CPU infrastructure |
| Amazon | Approximately $200 billion annualised (2 May 2026) | EPYC in agentic Bedrock cluster deployments | Integration alongside Trainium and Inferentia custom silicon |

The detail that matters for AMD’s thesis is not simply the headline capex totals. It is what sits inside them. Each of these four companies has disclosed specific CPU infrastructure signals tied to agentic AI workloads. Meta confirmed EPYC procurement for Llama 4 inference. Microsoft described a doubling of CPU utilisation. Alphabet is deploying EPYC in hybrid TPU-CPU configurations. Amazon has integrated EPYC into agentic Bedrock clusters.

These buyers are not substituting CPU for GPU spend. They are expanding total AI infrastructure budgets, which means CPU growth is additive to GPU spending cycles. For investors evaluating AMD’s 35% TAM forecast, the fact that four independent buyers reaffirmed or expanded infrastructure budgets in the same earnings season provides a structural floor to the demand assumptions.

The AI revenue to capex ratio has emerged as the metric analysts use to test whether hyperscaler infrastructure spending is commercially self-sustaining, with AWS reaching a $15 billion annualised AI run rate in Q1 2026 and Google Cloud posting 48% revenue growth serving as the leading commercial validation signals for the hardware investment thesis.

AMD’s competitive standing in a market with expanding CPU demand

AMD’s EPYC broke above 40% server CPU revenue share in Q4 2025, according to Mercury Research measurements, the highest level the company has achieved. Intel retains approximately 59-71% depending on whether the measurement uses unit or revenue methodology, but the trajectory has been consistently moving in AMD’s direction.

Intel reported data centre and AI revenue of approximately $5.1 billion for Q1 2026 (reported 23 April 2026), up approximately 22% year-over-year. Under CEO Lip-Bu Tan, who took the role in March 2025, Intel is pursuing Xeon 6 as its primary competitive response, with aggressive pricing of approximately 20% below AMD on comparable inference-optimised bundles. A 2nm roadmap targeting 2027 is in progress, though execution against that timeline remains unproven.

The competitive forces that could fragment AMD’s TAM capture include:

- Intel Xeon 6: Aggressive pricing and a refreshed product line under new leadership, though server CPU share has been declining for multiple consecutive quarters

- Arm-based server CPUs: IDC projects approximately 20% market share by 2030, with AWS Graviton, NVIDIA Grace, and Ampere Computing as the primary players

- Hyperscaler custom silicon: Google TPUs and Amazon Trainium/Inferentia reduce merchant silicon TAM for training and some inference tasks

Arm and custom silicon: the longer-term competitive variables

The 20% Arm share projection by 2030 represents a structural challenge to x86 dominance shared by both AMD and Intel. Energy efficiency advantages make Arm architectures well suited to inference workloads, precisely the segment where CPU demand is accelerating.

Custom silicon introduces a different dynamic. Google’s TPUs and Amazon’s Trainium handle significant internal AI workloads, reducing reliance on merchant chips. However, AMD EPYC often serves as the host CPU in those same systems, creating a partial offset. AMD’s approximately 71.5% year-to-date stock gain (versus NVIDIA’s approximately 6.4% and the S&P 500’s 6.1% as of 5 May 2026) reflects the market’s current assessment that AMD captures more than its proportional share of the expanding TAM; the question is whether that assessment holds as Arm and custom silicon scale.


The risks investors are not pricing in yet

A credible TAM revision does not eliminate downside scenarios. Three risks deserve the same analytical weight as the bull case.

- China export restrictions: US controls restrict sales of AMD’s most advanced accelerators (MI300-series and successors) to Chinese customers, affecting an estimated 15% of AMD’s data centre revenue. No resolution is in sight as of mid-2026, and Morgan Stanley explicitly flagged this risk in its post-earnings note.

- Gross margin pressure under competitive pricing: AMD’s Q1 2026 gross margin of 53% sits below NVIDIA’s margins in comparable periods. If AMD has to match Intel’s approximately 20% pricing discount in competitive bids, margin compression becomes a structural concern even as revenue grows.

- Zen 6/Venice execution timing: AMD’s TAM projection is partly contingent on continued architecture advances. Any delay in the Zen 6 roadmap would create a window for Intel’s Xeon 6 or Arm-based competitors to recover share in new cluster deployments.

Morgan Stanley maintained an overweight rating but set a price target of approximately $280, below the consensus of $314.92 across roughly 41 analysts. The firm’s caution centred on China restrictions and Zen 6 ramp execution, a reminder that the broadly bullish analyst consensus (approximately 38 buy-equivalent ratings, with Bernstein’s upgrade to Outperform at approximately $365) is not unanimous.

China restrictions alone represent a material revenue overhang. The margin profile under competitive pricing pressure deserves scrutiny before investors treat the TAM expansion as fully capturable earnings upside.

Inference cost sustainability sits at the centre of the bear case for AI hardware equities: if escalating per-token inference costs make generative AI applications structurally unprofitable, hyperscalers face pressure to decelerate capital deployment regardless of their current guidance, a scenario that would compress AMD’s TAM capture well below the 35% CAGR the company projects.

What the CPU re-rating means for the AI hardware investment map

The structural argument that emerges from AMD’s quarter is not a single-stock story. It is a recalibration of how AI infrastructure spending distributes across the semiconductor supply chain. CPU, GPU, custom silicon, and memory all grow, but CPUs are now growing faster than the prior consensus assumed, and AMD is the primary public-market vehicle for that specific exposure.

AMD’s approximately 71.5% year-to-date gain already prices in meaningful optimism. The relevant question for investors is whether the 35% TAM CAGR through 2030 marks a ceiling on the growth the market is willing to price in, or a floor on what the business can actually deliver. IDC’s projection of AI infrastructure driving semiconductor markets past the $1 trillion threshold, alongside Gartner’s estimate of approximately $2.52 trillion in total AI spending for 2026, provides the macro frame.

For investors who want a structured framework for assessing whether AMD’s projected TAM capture will translate into durable earnings growth, our comprehensive walkthrough of the AI infrastructure investment cycle covers how to read FCF compression signals from hyperscaler balance sheets, how to identify operating cash margin trends that distinguish genuine monetisation from revenue inflation, and how to assess the S&P 500 concentration risk created by advanced computing stocks representing 45% of market capitalisation.

Three forward catalysts will test the thesis:

- Q2 2026 earnings: Confirmation or challenge of the greater than 70% server CPU growth guidance, with revenue guided to approximately $11.2 billion (plus or minus $300 million), representing approximately 50.6% year-over-year growth

- Zen 6/Venice roadmap disclosure: Timing and specifications will determine whether AMD maintains its architecture lead through the next product cycle

- Meta deal shipment commencement: Scheduled for H2 2026, providing the first volume evidence of hyperscaler EPYC procurement at the scale the TAM forecast implies
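The Q2 revenue guidance in the first catalyst can be unpacked with a few lines of arithmetic. The sketch below derives the guided range, the prior-year quarter implied by the stated growth rate, and the sequential growth from Q1. The derived figures are illustrative and are not reported numbers.

```python
# Illustrative arithmetic on the Q2 2026 guidance quoted in the article ($bn).

Q1_REVENUE = 10.25    # reported Q1 2026 revenue
GUIDE_MID = 11.2      # guided Q2 2026 revenue midpoint
GUIDE_BAND = 0.3      # plus or minus $300 million
YOY_GROWTH = 0.506    # approximate year-over-year growth at the midpoint

guide_low = GUIDE_MID - GUIDE_BAND
guide_high = GUIDE_MID + GUIDE_BAND

# Derived, not reported: prior-year Q2 revenue implied by the growth rate.
implied_prior_year_q2 = GUIDE_MID / (1.0 + YOY_GROWTH)

# Quarter-over-quarter growth from Q1 to the guided midpoint.
sequential_growth_pct = (GUIDE_MID - Q1_REVENUE) / Q1_REVENUE * 100.0

print(f"Guided range: ${guide_low:.1f}bn to ${guide_high:.1f}bn")
print(f"Implied prior-year Q2 revenue: ${implied_prior_year_q2:.2f}bn")
print(f"Sequential growth at midpoint: {sequential_growth_pct:.1f}%")
```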

Past performance does not guarantee future results. Financial projections are subject to market conditions and various risk factors.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions.

Frequently Asked Questions

What is agentic AI and why does it increase CPU demand?

Agentic AI refers to autonomous systems that perform sequential reasoning, tool use, and multi-step decision-making. Unlike batch inference tasks suited to GPUs, these workloads require branching conditional logic that CPU thread architecture handles more efficiently, driving increased CPU procurement at hyperscaler scale.

What did AMD report for Q1 2026 earnings?

AMD reported Q1 2026 revenue of $10.25 billion against a $9.85 billion estimate, data centre revenue of $5.78 billion (up 57% year-over-year), and adjusted EPS of $1.37 versus the $1.27 consensus expectation, beating on every headline metric.

What is AMD's updated server CPU total addressable market forecast?

AMD revised its server CPU TAM growth projection from 18% annual growth to 35%, with the market expected to surpass $120 billion by 2030, a revision driven by higher-than-anticipated CPU demand from agentic AI deployments at hyperscaler customers.

How does AMD's server CPU market share compare to Intel in 2026?

AMD's EPYC processors broke above 40% server CPU revenue share in Q4 2025 according to Mercury Research, while Intel retains approximately 59-71% depending on measurement methodology, though the trajectory has consistently moved in AMD's direction over recent quarters.

What are the main risks to AMD's AI hardware investment thesis?

The three primary risks are US export restrictions on advanced accelerators to China (affecting an estimated 15% of data centre revenue), gross margin pressure from Intel's approximately 20% pricing discounts on comparable bundles, and potential delays in AMD's Zen 6 architecture roadmap that could allow competitors to recover share.