Hyperscalers Hit $725B AI Capex in 2026, Eyeing $1 Trillion by 2027

Key Takeaways

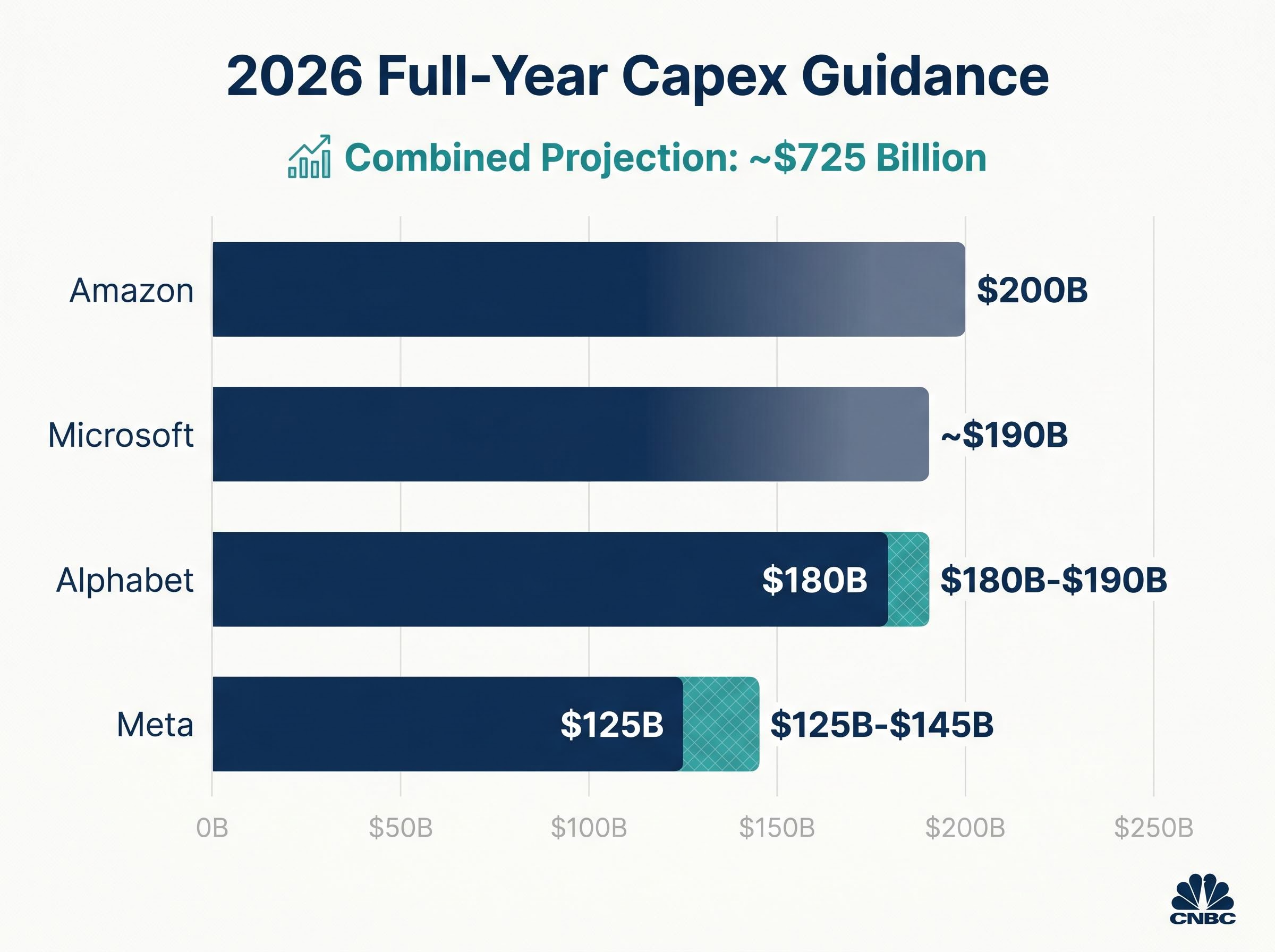

- Amazon, Microsoft, Alphabet, and Meta collectively spent $130 billion on AI capital expenditure in Q1 2026 alone, with full-year 2026 combined guidance reaching approximately $725 billion.

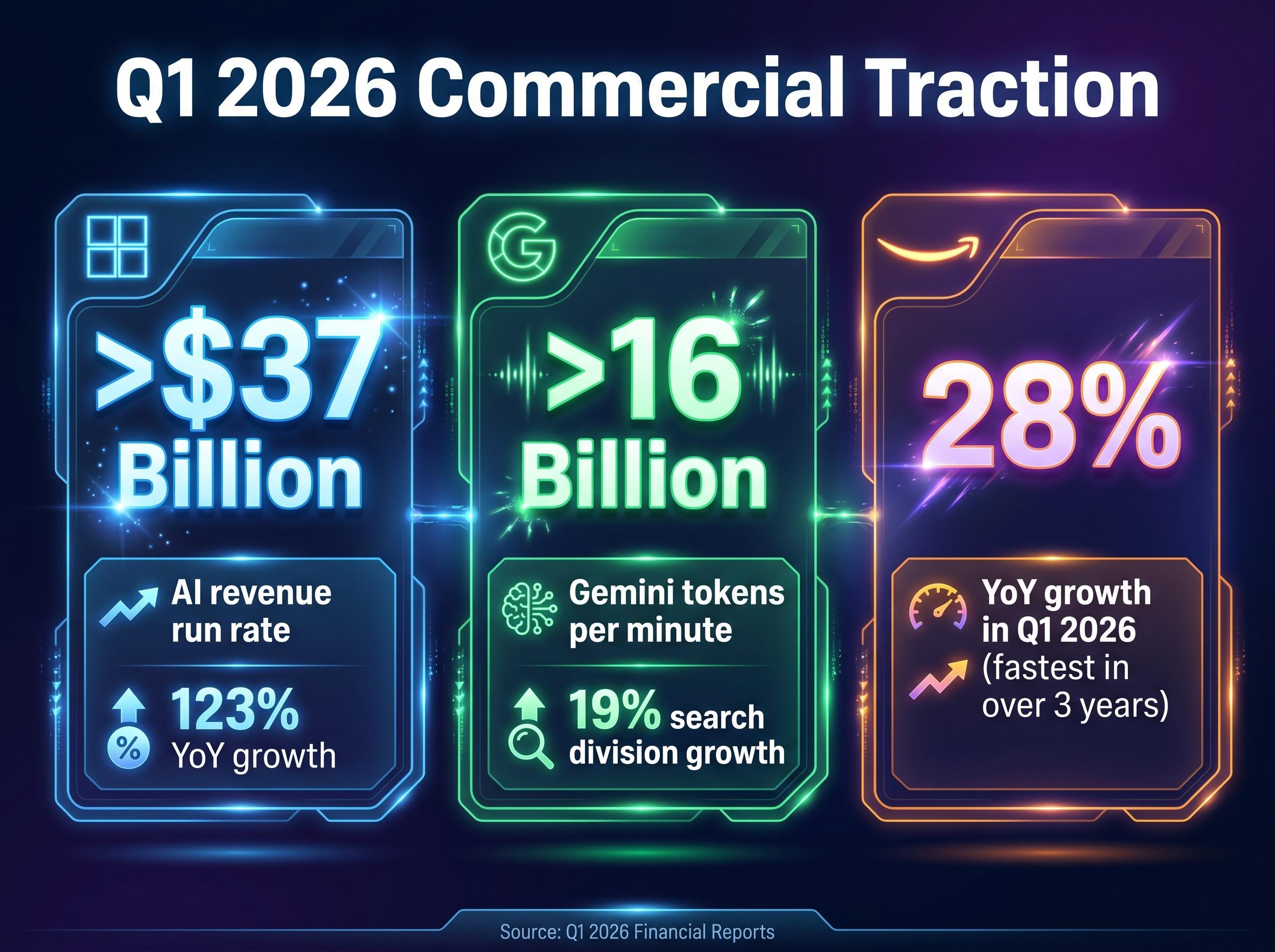

- Microsoft reported an annualised AI revenue run rate surpassing $37 billion, up 123% year-over-year, while AWS posted its fastest quarterly growth in over three years at 28%, providing commercial justification for elevated spending.

- Hyperscalers issued $121 billion in debt in 2025, approximately four times the five-year average, with another $100 billion projected in 2026, raising structural concerns about debt-funded capex sustainability.

- Nvidia and the broader AI supply chain, including memory, optical components, and power semiconductors, stand to benefit as $725 billion in annual capex flows across multiple hardware categories.

- Q2 2026 earnings reports in late July and early August will be the critical next data point, with the AI revenue to capex ratio being the key sustainability metric to monitor.

In Q1 2026 alone, Amazon, Microsoft, Alphabet, and Meta collectively spent $130 billion on AI infrastructure. That single-quarter figure exceeds the entire annual capital expenditure budget of most Fortune 500 industries combined.

The Q1 2026 earnings season closed with every major hyperscaler raising or maintaining historically elevated spending guidance, pushing full-year 2026 combined projections to approximately $725 billion and setting the stage for a $1 trillion annual run rate by 2027. The scale of these revisions has split Wall Street. On one side, analysts point to accelerating AI revenue as commercial justification. On the other, warnings that debt-funded spending has reached a structurally precarious level are growing louder.

What follows breaks down the verified spending figures from each hyperscaler, explains what is driving the upward revisions, and presents the competing institutional views on whether the AI buildout is commercially grounded or financially overextended.

$725 billion and climbing: what Q1 2026 earnings revealed about the AI buildout

$130 billion. That is what Amazon, Microsoft, Alphabet, and Meta spent on AI infrastructure in a single quarter, Q1 2026, setting the pace for a combined full-year figure of approximately $725 billion.

The individual company figures make the collective acceleration concrete. Microsoft raised its full-year capex guidance to approximately $190 billion, up roughly $55 billion year-over-year. Amazon disclosed 2026 capex guidance of $200 billion. Alphabet lifted its full-year target to $180 billion-$190 billion, a further $5 billion increase from prior guidance. Meta raised its range to $125 billion-$145 billion.

These were not isolated decisions. Each disclosure landed within days of the others during late April 2026, and each moved in the same direction: up.

Financial Times reporting on hyperscaler capex confirmed the combined $725 billion figure for 2026 represents a 77% year-over-year increase, contextualising the scale of Q1 2026 disclosures within the broader trajectory of AI infrastructure commitment.

Investor tolerance, however, was not uniform. Meta’s guidance raise triggered a stock decline of approximately 6% following its Q1 2026 report, a signal that even companies posting strong results face scrutiny when spending accelerates faster than the market expects.

| Company | Q1 2026 capex (disclosed) | Full-year 2026 guidance | Year-over-year change |
|---|---|---|---|
| Microsoft | Part of $130B combined | ~$190B | +~$55B |
| Amazon | Part of $130B combined | $200B | Sustained increase |
| Alphabet | Part of $130B combined | $180B-$190B | +~$5B from prior guidance |
| Meta | Part of $130B combined | $125B-$145B | Significant raise |
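As a rough check on how the company-level guidance relates to the combined figure, the ranges quoted above can be summed directly. The sketch below is illustrative only: it treats Microsoft's "approximately $190 billion" and Amazon's $200 billion as point values, and it backs out an implied 2025 base from the reported 77% year-over-year increase. On these inputs, the roughly $725 billion headline corresponds to the top end of the guided ranges.

```python
# Full-year 2026 capex guidance in $ billions, as quoted in the article.
# Point guidance is stored as a (low, high) pair with equal ends;
# treating "approximately $190B" as exactly 190 is a simplifying assumption.
guidance = {
    "Microsoft": (190, 190),
    "Amazon": (200, 200),
    "Alphabet": (180, 190),
    "Meta": (125, 145),
}

low_total = sum(low for low, _ in guidance.values())
high_total = sum(high for _, high in guidance.values())
mid_total = (low_total + high_total) / 2

# Implied 2025 combined capex if $725B represents a 77% increase.
implied_2025 = 725 / 1.77

print(f"Low end of guidance:  ${low_total}B")
print(f"Midpoint of guidance: ${mid_total:.0f}B")
print(f"High end of guidance: ${high_total}B")
print(f"Implied 2025 base:    ${implied_2025:.0f}B")
```

The spread between the low and high totals comes entirely from the Alphabet and Meta ranges.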


What hyperscalers actually mean when they talk about “AI capital expenditure”

Before assessing whether $725 billion in annual spending is sustainable, it helps to understand what sits inside the number.

Hyperscale AI capital expenditure covers three broad categories:

- Compute hardware: GPU clusters (primarily from Nvidia) and custom silicon such as Google’s TPUs and Amazon’s Trainium chips.

- Data centre construction and power infrastructure: Physical facilities, cooling systems, and the electrical capacity to support high-density AI workloads.

- Networking buildout: High-speed interconnects and optical infrastructure linking compute clusters within and across facilities.

Hyperscalers are now deploying capital across both merchant GPU procurement and proprietary chip development simultaneously, meaning spending is distributed across multiple hardware tracks rather than concentrated on a single supplier.

Microsoft attributed approximately $25 billion of its 2026 capex increase specifically to higher component pricing, providing a concrete example of cost inflation being absorbed by hyperscalers rather than passed through.

Hardware refresh cycles add another layer. IBM Chief Executive Officer Arvind Krishna has noted that AI hardware faces a roughly five-year obsolescence window, meaning equipment is effectively replaced rather than maintained, creating a structural drag on return on investment. According to Dell’Oro Group data, Q1 2025 data centre capex reached $134 billion, up 53% year-over-year, establishing the pace of acceleration that preceded the 2026 figures. BofA’s December 2025 analysis found that AI infrastructure supply grew more than 1,000% from 2024 to 2025.

Power grid constraints have emerged as a structural bottleneck alongside chip supply, with data centres projected to consume 9% of US domestic electricity by 2030, up from 4% in 2023, a factor that is compressing the realistic pace of physical data centre deployment regardless of capex commitments.

Rising AI revenue: the commercial engine driving hyperscaler investment

The hyperscalers are not spending into a vacuum. Revenue data from Q1 2026 supplies the demand-side evidence that justifies the capex escalation rather than merely accompanying it.

Three metrics illustrate the scale of commercial traction:

- Microsoft reported an annualised AI revenue run rate surpassing $37 billion, up 123% year-over-year.

- Alphabet disclosed it is processing more than 16 billion Gemini tokens per minute, while its search division grew 19%, growth attributed to AI query integration.

- AWS posted 28% year-over-year growth in Q1 2026, described as its fastest quarterly rate in over three years, driven largely by AI-related workload demand.

The contrast between operators is worth noting. Microsoft’s revenue acceleration directly parallels its spending trajectory; the 123% AI revenue growth makes a $55 billion capex raise legible as a response to verified demand. Meta’s posture is more reactive; its spending raises have preceded clearer monetisation timelines, which helps explain the market’s sharper reaction to its guidance.

For the largest cloud platforms, AI monetisation has crossed from pilot to production. That transition is the strongest argument the bull case has.

The bull and bear cases for sustaining a $1 trillion annual spending pace

The same data set supports two sharply different conclusions. That is what makes the current debate genuine rather than rhetorical.

The bull case

The market’s behaviour in April 2026 provides the broadest endorsement. The S&P 500 closed at approximately 7,165 on 24 April 2026, its strongest April performance in six years, driven in part by strong AI cloud results from Microsoft, Alphabet, and Amazon. JPMorgan updated its year-end 2026 S&P 500 target to 7,600 as of 21 April 2026, reflecting confidence that AI revenue growth can sustain the spending trajectory.

The revenue numbers add commercial weight. When Microsoft is growing AI revenue at 123% year-over-year and AWS is posting its fastest quarterly growth in over three years, the argument that spending is chasing verified demand rather than speculative potential carries force.

The bear case

BofA Global Research warned in December 2025 of an “air pocket” risk in 2026. The concern centres on funding mechanics: the capacity to fund hyperscaler capex from operating cash flow is running out. Hyperscalers issued $121 billion in debt in 2025, approximately four times the five-year average, with another $100 billion projected in 2026.

AI debt issuance mechanics have grown complex enough that institutional capital is now flowing into hybrid instruments. Payment-in-kind structures, NAV lending, and EBITDA add-backs mask leverage levels that straightforward balance sheet analysis would otherwise surface, a pattern that matters for any investor assessing whether hyperscaler debt is genuinely serviceable at scale.

IBM Chief Executive Officer Arvind Krishna has warned that the implied $8 trillion total capex trajectory would require $800 billion in annual profit just to cover interest payments.
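The interest figure in that warning implies a specific cost of capital, which can be backed out with one division. The sketch below assumes, purely for illustration, that the full $8 trillion is debt-financed and carries simple annual interest; the function name `annual_interest_bn` is a hypothetical helper, not something from the source.

```python
def annual_interest_bn(principal_bn: float, rate: float) -> float:
    """Simple annual interest on a principal, both in $ billions."""
    return principal_bn * rate

# Figures quoted in the article, in $ billions.
total_capex_bn = 8_000      # implied $8 trillion total capex trajectory
quoted_interest_bn = 800    # $800 billion in annual interest payments

# Rate implied if the entire amount were financed with debt (assumption).
implied_rate = quoted_interest_bn / total_capex_bn

print(f"Implied interest rate: {implied_rate:.0%}")
print(f"Check: ${annual_interest_bn(total_capex_bn, implied_rate):,.0f}B per year")
```

Under those assumptions the quoted numbers correspond to a 10% annual rate; a lower financing cost or a smaller debt-funded share would reduce the interest burden proportionally.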

BofA’s year-end 2026 S&P 500 target of 7,100-7,200, set in December 2025, was already nearly reached by late April 2026, suggesting the market may have front-loaded the optimism that BofA expected to unfold over a full year.

Both sides draw from the same earnings data, the same revenue figures, and the same capex disclosures. The disagreement is not about the facts. It is about whether AI monetisation can scale fast enough to justify the debt-funded portion of the buildout.


Beyond the hyperscalers: tracing capital to the broader AI supply chain

Translating $725 billion in aggregate capex into specific sector exposures requires tracing where the money flows.

Nvidia remains the primary compute beneficiary. With Microsoft absorbing $25 billion in higher component costs, the pricing power sitting with GPU suppliers is visible in the capex disclosures themselves. Nvidia closed at approximately $198.45 on 1 May 2026.

The beneficiary picture extends well beyond a single chipmaker. Data centre expansion at this scale creates demand across multiple supply chain layers:

- Compute: Merchant GPUs and custom silicon (TPUs, Trainium)

- Memory: High-bandwidth memory for AI training and inference workloads

- Optical components: Interconnects linking GPU clusters within data centres

- Semiconductor capital equipment: The machines that fabricate the chips

- Power semiconductors: Components managing energy delivery for high-density compute

The simultaneous deployment of merchant GPUs and custom silicon by hyperscalers creates a more distributed beneficiary set than a single-supplier narrative suggests. BofA’s December 2025 finding that AI infrastructure supply grew more than 1,000% from 2024 to 2025 underscores the demand surge creating supplier leverage across these categories.

Semiconductor concentration risk has reached a level with no modern precedent: semiconductor companies accounted for 13% of the US equity market as of April 2026, surpassing dot-com era concentration. A deceleration in hyperscaler procurement budgets would therefore transmit across the broad index rather than remaining confined to the tech sector.

The path to a $1 trillion annual AI spend

The arithmetic is straightforward. Combined hyperscaler guidance of approximately $725 billion for 2026, if growth rates hold at even a fraction of recent pace, implies a $1 trillion annual threshold by 2027. That is not a projection requiring additional acceleration. It is the trajectory that current company-level commitments already describe.
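To make "a fraction of recent pace" concrete, the growth needed to move from roughly $725 billion to $1 trillion in one year can be compared with the 77% year-over-year increase reported for 2026. This is a minimal sketch using only the figures quoted in this article; it assumes a single year of compounding and treats the guidance as a point estimate.

```python
# Figures quoted in the article, in $ billions.
capex_2026_bn = 725
target_bn = 1_000
recent_growth = 0.77  # reported 2026 year-over-year increase

# Growth required in 2027 to reach the $1 trillion threshold.
required_growth = target_bn / capex_2026_bn - 1

# Required growth expressed as a share of the most recent pace.
share_of_recent_pace = required_growth / recent_growth

print(f"Required 2027 growth: {required_growth:.1%}")
print(f"Share of recent pace: {share_of_recent_pace:.0%}")
```

On these inputs, growth of about 38%, roughly half the 2026 pace, would be enough to cross $1 trillion.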

The open question is not whether spending reaches that level. It is whether AI revenue scales fast enough to justify the debt-funded portion of the investment. BofA’s December 2025 framing captures the tension precisely: no imminent bubble, but debt-funding risk that is real and proximate.

Q2 2026 earnings reports, due in late July and early August, will provide the next concrete data point. The metric to watch is whether the ratio of AI revenue to capex is tightening or widening. That single data point will tell investors more about sustainability than any price target revision.
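As an illustration of how that ratio might be computed, the sketch below uses the only AI revenue figure disclosed in this article, Microsoft's annualised run rate, against its full-year capex guidance. Pairing a run rate with full-year guidance is a simplifying assumption, the run rate is a floor ("surpassing $37 billion"), and `revenue_to_capex` is a hypothetical helper name.

```python
def revenue_to_capex(ai_revenue_bn: float, capex_bn: float) -> float:
    """Ratio of AI revenue to capital expenditure, both in $ billions."""
    if capex_bn <= 0:
        raise ValueError("capex must be positive")
    return ai_revenue_bn / capex_bn

# Microsoft figures quoted in the article, in $ billions.
msft_ai_run_rate_bn = 37     # annualised AI revenue run rate (lower bound)
msft_capex_2026_bn = 190     # approximate full-year 2026 capex guidance

ratio = revenue_to_capex(msft_ai_run_rate_bn, msft_capex_2026_bn)
print(f"Microsoft AI revenue per capex dollar: ${ratio:.2f}")
```

Recomputing the same ratio from Q2 disclosures and comparing it with this baseline is one way to see which direction it is moving.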

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions. Forward-looking statements regarding capex trajectories and revenue projections are subject to change based on market developments and company performance.

Frequently Asked Questions

What is AI capital expenditure and why does it matter for investors?

AI capital expenditure refers to the money hyperscalers like Amazon, Microsoft, Alphabet, and Meta spend on GPU clusters, data centre construction, and networking infrastructure to support AI workloads. It matters for investors because the scale and sustainability of this spending directly affects the earnings outlook for these companies and their supply chain partners.

How much are the major hyperscalers spending on AI infrastructure in 2026?

For full-year 2026, Microsoft guided to approximately $190 billion, Amazon to $200 billion, Alphabet to $180 billion-$190 billion, and Meta to $125 billion-$145 billion, bringing the combined total to approximately $725 billion, a 77% year-over-year increase.

Is the AI spending boom sustainable or are there financial risks investors should know about?

BofA Global Research warned of an air pocket risk in 2026, noting that hyperscalers issued $121 billion in debt in 2025, roughly four times the five-year average, with another $100 billion projected in 2026, raising concerns about whether AI revenue can scale fast enough to service debt-funded portions of the buildout.

Which companies and sectors benefit most from hyperscaler AI capital expenditure?

Nvidia is the primary compute beneficiary given its GPU dominance, but capital flows across multiple supply chain layers including high-bandwidth memory, optical interconnects, semiconductor capital equipment, and power semiconductors, creating a broad set of potential investment exposures.

When is the next major data point for tracking AI spending sustainability?

Q2 2026 earnings reports, due in late July and early August 2026, will be the next concrete checkpoint, with the key metric being whether the ratio of AI revenue to capex spending is tightening or widening.