What AI Infrastructure Investment Means for ASX Investors

Key Takeaways

- NVIDIA revised its global AI infrastructure investment outlook to approximately US$1 trillion at its March 2026 GTC conference, with cumulative industry spending projected to reach US$3 trillion by 2028.

- Australia's data centre capacity is expected to more than double from 1,350 MW to 3,100 MW between 2024 and 2030, requiring an estimated A$26 billion in new investment.

- NEXTDC secured a 250MW contract at its S4 facility in April 2026 and signed an MoU with OpenAI for a Stargate data centre valued at approximately A$6.75 billion, though the MoU is not a binding revenue commitment.

- AI infrastructure operators offer greater revenue visibility than software plays through long-term contracted agreements, but carry meaningful dilution risk from capital-intensive build programmes, as illustrated by NEXTDC's A$2.2 billion April 2026 capital raise.

- ASX investors can access the AI infrastructure theme through multiple models including colocation (NEXTDC), property (Goodman Group), and network services (Megaport), each representing a distinct risk and return profile within the same structural trend.

At NVIDIA’s GTC conference in March 2026, the company revised its outlook for global AI infrastructure investment to approximately US$1 trillion, effectively doubling earlier expectations. The figure landed as confirmation of what many institutional investors had already suspected: the physical systems underpinning artificial intelligence require capital deployment at a scale that dwarfs the software layer most retail investors associate with the AI trade.

For Australian investors, the question has shifted. It is no longer whether to have AI exposure, but how to access it without betting on which model, platform, or application ultimately wins. The answer, increasingly, lies in the physical infrastructure these systems depend on: the data centres, power systems, cooling networks, and interconnects that must be built and operated before a single algorithm runs. This article explains what AI infrastructure investment means in practice, how its risk and return profile differs from software-focused AI exposure, what demand forces are shaping the Australian market, and which ASX-listed options give investors access to the theme.

Why AI needs a physical home, and why that matters to investors

Every chatbot response, every large language model training run, and every enterprise AI deployment depends on compute power housed in physical facilities. These facilities require reliable electricity supply, high-density cooling systems, and low-latency network connectivity. Without them, the software layer of AI does not function.

AI infrastructure investment by hyperscalers is now projected to reach US$660-690 billion in 2026 alone, a capital commitment that is reshaping the semiconductor ecosystem, compressing free cash flow at the world’s largest technology companies, and making electricity access a more critical constraint than compute availability.

The scale of the build: NVIDIA’s March 2026 GTC revision placed global AI infrastructure investment at approximately US$1 trillion, with industry estimates projecting cumulative spending to reach US$3 trillion by 2028.

Most retail investors see the visible side of AI: the models, the applications, the platforms competing for market share. The invisible side, the physical layer, is where the capital intensity concentrates. Australia’s data centre capacity alone is expected to more than double between 2024 and 2030, reflecting a structural expansion cycle that extends well beyond any single product generation.

Mandala Partners projects that Australia’s deployable data centre capacity will grow from 1,350 MW in 2024 to 3,100 MW by 2030, requiring an additional A$26 billion in investment. Those figures give a sense of the scale of the build cycle now under way.
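The arithmetic behind these projections can be made explicit. The short Python sketch below derives the capacity multiple, the implied compound annual growth rate, and a rough investment figure per added megawatt from the Mandala Partners numbers quoted above. The variable names are illustrative, and the per-megawatt figure rests on an assumption the source does not state: that the full A$26 billion maps to the added capacity.

```python
# Figures quoted in the article (Mandala Partners projections).
capacity_2024_mw = 1_350
capacity_2030_mw = 3_100
new_investment_aud = 26e9
years = 2030 - 2024

added_mw = capacity_2030_mw - capacity_2024_mw
growth_multiple = capacity_2030_mw / capacity_2024_mw
implied_cagr = growth_multiple ** (1 / years) - 1

# Assumption: all of the new investment is attributable to the added capacity.
aud_per_added_mw = new_investment_aud / added_mw

print(f"Added capacity: {added_mw:,} MW")
print(f"Growth multiple: {growth_multiple:.2f}x")
print(f"Implied CAGR: {implied_cagr:.1%}")
print(f"Investment per added MW: A${aud_per_added_mw / 1e6:.1f} million")
```

On these inputs the growth multiple is roughly 2.3 times, the implied annual growth rate is a little under 15%, and the investment works out to roughly A$15 million per added megawatt. These are back-of-envelope figures, not a cost estimate for any individual facility.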

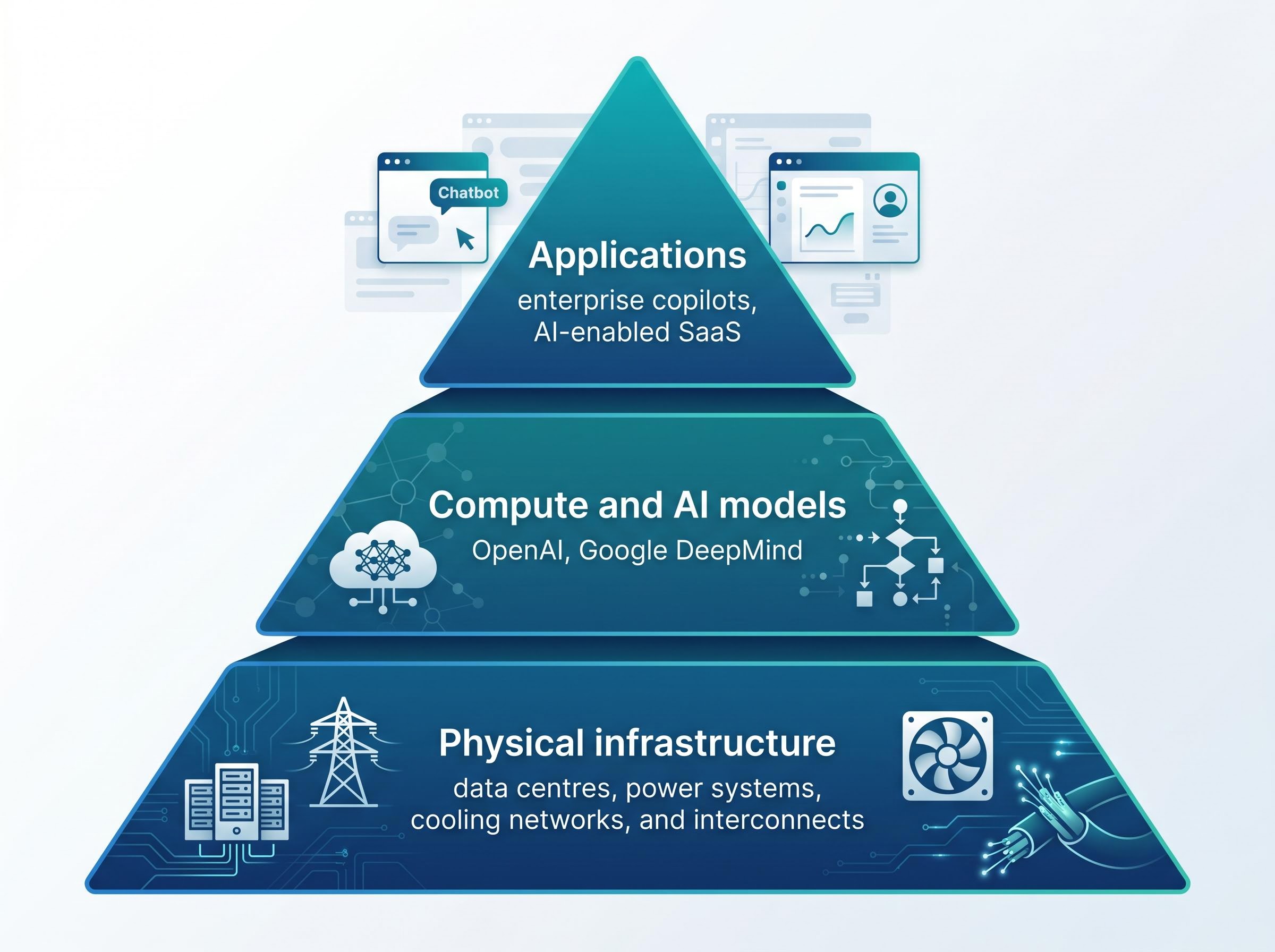

The investment logic follows from this structure. Three distinct layers make up the AI value chain:

- Compute and AI models: The algorithms, training frameworks, and inference engines (e.g., OpenAI, Google DeepMind)

- Applications: The software products and platforms built on top of those models (e.g., enterprise copilots, AI-enabled SaaS)

- Physical infrastructure: The data centres, power systems, cooling networks, and interconnects that house and run everything above

Whoever builds and operates that physical layer captures a position in the AI value chain that functions regardless of which model or platform ultimately wins. That structural durability is the foundation of the infrastructure investment case.

The physical layer explained: what data centre infrastructure actually does

A data centre, at its most basic, is a facility purpose-built to house and power computer servers, provide interconnection between networks, and maintain the uptime and environmental conditions that enterprise and hyperscaler customers require. The business model for operators centres on colocation: customers lease space and power within the facility rather than owning the building themselves. This produces long-term contracted revenue streams for the operator, often spanning 10-15 years for large hyperscaler agreements.

The colocation model means the operator’s revenue is tied to the physical capacity it can deploy and the utilisation rate it achieves, not to any individual technology product or software cycle.

How AI workloads changed the infrastructure equation

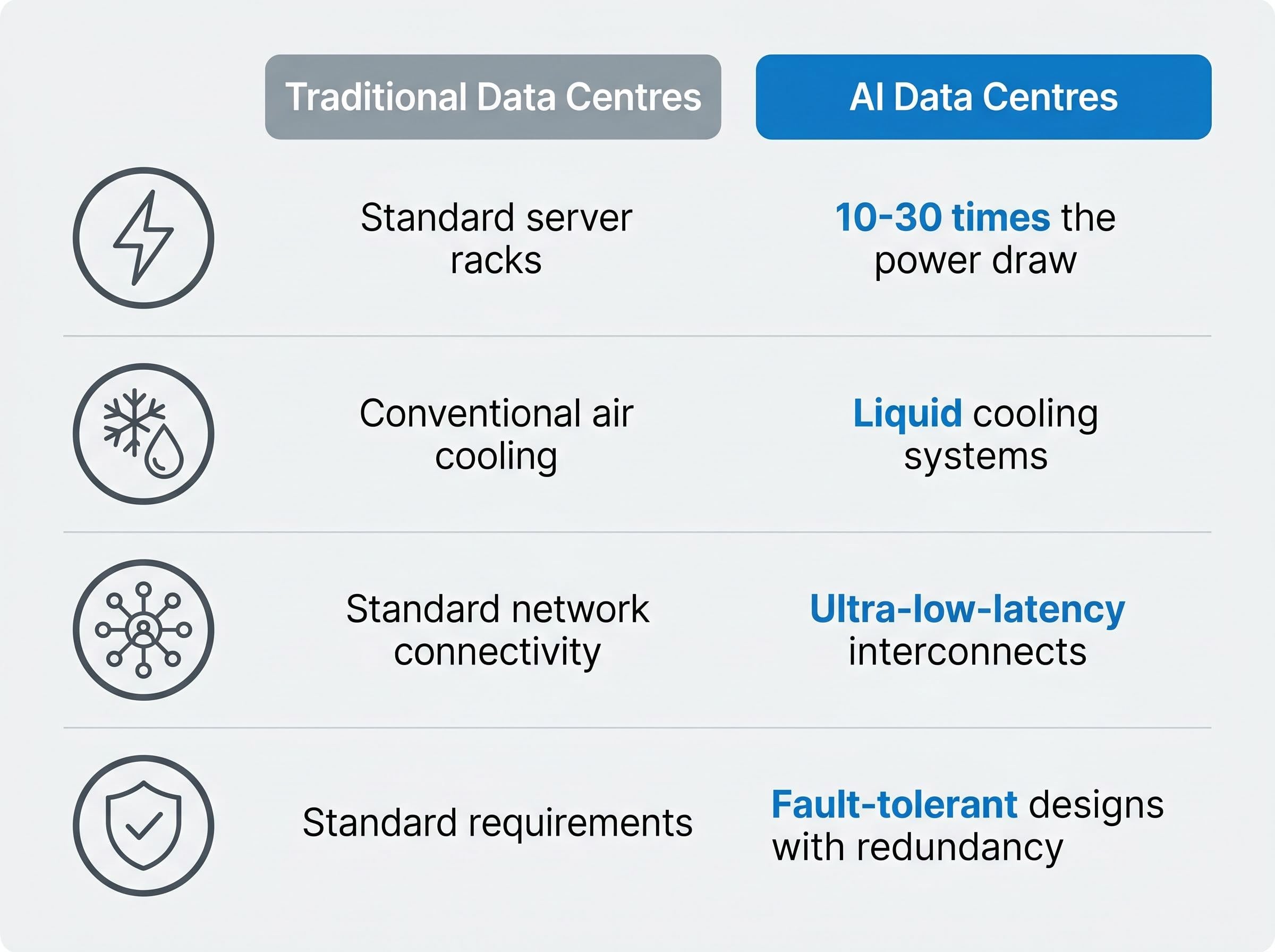

The shift to AI workloads has fundamentally altered what a data centre must deliver. Traditional enterprise workloads, such as cloud storage, email hosting, and web applications, operate at moderate power densities across standard server racks. AI workloads, particularly model training and large-scale inference, require GPU-dense configurations that consume dramatically more power per rack and generate substantially more heat.

The practical implications of this shift are significant:

- Power density: AI-ready racks may require 10-30 times the power draw of traditional enterprise configurations

- Cooling type: GPU-dense environments demand liquid cooling systems rather than conventional air cooling

- Connectivity requirements: AI workloads require ultra-low-latency interconnects between GPU clusters, not just standard network connectivity

- Uptime standards: Hyperscaler customers deploying AI at scale require fault-tolerant designs with redundancy across power, cooling, and network systems
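To put the power density multiple in concrete terms, the sketch below applies the 10-30 times range to a baseline rack. The 5 kW baseline is a hypothetical figure chosen purely for illustration and does not come from the article, and the function name is likewise illustrative.

```python
def ai_rack_power_range_kw(baseline_kw, low_multiple=10, high_multiple=30):
    """Return the (low, high) AI rack power draw implied by a 10-30x multiple."""
    return baseline_kw * low_multiple, baseline_kw * high_multiple

# Hypothetical baseline: a 5 kW traditional enterprise rack (an assumption).
low_kw, high_kw = ai_rack_power_range_kw(5)
print(f"AI-ready rack: {low_kw}-{high_kw} kW")
```

Under that assumed baseline, a single AI-ready rack would draw 50-150 kW, which illustrates why cooling and power delivery designed for traditional racks may not carry over.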

Much of the existing global data centre supply was engineered for traditional workloads and is not fit-for-purpose for frontier AI deployments. This creates a structural advantage for operators who have designed their facilities around high-density GPU configurations from the outset.

Infrastructure vs. software: the risk profile comparison Australian investors need to understand

The “picks and shovels” framework, where investors buy exposure to the tools enabling a boom rather than betting on individual prospectors, has been identified by Australian fund managers as a top investment theme for 2026. The logic is straightforward: infrastructure operators benefit from AI growth regardless of which model or application dominates. The risk profile, however, differs from software plays in ways that demand careful evaluation.

The divergence between hardware and software across global technology markets in 2026 is not a subtle rotation. The Morningstar Global Semiconductor Equipment index has gained 47.6% year-to-date while the Software Applications index has fallen 22.7%, a spread consistent with the structural logic of preferring physical infrastructure exposure over model or application bets.

Fund managers favour the infrastructure theme for its long-duration exposure to the AI cycle relative to more volatile software and application plays.

| Attribute | Infrastructure AI investment | Software/Model AI investment |
|---|---|---|
| Revenue visibility | Long-term contracted revenues (10-15 year agreements) | Subscription-based, subject to churn and competitive displacement |
| Asset tangibility | Physical facilities, land, power infrastructure | Intellectual property, code, trained models |
| Key risk | Capital intensity, execution risk, dilution from ongoing raises | Winner-takes-all dynamics, valuation compression, technology obsolescence |
| Valuation driver | Contracted megawatts, forward order book, EBITDA trajectory | Revenue growth rate, market share, earnings multiple expansion |

Infrastructure carries risks that software plays do not. NEXTDC’s FY26 capex guidance of A$2.7-A$3.0 billion illustrates the scale of capital commitment required. Operators must fund multi-year construction programmes, manage complex power procurement, and execute simultaneously across multiple campus developments. Balance sheet intensity and the need for repeated capital raises introduce dilution risk that software businesses, which are typically asset-light, do not face.

The trade-off is genuine. Infrastructure offers greater revenue visibility and asset backing, but demands investor patience with capital-intensive business models where current earnings lag far behind the contracted revenue opportunity.

The Australian opportunity: demand dynamics driving ASX-listed data centre assets

The global AI infrastructure thesis is well established. The Australian-specific opportunity is shaped by a distinct set of demand signals and supply constraints that merit separate analysis.

Hyperscaler expansion is the primary demand driver. NEXTDC secured a 250MW contract at its S4 facility in April 2026 with a AAA-rated counterparty, widely speculated to be Microsoft. The company described it as the largest customer contract in its history. In December 2025, NEXTDC signed a Memorandum of Understanding (MoU) with OpenAI for a planned Stargate data centre in Eastern Creek, Sydney, valued at approximately A$6.75 billion (US$4.5 billion).

The OpenAI Stargate announcement is an MoU, not a contracted revenue commitment. MoU-stage announcements reflect potential demand and may not convert to executed agreements on the anticipated terms or timeline. Investors should distinguish clearly between contracted commitments and pipeline-stage interest.

Beyond hyperscalers, enterprise AI adoption, sovereign data requirements, and government demand for domestic processing are adding incremental demand across the Australian market.

| NEXTDC demand metric | Figure (April 2026) |
|---|---|
| Contracted utilisation | 667MW (+60%) |
| Forward order book | 544MW (+83%) |
| Largest single contract (S4) | 250MW |
| FY26 EBITDA guidance | A$235 million |

Why supply constraints matter as much as demand growth

Demand figures tell only half the story. Power procurement, planning approvals, and grid connection costs are the binding constraints on how quickly operators can convert contracted demand into operational revenue.

Australia’s data centre market is characterised by demand exceeding supply, with AI workload growth outstripping traditional infrastructure planning cycles. The 2026 Australian regulatory framework adds further complexity, requiring operators to underwrite renewable energy commitments and pay full grid connection costs for new campuses. These requirements add both cost and timeline uncertainty to development programmes, particularly for facilities requiring grid augmentation. The result is a market where new supply cannot arrive as quickly as demand signals suggest, reinforcing the competitive position of operators with existing land banks, power agreements, and development approvals already in place.

The National Data Centre Expectations, published by the Australian Government in March 2026, require operators to secure new and additional clean energy generation, cover their share of transmission and distribution infrastructure costs, and adopt industry-leading energy efficiency measures, with proposals aligned to these requirements prioritised through Commonwealth regulatory processes.

Beyond NEXTDC: other ways to access AI infrastructure on the ASX

NEXTDC dominates the Australian AI infrastructure conversation, but it is not the only route to exposure on the ASX. Each alternative represents a genuinely different angle on the same structural theme.

- NEXTDC (ASX: NXT): Pure-play data centre colocation operator; direct exposure to hyperscaler demand through contracted megawatts and long-term leasing agreements

- Goodman Group (ASX: GMG): Data centre property and logistics infrastructure; exposure through the real estate asset class rather than direct technology operations

- Megaport (ASX: MP1): Network-as-a-service provider supporting AI data movement and interconnection; a distinct infrastructure layer from physical compute colocation

- Firmus (watch list): Neocloud AI-focused operator targeting a A$2 billion ASX IPO as of April 2026; not yet listed, but positioned as a competitive alternative to incumbent colocation models

Traditional colocation versus neocloud: two models for the same megatrend

The Australian market is developing two competing infrastructure models. Traditional colocation operators like NEXTDC sell proven resilience, sovereign-grade compliance, and mission-critical uptime to hyperscalers and large enterprises. Their competitive position rests on execution capability at scale and the engineering required for high-density GPU workloads.

Neocloud entrants like Firmus sell AI capability, speed to deployment, and flexible consumption models. Their positioning appeals to customers prioritising agility and rapid access to GPU compute over the guaranteed scale and compliance infrastructure that hyperscalers require.

These models are not in direct competition for all customer segments. Investors evaluating the space should understand which demand pool each operator is positioned to capture, rather than treating all AI infrastructure operators as interchangeable.

What investors need to stress-test before buying the AI infrastructure thesis

The AI infrastructure investment case is compelling on its structural merits, but the specific risks are equally structural. Four categories warrant direct attention before building a position.

- Dilution risk: Infrastructure at this scale requires repeated access to capital markets. NEXTDC’s April 2026 capital raise totalled A$2.2 billion, including approximately A$1.5 billion via an entitlement offer at a 1-for-5.4 ratio at A$12.70 per share. Shareholders who do not participate in entitlement offers face dilution. This is not an exceptional event; it is a structural feature of capital-intensive infrastructure business models.

The S4 Western Sydney expansion involves more than a capacity milestone; the A$2.2 billion capital package supporting it includes A$1.7 billion in hybrid securities anchored by CDPQ alongside the equity raise, a financing structure that offers investors insight into how capital-intensive infrastructure operators are managing dilution while funding multi-year construction programmes.

- Execution risk: NEXTDC’s valuation assumes successful simultaneous execution across multiple large campus developments. Delays in construction, power procurement, or customer deployment timelines represent material downside scenarios that could compress the contracted revenue trajectory.

- MoU-versus-contract risk: The OpenAI Stargate MoU valued at approximately A$6.75 billion is not a binding contract. Investors should apply appropriate caution when interpreting demand announcements at the MoU stage, as conversion to executed agreements is not guaranteed.

- Regulatory and planning risk: The 2026 Australian regulatory framework introduces renewable energy underwriting obligations and full grid connection cost recovery for new data centre developments. These requirements add cost, complexity, and timeline risk to new campus programmes.
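The dilution point above can be quantified from the entitlement terms quoted in the article. The sketch below is a simplified calculation that considers the entitlement offer alone and ignores every other component of the capital package. The variable names are illustrative, and the implied share counts are rough figures derived from the "approximately A$1.5 billion" raise size, not reported numbers.

```python
# Entitlement offer terms quoted in the article.
entitlement_ratio = 5.4          # one new share for every 5.4 held
offer_price_aud = 12.70
entitlement_raise_aud = 1.5e9    # "approximately A$1.5 billion"

new_shares_per_existing = 1 / entitlement_ratio

# Ownership retained by a holder who takes up none of the entitlement,
# considering the entitlement offer alone (a simplifying assumption).
retained_fraction = 1 / (1 + new_shares_per_existing)
dilution = 1 - retained_fraction

# Rough share counts implied by the quoted raise size and offer price.
implied_new_shares = entitlement_raise_aud / offer_price_aud
implied_existing_shares = implied_new_shares * entitlement_ratio

print(f"Dilution for a non-participating holder: {dilution:.1%}")
print(f"Implied new shares: {implied_new_shares / 1e6:.0f} million")
print(f"Implied existing shares: {implied_existing_shares / 1e6:.0f} million")
```

On these terms, a shareholder who does not participate would see their proportional ownership fall by roughly 15.6% from the entitlement offer alone. A shareholder who takes up their full entitlement keeps the same proportional stake with respect to that offer.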

The investment-versus-earnings gap: NEXTDC’s FY26 EBITDA guidance of A$235 million sits against FY26 capex guidance of A$2.7-A$3.0 billion. The contracted EBITDA target exceeding A$1.0 billion has no confirmed public timeline. This gap between current earnings and the long-duration payoff the investment case depends on is the defining feature of the risk profile.
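The size of that gap is easier to see as a ratio. The sketch below uses only the guidance figures quoted above. The variable names are illustrative, and the A$1.0 billion target is treated as a floor because the article describes it as "exceeding" that level.

```python
# NEXTDC FY26 guidance figures quoted in the article (A$).
ebitda_guidance = 235e6
capex_low, capex_high = 2.7e9, 3.0e9
contracted_ebitda_target = 1.0e9   # described as "exceeding A$1.0 billion"

capex_to_ebitda_low = capex_low / ebitda_guidance
capex_to_ebitda_high = capex_high / ebitda_guidance
target_multiple_of_current = contracted_ebitda_target / ebitda_guidance

print(f"Capex / EBITDA: {capex_to_ebitda_low:.1f}x to {capex_to_ebitda_high:.1f}x")
print(f"Contracted EBITDA target vs FY26 guidance: at least {target_multiple_of_current:.1f}x")
```

On these figures, FY26 capex is roughly 11.5 to 12.8 times guided EBITDA, and the contracted EBITDA target is at least about 4.3 times the current guidance.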

Investors who enter AI infrastructure positions without stress-testing these four risks may be surprised by volatility events that are, in fact, predictable features of the investment model rather than anomalies.

For investors wanting to stress-test the structural bull case against credible failure conditions, our full explainer on AI capex concentration risk examines semiconductor companies now representing 13% of the US equity market, the disconnect between OpenAI’s missed user targets and the hardware investment cycle those targets were meant to justify, and the specific portfolio construction questions commercial allocators should address before sizing infrastructure positions.

The physical layer is the long game, but it requires patience and clarity

AI infrastructure investment via ASX-listed operators offers genuine, durable exposure to the AI growth cycle without the winner-takes-all risk of individual software or model bets. The physical layer must be built regardless of which algorithm prevails, and operators with contracted demand, existing land banks, and engineering capability carry structural advantages that new entrants cannot replicate quickly.

The trade-off is time and capital intensity. Investors considering this theme should evaluate each operator against four criteria: the business model type (colocation, property, network), the stage of contracted revenue versus pipeline, the balance sheet position and likelihood of further capital raises, and the specific risk factors relevant to each company’s development programme.

Primary institutional research sources remain the most reliable basis for current valuations and broker consensus. Investors are best served by assessing each ASX-listed AI infrastructure option against their own portfolio goals, risk tolerance, and time horizon, using the structural framework above as a starting point rather than a recommendation.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions. Past performance does not guarantee future results. Financial projections are subject to market conditions and various risk factors.

Frequently Asked Questions

What is AI infrastructure investment?

AI infrastructure investment refers to capital deployed into the physical systems that power artificial intelligence, including data centres, power systems, cooling networks, and high-speed interconnects. These assets must be built and operated before any AI software or model can function at scale.

How is investing in AI infrastructure different from buying AI software stocks?

AI infrastructure operators generate long-term contracted revenues, often spanning 10-15 years, backed by physical assets, while software and model plays face winner-takes-all dynamics, valuation compression, and technology obsolescence risk. Infrastructure exposure benefits from AI growth regardless of which specific model or application ultimately wins.

Which ASX stocks give exposure to AI infrastructure investment?

The main ASX-listed options include NEXTDC (ASX: NXT) as a pure-play data centre colocation operator, Goodman Group (ASX: GMG) for data centre property exposure, and Megaport (ASX: MP1) for network-as-a-service infrastructure supporting AI data movement.

What are the key risks of investing in AI infrastructure companies on the ASX?

The four primary risks are dilution from repeated capital raises, execution risk across simultaneous large campus developments, the possibility that MoU-stage demand announcements do not convert to binding contracts, and regulatory requirements around renewable energy underwriting and grid connection costs in Australia.

How much is Australia expected to invest in data centre capacity by 2030?

According to Mandala Partners projections, Australia's deployable data centre capacity is expected to grow from 1,350 MW in 2024 to 3,100 MW by 2030, requiring approximately A$26 billion in additional investment over that period.