APRA’s AI Risk Warning Exposes a Gap Boards Cannot Ignore

Key Takeaways

- APRA's 30 April 2026 letter formally identified AI-amplified cyber threats and vendor concentration risk as interconnected vulnerabilities running through the same governance gaps across Australian banking, insurance, and superannuation.

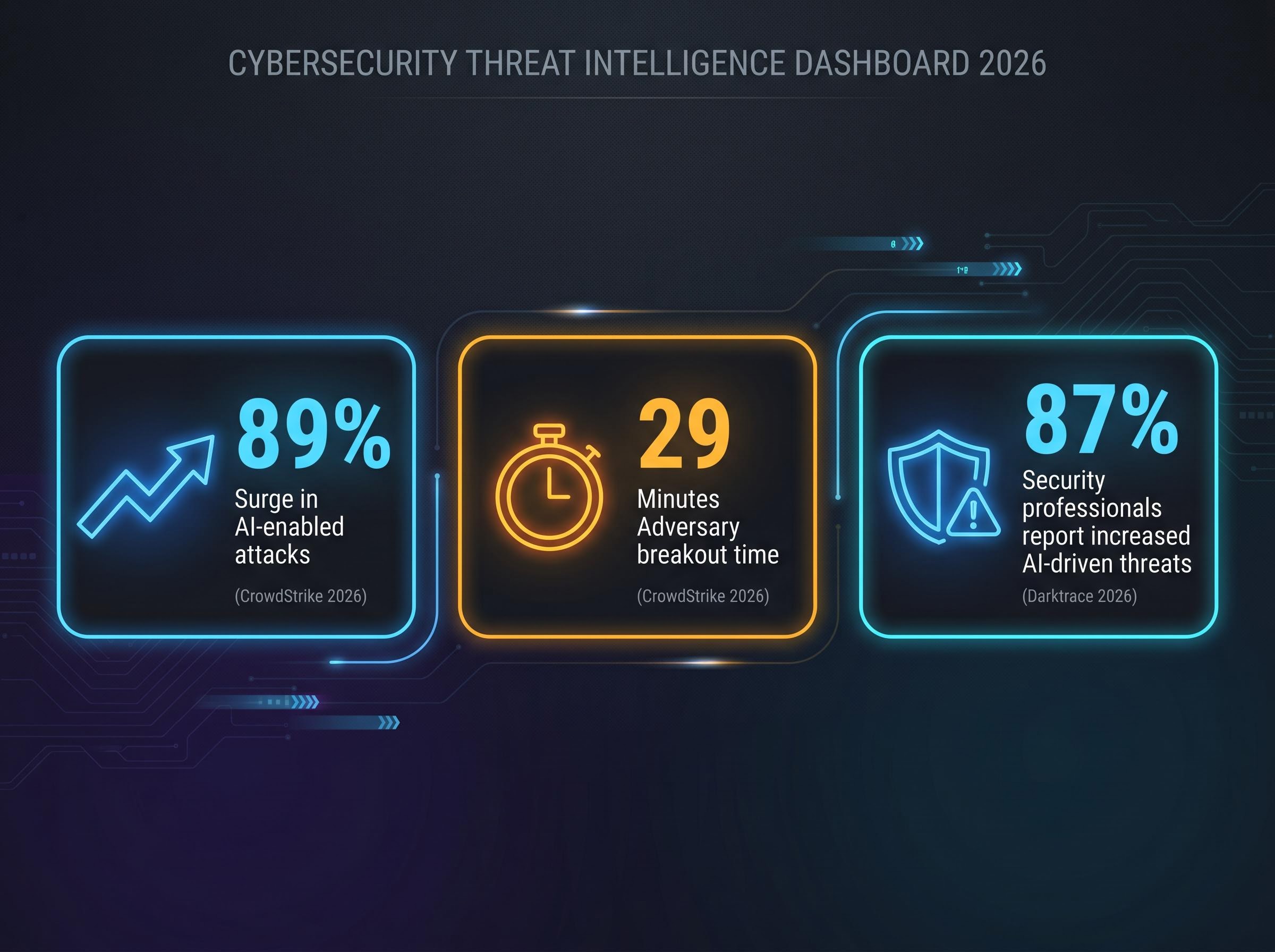

- CrowdStrike's 2026 Global Threat Report recorded an 89% surge in AI-enabled attacks and adversary breakout times falling to just 29 minutes, supporting APRA's assessment that defensive practices are not keeping pace with AI-assisted threat actors.

- APRA found that some regulated entities depend on a single AI provider across multiple functions without adequate contingency planning or exit strategies, a pattern consistent with a global survey of 628 financial institutions.

- Boards across regulated entities were found to lack the technical literacy to meaningfully challenge management on AI risk, with reliance on vendor-supplied summaries creating a structural conflict that APRA flagged directly.

- Global regulatory convergence from the BIS, ECB, and Bank of England signals that current AI risk management expectations represent a starting point, and institutions moving early on board literacy and vendor exit planning will hold a structural compliance advantage.

The Australian Prudential Regulation Authority (APRA) published a letter to industry on 30 April 2026 carrying a warning that few regulated institutions can afford to treat as routine. Frontier AI models, with Anthropic’s Claude Mythos named explicitly, are expected to increase the probability, speed, and scale of cyberattacks against Australian financial institutions. That assessment came not from a vendor pitch or a consultancy white paper but from the regulator itself, drawing on a structured supervisory review conducted in late 2025 across banking, insurance, and superannuation. The findings expose a widening gap between what institutions can do with AI and what they can safely manage, spanning AI-amplified cyber threats, dangerous vendor concentration, and governance structures that have not kept pace with the technology’s velocity. What follows unpacks both threat vectors, explains the structural risk most boards are not yet equipped to see, and places APRA’s response within a global regulatory convergence that is likely to harden over time.

The two threats APRA just put on the table

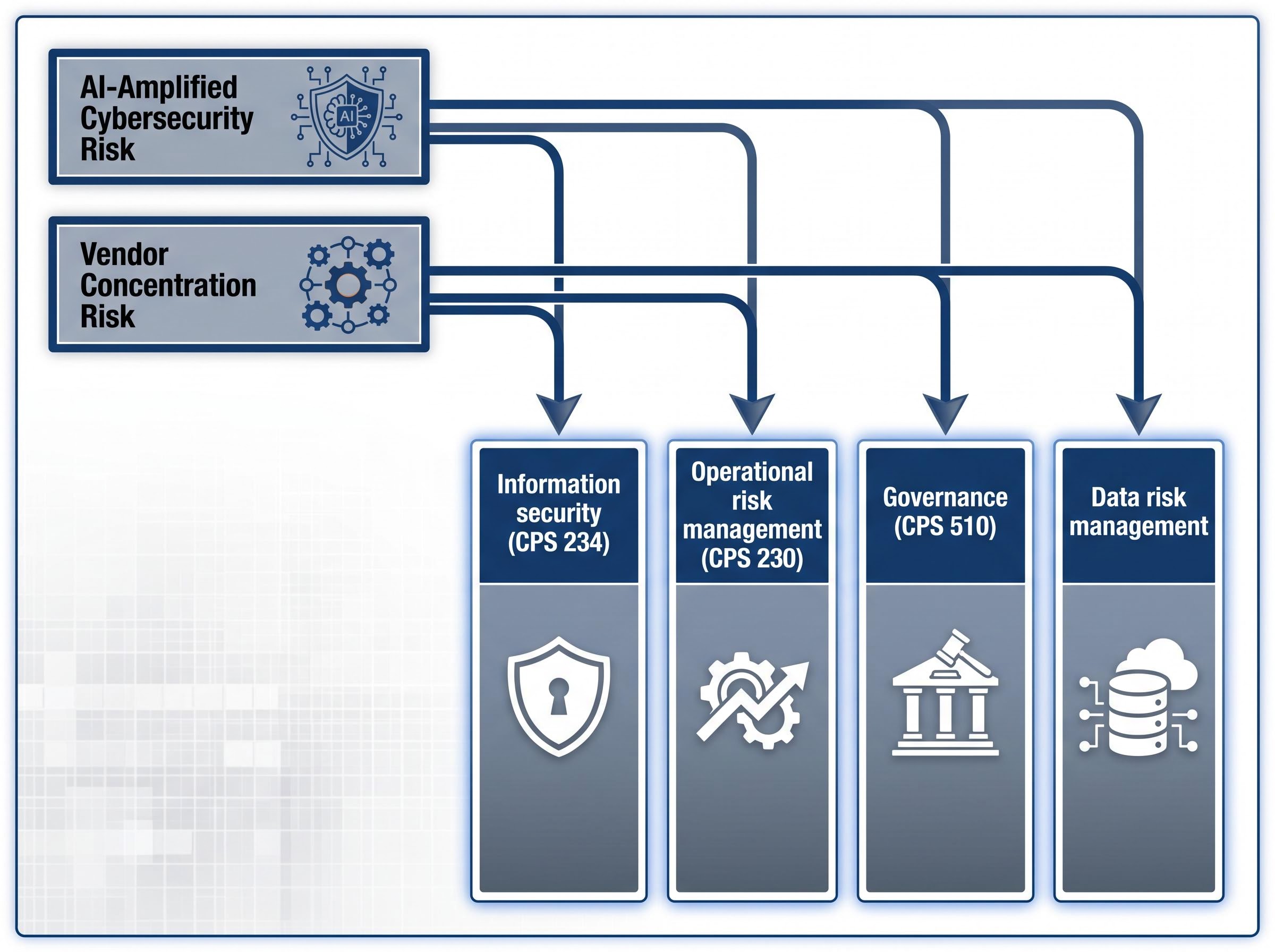

APRA Member Therese McCarthy Hockey presented the regulator’s findings across two distinct but interlocking risk categories. The first is AI-amplified cybersecurity risk: frontier models are improving the tools available to threat actors faster than defensive practices are adapting. The second is vendor concentration risk: some regulated entities depend on a single AI provider across multiple functions without adequate contingency planning or exit strategies.

These are not abstract concerns. They emerged from direct supervisory engagement with regulated entities and are mapped against four existing prudential standards:

- Information security (CPS 234)

- Operational risk management (CPS 230)

- Governance (CPS 510)

- Data risk management

APRA is not introducing new rules. It is demanding measurable improvement against standards that already exist, a distinction that defines the compliance baseline every regulated institution is now being measured against.

APRA’s letter to industry on artificial intelligence sets out the regulator’s supervisory findings across banking, insurance, and superannuation, with explicit reference to frontier models and the five cyberattack pathways APRA identified as posing elevated risk to regulated institutions.

How AI is changing the speed and shape of cyberattacks on financial institutions

APRA identified five specific attack pathways that frontier AI models are enabling or accelerating:

- Prompt injection

- Data leakage

- Insecure integrations

- Exploit injection

- Manipulation or misuse of autonomous AI agents

Each pathway represents a vector where the same models improving institutional productivity can be turned against the institutions deploying them. APRA observed that patching and security practices are struggling to keep pace with the speed at which AI-assisted threats evolve.

Independent data supports the regulator’s assessment. The CrowdStrike 2026 Global Threat Report documented an 89% surge in AI-enabled attacks, with adversary breakout time (the interval between initial compromise and lateral movement) falling to just 29 minutes.

Darktrace’s State of AI Cybersecurity 2026 report found that 87% of security professionals reported increased AI-driven threats, with credential abuse and faster attack cycles as their primary concerns.

The 29-minute breakout figure should recalibrate how readers think about cyber risk in 2026. Attacks of this kind are no longer slow-moving intrusions that detection systems can intercept at leisure; they are fast, AI-assisted, and aimed at financial institutions whose defensive architectures were designed for a slower threat environment.

What vendor concentration risk means, and why it is harder to see until it is too late

AI vendor concentration risk occurs when a single provider supplies multiple distinct AI functions across an institution, creating a condition where failure or disruption in that provider cascades across several operations simultaneously. Unlike a conventional supplier dependency, where the institution typically knows what it has purchased and from whom, AI concentration risk is often opaque.
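
For risk teams wanting to make that definition measurable, the following is a minimal sketch, assuming the institution keeps an inventory mapping each AI-enabled function to the provider it ultimately runs on. APRA does not prescribe a metric; the Herfindahl-Hirschman-style index, the two-function threshold, and every function and provider name below are illustrative assumptions, not regulatory requirements.

```python
from collections import Counter

# Hypothetical inventory: each AI-enabled business function mapped to the
# provider whose model it ultimately runs on. Names are illustrative only.
INVENTORY = {
    "customer_chat": "Provider A",
    "fraud_triage": "Provider A",
    "code_assist": "Provider A",
    "document_summary": "Provider B",
}


def provider_shares(function_to_provider: dict[str, str]) -> dict[str, float]:
    """Return the share (0..1) of AI-enabled functions supplied by each provider."""
    counts = Counter(function_to_provider.values())
    total = sum(counts.values())
    return {provider: n / total for provider, n in counts.items()}


def concentration_index(shares: dict[str, float]) -> float:
    """Herfindahl-Hirschman-style index: the sum of squared shares.

    1.0 means a single provider supplies every function; the value falls
    as functions are spread across more providers.
    """
    return sum(share * share for share in shares.values())


def providers_needing_exit_plans(
    function_to_provider: dict[str, str],
    providers_with_exit_plan: set[str],
    min_functions: int = 2,  # assumed threshold, not an APRA figure
) -> list[str]:
    """Flag providers serving several functions with no documented exit plan."""
    counts = Counter(function_to_provider.values())
    return sorted(
        provider
        for provider, n in counts.items()
        if n >= min_functions and provider not in providers_with_exit_plan
    )


if __name__ == "__main__":
    shares = provider_shares(INVENTORY)
    print(shares)                       # {'Provider A': 0.75, 'Provider B': 0.25}
    print(concentration_index(shares))  # 0.625
    print(providers_needing_exit_plans(INVENTORY, {"Provider B"}))  # ['Provider A']
```

In this toy inventory one provider supplies three of four functions without an exit plan, so it is flagged even though the institution holds contracts with two providers.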

AI-driven vendor consolidation is reshaping enterprise software procurement at speed. Autonomous AI agents are automating functions previously distributed across multiple specialist platforms, compressing the number of providers institutions realistically depend on. That structural shift concentrates operational exposure into fewer relationships precisely as regulators are warning about the risks of such concentration.

The 2026 Global AI in Financial Services Report, surveying 628 financial institutions, identified concentration risks where firms relying on single AI vendors lack exit strategies sufficient to manage disruption. Deloitte’s 2025 EMEA Model Risk Management Survey documented vendor dependencies creating governance blind spots across European financial institutions. APRA’s own finding that some regulated entities lack adequate contingency planning aligns directly with this global pattern.

Recent deployments show both how far Claude has penetrated financial services and where limits are beginning to appear:

- Piraeus Bank and Accenture launched a Claude-powered AI Hub for Greek banking in April 2026

- Intuit partnered with Anthropic in February 2026 to deploy Claude for financial intelligence and custom AI agents

- Goldman Sachs restricted Claude access for Hong Kong bankers in April 2026 due to regional regulatory concerns, a sign that deployment limits are already emerging

Anthropic’s exclusion from defence contracts, which followed the Pentagon’s decision to end a $200 million agreement after the company refused to permit the use of its models in autonomous lethal weapons systems, shows that deployment boundaries are being drawn by governance posture as much as by technical capability. That dynamic directly shapes which AI providers financial regulators can reasonably expect their supervised entities to rely on.

When AI is hidden in the stack: transparency gaps that obscure provider dependency

AI functionality is increasingly embedded within third-party software platforms and developer tooling. An institution may be using a frontier model without its risk or procurement teams fully understanding which underlying model is running, how it is updated, or what constraints apply to its use. This opacity is precisely what APRA identified as limiting institutions’ ability to fully assess and manage associated risks. The dependency is real; the visibility is not.
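
One way to narrow that visibility gap is to resolve each contracted platform to the model provider it embeds, where the vendor discloses it, and to list the platforms whose provenance is unknown. The sketch below assumes two such mappings exist; all platform, provider, and function names are hypothetical, and a real inventory would depend on what vendors actually disclose.

```python
from collections import defaultdict
from typing import Optional

# Hypothetical mappings. Procurement sees the platforms; the underlying
# model provider is only known where the platform vendor discloses it.
FUNCTION_TO_PLATFORM = {
    "claims_triage": "Platform X",
    "crm_notes": "Platform Y",
    "dev_tooling": "Platform Z",
}
PLATFORM_TO_MODEL_PROVIDER: dict[str, Optional[str]] = {
    "Platform X": "Model Provider A",
    "Platform Y": "Model Provider A",
    "Platform Z": None,  # vendor has not disclosed which model it embeds
}


def effective_model_exposure(
    function_to_platform: dict[str, str],
    platform_to_model_provider: dict[str, Optional[str]],
) -> tuple[dict[str, list[str]], list[str]]:
    """Resolve each function through its platform to the underlying model provider.

    Returns (functions grouped by model provider, platforms with unknown provenance).
    A platform missing from the mapping, or mapped to None, counts as unknown.
    """
    exposure: dict[str, set[str]] = defaultdict(set)
    unknown: set[str] = set()
    for function, platform in function_to_platform.items():
        provider = platform_to_model_provider.get(platform)
        if provider is None:
            unknown.add(platform)
        else:
            exposure[provider].add(function)
    grouped = {provider: sorted(funcs) for provider, funcs in exposure.items()}
    return grouped, sorted(unknown)


if __name__ == "__main__":
    grouped, unknown = effective_model_exposure(
        FUNCTION_TO_PLATFORM, PLATFORM_TO_MODEL_PROVIDER
    )
    print(grouped)  # {'Model Provider A': ['claims_triage', 'crm_notes']}
    print(unknown)  # ['Platform Z']
```

Here three separate vendor relationships resolve to one shared model provider plus one platform of unknown provenance, which is the kind of hidden dependency APRA describes.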

How governance structures are struggling to keep pace with AI adoption

APRA’s supervisory review found that boards across regulated entities demonstrate strong enthusiasm for AI adoption but lack the technical literacy to meaningfully challenge management on AI-related risks. This gap connects both threat vectors. Boards that cannot evaluate cyber risk in an AI-accelerated environment and cannot assess the concentration implications of vendor selection are approving strategies they are not equipped to oversee.

The reliance on vendor-supplied summaries compounds the problem. Boards are evaluating AI risk through material prepared by the parties that profit from AI adoption, a structural conflict that APRA flagged directly. Risk management, assurance, and change management approaches are frequently fragmented across AI-related risks, leaving no single governance body with a complete picture.

Fragmented oversight in a multi-domain risk environment

AI-related risks simultaneously touch operational resilience, cyber and information security, privacy, and procurement. No single governance committee owns the full scope. When risk oversight is distributed across committees that each see only their domain, blind spots emerge at the intersections. APRA’s finding that fragmented assurance frameworks are not keeping pace with adoption is a direct consequence of this structural mismatch between how AI risk behaves and how boards are organised to manage it.

Australia is not alone: how global regulators are converging on the same concerns

APRA’s findings sit within a broader international regulatory convergence. The Bank for International Settlements (BIS), the European Central Bank (ECB), and the Bank of England’s Prudential Regulation Authority (PRA) have all moved to address AI governance in 2026, with thematic overlaps that are direct and substantive.

The BIS adaptive governance framework for AI adoption recommends ten practical actions for central banks and supervisory bodies, covering model risk, third-party dependencies, and board accountability in ways that closely parallel APRA’s own supervisory expectations for regulated Australian institutions.

| Regulator | Key AI Governance Action (2026) | Primary Focus Area |
|---|---|---|
| BIS | Published adaptive governance framework with ten practical actions for central banks | AI adoption governance in central banking operations |
| ECB | Speech assessing implementation of OECD AI recommendations | Frontier model deployment risks in the euro area |
| Bank of England / PRA | Plans for review of AI governance frameworks across regulated firms | Board oversight and AI innovation safety |

Goldman Sachs’s decision to restrict Claude access for Hong Kong bankers illustrates that financial institutions themselves are discovering deployment limits even as they expand AI adoption. APRA’s approach differs from that of European regulators in one notable respect: it is working within existing prudential standards rather than signalling new framework development. The global convergence, however, means current expectations are likely a floor, not a ceiling.

What APRA’s findings actually signal for financial system stability

Three interlocking failure conditions sit at the centre of APRA’s supervisory findings: faster external threats driven by AI-accelerated cyberattacks, concentrated internal dependencies on a small number of AI providers, and governance structures that cannot yet adequately oversee either. When these conditions coincide across multiple regulated institutions, the risk profile shifts from institution-specific to potentially systemic.

AI market concentration risk also extends beyond prudential compliance into portfolio construction. Semiconductor companies now represent 13% of US equity market capitalisation, surpassing dot-com era concentration levels, and analysts tracking the gap between capital expenditure commitments and commercial return timelines are raising questions structurally similar to those APRA is now posing at the level of institutional governance.

APRA’s decision to demand improvement against existing standards rather than introduce new rules is deliberate. It places the burden of self-assessment and correction on institutions themselves, while the regulator retains the ability to escalate if progress is insufficient. APRA has committed to ongoing engagement with government agencies, industry, and international regulators. Because AI risk spans operational resilience, cyber, privacy, and procurement, it is poorly suited to the single-domain frameworks most institutions currently rely on.

APRA expects regulated entities to demonstrate measurable improvement in closing the gap between their AI capabilities and their capacity to monitor and control those systems.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions.

The risks are on record. What matters now is the pace of institutional response.

APRA’s 30 April 2026 letter formalised what many in the industry suspected but few had documented with regulatory authority: AI-amplified cyber threats and vendor concentration risk are not separate compliance items but interconnected vulnerabilities running through the same governance gaps. The decision to work within existing prudential standards rather than introduce new rules places the burden squarely on institutions to self-assess and self-correct.

Global regulatory convergence from the BIS, ECB, and Bank of England signals that APRA’s current expectations represent a starting point. Institutions that move early on board literacy, vendor exit planning, and cross-domain governance integration will hold a structural advantage as international requirements tighten. Those that treat the letter as a routine supervisory communication may find the gap between their AI capabilities and their control capacity is the risk their boards failed to see.

For investors tracking the institutional response to APRA’s direction, our dedicated guide to the Australian AI regulatory compliance market examines the Hubify and HubLab investment structure in detail, including how Labrynth’s US regulatory AI platform is being commercialised locally and what the emergence of insurance-backed compliance assurance products signals about where enterprise demand is forming.

Forward-looking statements regarding regulatory developments are subject to change based on market conditions and policy decisions by regulatory bodies.

Frequently Asked Questions

What is AI vendor concentration risk in financial services?

AI vendor concentration risk occurs when a single provider supplies multiple distinct AI functions across an institution, meaning any failure or disruption cascades across several operations simultaneously, often without the institution fully understanding the extent of its dependency.

What five cyberattack pathways did APRA identify as elevated risks from frontier AI models?

APRA identified prompt injection, data leakage, insecure integrations, exploit injection, and manipulation or misuse of autonomous AI agents as the five specific attack pathways that frontier AI models are enabling or accelerating against financial institutions.

How fast are AI-enabled cyberattacks moving in 2026?

According to the CrowdStrike 2026 Global Threat Report, adversary breakout times have fallen to just 29 minutes, with AI-enabled attacks surging 89% year on year, meaning defensive architectures designed for slower threats are now significantly outpaced.

What existing prudential standards is APRA using to address AI risk management?

APRA is demanding measurable improvement against four existing standards: CPS 234 (information security), CPS 230 (operational risk management), CPS 510 (governance), and data risk management, rather than introducing new rules.

How are global regulators responding to AI governance risks in 2026?

The BIS, ECB, and Bank of England's PRA have all moved to address AI governance in 2026, with overlapping focus areas covering model risk, third-party dependencies, and board accountability, signalling that APRA's current expectations are likely a floor rather than a ceiling.