APRA Warns Banks on AI Risk Controls in Blunt Supervisory Letter

Key Takeaways

- APRA's 30 April 2026 supervisory letter formally declared that AI risk management controls across Australian banks, insurers, and superannuation trustees are materially inadequate under existing prudential standards.

- Boards were specifically cited for lacking the technical literacy to challenge management on AI risks, making AI governance a board-level accountability issue rather than a technology or compliance team responsibility.

- APRA identified dangerous vendor concentration risk, with some entities heavily reliant on a single AI provider across multiple critical functions and lacking adequate contingency planning for provider failure or disruption.

- Frontier AI models were found to materially elevate the cyber threat environment, increasing the probability, speed, and scale of attacks against Australian financial institutions.

- No new rules have been introduced, but the supervisory letter carries real enforcement weight: APRA's toolkit includes intensified oversight, licence conditions, and enforcement action where measurable improvement is not demonstrated.

Australia’s prudential regulator has issued its most direct intervention on artificial intelligence governance in the financial system to date. In a formal supervisory letter published 30 April 2026, the Australian Prudential Regulation Authority (APRA) told banks, insurers, and superannuation trustees that their AI risk management controls are materially inadequate. The warning follows a targeted supervisory review conducted in late 2025, which found that governance, risk management, and operational resilience frameworks across all three regulated sectors have failed to keep pace with the speed and complexity of AI deployment. APRA has stopped short of introducing new rules, but the expectation of measurable improvement under existing prudential standards is explicit. What follows unpacks what APRA found, which specific failings were identified, what the cyber threat escalation means in practical terms, and what regulated entities are now expected to do.

From pilots to production: how AI adoption outran oversight in Australian finance

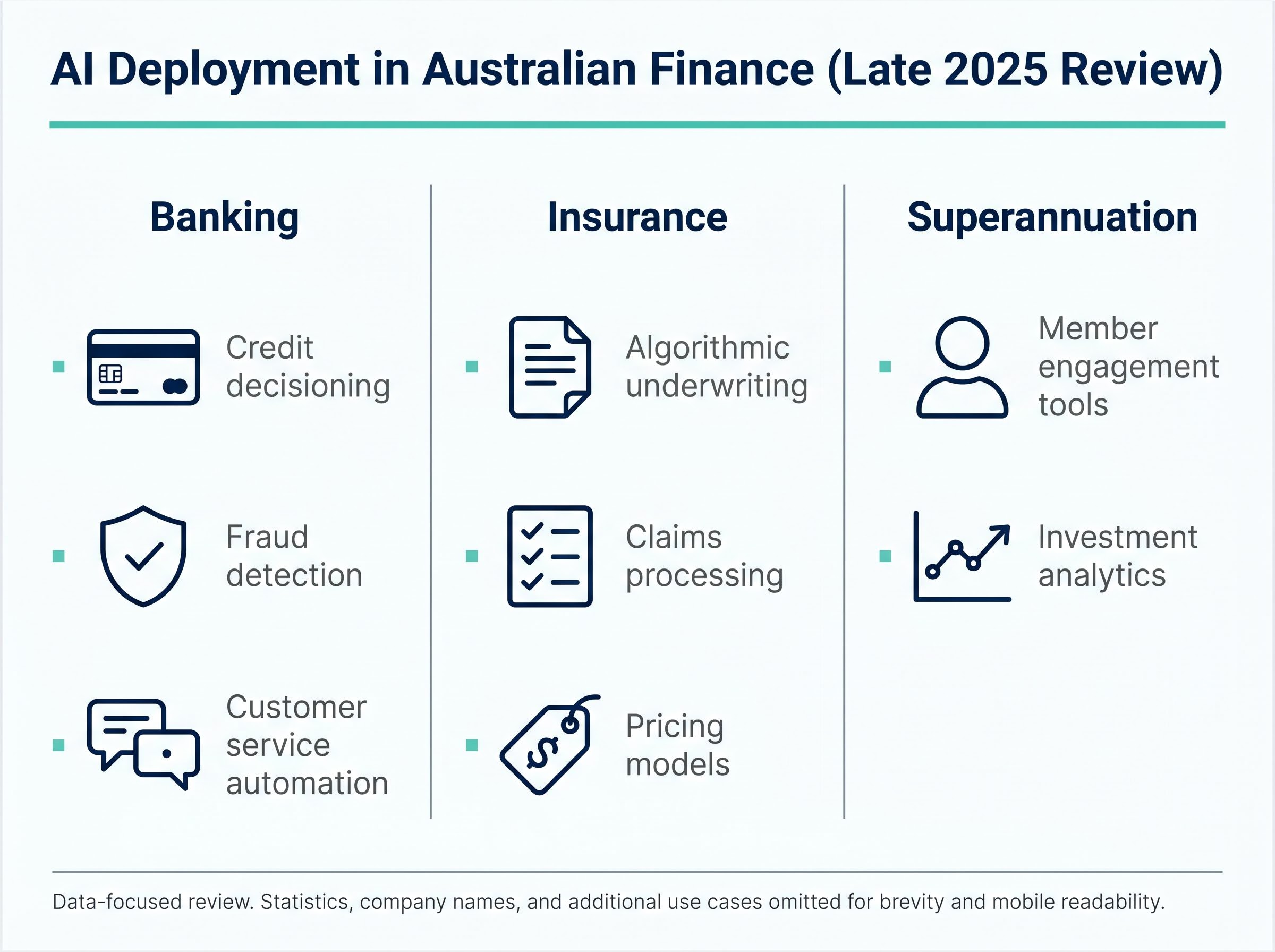

APRA’s supervisory review, conducted across late 2025, found that Australian financial institutions have moved beyond experimental AI pilots into operationally embedded and customer-facing implementations. The velocity, scale, and complexity of that adoption have outpaced institutions’ capacity to monitor and control the technology across all three regulated sectors:

- Banking: AI deployed across credit decisioning, fraud detection, and customer service automation

- Insurance: Algorithmic underwriting, claims processing, and pricing models increasingly reliant on AI-driven outputs

- Superannuation: Member engagement tools and investment analytics incorporating AI-generated recommendations

The transition has been rapid. APRA’s review characterised the pace as structurally exceeding the development of corresponding risk frameworks, a gap that widened as deployment accelerated through 2025.

The transparency problem with embedded AI tools

A compounding factor is how AI functionality now reaches regulated entities. AI capabilities are increasingly embedded within broader commercial software platforms and developer tooling, rather than deployed as standalone models that institutions procure and govern directly.

This reduces transparency about where models operate, how they are trained, and when they are updated or constrained. APRA identified this as a material barrier to institutions’ ability to fully assess associated risks. Reduced transparency is not an edge case; it is a structural feature of how AI is now delivered commercially, and it sits at the centre of every governance gap the regulator subsequently identified.

Vendor lock-in and the concentration risk problem APRA wants fixed

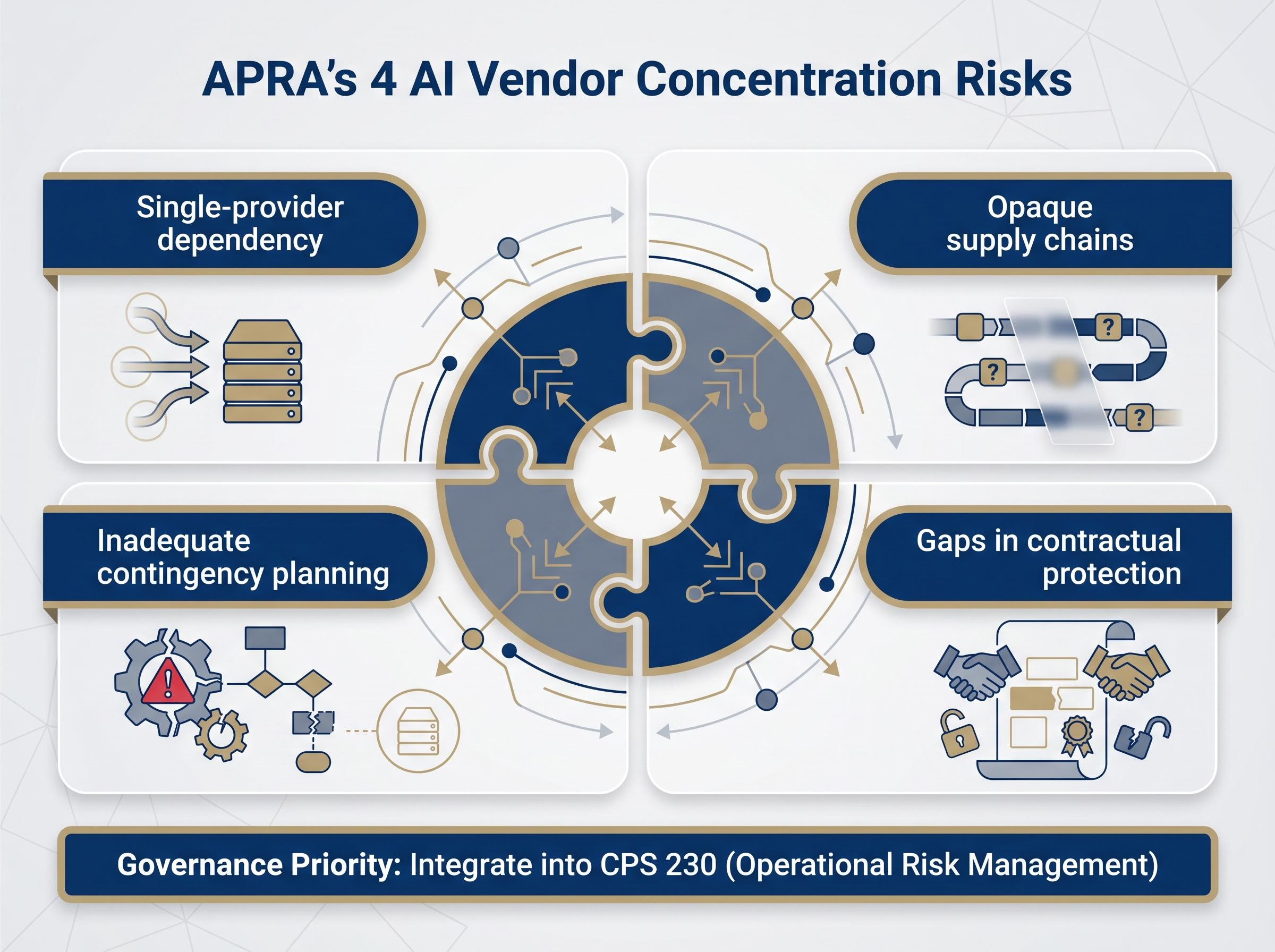

APRA’s review surfaced a vulnerability that may be less visible to senior leadership than headline AI capabilities: concentration risk in AI vendor relationships. The regulator found that some entities have concentrated significant AI dependency on a single provider across multiple, often critical, use cases.

Four distinct concerns emerged from the review:

- Single-provider dependency: Heavy reliance on one AI vendor for multiple operational functions, creating correlated failure risk

- Opaque supply chains: AI procurement chains that obscure the extent and nature of dependencies from risk and governance teams

- Inadequate contingency planning: Limited or absent fallback arrangements if a sole provider experiences failure, disruption, or contractual withdrawal

- Gaps in contractual protection: Contractual arrangements that leave entities exposed to third-party AI risk without adequate safeguards

The parallels with APRA’s longstanding concerns about cloud provider concentration are direct. The risk is not new in prudential terms; only the technology context has changed.

The Financial Stability Board (FSB) monitoring framework for AI in financial sectors, published in October 2025, identified vendor concentration as a systemic vulnerability across member jurisdictions. That lends international weight to APRA’s domestic findings and situates Australia’s governance gaps within a pattern regulators have observed globally.

What APRA expects entities to do about concentration risk

APRA directed regulated entities to map their AI dependencies comprehensively and to treat concentration risk as a governance priority, not a procurement afterthought. Contingency planning for vendor failure or disruption should be integrated into operational resilience frameworks, consistent with the requirements of CPS 230 (Operational Risk Management). The message is that boards and risk committees, not technology teams alone, own this exposure.
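As an illustration of what mapping AI dependencies could look like in practice, the following is a minimal sketch, not a method drawn from APRA's letter. It assumes an entity keeps a simple inventory of AI use cases; the `AIDependency` record, the `concentration_report` function, the vendor names, and the 50% share threshold are all hypothetical choices made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AIDependency:
    """One AI use case in a hypothetical dependency inventory."""
    function: str       # business function served, e.g. "credit decisioning"
    provider: str       # AI vendor behind the function (names here are made up)
    critical: bool      # whether the entity classifies the function as critical
    has_fallback: bool  # whether a fallback arrangement exists for the function


def concentration_report(deps, max_share=0.5):
    """Flag providers serving more than `max_share` of critical functions,
    and list critical functions with no fallback arrangement.

    `max_share` is an illustrative threshold, not a regulatory figure.
    """
    critical = [d for d in deps if d.critical]
    by_provider = defaultdict(list)
    for dep in critical:
        by_provider[dep.provider].append(dep.function)

    total = len(critical)
    concentrated = {
        provider: sorted(functions)
        for provider, functions in by_provider.items()
        if total and len(functions) / total > max_share
    }
    no_fallback = sorted(d.function for d in critical if not d.has_fallback)
    return {
        "concentrated_providers": concentrated,
        "critical_without_fallback": no_fallback,
    }


# Hypothetical inventory for illustration only.
inventory = [
    AIDependency("credit decisioning", "VendorA", critical=True, has_fallback=False),
    AIDependency("fraud detection", "VendorA", critical=True, has_fallback=True),
    AIDependency("customer service chatbot", "VendorA", critical=False, has_fallback=False),
    AIDependency("claims triage", "VendorB", critical=True, has_fallback=False),
]

report = concentration_report(inventory)
print(report["concentrated_providers"])
print(report["critical_without_fallback"])
```

In this made-up inventory, VendorA serves two of the three critical functions and is flagged, while two critical functions are listed as lacking a fallback. A real mapping exercise would also need to trace AI embedded inside third-party platforms, which a flat list like this does not capture.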

What APRA’s review actually found: a governance gap across all three sectors

The findings form a pattern of institutional failure, not isolated incidents at a handful of underperforming entities. APRA identified governance structures across the regulated system that have not developed at a rate commensurate with the pace of AI adoption.

| Failure Category | What APRA Found | Relevant Prudential Domain |
|---|---|---|
| Board oversight | Boards demonstrate enthusiasm for AI’s potential but lack the technical literacy to challenge management on associated risks | Governance |
| Risk management frameworks | Risk approaches are frequently fragmented, failing to capture AI’s cross-domain exposure | Operational risk management |
| Assurance processes | Assurance and change management functions have not adapted to the speed and opacity of AI model updates | Information security, data risk |
| Operational resilience | Resilience planning does not account for AI-specific failure modes, including vendor concentration and model degradation | Operational risk management (CPS 230) |

AI risks span operational resilience, cyber security, privacy, and procurement simultaneously. Existing siloed governance structures are struggling to contain risks that cut across every traditional committee boundary.

APRA Member Therese McCarthy Hockey framed the findings as reflecting a systemic gap: governance structures across regulated entities have not kept pace with the speed, scale, and complexity of AI adoption, and boards must treat AI risk as a board-level accountability issue.

For finance professionals and regulated entity staff, the board oversight finding carries the most immediate commercial weight. APRA considers AI risk a matter of board-level accountability, not a concern to be delegated to technology or compliance teams alone.

APRA’s oversight of super funds has already surfaced board accountability failures in the superannuation sector that mirror the pattern identified in the AI governance review: funds demonstrated strategic enthusiasm at board level while lacking the analytical rigour to challenge management on the underlying risks being taken with member capital.

How AI is changing the cyber threat calculus for Australian financial institutions

APRA’s letter moves beyond governance and into threat assessment. The regulator found that frontier AI models materially change the cyber threat environment facing Australian financial institutions, and not in their favour.

Three dimensions of elevated cyber threat were identified:

- Increased probability: Frontier AI models enhance adversaries’ ability to discover and exploit system vulnerabilities, raising the baseline likelihood of successful attacks

- Increased speed of exploitation: AI-accelerated reconnaissance and attack generation compress the window between vulnerability discovery and active exploitation

- Increased scale of potential impact: AI-enabled attacks can be executed at a scale and level of sophistication that outstrips many current defensive frameworks

APRA specifically referenced Anthropic’s Claude Mythos as an example of a frontier AI model anticipated to elevate cyber attack probability, speed, and scale, a concrete illustration of the dual-use challenge facing regulated entities.

The dual-use framing is explicit: the same AI capabilities that benefit institutions can be weaponised against them. Information security practices across the regulated system are struggling to keep pace with this shift in the threat environment.

The timing reinforces the urgency. On 28-29 April 2026, just days before APRA’s letter, Bundesbank chief supervisor Michael Theurer stated (as reported by Reuters) that European banks need access to frontier models specifically to defend against AI-enabled cyberattacks. Two major prudential regulators arrived at converging conclusions within the same week, reflecting a shared global read on frontier AI risk that Australian institutions now operate within.

Understanding what APRA actually regulates: the existing standards framework

APRA is not introducing new AI-specific regulation. Instead, the regulator is directing entities to apply existing prudential standards to AI risks, a distinction that carries material compliance implications. The obligations are already live; only the application to AI contexts is being clarified.

The key existing standards most directly implicated include:

- CPS 234 (Information Security): Requires entities to maintain information security capabilities commensurate with the size and extent of threats to their information assets, now explicitly inclusive of AI-driven threats

- CPS 230 (Operational Risk Management): Sets expectations for operational resilience, business continuity, and third-party risk management, directly relevant to AI vendor concentration

- CPG 235 (Data Risk Management): Provides guidance on managing risks associated with data quality, governance, and security, all areas where AI deployment amplifies existing exposure

- Governance standards: Existing board oversight obligations that APRA now expects to encompass AI-related risk assessment and challenge

How APRA’s supervisory model creates compliance urgency without new rules

APRA’s supervisory model operates through a cycle of standards, reviews, and escalation. The regulator sets standards, conducts supervisory reviews to assess compliance, and expects entities to self-remediate where gaps are identified.

The formal supervisory letter format itself signals elevated attention, distinct from informal guidance or information papers. Where measurable improvement is not demonstrated, APRA’s supervisory toolkit includes intensified oversight, the imposition of licence conditions, and enforcement action. The expectation of progress is not optional; it carries real supervisory consequence.

The commercial market for AI governance platforms is already responding to the regulatory tailwind APRA has formalised, with vendors targeting financial services, defence, and government sectors under frameworks including the EU AI Act and, increasingly, APRA’s own prudential standards.

Australia’s financial system faces a narrowing window to close the AI governance gap

APRA’s stated forward posture combines ongoing supervisory monitoring with engagement across government agencies, industry participants, and international regulatory counterparts. The Bundesbank’s parallel intervention on frontier AI cyber risk, issued within days of APRA’s letter, indicates that Australian institutions are operating in a globally converging supervisory environment, even where formal coordination between regulators is not yet explicit.

APRA has not set a public remediation deadline. The absence of a specific date does not signal patience; the supervisory review format and letter issuance establish that the next supervisory interaction will assess whether progress has been made.

APRA stated its objective as preserving the safety and resilience of the broader Australian financial system, a framing that positions AI governance failures as a systemic concern rather than an institutional compliance matter.

The decision not to introduce new regulatory requirements is deliberate policy, not an indication that the regulator considers the current position adequate. APRA has placed the expectation formally on the record: the window for treating AI governance as aspirational rather than operational is closing.

The APRA letter is the starting gun, not the finish line

Three findings from the 30 April 2026 letter carry the most weight for regulated entities going forward: the board oversight deficit, the acceleration of AI-enabled cyber threats, and the concentration risk embedded in vendor relationships. Each represents a governance obligation that APRA considers enforceable under existing prudential standards, not contingent on future regulation.

APRA Member Therese McCarthy Hockey has put the regulator’s position on the public record. The supervisory expectation is now documented, and the next review cycle will measure progress against it.

APRA’s formal supervisory letter on AI, published 30 April 2026, sets out the regulator’s findings and expectations in full, covering governance structures, vendor concentration, cyber threat escalation, and the application of existing prudential standards to AI risks across all three regulated sectors.

Institutions that treat this letter as a compliance prompt rather than a risk management signal may find themselves on the wrong side of that assessment. The letter is the starting gun; the supervisory scrutiny that follows will determine who moved fast enough.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions.

Frequently Asked Questions

What is APRA AI risk management and why does it matter for Australian financial institutions?

APRA AI risk management refers to the Australian Prudential Regulation Authority's expectations that banks, insurers, and superannuation trustees apply existing prudential standards to govern, monitor, and control AI deployment. It matters because APRA's April 2026 supervisory letter found that governance frameworks across all three sectors have materially failed to keep pace with the speed and complexity of AI adoption.

What specific AI governance failures did APRA identify in its 2026 supervisory review?

APRA identified four core failure categories: boards lacking the technical literacy to challenge management on AI risks, fragmented risk management frameworks that fail to capture AI's cross-domain exposure, assurance processes that have not adapted to the speed and opacity of AI model updates, and operational resilience plans that do not account for AI-specific failure modes including vendor concentration and model degradation.

How does AI vendor concentration risk affect APRA-regulated entities?

APRA found that some regulated entities have concentrated significant AI dependency on a single provider across multiple critical use cases, creating correlated failure risk if that provider experiences disruption or contractual withdrawal. Entities are now expected to map AI dependencies comprehensively and integrate contingency planning into their operational resilience frameworks under CPS 230.

Which existing prudential standards apply to AI risk under APRA's current expectations?

APRA is not introducing new AI-specific rules but expects entities to apply CPS 234 (Information Security), CPS 230 (Operational Risk Management), and CPG 235 (Data Risk Management) to AI contexts, alongside existing board governance obligations that now encompass AI-related risk assessment and challenge.

What action should APRA-regulated institutions take following the April 2026 supervisory letter?

Regulated institutions should treat the April 2026 letter as an enforceable supervisory prompt rather than guidance, prioritising board-level AI risk accountability, comprehensive AI vendor dependency mapping, strengthened contingency planning, and updated information security practices that account for AI-accelerated cyber threats. The next supervisory review cycle will assess measurable progress against these expectations.