Why AI Hardware Stocks Are Beating the U.S. Chip Giants in 2026

Key Takeaways

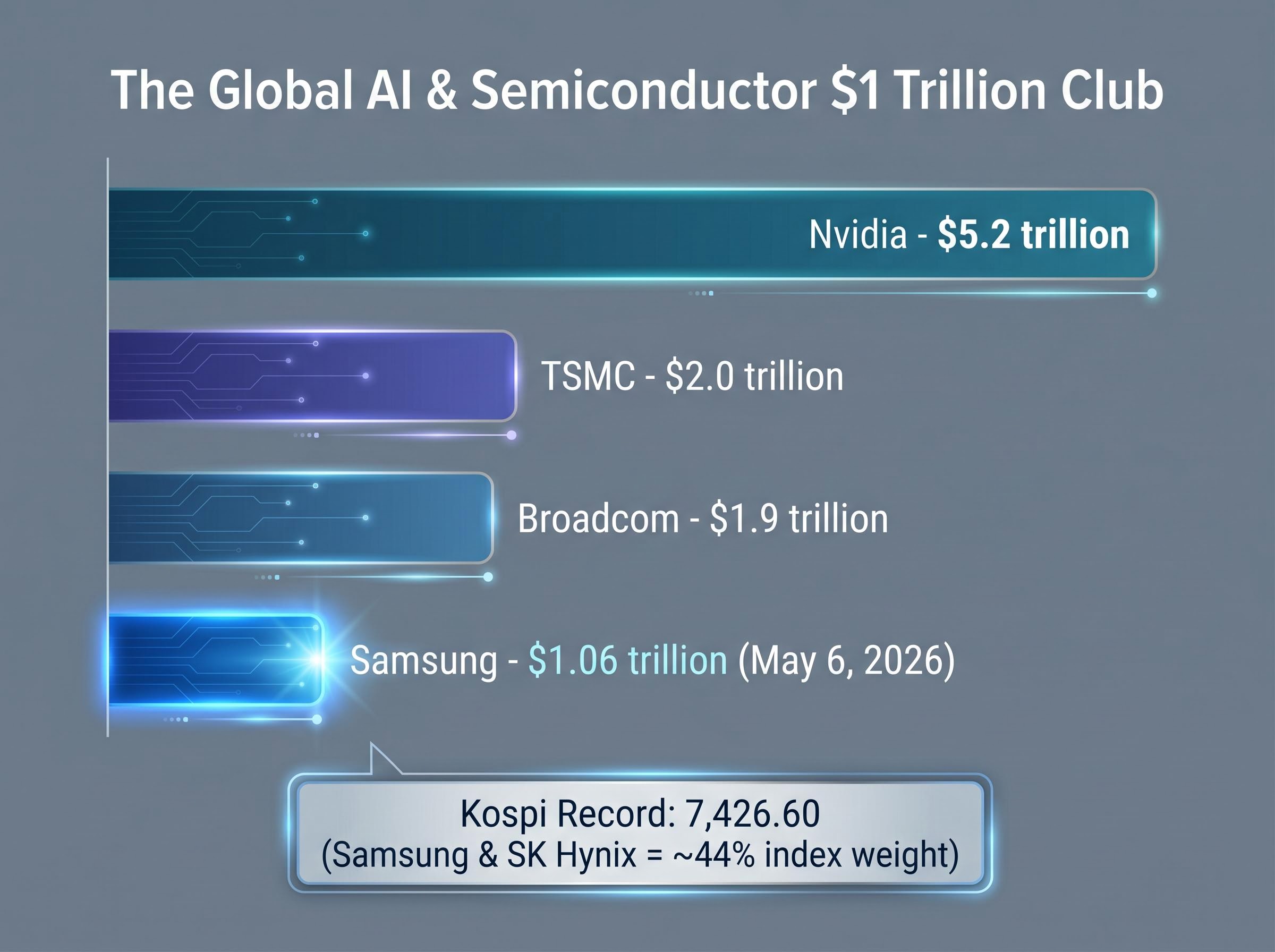

- Samsung Electronics crossed $1 trillion in market capitalisation on 6 May 2026, becoming only the second Asian company after TSMC to reach that threshold, as institutional capital rotated into AI hardware stocks on the same day AMD fell approximately 16%.

- Foreign investors poured a record approximately $2.88 billion into Korean equities in a single session, concentrated in Samsung and SK Hynix, which together comprise roughly 44% of the Kospi index.

- SK Hynix holds an estimated 52-70% of Nvidia's HBM orders, while Samsung is working to close a qualification gap that represents the most critical variable in its semiconductor revenue outlook.

- ASML holds a global monopoly on EUV lithography equipment, making it an upstream dependency for every advanced AI chip manufactured by TSMC, Samsung, and others, and its stock gained approximately 45% year-to-date as of May 2026.

- Asian semiconductor stocks traded at a forward P/E of approximately 12x as of early May 2026, roughly half the Nasdaq 100's multiple, despite earnings growth forecasts running nearly three times faster than those of their U.S. peers, creating a potential valuation rerating catalyst.

On 6 May 2026, Samsung Electronics crossed $1 trillion in market capitalisation during early Seoul trading, a threshold only one other Asian company has ever reached. The milestone arrived on the same day AMD shares fell roughly 16% on earnings. That is not a coincidence. It is the clearest signal yet that institutional capital is rotating out of U.S. fabless semiconductor names and into the Asian companies that build the physical infrastructure AI actually runs on.

Memory, foundry, and lithography stocks are attracting capital at a pace reflected directly in the Kospi’s record intraday high of 7,426.60. Foreign investors alone poured approximately $2.88 billion (4.2557 trillion won) into Korean equities on that single day. The shift is measurable, concentrated, and accelerating.

What follows examines what Samsung’s milestone reveals about where AI hardware investment flows are heading, which companies are positioned in that current, and what investors should understand before adding Asian hardware exposure to a portfolio.

Samsung just joined a very exclusive club, and the timing explains everything

Samsung’s market capitalisation reached an intraday peak of approximately 1,540 trillion won (roughly $1.06 trillion), making it only the second Asian company to cross the $1 trillion threshold after TSMC. The Kospi gained approximately 5.12% on the session, setting a record high of 7,426.60.

The same day, AMD fell roughly 16% on earnings. A U.S. fabless chipmaker selling off while an Asian memory manufacturer hits an all-time valuation record, on the same trading day, is a divergence that demands attention.

- Samsung’s market capitalisation peaked at approximately $1.06 trillion on 6 May 2026

- The Kospi reached a record intraday high of 7,426.60, with Samsung and SK Hynix together comprising approximately 44% of index weight

- Samsung became only the second Asian company to reach $1 trillion, after TSMC ($2.0 trillion), in a global tier led by Nvidia ($5.2 trillion) and Broadcom ($1.9 trillion)

Foreign investors made net purchases of approximately $2.88 billion in Korean shares on 6 May alone, a record single-day inflow.

The money did not flow into broad Korean equities. It flowed into the companies building AI’s physical supply chain.


Why AI money is moving toward hardware and away from software promises

The rotation has a mechanical explanation. AI model training and inference at scale requires enormous quantities of high-bandwidth memory (HBM) and advanced logic fabrication. These are physical products manufactured in physical fabs, not software deliverables on quarterly guidance slides.

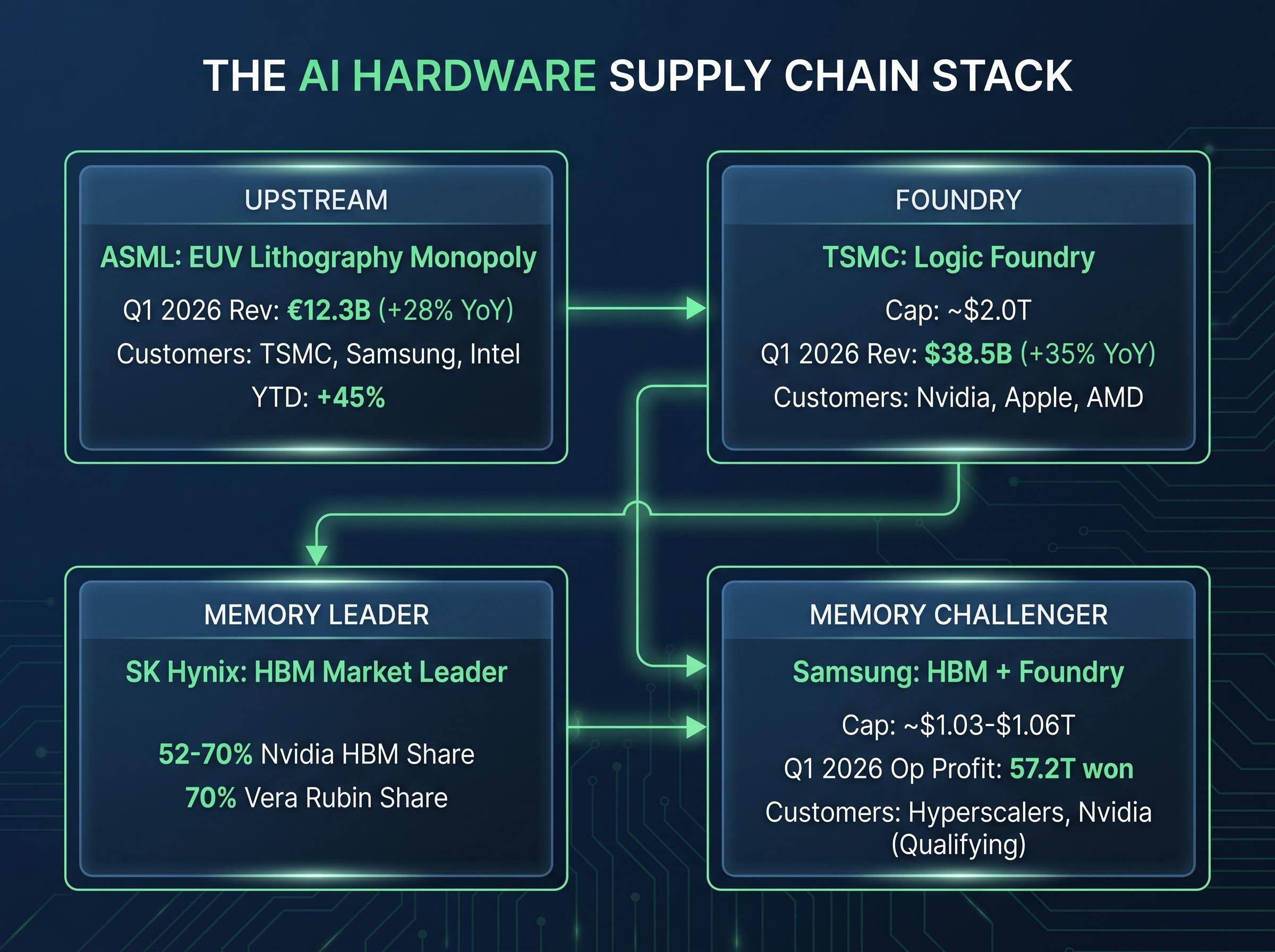

Samsung (HBM memory), SK Hynix (HBM market leader), TSMC (logic foundry), and ASML (extreme ultraviolet lithography) form a supply chain that cannot be replicated by U.S. software or fabless companies. More importantly, that supply chain is currently capacity-constrained, a condition that creates pricing power and translates directly into earnings beats rather than earnings projections.

| Company | Q1 2026 Revenue / Operating Profit | YoY Growth / Segment Detail | Primary AI Driver |
|---|---|---|---|
| Samsung | 57.2 trillion won (operating profit) | Semiconductor division: 53.7 trillion won (94% of total) | HBM memory for AI data centres |
| TSMC | $38.5 billion (revenue) | +35% YoY | Logic foundry for Nvidia AI accelerators |
| ASML | €12.3 billion (revenue) | +28% YoY | EUV lithography monopoly |

These are not speculative bets on AI adoption. They are supply-constrained producers with confirmed hyperscaler demand delivering earnings growth now.
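The concentration in Samsung's row of the table above can be checked with simple arithmetic. The sketch below uses only the two operating profit figures quoted there; the variable names are illustrative.

```python
# Q1 2026 operating profit figures quoted in the table above (trillion won).
total_operating_profit = 57.2
semiconductor_operating_profit = 53.7

# Share of group operating profit earned by the semiconductor division.
semiconductor_share = semiconductor_operating_profit / total_operating_profit

# Whatever remains is attributable to all other segments combined.
other_segments_profit = total_operating_profit - semiconductor_operating_profit

print(f"Semiconductor share of operating profit: {semiconductor_share:.1%}")
print(f"All other segments combined: {other_segments_profit:.1f} trillion won")
```

The result is consistent with the roughly 94% share quoted in the table, leaving about 3.5 trillion won for every other business line combined.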

The scale of this reallocation becomes clearer when viewed through the lens of global capital flows in 2026, where the SOXX ETF posted a 40.4% single-month gain in April and total market inflows reached $86 billion, a pattern analysts describe as structural realignment rather than a temporary sentiment swing.

The supply constraint that turns demand into pricing power

HBM is a specialised memory product requiring months of customer qualification and years of fab investment. Supply cannot respond quickly to demand spikes. Samsung and SK Hynix together account for the overwhelming majority of global HBM output, creating an oligopoly position that shields margins. According to Reuters, HBM shortages are projected to worsen through 2027 due to limited fab expansion capacity.

The map of Asian AI hardware: what each major player actually does

Treating “Asian AI hardware” as a single category risks misallocation. Each company serves a distinct part of the AI stack, with different risk and return profiles.

TSMC operates the foundry that fabricates Nvidia’s AI accelerators at leading-edge process nodes. Its $2.0 trillion valuation reflects that dominance in leading-edge fabrication. TSMC does not manufacture memory; it complements the Korean memory firms rather than competing with them.

Samsung and SK Hynix supply the HBM memory that AI processors require. SK Hynix currently holds the lead, with an estimated 52-70% of Nvidia’s HBM orders, according to Seeking Alpha, including a reported 70% share for the Vera Rubin platform. Samsung is investing heavily to close that gap.

ASML sits upstream of all of them. Without ASML’s EUV lithography tools, neither TSMC nor Samsung can manufacture advanced chips. ASML holds a global monopoly on this technology.

- ASML’s EUV monopoly means every advanced AI chip, whether logic or memory, depends on its equipment

- U.S. export restrictions on ASML’s most advanced tools to China create both regulatory risk and a competitive moat for non-Chinese customers

- ASML stock was up approximately 45% year-to-date as of May 2026

U.S. chip export restrictions on ASML tools have expanded significantly since October 2022, and proposed legislation such as the MATCH Act targets both EUV and DUV lithography systems. Together these create a regulatory layer that shapes which fabs can access leading-edge equipment and, by extension, which customers benefit from that constraint.

| Company | Market Cap (May 2026) | Role in AI Stack | Key Customer Relationship |
|---|---|---|---|
| TSMC | ~$2.0 trillion | Logic foundry (advanced node fabrication) | Nvidia, Apple, AMD |
| Samsung | ~$1.03-$1.06 trillion | HBM memory + foundry | Hyperscalers, Nvidia (qualification in progress) |
| SK Hynix | Major Kospi constituent | HBM memory (market leader) | Nvidia (52-70% HBM share) |
| ASML | Major European constituent | EUV lithography equipment | TSMC, Samsung, Intel |

What HBM actually is and why it has become the chokepoint of the AI era

High-bandwidth memory, or HBM, is a type of memory chip made by stacking multiple layers of DRAM (the standard memory in most electronics) on top of each other and connecting them with tiny vertical channels called through-silicon vias. This stacking delivers far higher data bandwidth to AI processors than conventional memory can, which is what makes it indispensable for AI workloads.

Large AI models, particularly the transformer architectures behind generative AI, require extremely fast memory access during both training runs and real-time inference. Standard DRAM cannot deliver the bandwidth these models need. HBM can.
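A back-of-the-envelope sketch shows why the wide, stacked interface matters. Peak bandwidth is roughly the interface width multiplied by the per-pin data rate. The figures below are illustrative assumptions rather than numbers from this article: a 1,024-bit interface at 6.4 Gbit/s per pin for an HBM3-class stack, and a 64-bit interface at the same pin rate for a conventional DRAM module.

```python
def peak_bandwidth_gb_per_s(interface_width_bits: int, pin_rate_gbit_per_s: float) -> float:
    """Theoretical peak bandwidth in gigabytes per second (8 bits per byte)."""
    return interface_width_bits * pin_rate_gbit_per_s / 8


# Assumed, illustrative interface parameters (not taken from the article).
hbm_stack_bandwidth = peak_bandwidth_gb_per_s(1024, 6.4)   # one HBM3-class stack
dram_module_bandwidth = peak_bandwidth_gb_per_s(64, 6.4)   # one conventional 64-bit module

bandwidth_ratio = hbm_stack_bandwidth / dram_module_bandwidth

print(f"HBM stack:   {hbm_stack_bandwidth:.1f} GB/s")
print(f"DRAM module: {dram_module_bandwidth:.1f} GB/s")
print(f"Ratio:       {bandwidth_ratio:.0f}x")
```

Under these assumptions a single stack delivers around 16 times the bandwidth of the conventional module at the same pin speed, and AI accelerators typically place several stacks next to the processor.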

The product has evolved through several generations:

- HBM3: Earlier generation deployed in initial AI data centre builds

- HBM3E: Current AI data centre standard; SK Hynix is already sampling 12-layer stacks, and Samsung is ramping production

- HBM4: Next generation targeting 2027 AI workloads; Samsung is targeting mass production for Q4 2026, while SK Hynix demonstrated prototypes at GTC 2026 (March) and CES 2026 (January)


The DRAM pricing cycle amplifies the supply constraint: overall DRAM prices have risen approximately 180% since early 2026, and the HBM segment of that market is projected to reach $16.72 billion by 2033. That trajectory gives Samsung and SK Hynix sustained pricing power well beyond the current quarter.

Each generational transition requires not only supplier investment but also extensive customer qualification testing, a process that takes months and creates significant switching costs.

Samsung vs. SK Hynix: the qualification gap that matters to investors

AI customers, including Nvidia and major hyperscalers, require lengthy qualification testing before accepting a memory supplier into their production supply chain. Samsung currently trails SK Hynix in HBM qualification for major AI programmes. Closing this gap is the single most important variable for Samsung’s HBM revenue trajectory and, by extension, for its ability to sustain a $1 trillion valuation on semiconductor earnings alone.


What the rotation signals and how to think about it as an investor

The rotation thesis is explicit: institutional capital is shifting from U.S. fabless and software-adjacent AI names toward Asian hardware companies with direct physical supply chain exposure, lower price-to-earnings multiples, and confirmed AI earnings. The question for investors is whether to treat this as a trend with further room to run or a momentum trade approaching exhaustion.

Signals the rotation is continuing:

- Sustained foreign inflows into Korean and Taiwanese equities (the $2.88 billion single-day record on 6 May is the most recent data point)

- Earnings beats from HBM suppliers on AI-specific revenue lines

- New hyperscaler supply agreements with Samsung or SK Hynix

Risks that could reverse it:

- U.S. export control escalation affecting Asian chip exports to China or restricting technology transfer

- A memory pricing cycle downturn as Samsung adds HBM capacity and supply catches up with demand

- Cooling AI capital expenditure from hyperscalers, which would reduce the order backlog that supports current pricing

The PHLX SOX semiconductor index gained approximately 55% year-to-date as of 6 May 2026, according to Morningstar, while the Kospi’s semiconductor-driven performance reportedly outpaced it. The Korean won strengthened approximately 1.7% versus the U.S. dollar on the session, reflecting inflow pressure.

Analysts note that price-to-earnings multiples on Korean semiconductor stocks have compressed even as earnings were upgraded, implying further multiple expansion potential if institutional allocation to Asia continues.

The emerging-markets case for the AI boom runs deeper than a single trading session: the MSCI AC Asia Pacific IT Index traded at a forward P/E of approximately 12x as of early May 2026, roughly half the Nasdaq 100’s multiple, while earnings growth forecasts for the same companies ran nearly three times faster than those for their U.S. peers.
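One hedged way to combine those two ratios is a PEG-style comparison, which divides the P/E multiple by expected earnings growth. The article gives only the 12x multiple and the two relative ratios, so the 24x Nasdaq 100 multiple and the 10% U.S. growth rate below are hypothetical placeholders; the relative result depends only on the ratios, not on those placeholders.

```python
def peg_ratio(forward_pe: float, earnings_growth_pct: float) -> float:
    """P/E divided by expected earnings growth (in percent)."""
    return forward_pe / earnings_growth_pct


asia_forward_pe = 12.0                  # quoted in the article
nasdaq_forward_pe = 24.0                # assumption: "roughly half" implies about 24x
us_growth_pct = 10.0                    # hypothetical placeholder, not from the article
asia_growth_pct = 3.0 * us_growth_pct   # "nearly three times faster", rounded up to 3x

asia_peg = peg_ratio(asia_forward_pe, asia_growth_pct)
us_peg = peg_ratio(nasdaq_forward_pe, us_growth_pct)

# Ratio of the two PEGs: (12 / 24) / 3, independent of the placeholder growth rate.
relative_peg = asia_peg / us_peg

print(f"Asia PEG relative to U.S. PEG: {relative_peg:.2f}")
```

On these rounded inputs, Asian hardware names would be priced at roughly one sixth of the U.S. growth-adjusted multiple. Because "roughly half" and "nearly three times" are approximations, the figure is indicative only.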

Samsung’s non-semiconductor segments add a layer of risk: smartphone profits fell 35% and display profits declined 20% in Q1 2026, making the stock a concentrated memory bet at its current valuation.

The valuation gap between Asian hardware and U.S. AI names

TSMC at $2.0 trillion and Samsung at approximately $1.03-$1.06 trillion trade at materially lower valuation multiples than Nvidia at $5.2 trillion, despite being direct supply chain dependencies for the same AI buildout. When earnings grow faster than a stock price appreciates, multiples compress, and that compression creates a potential rerating catalyst. If institutional allocation to Asia continues at the pace recorded on 6 May, that rerating may have room to develop further.
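The compression mechanic described above can be sketched with hypothetical numbers. None of the inputs below come from the article; they simply illustrate that when earnings rise faster than the share price, the multiple falls, and the gap back to the starting multiple is the potential rerating.

```python
def multiple_after_moves(starting_pe: float, price_change: float, earnings_change: float) -> float:
    """P/E after price and earnings each move by a fractional change."""
    return starting_pe * (1 + price_change) / (1 + earnings_change)


# Hypothetical inputs for illustration only.
starting_pe = 15.0       # multiple before the moves
price_change = 0.20      # share price up 20%
earnings_change = 0.50   # forward earnings up 50%

compressed_pe = multiple_after_moves(starting_pe, price_change, earnings_change)

# Price gain needed to return to the starting multiple at the new earnings level.
rerating_upside = starting_pe / compressed_pe - 1

print(f"Multiple after the moves: {compressed_pe:.1f}x")
print(f"Upside if the multiple reverts: {rerating_upside:.0%}")
```

In this illustration the multiple falls from 15x to 12x even though the price rose, and a return to 15x would imply a further 25% gain. Whether any rerating occurs depends on the earnings forecasts actually being delivered.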

The $1 trillion threshold is a milestone, but the structural shift it reflects is what persists

Samsung’s $1 trillion market capitalisation is a data point. The underlying shift, where AI hardware investment is concentrating in the Asian companies that manufacture memory and fabricate logic, and in the European supplier of their lithography equipment, is the story that persists beyond any single trading session.

TSMC, Samsung, SK Hynix, and ASML collectively form a supply chain chokepoint for the global AI buildout. That position is not easily displaced in the near term. The next catalysts to monitor include HBM4 production ramps expected through 2027, continued hyperscaler AI capital expenditure commitments, and whether Samsung can close its qualification gap with SK Hynix for major AI programmes.

Investors evaluating AI hardware exposure should consider three specific variables: the balance between foundry and memory names in their current semiconductor holdings; the HBM qualification status of Samsung versus SK Hynix as a leading indicator of earnings trajectory; and ASML as an upstream infrastructure position less exposed to any single memory supplier’s competitive standing.

For investors wanting to understand the Samsung foundry angle in more depth, our full explainer on the Apple foundry talks covers the operational readiness of Samsung’s Taylor, Texas facility, the market’s divergent reactions to Intel and Samsung on the day the news broke, and a practical framework for distinguishing preliminary supplier discussions from confirmed order flow.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions. Past performance does not guarantee future results.

Frequently Asked Questions

What is high-bandwidth memory (HBM) and why does it matter for AI?

High-bandwidth memory (HBM) is a type of memory chip made by stacking multiple layers of DRAM connected through tiny vertical channels, delivering far higher data bandwidth than conventional memory. AI workloads, particularly large transformer models used in generative AI, require this speed for both training and real-time inference, making HBM an indispensable component in AI data centres.

Which Asian semiconductor companies are most exposed to the AI hardware investment trend?

The four companies with the most direct exposure are TSMC (logic foundry for Nvidia AI accelerators), Samsung and SK Hynix (the dominant producers of HBM memory), and, upstream of all three, the European equipment maker ASML (which holds a global monopoly on the EUV lithography equipment required to manufacture advanced chips). Together they form a supply chain chokepoint for the global AI buildout.

Why did Samsung Electronics reach a $1 trillion market cap on the same day AMD fell 16%?

The divergence reflects institutional capital rotating out of U.S. fabless semiconductor companies, which rely on outsourced manufacturing, and into Asian hardware companies that physically manufacture the memory and logic chips AI infrastructure requires. Foreign investors poured approximately $2.88 billion into Korean equities on 6 May 2026 alone, a record single-day inflow concentrated in companies like Samsung and SK Hynix.

How does SK Hynix compare to Samsung in HBM supply for Nvidia?

SK Hynix currently leads the HBM market, holding an estimated 52-70% of Nvidia's HBM orders, including a reported 70% share for the Vera Rubin platform. Samsung is investing heavily to close the qualification gap, and its ability to win a larger share of Nvidia's HBM orders is considered the single most important variable for its semiconductor revenue trajectory.

What risks could reverse the rotation into Asian AI hardware stocks?

The three primary risks are: escalation of U.S. export controls affecting Asian chip exports or technology transfers; a memory pricing cycle downturn if Samsung significantly expands HBM capacity and supply overtakes demand; and a slowdown in AI capital expenditure from hyperscalers, which would reduce the order backlog currently supporting elevated HBM pricing.