AI Sounds Like a Financial Adviser, but It Isn’t One

Key Takeaways

- AI tools including ChatGPT, Claude, and Gemini achieve only around 70% accuracy on Australian financial queries, meaning roughly three in ten responses may contain errors delivered with the same confident tone as correct ones.

- Under Australian law, AI platforms cannot provide personalised financial advice without a licence, and retail investors acting on unlicensed AI-generated recommendations have no regulatory protections if the output is wrong.

- SMSF rules, superannuation contribution limits, and ASIC compliance content represent the highest-risk error categories for AI tools, precisely the areas where retail investors are least likely to catch a mistake.

- Legitimate uses for AI in financial research include concept education, scenario modelling with user-supplied data, document summarisation, and generating informed questions to raise with a licensed adviser.

- No standalone AI financial advice regulation had been finalised in Australia as of May 2026, leaving the gap between what these tools can produce and what they are legally permitted to deliver unresolved.

Almost one in five Gen Z Australians now turn to AI for financial information, according to Moneysmart research published in 2026. The tools they are using, including ChatGPT, Claude, and Gemini, carry a documented accuracy rate of approximately 70% on Australian financial queries. That means roughly three in ten answers may contain errors, and the tools deliver those errors with the same confident tone as their correct responses.

As AI platforms become household names, Australian retail investors are increasingly using them to research investments, understand superannuation, and draft financial plans. The technology is genuinely impressive. It is also genuinely limited in ways that are not obvious from a well-structured, fluent response. AI financial advice sits in a gap between what these tools can technically produce and what Australian law permits them to deliver.

This article maps exactly where AI adds real value for Australian investors, where it falls short in ways that can cause financial harm, and how to use these tools intelligently without crossing into legally and financially risky territory.

Why Australian investors are turning to AI for financial guidance

The appeal is straightforward. Licensed financial advice in Australia is expensive, often inaccessible for younger or lower-balance investors, and appointment-dependent. AI tools are none of those things, which explains why adoption is accelerating on both sides of the advice relationship.

Three factors drive retail investor interest:

- Accessibility: AI is available around the clock, from any device, with no waitlist or booking required.

- Cost: Consumer-tier tools are free or low-cost, removing the fee barrier that prices many Australians out of licensed advice.

- No-judgment interaction: AI responds to basic or repetitive questions without the social friction some investors associate with adviser meetings.

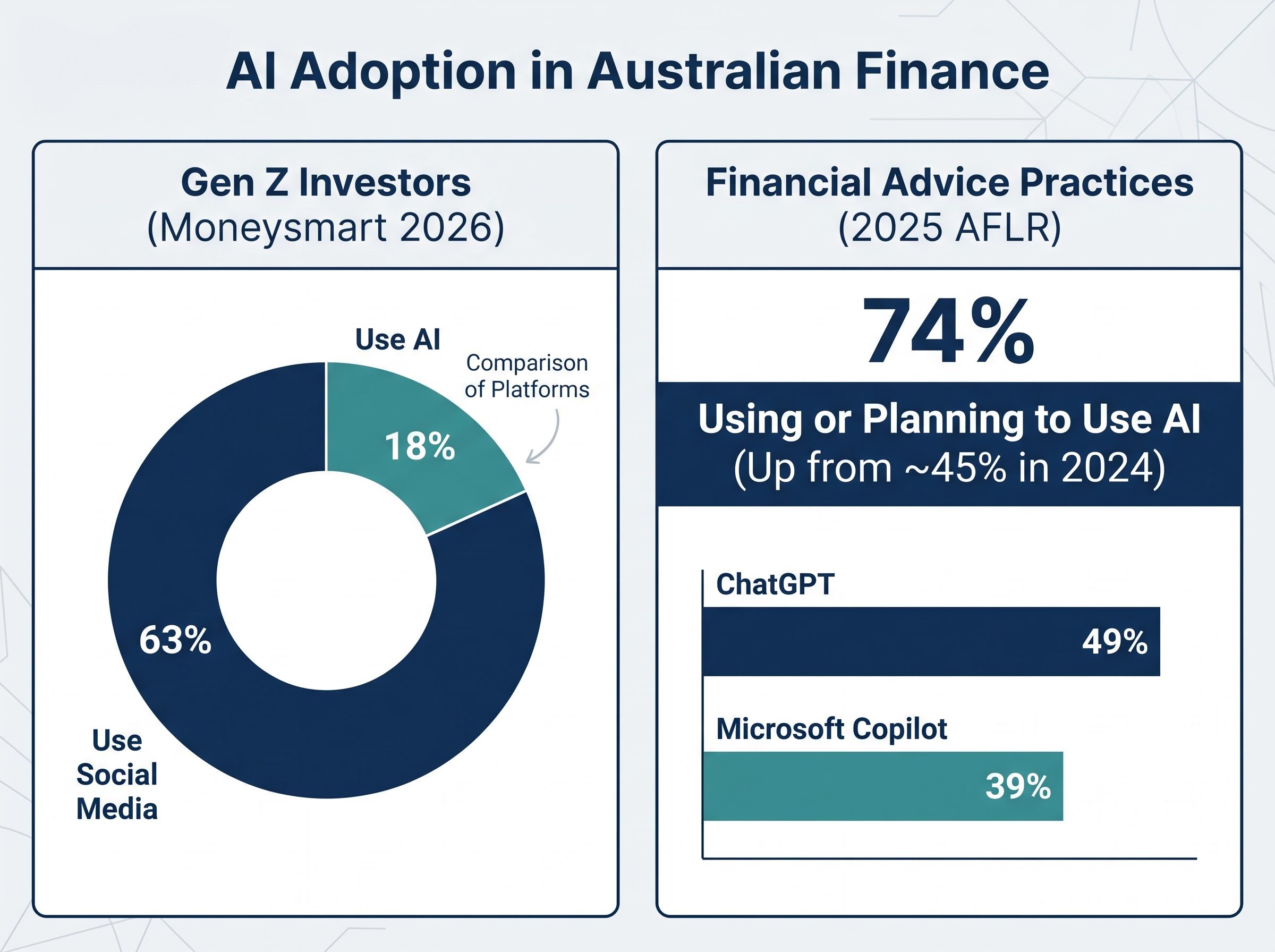

Moneysmart’s 2026 data places AI use at 18% among Gen Z Australians for financial information, sitting alongside a much larger 63% who turn to social media for the same purpose. Both figures reflect a broader shift away from licensed sources as the first point of contact.

Industry adoption is moving faster

On the adviser side, the numbers are even more striking. The 2025 Australian Financial Advice Landscape Report (AFLR, Adviser Ratings) found that 74% of Australian financial advice practices are using or planning to use AI tools, up from approximately 45% in 2024. Approximately 49% of practices use ChatGPT and 39% use Microsoft Copilot, primarily for back-end workflow tasks such as meeting transcription, file notes, and document drafting rather than client-facing advice.

The same fluency and confidence that make AI feel authoritative to retail investors are, however, structurally disconnected from accuracy. Understanding why these tools sound credible is the first step to using them safely.

What the law actually says: AI and financial advice in Australia

The legal position is unambiguous. Under the Corporations Act and ASIC’s general regulatory framework (including RG 36), unlicensed platforms cannot provide personal financial advice. This applies regardless of how sophisticated the tool appears or how specific its output sounds.

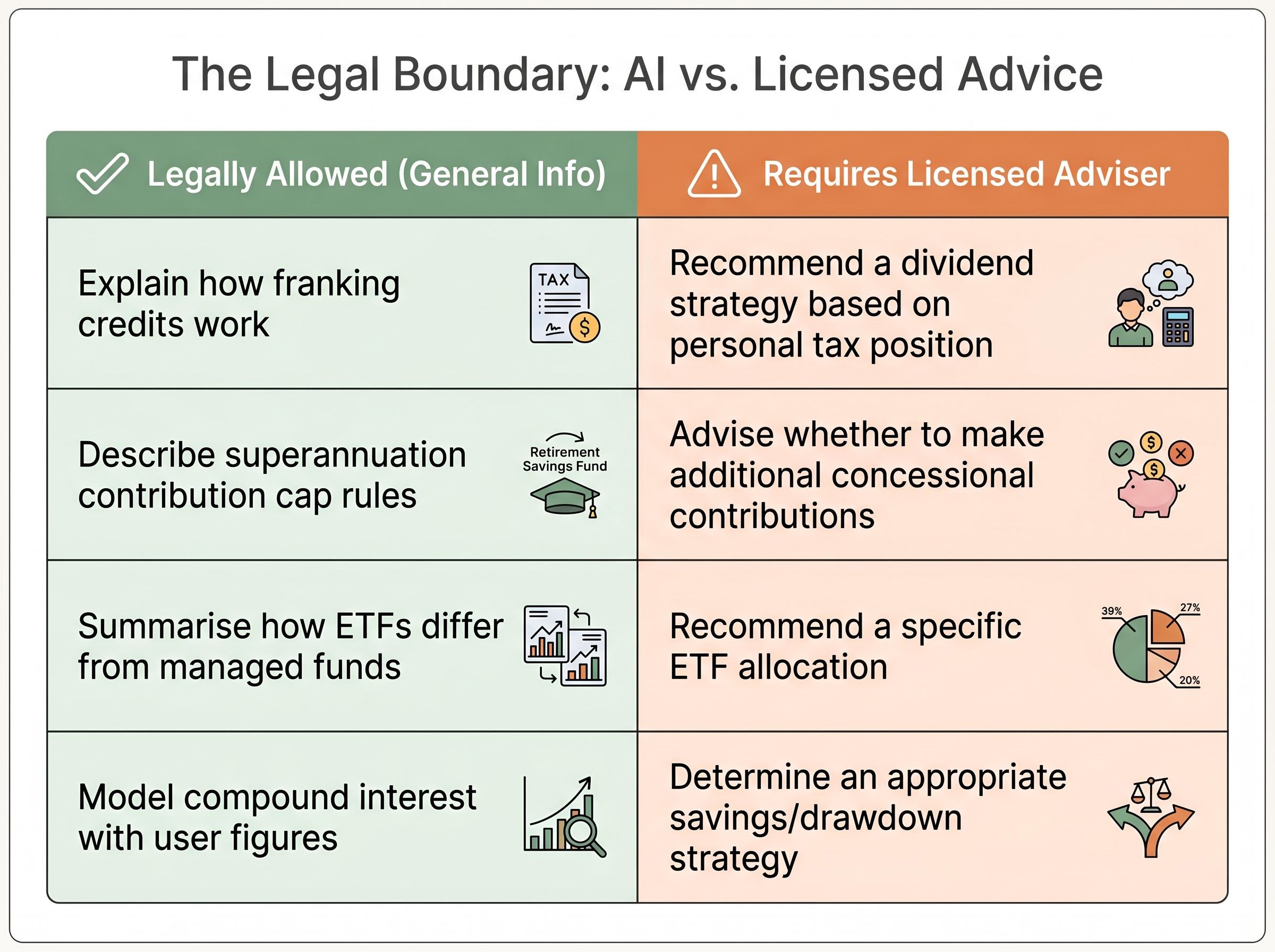

The distinction sits on a clear line: explaining a financial concept is permissible; determining what is appropriate for a specific individual’s circumstances requires a licence. An AI tool can describe how superannuation contribution caps work. It cannot tell a specific user whether they should maximise their concessional contributions this financial year based on their income and existing balance. The moment the output crosses from general education to personalised recommendation, it enters territory that requires a licensed adviser.

FAAA Position: The Financial Advice Association Australia has published guidance noting that “using AI in the advice process can open opportunities” while stressing the need for human oversight and advocating for AI-specific guidelines in submissions to ASIC.

ASIC identifies AI as a systemic risk area in 2026, with a specific enforcement focus on AI-powered investment scams and record scam site takedowns. The FAAA has flagged that AI tools used in client meetings may generate outputs that inadvertently constitute unlicensed advice if not properly supervised. No standalone AI advice regulation had been finalised as of May 2026.

ASIC’s enforcement focus sits alongside a parallel regulatory pressure point: APRA’s April 2026 supervisory letter formally placed AI governance at board level across banking, insurance, and superannuation, identifying insufficient board literacy on AI risks as a central oversight failure rather than a technology team problem.

| What AI can legally do | What requires a licensed adviser |
|---|---|
| Explain how franking credits work | Recommend a dividend strategy based on personal tax position |
| Describe superannuation contribution cap rules | Advise whether to make additional concessional contributions |
| Summarise how ETFs differ from managed funds | Recommend a specific ETF allocation for a portfolio |
| Model compound interest scenarios with user-supplied figures | Determine an appropriate savings or drawdown strategy |

The practical implication for investors is direct: anyone acting on AI-generated personal financial advice from an unlicensed platform has no regulatory protections if the output is wrong.

The accuracy problem: why AI sounds right even when it is wrong

Before looking at the numbers, it helps to understand what these tools are actually doing when they respond to a financial question. AI language models do not retrieve verified facts from a database. They generate probabilistic outputs, selecting the statistically most likely next word based on patterns in their training data. The result is text that reads with authority because it is structured to be fluent, not because it has been verified.

This architecture is what produces hallucinations: plausible-sounding but incorrect outputs delivered with equal confidence to accurate ones. A model generating a response about SMSF contribution rules is performing the same statistical prediction as when it writes a poem. It has no mechanism to flag uncertainty or distinguish between a well-supported fact and a fabricated figure.
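
The mechanism described above can be illustrated with a toy sketch. The candidate words and their scores below are invented for illustration and do not come from any real model; real systems work on sub-word tokens, use vastly larger vocabularies, and often sample rather than always taking the top candidate. The point is only that the selection step ranks candidates by likelihood and contains no check on whether the winner is true.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())
    exps = {tok: math.exp(s - peak) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Hypothetical scores for three candidate figures that could follow a prompt
# such as "The contribution cap is ...". The labels and numbers are made up.
toy_scores = {"figure_A": 2.1, "figure_B": 2.0, "figure_C": 1.4}

probs = softmax(toy_scores)

# The most likely candidate wins. Nothing here verifies it against a source.
next_token = max(probs, key=probs.get)
print(next_token, f"{probs[next_token]:.0%}")
```

In this toy case the winning candidate is only slightly more likely than the runner-up, yet the output text would state it just as fluently as a figure the model had strong support for.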

The 2025 AFLR benchmarked AI tools across more than 500 financial queries and found an overall accuracy rate of approximately 70% on Australian financial content.

| AI Tool | Primary Use Case Accuracy | Australian Super/Regulatory Accuracy | Notable Weakness |
|---|---|---|---|
| ChatGPT (GPT-4o) | ~72% (investment research) | ~65% | Fabricated ASX earnings data; ~28% hallucination rate on Australian financial content |
| Claude 3.5 | ~78% (financial planning/SOA drafting) | Higher error rates on ASIC compliance content | Weaker on regulatory-specific queries |
| Gemini 1.5 | ~68% (portfolio risk assessment) | Reported weaknesses on SMSF rules | SMSF-specific regulatory errors |
| Microsoft Copilot | ~85% (client fact-find transcription) | Not widely tested on regulatory queries | Stronger on structured, document-based tasks |

Some 42% of financial planners cite reliability as a primary concern, according to the same report.
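
To see what an overall accuracy of roughly 70% implies across a research session, the following minimal sketch estimates the chance that at least one answer in a batch contains an error. It assumes every answer is independently correct with the same probability, which is a simplification: actual error rates vary by topic. The function name is hypothetical.

```python
def chance_of_at_least_one_error(accuracy, num_answers):
    """Probability that one or more of `num_answers` responses is wrong,
    assuming each is independently correct with probability `accuracy`."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    if num_answers < 0:
        raise ValueError("num_answers must be non-negative")
    return 1.0 - accuracy ** num_answers

# Approximate overall accuracy reported in the article: 70%.
for n in (1, 3, 5, 10):
    print(n, f"{chance_of_at_least_one_error(0.70, n):.0%}")
```

Under these assumptions, an investor who asks five questions has about an 83% chance of receiving at least one flawed answer, which is why spot-checking a single response says little about the rest.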

Where AI errors are most dangerous for Australian investors

The error rates are not distributed evenly. SMSF rules, superannuation contribution limits, and ASIC compliance represent the highest-risk categories for AI-generated errors. These are precisely the areas where a retail investor without specialist knowledge is least likely to catch a mistake.

Common hallucination failure modes include:

- Fabricated earnings data or company figures for ASX-listed stocks

- Incorrect interpretation of Australian superannuation rules

- Errors on SMSF-specific regulations and compliance requirements

- Misapplication of ASIC regulatory guides to specific scenarios

Community discussions on platforms such as r/AusFinance reflect consistent warnings about AI reliability on SMSF-specific content. The implication is that errors in these areas are more dangerous than general investment concept errors, because they require specialist knowledge to detect and can produce direct financial or compliance consequences.

Where AI genuinely adds value for Australian investors

The accuracy limitations are real, but they do not invalidate the technology. AI tools perform reliably across a defined set of financial tasks, and several Australian firms have already demonstrated how supervised AI deployment can lower costs and improve access to financial information.

The legitimate use cases fall into four categories:

- Concept explanation: Describing how superannuation works, what franking credits are, or how compound interest accumulates. General education tasks where accuracy rates are highest.

- Scenario modelling: Running projections with user-supplied data, such as calculating the impact of different contribution levels on a retirement balance over time.

- Document summarisation: Condensing product disclosure statements, annual reports, or policy documents into readable summaries.

- Question preparation: Generating informed questions to bring to a meeting with a licensed adviser, improving the quality of that conversation.
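
The scenario-modelling use case can be sketched in a few lines. This is a simplified illustration, not a retirement calculator: it assumes a fixed annual return, contributions added at the end of each year, and no fees, taxes, or inflation. The function name, parameters, and input figures are all hypothetical.

```python
def project_balance(starting_balance, annual_contribution, annual_return, years):
    """Project a balance forward with annual compounding.

    Each year the balance grows by `annual_return`, then the contribution
    for that year is added. Fees, tax, and inflation are ignored.
    """
    balance = float(starting_balance)
    for _ in range(years):
        balance = balance * (1.0 + annual_return) + annual_contribution
    return balance

# User-supplied figures (illustrative only): compare two contribution levels.
for contribution in (5_000, 10_000):
    final = project_balance(50_000, contribution, 0.06, 20)
    print(f"${contribution:,} per year -> ${final:,.0f} after 20 years")
```

Because the inputs and assumptions are all supplied by the user and visible in the code, the result can be checked by hand, which is what separates this kind of modelling from asking a tool what contribution level is appropriate.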

Australian deployments already reflect this framework in practice. Hudson Financial Planning launched a free client-facing AI portal (powered by ChatGPT) for questions on budgeting, investing, superannuation, and tax, with adviser supervision retained. YBL.AI, which launched in 2025, provides document scanning, meeting transcription, SOA drafting, and client finance reviews, with early adviser feedback reported as positive. Nuclieos offers SOA automation, portfolio monitoring, ASIC compliance checks, and risk profile generation, positioned explicitly as handling documentation rather than replacing adviser judgment.

George Lucas of YBL.AI has described the platform as re-engineering the advice process to deliver more efficient service, an approach that reshapes how advice is delivered without substituting for the adviser relationship.

Vanguard’s 2025 commentary reinforces the distinction: AI’s strengths lie in data processing and real-time scenario modelling, while human relationships remain irreplaceable for behavioural coaching and trust-building.

The key distinction is between AI as a research and preparation tool versus AI as a decision-making substitute. The former delivers genuine value. The latter carries legal and financial risk.

Superannuation balance benchmarks by age illustrate why this domain is so high-stakes for retail investors: the average Australian aged 50-54 holds approximately $198,400 in super against an ASFA comfortable retirement threshold of $630,000, a gap large enough that an error in contribution strategy, whether AI-generated or otherwise, compounds materially over time.
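
The size of that gap is simple to compute from the two figures quoted above. The short sketch below uses only those figures; the variable names are illustrative.

```python
# Figures quoted in the article.
average_balance_50_54 = 198_400   # average super balance, ages 50-54
comfortable_threshold = 630_000   # ASFA comfortable retirement threshold

shortfall = comfortable_threshold - average_balance_50_54
share_of_target = average_balance_50_54 / comfortable_threshold

print(f"Shortfall: ${shortfall:,}")                # Shortfall: $431,600
print(f"Share of target: {share_of_target:.1%}")   # Share of target: 31.5%
```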

Claude vs ChatGPT: how the leading tools compare for investment tasks

If an Australian investor sat down with both Claude and ChatGPT and asked the same investment research question, what would they notice?

The most immediate difference is headline accuracy, although the reported figures cover different task types. Claude 3.5 scores approximately 78% on financial planning and SOA drafting tasks, compared with ChatGPT’s (GPT-4o) approximately 72% on investment research. That 6-percentage-point gap translates to a meaningful difference in error rates at scale, particularly on structured tasks such as analysing a Statement of Advice or evaluating compliance documentation.
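
A rough sense of what that gap means at scale comes from converting each accuracy figure into expected errors per 1,000 queries. The sketch below assumes the reported rates hold uniformly across queries and sets aside the fact that the two figures were measured on different task types. The function name is hypothetical.

```python
def expected_errors(accuracy, num_queries=1000):
    """Expected number of incorrect answers at a given accuracy rate."""
    return round((1.0 - accuracy) * num_queries)

claude_errors = expected_errors(0.78)    # ~78% reported accuracy
chatgpt_errors = expected_errors(0.72)   # ~72% reported accuracy

print(claude_errors, chatgpt_errors, chatgpt_errors - claude_errors)
# 220 280 60
```

On these assumptions the lower-scoring tool produces about 60 more errors per 1,000 queries, roughly 27% more than the higher-scoring one.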

ChatGPT has the wider deployment base: approximately 49% of Australian advice practices use it, compared to 39% for Microsoft Copilot. It also demonstrates a meaningful improvement trajectory, with accuracy rising from approximately 55% in early 2024 to approximately 72% by 2025, suggesting ongoing model refinements. Some adviser groups have reported preferring Claude over Copilot for business workflows, with Copilot described as underperforming on specific financial planning use cases. In a stock-selection exercise referenced by industry commentators, Claude produced substantially better results.

| Metric | Claude 3.5 | ChatGPT (GPT-4o) |
|---|---|---|
| Primary use case accuracy | ~78% (financial planning/SOA drafting) | ~72% (investment research) |
| Australian super/regulatory accuracy | Higher error rates on ASIC compliance | ~65% on super rules |
| Noted strengths | Structured reasoning; SOA-style output | Wider deployment; improving accuracy trajectory |
| Noted weaknesses | Regulatory-specific content | Hallucinated ASX earnings data; ~28% hallucination rate |

Data privacy consideration: The FPSB’s 2025 study found that 47% of financial planning professionals cite data privacy as a top concern. Inputting personal financial information (account numbers, balances, tax file numbers) into consumer-tier AI tools carries data handling risks that differ substantially from enterprise versions. Only 36% of Australian advice practices pay for professional or enterprise AI versions.

Neither tool should be treated as a substitute for licensed advice. But for investors using AI as a research starting point, tool choice affects the quality of that starting point.

How to use AI for financial research without taking on unnecessary risk

The preceding sections establish what AI can do, what it cannot do legally, and where its accuracy breaks down. What follows is a practical framework for using these tools without crossing into risky territory.

- Use AI for concept education, not personal decisions. Queries about how superannuation works, what a franking credit is, or how compound interest accumulates are relatively safe. Queries about what you personally should do with your super balance are not.

- Treat every output as a draft, not an answer. Verify any specific figure, rule, or regulatory claim against an authoritative source such as ato.gov.au, moneysmart.gov.au, or ASIC’s published guidance.

- Never input personal financial data into consumer AI tools. Avoid sharing account numbers, tax file numbers, specific balances, or identifying information. The FPSB found that 47% of financial planning professionals flag data privacy as a top concern, and with good reason.

- Use AI to generate better questions. The highest-value use case for retail investors is preparing for a conversation with a licensed adviser. Ask the AI to help identify what questions to raise about a financial product or strategy.

- Cross-check any Australian regulatory claim. SMSF rules, contribution caps, and ASIC compliance requirements are among the highest-error categories. If an AI tool cites a specific regulation or limit, verify it directly.
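
One way to act on the data-privacy point above is to strip obvious identifiers from a prompt before pasting it into a consumer tool. The sketch below is a crude illustration only: the two patterns are assumptions about what sensitive text tends to look like (long digit runs and dollar amounts), they will miss names, addresses, and many other identifiers, and they are no substitute for simply leaving personal details out.

```python
import re

# Runs of eight or more digits, optionally separated by spaces or hyphens
# (for example account-number or tax-file-number style strings).
LONG_DIGIT_RUN = re.compile(r"\b\d(?:[ -]?\d){7,}\b")

# Dollar amounts such as $45,000 or $1,250.75.
DOLLAR_AMOUNT = re.compile(r"\$\s?\d[\d,]*(?:\.\d+)?")

def redact(text):
    """Mask long digit runs and dollar amounts in a draft prompt."""
    text = LONG_DIGIT_RUN.sub("[ID]", text)
    text = DOLLAR_AMOUNT.sub("[AMOUNT]", text)
    return text

print(redact("My TFN is 123 456 789 and my balance is $45,000.50"))
# My TFN is [ID] and my balance is [AMOUNT]
```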

Red flags that suggest an AI tool is overstepping

Certain outputs should trigger immediate scepticism:

- Specific buy or sell recommendations for named securities

- Personalised superannuation drawdown strategies presented without adviser framing

- Tax advice tied to the user’s stated personal circumstances

- Recommendations for specific investment products with promised returns, which ASIC has flagged as a hallmark of AI-powered scams in its 2026 enforcement priorities

ASIC’s 2026 enforcement action on AI investment scams also identifies confident, personalised output as a hallmark of fraudulent AI-powered platforms.

The presence of confident, specific, personalised output is not evidence of accuracy. It is the default mode of these tools regardless of whether the answer is correct.
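
As a rough illustration of the red flags listed above, the sketch below screens a response for a few tell-tale phrases. The patterns are hypothetical examples chosen for this illustration, and a keyword screen of this kind is easy to evade and prone to false alarms. An empty result does not mean a response is safe or accurate.

```python
import re

# Hypothetical phrase patterns; real outputs vary far more widely than this.
RED_FLAG_PATTERNS = {
    "buy/sell instruction": re.compile(r"\byou should (?:buy|sell)\b", re.IGNORECASE),
    "promised return": re.compile(r"\bguaranteed (?:returns?|profits?|income)\b", re.IGNORECASE),
    "personal drawdown direction": re.compile(r"\byou should (?:withdraw|draw down)\b", re.IGNORECASE),
}

def red_flags(text):
    """Return the labels of any red-flag patterns found in `text`."""
    return [label for label, pattern in RED_FLAG_PATTERNS.items() if pattern.search(text)]

print(red_flags("You should buy XYZ now for guaranteed returns of 12%."))
# ['buy/sell instruction', 'promised return']
```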

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions.

AI is a powerful research assistant, not a licensed adviser, and the difference matters

AI tools are genuinely useful for Australian investors who deploy them as research and preparation tools. They lower the cost of financial education, improve the quality of questions investors bring to adviser meetings, and automate tasks that previously required hours of manual effort.

They are also genuinely risky for those who treat them as replacements for licensed advice. A 70% accuracy rate sounds reasonable until the remaining 30% is examined more closely: those errors concentrate in the most complex and consequential areas of Australian financial regulation, including SMSF compliance, superannuation rules, and ASIC regulatory interpretation.

AI capabilities are improving. ChatGPT’s accuracy rose from approximately 55% to approximately 72% between early 2024 and 2025. But the regulatory framework has not kept pace. No standalone AI advice regulation has been finalised in Australia as of May 2026, meaning the gap between what these tools can produce and what they are legally permitted to deliver remains unresolved. Until that gap closes, the operating principle for Australian investors is straightforward: use AI to become better informed, then take that information to a licensed professional.

For investors who want to move from understanding AI’s limitations to taking concrete action on their superannuation, our dedicated guide to superannuation strategy before the 2026 cap changes covers the carry-forward rules expiring on 30 June 2026, the confirmed cap increases from 1 July, and the investment option switches that can be worth hundreds of thousands of dollars over a working life. Each of these is a topic to raise in a conversation with a licensed adviser.

Past performance does not guarantee future results. AI tool accuracy figures cited in this article reflect reported industry observations and may vary as models are updated.

Frequently Asked Questions

What is AI financial advice and is it legal in Australia?

AI financial advice refers to financial guidance generated by tools like ChatGPT, Claude, or Gemini. In Australia, these tools can legally explain financial concepts but cannot provide personalised recommendations, as doing so requires a licensed adviser under the Corporations Act and ASIC's regulatory framework.

How accurate is ChatGPT for Australian financial questions?

According to the 2025 Australian Financial Advice Landscape Report, ChatGPT (GPT-4o) achieves approximately 72% accuracy on investment research tasks and around 65% accuracy on Australian superannuation and regulatory queries, with a reported 28% hallucination rate on Australian financial content.

What are the biggest risks of using AI for superannuation or SMSF advice?

SMSF rules, superannuation contribution limits, and ASIC compliance are the highest-error categories for AI tools, meaning mistakes are both more likely and harder for retail investors to detect, with errors potentially leading to direct financial or compliance consequences.

How should Australian investors practically use AI tools for financial research?

Australian investors should use AI to understand financial concepts, model scenarios with their own supplied figures, summarise documents, and prepare questions for a licensed adviser, while verifying any specific figures or regulatory claims against authoritative sources like ato.gov.au or moneysmart.gov.au.

Which AI tool is better for financial planning tasks, Claude or ChatGPT?

Claude 3.5 scores approximately 78% accuracy on financial planning and Statement of Advice drafting tasks, compared to ChatGPT's approximately 72% on investment research, though both tools carry meaningful error rates and neither should be used as a substitute for licensed financial advice.