APRA Puts Boards on Notice Over AI Risk Management Gaps

Key Takeaways

- APRA's 30 April 2026 letter escalates supervisory expectations for AI governance across banking, insurance, and superannuation without introducing new rules, applying heavier interpretive weight to existing standards including CPS 234.

- Boards are now explicitly accountable for AI governance gaps, with APRA identifying insufficient board-level AI literacy as a central oversight failure across regulated sectors.

- Concentration risk from embedded AI within broader software platforms is a key supervisory concern, with APRA flagging single-provider dependencies and inadequate contingency planning as immediate priorities.

- APRA warned that frontier AI models could amplify adversarial cyber threats, with Deloitte Australia reportedly predicting a 20-30% rise in AI-driven cyber incidents, requiring faster detection and remediation cycles from regulated institutions.

- Australia's principles-based regulatory posture may not persist, with the Assistant Treasurer reportedly hinting at potential CPS amendments if governance gaps continue, signalling this supervisory moment is the start of a longer arc of increasing regulatory intensity.

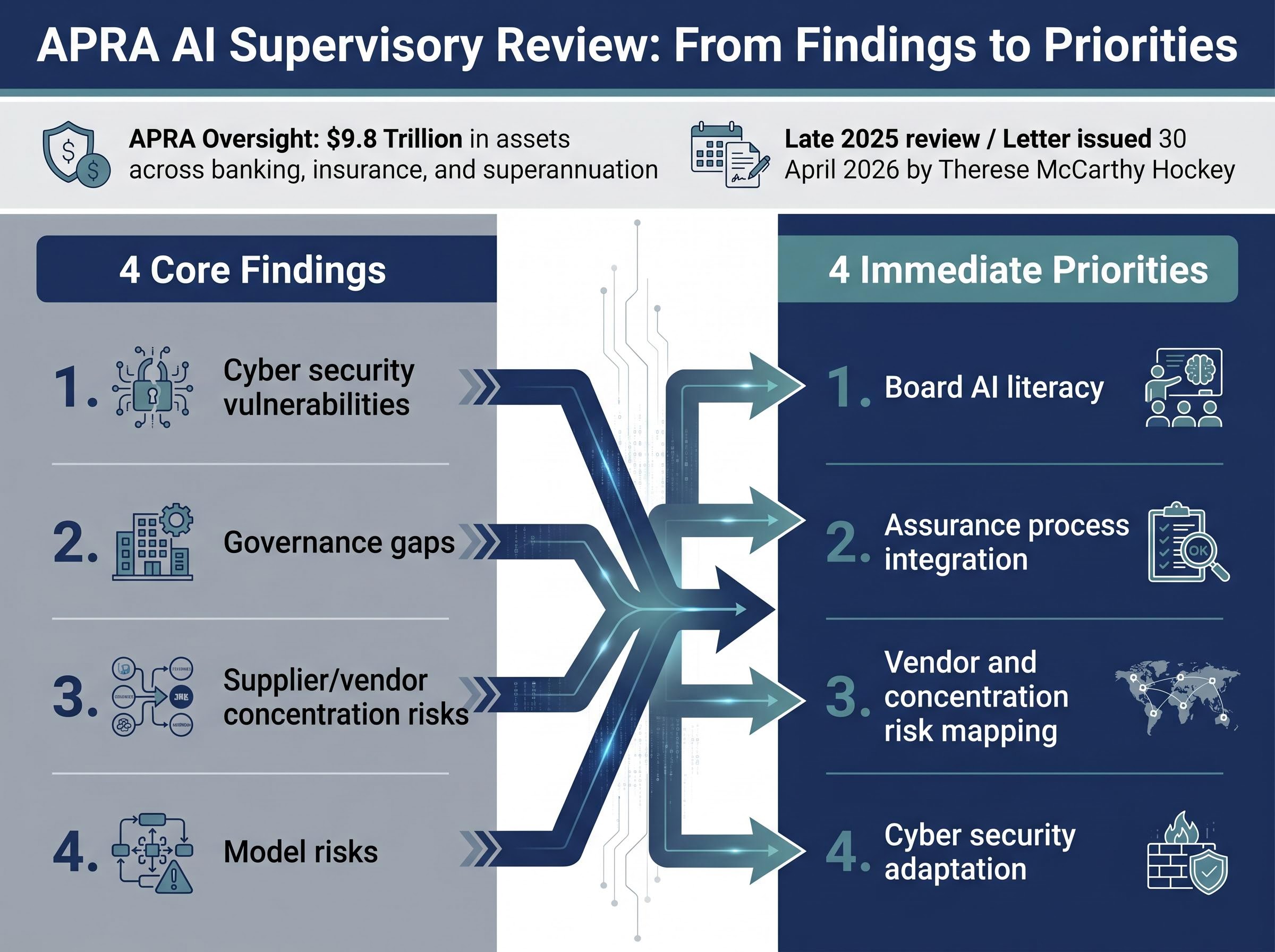

The Australian Prudential Regulation Authority (APRA) oversees $9.8 trillion in assets across the country’s banking, insurance, and superannuation sectors. Its letter to industry on 30 April 2026 carried an unambiguous message: the governance frameworks protecting those assets are not keeping pace with the speed at which artificial intelligence is being deployed inside regulated institutions. The letter sets out the findings of APRA’s late 2025 supervisory review and marks a sharp escalation in tone. No new rules have been introduced, but the regulator has made clear that existing prudential standards now carry elevated expectations when applied to AI. For boards, risk officers, and compliance teams, this is a direct accountability signal. What follows is a breakdown of what APRA found, which specific weaknesses are in the regulator’s crosshairs, what institutions are expected to fix under current standards, and how Australia’s supervisory posture compares with peer regulators internationally.

The letter shows APRA applying its AI risk management expectations across the full breadth of Australia’s regulated financial system, with boards, not technology teams, now carrying explicit accountability for the governance gaps identified.

What APRA’s supervisory review actually found

APRA’s review was not a targeted probe of specific firms. It was a sector-wide supervisory assessment conducted in late 2025 across banking, insurance, and superannuation. The 30 April 2026 letter, issued under the authority of APRA Member Therese McCarthy Hockey, set out findings in four core areas:

- Cyber security vulnerabilities linked to AI adoption

- Governance gaps at board and senior management level

- Supplier and vendor concentration risks

- Model risks arising from opaque AI systems

From pilots to production: the deployment gap

The concern is not that institutions are experimenting with AI. It is that they have moved well past experimentation. Entities across all three regulated sectors have shifted from pilot-stage testing into operationally embedded and customer-facing AI deployments. That transition is precisely what makes the governance gap dangerous.

AI capabilities are now frequently embedded inside broader software platforms, reducing transparency over where models are trained, updated, or constrained. APRA’s finding is cumulative: an industry that moved fast and left governance behind.

The three governance failures boards need to understand

APRA’s letter identifies three distinct governance failures that, taken together, describe a compounding deficit rather than isolated process gaps.

- Board-level AI literacy: Boards may be enthusiastic about AI’s potential but lack the technical knowledge to meaningfully challenge management on AI-related risk decisions. APRA Member Therese McCarthy Hockey identified this as a central oversight gap.

- Fragmented assurance processes: Current change management and assurance frameworks are frequently siloed, potentially failing to deliver coherent assurance on AI-specific risks across the organisation.

- Multi-domain risk exposure: AI risks simultaneously span operational resilience, cyber and information security, data privacy, and procurement. No single risk lens is sufficient, yet many institutions have not adapted their frameworks to reflect this reality.

APRA found that boards often lack the technical knowledge to effectively challenge management on AI-related risk decisions, leaving governance structures underdeveloped relative to the speed of deployment.

The Financial Services Council (FSC) reportedly welcomed APRA’s approach in a 2 May 2026 statement, urging members to focus on board AI literacy programmes. The Governance Institute of Australia’s CEO has reportedly called for mandatory AI governance training for directors, aligning with APRA’s findings on the board literacy gap.

These three failures are the specific pressure points APRA is expected to probe in future supervisory engagements.

Understanding what prudential standards already require on AI

APRA has not introduced new regulation. That fact is less reassuring than it sounds. It means existing prudential standards are doing heavier lifting than many institutions have assumed, and the interpretive bar has risen materially.

APRA expects AI-related risks to be managed within the current framework covering:

- Information security (CPS 234)

- Operational risk management

- Governance

- Data risk

CPS 234, the prudential standard for information security, is the primary compliance lever. It applies directly to AI-related cyber vulnerabilities and vendor risks. King & Wood Mallesons (KWM) reportedly emphasised in a client alert the need for rigorous application of existing standards, particularly CPS 234, to AI deployments.

APRA’s CPS 234 information security standard establishes binding cyber security requirements that APRA Member Therese McCarthy Hockey has explicitly connected to AI adoption risks, making it the primary compliance instrument through which supervised entities must demonstrate adequate controls over AI-related vulnerabilities.

The principles-based approach places the interpretive burden squarely on institutions. Each entity must determine how existing standards apply to AI deployments in its specific context. APRA’s stated objective remains maintaining ongoing safety and stability of the financial system amid rapid technological change.

What “compliance” looks like in practice now

Practical recommendations from legal and advisory commentary converge on several immediate steps: commissioning third-party AI audits, embedding vendor transparency clauses in procurement contracts, building contingency plans for concentration risk scenarios, and ensuring board minutes demonstrate meaningful technical challenge on AI matters. Allens Linklaters reportedly flagged procurement risks in embedded AI as a priority area, reinforcing the need for contractual safeguards.

Concentration risk and the hidden danger of embedded AI

The governance failures APRA identified sit at the board level. Concentration risk sits deeper, in the operational infrastructure many boards have not yet mapped.

AI capabilities are frequently embedded within broader software platforms and developer tooling rather than deployed as identifiable standalone AI systems. A regulated entity may be relying on a single AI provider across multiple use cases without recognising it, because the AI component was bundled inside a platform the institution selected for other reasons.

APRA found that reduced visibility into embedded AI systems limits entities’ capacity to evaluate and manage AI-related risks, creating a governance blind spot at the operational level.

APRA’s letter specifically flagged single-provider concentration risk and gaps in contingency planning. Allens Linklaters reportedly highlighted procurement risks associated with embedded AI, recommending vendor transparency clauses as a baseline response.

| Risk type | APRA finding | Recommended response |
|---|---|---|
| Concentration risk | Single-provider reliance across multiple use cases with insufficient contingency planning | Vendor mapping, alternative provider identification, and documented contingency plans |
| Embedded-AI opacity | AI capabilities hidden inside broader platforms, reducing institutional visibility | Vendor transparency clauses, procurement governance reviews, and third-party audits |

For procurement and technology risk teams, concentration risk in AI is now an explicitly identified supervisory concern, making vendor mapping and contingency planning an immediate priority.
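As a rough illustration of what vendor mapping could involve, the sketch below groups an inventory of AI use cases by the underlying provider and flags providers that account for a large share of use cases or that lack contingency plans. The record fields, the provider names, and the 50% threshold are all hypothetical assumptions for illustration; APRA's letter, as described here, does not prescribe a method or a threshold.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUseCase:
    name: str                  # business use case (hypothetical examples below)
    platform: str              # software platform the institution procured
    underlying_provider: str   # AI provider embedded behind that platform
    has_contingency_plan: bool


def map_concentration(use_cases, threshold=0.5):
    """Group use cases by underlying AI provider.

    A provider is flagged as concentrated when its share of all use cases
    meets or exceeds `threshold` (an illustrative cut-off, not a standard).
    """
    by_provider = defaultdict(list)
    for uc in use_cases:
        by_provider[uc.underlying_provider].append(uc)

    total = len(use_cases)
    report = {}
    for provider, items in by_provider.items():
        share = len(items) / total
        report[provider] = {
            "share": share,
            "concentrated": share >= threshold,
            "missing_contingency": [
                uc.name for uc in items if not uc.has_contingency_plan
            ],
        }
    return report


# Hypothetical inventory: three platforms chosen for other reasons
# turn out to rely on the same embedded AI provider.
inventory = [
    AIUseCase("customer chatbot", "CRM suite", "Provider A", True),
    AIUseCase("fraud scoring", "payments platform", "Provider A", False),
    AIUseCase("code assistant", "developer tooling", "Provider A", False),
    AIUseCase("document summarisation", "records system", "Provider B", True),
]

report = map_concentration(inventory)
for provider, row in sorted(report.items()):
    print(provider, row)
```

The hard part in practice is likely to be populating the `underlying_provider` field, since that is precisely the information embedded AI tends to obscure; vendor transparency clauses are one way to obtain it.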

How Australia’s approach compares with global AI regulators

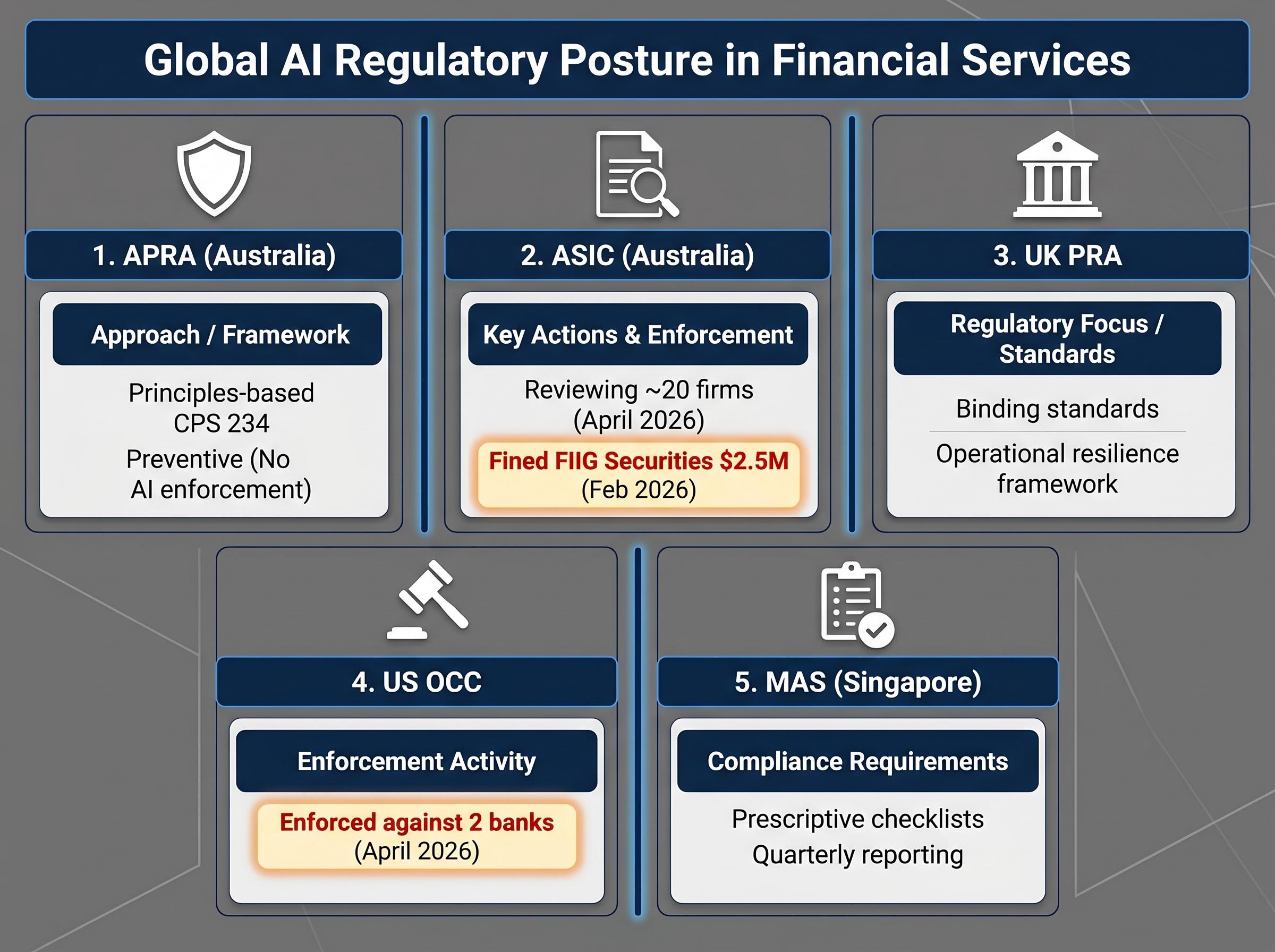

APRA’s supervisory review combined with elevated expectations under existing standards represents a collaborative, principles-based posture. Among peer regulators, that approach is increasingly an outlier.

The Australian Securities and Investments Commission (ASIC) is conducting parallel reviews of approximately 20 firms as of April 2026, focused on explainability and disclosure obligations. Domestically, the regulatory scrutiny is coordinated if not yet codified.

Internationally, the direction is toward binding standards. The UK Prudential Regulation Authority (PRA) has reportedly moved toward AI outsourcing rules under its operational resilience framework, including stress tests for AI in trading. The US Office of the Comptroller of the Currency (OCC) reportedly took enforcement action against two banks for inadequate AI controls in April 2026, signalling a more interventionist stance. The Monetary Authority of Singapore (MAS) has reportedly introduced quarterly reporting requirements for systemic institutions and prescriptive governance checklists.

| Regulator | Approach | Key instrument | Enforcement posture |
|---|---|---|---|
| APRA (Australia) | Principles-based; elevated expectations under existing standards | CPS 234, supervisory letters | Preventive; no AI-specific enforcement to date |
| ASIC (Australia) | Consumer-focused reviews of AI use | Explainability and disclosure reviews | Enforcement on technology risk (FIIG Securities, $2.5 million fine, February 2026) |
| UK PRA | Reportedly binding standards with stress tests | Reported operational resilience framework | Reportedly prescriptive |
| US OCC | Reportedly heightened supervision with enforcement | Reported model risk management bulletins | Reported enforcement against two banks (April 2026) |
| MAS (Singapore) | Reportedly prescriptive checklists and quarterly reporting | Reported AI governance framework | Reportedly ahead on reporting requirements |

Enforcement precedent: ASIC fined FIIG Securities $2.5 million in February 2026 for cyber security failures stemming from a 2023 breach, demonstrating that Australian regulators will enforce on technology risk deficiencies.

The gap between APRA’s principles-based stance and the prescriptive direction of peer regulators may not persist indefinitely.

What APRA’s four immediate priorities mean for boards and risk functions

APRA used the word “step-change” deliberately. Incremental improvements to existing governance processes will not satisfy supervisory expectations. The regulator expects material action across four immediate priorities:

- Board AI literacy: Directors must be equipped to challenge management on AI-related risk decisions, not merely receive briefings.

- Assurance process integration: Fragmented change management and assurance frameworks must be consolidated to deliver coherent AI risk assurance.

- Vendor and concentration risk mapping: Institutions must identify where embedded AI creates single-provider dependencies and document contingency plans.

- Cyber security adaptation: Detection and remediation capabilities must be calibrated to the speed of AI-enabled threats.

APRA has signalled it will continue engagement with government bodies, regulated entities, and international peer regulators to monitor progress. The Assistant Treasurer has reportedly hinted at potential CPS amendments if governance gaps persist. This is the beginning of ongoing supervisory attention, not a one-time signal.

The cyber threat dimension

APRA’s letter explicitly warned that frontier AI models could improve bad actors’ ability to discover and exploit vulnerabilities. The letter cited Anthropic’s Claude as an example of a frontier model with potential adversarial capability. Deloitte Australia has reportedly predicted a 20-30% rise in AI-driven cyber incidents.

AI-amplified cyber threats are materialising faster than defensive postures can adapt. CrowdStrike’s 2026 Global Threat Report recorded adversary breakout times falling to just 29 minutes, a compression that supports APRA’s finding that current detection and remediation cycles are structurally mismatched to the speed of AI-enabled attacks.

The required response is not simply better tools. It is a faster detection and remediation cycle that matches the speed at which AI-enabled threats can identify and exploit weaknesses.
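One simple way to make that mismatch concrete is to compare an institution's detect-plus-contain time against the 29-minute breakout figure quoted above. The sketch below does only that arithmetic; the incident names and timings are hypothetical, and treating breakout time as the benchmark is an illustrative assumption rather than anything APRA has specified.

```python
from datetime import timedelta

# Breakout time quoted above from CrowdStrike's 2026 Global Threat Report.
BREAKOUT_TIME = timedelta(minutes=29)


def response_gap(time_to_detect, time_to_contain, breakout=BREAKOUT_TIME):
    """Return how far the detect-plus-contain cycle overshoots breakout time.

    A positive result means an adversary could begin moving laterally
    before the incident is contained; zero or negative means the cycle
    completes within the breakout window.
    """
    return (time_to_detect + time_to_contain) - breakout


# Hypothetical incident timings: (time to detect, time to contain).
incidents = {
    "phishing-led intrusion": (timedelta(minutes=45), timedelta(minutes=30)),
    "credential stuffing": (timedelta(minutes=10), timedelta(minutes=12)),
}

for name, (detect, contain) in incidents.items():
    gap_minutes = response_gap(detect, contain).total_seconds() / 60
    status = "too slow" if gap_minutes > 0 else "within breakout window"
    print(f"{name}: {status} ({gap_minutes:+.0f} min vs breakout)")
```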

Australia’s regulated sector is now on notice, and the clock is running

APRA has not issued new rules, but it has raised the interpretive bar under existing standards to a point that requires material action from boards and risk functions across every regulated sector. The industry’s muted public response, characterised by the Australian Financial Review on 2 May 2026 as occurring “behind closed doors,” does not reflect the urgency of what the regulator has communicated.

The real activity is occurring in internal audits and board sessions rather than public announcements. Ongoing supervisory engagement, potential CPS amendments, and a government-wide AI regulatory roadmap mean this supervisory moment is the leading edge of a longer arc of increasing regulatory intensity. The gap between supervisory expectation and current institutional posture is real, measurable, and closing.

The AI investment cycle has entered the speculative financing stage under Minsky’s framework, with hyperscalers issuing more than $75 billion in bonds since September 2025. That dynamic sits alongside APRA’s governance concerns and raises a structural question: do the institutions managing these exposures have the board-level literacy to evaluate the risks embedded in their AI-related asset holdings?

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions.

Forward-looking statements regarding regulatory developments and enforcement trends are subject to change based on government, regulator, and market developments.

—

Frequently Asked Questions

What is APRA AI risk management and what does it require from regulated institutions?

APRA AI risk management refers to how the Australian Prudential Regulation Authority expects banks, insurers, and superannuation funds to govern artificial intelligence under existing prudential standards such as CPS 234. Institutions must demonstrate board-level oversight, integrated assurance processes, vendor transparency, and cyber security controls calibrated to AI-enabled threats.

What specific governance failures did APRA identify in its 2025 supervisory review?

APRA identified three core governance failures: boards lacking the technical knowledge to challenge management on AI risk decisions, fragmented assurance frameworks that fail to deliver coherent AI risk oversight, and institutions not adapting their risk frameworks to reflect AI's simultaneous exposure across operational, cyber, data, and procurement domains.

What is CPS 234 and why does it matter for AI compliance in Australia?

CPS 234 is APRA's prudential standard for information security, and it is the primary compliance instrument through which regulated entities must demonstrate adequate controls over AI-related cyber vulnerabilities and vendor risks. APRA has explicitly connected CPS 234 to AI adoption risks without introducing new AI-specific rules.

What practical steps should compliance and risk teams take in response to APRA's April 2026 letter?

Compliance and risk teams should commission third-party AI audits, embed vendor transparency clauses in procurement contracts, map single-provider AI dependencies, build contingency plans for concentration risk scenarios, and ensure board minutes document meaningful technical challenge on AI matters.

How does APRA's approach to AI regulation compare with regulators in the UK, US, and Singapore?

APRA takes a principles-based approach, applying elevated expectations under existing standards without AI-specific rules, while peer regulators are moving toward binding requirements. The UK PRA has reportedly moved toward AI outsourcing rules, the US OCC reportedly took enforcement action against two banks in April 2026 for inadequate AI controls, and MAS has reportedly introduced quarterly reporting and prescriptive governance checklists.