Most Enterprise AI Is Theatre: What Real Adoption Looks Like

Key Takeaways

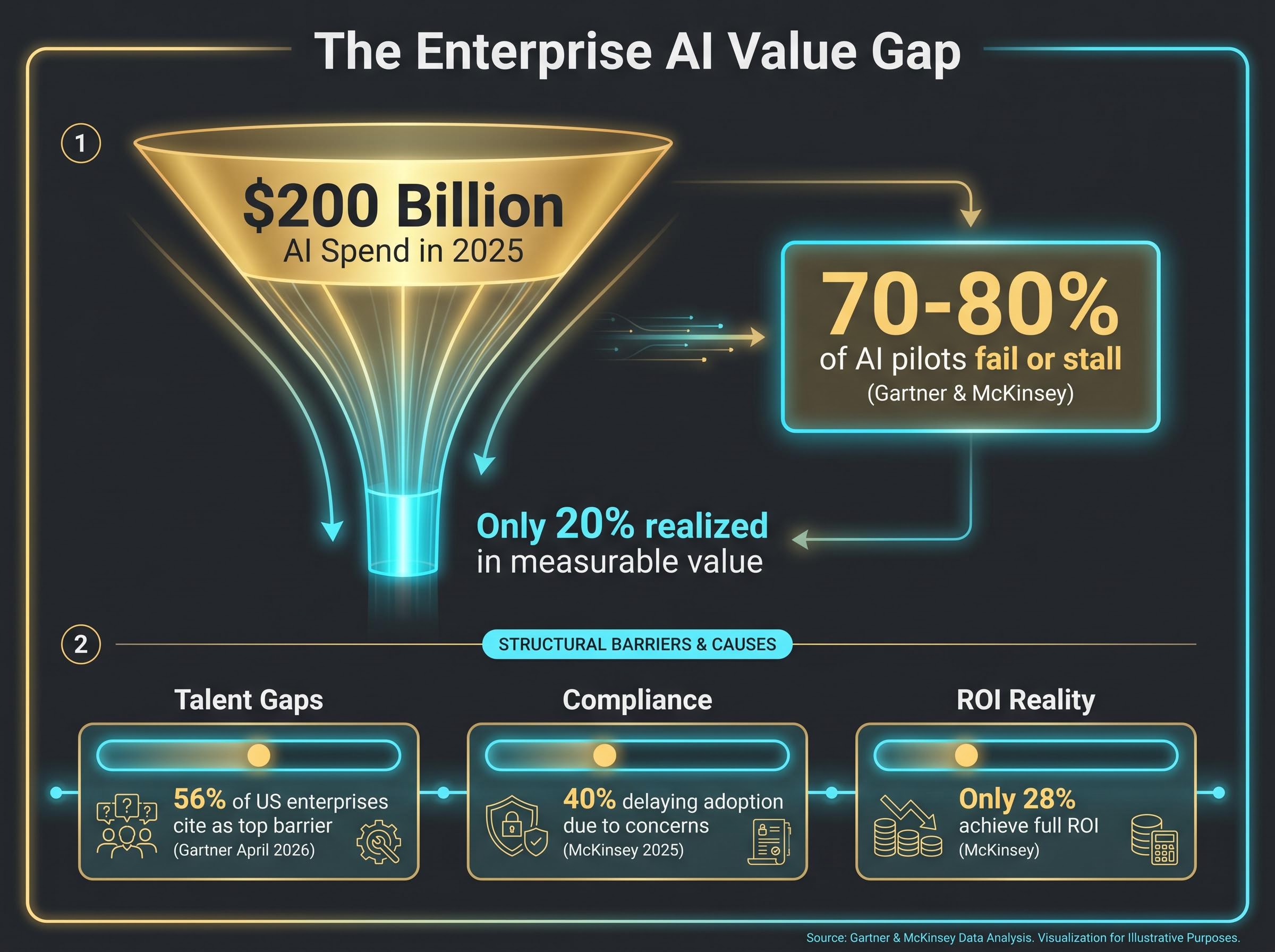

- An estimated 70-80% of enterprise AI pilots fail or stall, with poor data integration identified as the primary cause rather than talent or tooling shortfalls.

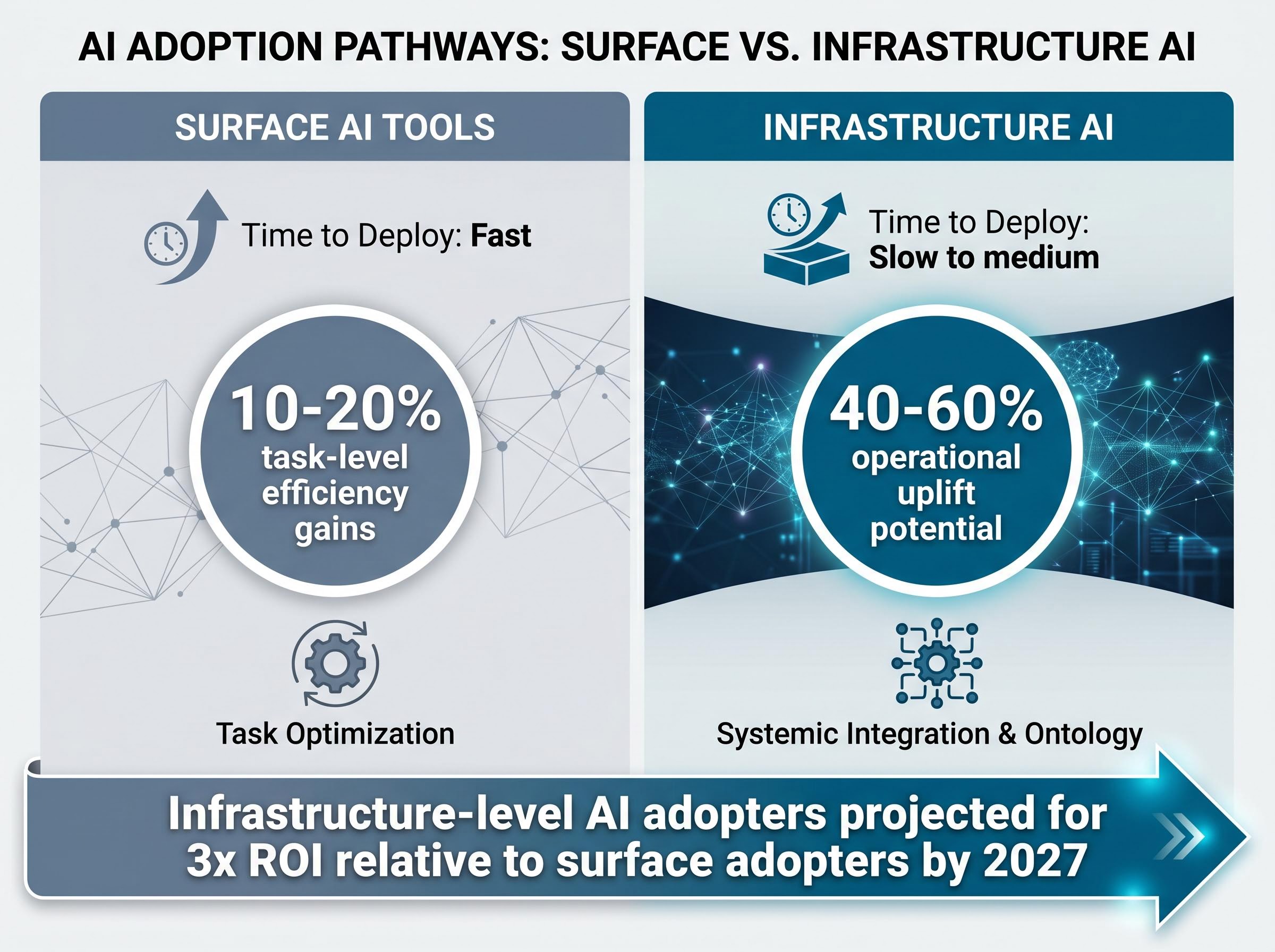

- Infrastructure-level AI adopters are projected to achieve 3x the ROI of surface-level counterparts by 2027, according to converging research from Gartner, McKinsey, and Forrester.

- Only an estimated 12-20% of enterprises achieve meaningful operational AI embedding, with agentic AI deployment sitting at just 17% among enterprises as of April 2026.

- Deep AI integration creates a self-reinforcing compounding dynamic: unified ontologies generate richer data, which trains better models, which enable broader autonomous action and deeper operational embedding over time.

- The architectural commitment decision, choosing at what layer to deploy AI, is becoming increasingly difficult to defer as the ROI gap between leaders and laggards continues to widen.

Roughly 70-80% of enterprise AI pilots fail or stall. The technology itself is not the problem. The implementation layer is. As US enterprises direct an estimated $200 billion into AI spending in 2025, a widening split has emerged between companies treating AI as a productivity overlay and those embedding it into their operational architecture. The distinction is not incremental. Research from Gartner, McKinsey, and Forrester converges on a striking projection: infrastructure-level AI adopters are on track for 3x the return on investment relative to surface-level counterparts by 2027. What follows maps the structural difference between AI that changes how a company operates and AI that produces dashboards no one acts on, drawn from documented enterprise deployments and converging research firm findings.

The $200 billion figure for US enterprise AI spending in 2025 represents only part of the total capital flowing into the AI stack; hyperscaler capex commitments from Amazon, Microsoft, Alphabet, and Meta reached $130 billion in Q1 2026 alone, with full-year combined projections at $725 billion, underwriting the infrastructure layer that enterprise AI deployments ultimately depend on.

Most enterprise AI is solving the wrong problem

It is tempting to equate AI investment with AI transformation. Boardrooms announce chatbot rollouts, copilot integrations, and generative AI pilots. Investors hear “AI-enabled” in earnings calls and assume the work is underway.

The data tells a different story. According to research from Gartner and McKinsey, 70-80% of AI pilots fail or stall, and the leading driver is not implementation quality. It is architectural. McKinsey’s State of AI 2025 report identifies poor data integration, not talent gaps, as the primary failure mechanism. Only an estimated 12-20% of enterprises achieve meaningful operational embedding, and Gartner’s April 2026 findings place agentic AI deployment at just 17% among enterprises.

McKinsey’s State of AI 2025 findings also indicate that only roughly one-third of organisations that adopt AI manage to scale it enterprise-wide.

The distinction is between two fundamentally different categories:

- Surface AI deploys chatbots, copilot layers, and low-code LLM wrappers. It automates discrete tasks. It produces reports. It sits on top of existing systems without restructuring them.

- Deep operational AI embeds ontology-driven reasoning into the data architecture itself. It connects supply chain, finance, production, and risk data into a unified model that enables AI to reason causally across domains, not just pattern-match within silos.

Surface AI is not useless. It yields 10-20% efficiency gains in isolated tasks. But it is structurally limited: it cannot access the decision architecture underneath it.

Practitioners have a shorthand for the surface category: “AI theatre.” Announced loudly, deployed narrowly, and disconnected from the operational workflows where value actually compounds.

The failure rate is not a quality problem. It is an architecture problem.

What infrastructure-level AI actually looks like inside an organisation

If surface AI automates tasks, what does the alternative look like mechanically? A framework synthesised from Gartner, McKinsey, and Forrester research identifies four enabling conditions that distinguish infrastructure-level AI from its surface counterpart:

- Unified data ontology: A shared semantic model that connects operational data across domains so AI can reason across them simultaneously.

- Real-time decision integration: AI outputs connected to actual decision workflows, not dashboards or static reports.

- Agentic capability: AI that can take actions within defined parameters, not just generate recommendations for humans to review.

- Domain depth: Models and logic built around the specific causal structures of the enterprise’s operations, not generic off-the-shelf tools.

Agentic coordination infrastructure is emerging as its own architectural category, distinct from the underlying AI models it orchestrates; Decidr AI’s Agentic Graph framework, which connects enterprise workflows and decision architectures into an interoperable network using a common schema structure, reflects a broader industry bet that durable value will accrue to the orchestration layer as base models commoditise.

Without these four conditions, AI tools produce insights that do not change decisions, or automate tasks that are not bottlenecks.
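As a rough illustration, the four conditions can be treated as a checklist. The sketch below is a simplification under stated assumptions: the class and function names are hypothetical, and the all-or-nothing rule is an assumption for clarity, since the research firms cited do not publish a scoring formula.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class EnablingConditions:
    """The four enabling conditions as yes/no checks (hypothetical names)."""
    unified_data_ontology: bool
    real_time_decision_integration: bool
    agentic_capability: bool
    domain_depth: bool


def missing_conditions(c: EnablingConditions) -> list[str]:
    """Return the names of the conditions that are not yet met."""
    return [f.name for f in fields(c) if not getattr(c, f.name)]


def classify(c: EnablingConditions) -> str:
    """Label a deployment 'infrastructure-level' only if all four conditions hold.

    The all-or-nothing rule is an assumption made for this sketch.
    """
    return "infrastructure-level" if not missing_conditions(c) else "surface"


# Illustrative deployments, not real organisations.
chatbot_rollout = EnablingConditions(False, False, False, False)
embedded_platform = EnablingConditions(True, True, True, True)
partial = EnablingConditions(True, True, False, True)

print(classify(chatbot_rollout))    # surface
print(classify(embedded_platform))  # infrastructure-level
print(missing_conditions(partial))  # ['agentic_capability']
```

In practice each condition is a matter of degree rather than a boolean, so a checklist like this is best read as a diagnostic prompt, not a measurement.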

The ontology layer: why it changes what AI can do

The ontology layer is the least intuitive of the four conditions, and the most consequential. It is a shared semantic model that connects operational data across domains (supply chain, finance, production, human resources) so AI can reason causally rather than pattern-match within individual data streams.

Palantir’s management described its platform function during Q1 2026 earnings commentary in precisely these terms: data architecture, workflow mapping, user permissions, and AI agent oversight, all structured around a unified ontology. The ontology layer is also what creates the switching-cost dynamic. Once embedded, the cost of migration is substantial. That dynamic cuts both ways as a signal: it validates the depth of integration, but it also raises questions about lock-in that investors evaluating any deep-integration platform need to weigh.
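To make the idea of a shared semantic model concrete, here is a minimal sketch in which entities from different domains sit in one graph of typed relationships. Every entity, relation, and function name is invented for illustration; it is not a description of Palantir's or any other vendor's implementation. The code does not perform causal reasoning itself. It only shows why a unified model lets a cross-domain question ("which revenue lines are exposed if this supplier slips?") be answered in a single traversal instead of a series of manual joins across silos.

```python
from collections import defaultdict, deque

# (subject, relation, object) triples; all entities and relations are invented.
TRIPLES = [
    ("supplier:acme", "supplies", "part:fan_blade"),
    ("part:fan_blade", "used_in", "work_order:wo_17"),
    ("work_order:wo_17", "fulfils", "customer_order:co_9"),
    ("customer_order:co_9", "booked_as", "revenue_line:q3_engines"),
    ("supplier:bolt_co", "supplies", "part:bracket"),
]


def build_graph(triples):
    """Index triples as adjacency lists keyed by subject."""
    graph = defaultdict(list)
    for subject, relation, obj in triples:
        graph[subject].append((relation, obj))
    return graph


def downstream(graph, start):
    """Breadth-first walk returning every entity reachable from `start`."""
    seen, queue, order = {start}, deque([start]), []
    while queue:
        node = queue.popleft()
        for _relation, nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order


graph = build_graph(TRIPLES)
impacted = downstream(graph, "supplier:acme")
print(impacted)
```

Here the walk from one supplier crosses supply chain, production, and finance entities in a single pass, which is the property a siloed data estate lacks.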

Three deployments that show the gap in practice

The framework gains clarity when applied to specific enterprise outcomes. Three cases illustrate the same underlying logic, ontology plus real-time decision integration, producing very different results across sectors.

GE Aerospace represents the most viscerally concrete outcome. According to Palantir management commentary during Q1 2026 earnings (a figure not independently confirmed in GE Aerospace’s own earnings filings), the platform deployed for supply chain analysis was credited with a significant increase in engine production output. GE Aerospace separately reported 29% revenue growth in Q1 2026. The AI was embedded at the supply chain ontology layer, connecting production scheduling, parts availability, and logistics data into a unified decision model.

AIG illustrates the risk-management dimension. According to Palantir’s Q1 2026 management commentary, the platform was deployed for risk assessment, insurance pricing, and fraud detection, with the goal of improving underwriting quality and claims outcomes. The AI operated within the underwriting and fraud detection data fabric, not as a reporting tool layered on top of existing workflows.

The telecom case may be the most conceptually significant. A client with approximately 10 million annual customer service calls originally approached Palantir for call-centre automation, a surface AI request. The proposal that emerged was fundamentally different: use the platform to proactively identify dissatisfied customers who had never contacted support. That shift, from automating calls that already happened to finding problems that had not yet surfaced, represents a different theory of where AI value lives.

| Company | Sector | AI Application Layer | Reported Outcome |
|---|---|---|---|
| GE Aerospace | Aerospace / Manufacturing | Supply chain ontology | Significant production increase (per Palantir management commentary; not independently confirmed in GE Aerospace filings) |
| AIG | Insurance | Underwriting and fraud detection data fabric | Improved underwriting quality |
| Telecom client | Telecommunications | Proactive churn prediction model | Shift from cost reduction to revenue preservation |

Note: GE Aerospace and AIG figures are sourced from Palantir management commentary during Q1 2026 earnings. These figures have not been independently verified in those companies’ own earnings filings.

The telecom pivot captures the infrastructure distinction in a single sentence: the client asked to automate calls that already happened; the platform identified dissatisfied customers who had never called.
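The logic of that pivot can be sketched in a few lines. The example below is hypothetical throughout: the field names, signals, and thresholds are assumptions chosen for illustration, not details of the telecom deployment. It shows the shape of the question being asked: filter for customers with zero support contact but negative behavioural signals, a population that call-centre automation never sees.

```python
from dataclasses import dataclass


@dataclass
class Customer:
    customer_id: str
    support_calls: int        # calls to support in the period
    dropped_sessions: int     # hypothetical network-quality signal
    usage_change_pct: float   # hypothetical change in usage vs prior period


def silent_at_risk(customers, max_drops=5, usage_drop_pct=-20.0):
    """Return IDs of customers who never called support but show negative signals.

    The thresholds are illustrative assumptions, not figures from the case.
    """
    return [
        c.customer_id
        for c in customers
        if c.support_calls == 0
        and (c.dropped_sessions > max_drops or c.usage_change_pct <= usage_drop_pct)
    ]


# Invented sample records.
customers = [
    Customer("c1", support_calls=3, dropped_sessions=9, usage_change_pct=-30.0),
    Customer("c2", support_calls=0, dropped_sessions=8, usage_change_pct=-5.0),
    Customer("c3", support_calls=0, dropped_sessions=1, usage_change_pct=2.0),
    Customer("c4", support_calls=0, dropped_sessions=0, usage_change_pct=-45.0),
]

flagged = silent_at_risk(customers)
print(flagged)  # ['c2', 'c4']
```

Customer c1 shows the worst signals but has already called, so call-centre tooling would reach them anyway. The flagged customers are the ones only a cross-domain view of network, usage, and support data can surface.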

Why most organisations are structurally blocked from getting here

Deep AI integration remains rare, and the reasons are structural rather than technological. The bottleneck inverts the common assumption.

According to Gartner’s April 2026 findings, 56% of US enterprises cite talent gaps as the top barrier to meaningful AI deployment. The technology itself is broadly available. The people who can architect, implement, and maintain ontology-level systems are not.

Three categories of friction define the barrier landscape:

- Talent: More than half of US enterprises identify workforce capability as the primary constraint; average AI project cost overruns reach 40%, compounding the skills shortage.

- Data architecture: Without a unified data model, even well-funded AI initiatives cannot connect the causal reasoning chains that infrastructure AI requires. Poor data integration remains the leading technical failure driver across multiple research sources.

- Regulatory compliance: McKinsey’s State of AI 2025 report indicates that 40% of enterprises are delaying AI adoption due to compliance concerns, and the US regulatory environment (including the AI Bill of Rights and California state-level AI transparency requirements) influences 65% of enterprises. Regulated industries face higher compliance friction, yet they also stand to gain the most from deep AI’s risk-management capabilities.

California’s AI training data transparency requirements, which took effect on January 1, 2026 under AB 2013, impose specific disclosure obligations on generative AI developers and represent the most concrete example of state-level regulation already shaping enterprise AI compliance costs in the United States.

Of the estimated $200 billion invested in enterprise AI in 2025, an estimated 20% has been realised in measurable value. The gap is not a technology problem. It is an architecture and capability problem.

McKinsey data reinforces the picture: only 28% of enterprises achieve full ROI on AI projects. The structural barriers that block laggards from catching up are the same dynamics that protect early movers who have already absorbed the implementation cost.

The architecture question every enterprise AI strategy has to answer

The cases and barriers converge on a single structural question: at what layer does an organisation commit to deploying AI?

The decision maps onto a build-versus-buy-versus-embed spectrum. Surface tools deploy quickly and cheaply. Infrastructure-level embedding carries higher upfront cost and complexity, but produces meaningfully different outcome trajectories. Both are legitimate choices; the right answer depends on the problem the organisation is actually trying to solve.

| Integration Approach | Time to Deploy | Typical Outcome Range | Key Trade-off |
|---|---|---|---|
| Surface AI tools | Fast | 10-20% task-level efficiency gains | Limited operational impact |
| Infrastructure AI / ontology platforms | Slow to medium | 40-60% operational uplift potential | High switching costs and implementation complexity |

Palantir’s Q1 2026 results illustrate the revenue dynamics of the infrastructure tier: revenue grew 85% year-on-year to $1.63 billion, and US commercial revenue grew approximately 133% year-on-year, against an estimated 8% growth rate at Accenture over a comparable period. The company’s 90% client retention rate can be read as validation of embedded integration depth, or as evidence of a lock-in effect. Both readings carry analytical weight.

Where the competitive moat actually lives

In commercial contexts, the ontology approach is replicable. Open-source tooling, including Neo4j and Dataiku, can approximate ontology functions. The durable edge for deep-integration platforms comes from deployment speed, domain expertise accumulated across clients, and the agentic workflow layer built on top of the data architecture.

In defence and regulated industries, the picture differs. Classified environment capabilities and compliance posture create differentiation that is materially harder to replicate. Research firm consensus projects infrastructure-level AI adopters on track for 3x ROI relative to surface adopters by 2027, and that gap is most pronounced where regulatory and security requirements limit the competitive field.

The AI adoption split is widening, and the gap compounds

The surface-versus-infrastructure distinction is not a static snapshot. It is a compounding dynamic.

Organisations with deep AI integration accumulate structural advantages that reinforce themselves. Four conditions make this self-reinforcing:

- Better data: Unified ontologies generate richer, cleaner operational data over time.

- Better models: More comprehensive data trains more accurate, domain-specific AI models.

- More agentic capability: Improved models enable broader autonomous action within operational workflows.

- Deeper operational embedding: Each cycle of improvement embeds the platform more deeply into decision architecture, raising the cost of migration for competitors starting from scratch.
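A toy model helps show why a feedback loop of this kind widens a gap rather than merely maintaining it. The sketch below is purely illustrative: the capability index, the gain per cycle, and the feedback rate are invented parameters, not estimates drawn from any of the research cited. With no feedback, capability grows by a fixed amount each cycle. With feedback, each cycle's gain also depends on the capability already accumulated.

```python
def simulate(cycles, base_gain, feedback):
    """Track a capability index over improvement cycles.

    Each cycle adds `base_gain` plus `feedback` times the current index,
    so feedback=0 gives linear growth and feedback>0 gives compounding growth.
    All parameters are illustrative assumptions, not estimates.
    """
    index, history = 1.0, [1.0]
    for _ in range(cycles):
        index += base_gain + feedback * index
        history.append(round(index, 4))
    return history


# Hypothetical adopters: same base gain, only one has the feedback loop.
surface = simulate(cycles=5, base_gain=0.10, feedback=0.0)
embedded = simulate(cycles=5, base_gain=0.10, feedback=0.20)

# Gap between the two trajectories at each cycle.
gaps = [round(e - s, 4) for s, e in zip(surface, embedded)]
print(surface)
print(embedded)
print(gaps)
```

The absolute numbers mean nothing. The point is the shape: the gap between the two trajectories grows every cycle, which is the sense in which the advantage compounds.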

Forrester’s research places enterprise AI adoption at approximately 60%, but a large proportion remains stalled at proof-of-concept. The gap between adoption and value realisation is where the compounding dynamic matters most. Gartner’s recommendations, which emphasise ontology platforms and structured data architectures as the path to “AI industrialisation,” reflect this reality.

The research firm consensus on a 3x ROI trajectory gap by 2027 is not a prediction about technology. It is a projection about the compounding of organisational learning and data architecture investment, advantages that are expensive to replicate from a standing start.

For investors tracking which companies are positioned to capture infrastructure AI spending rather than lose revenue to it, our full explainer on the hardware-software performance split documents the more than 70-percentage-point performance gap between semiconductor equipment and software application indices in 2026, mapping which asset classes benefit from deep AI integration and which face structural repricing as the architecture commitment decision plays out across industries.

The enterprises building durable AI-era competitive advantages are not those deploying the most AI tools. They are the ones that made the architectural commitment earliest and deepest.

The question is not whether to adopt AI, but where to plant it

The surface-versus-infrastructure distinction is the variable that determines whether AI investment produces durable competitive advantage or expensive pilot activity. GE Aerospace, AIG, and the unnamed telecom client are not exceptional outliers. They illustrate a single underlying logic: when AI is embedded at the ontology and decision-integration layer, it restructures how an organisation operates, generates revenue, and manages risk. When it sits on top, it automates tasks.

As the cost of deep AI integration continues to fall and the ROI gap between leaders and laggards widens, the architectural commitment decision becomes increasingly difficult to defer. The relevant question for evaluating any corporate AI strategy is no longer whether the company is using AI. It is at what layer, and for how long.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions. Financial projections referenced are subject to market conditions and various risk factors. Past performance does not guarantee future results.

Frequently Asked Questions

What is infrastructure-level enterprise AI adoption?

Infrastructure-level enterprise AI adoption means embedding AI into the core operational architecture of a business, connecting supply chain, finance, production, and risk data into a unified ontology-driven model, rather than layering AI tools on top of existing systems without restructuring them.

Why do most enterprise AI pilots fail?

According to research from Gartner and McKinsey, 70-80% of enterprise AI pilots fail or stall, and the leading cause is architectural rather than a lack of talent or tooling. Poor data integration is identified as the primary failure mechanism, preventing AI from reasoning causally across business domains.

What is the ROI difference between surface AI and deep operational AI?

Research from Gartner, McKinsey, and Forrester projects that infrastructure-level AI adopters are on track for 3x the return on investment compared to surface-level counterparts by 2027, with surface AI typically yielding only 10-20% task-level efficiency gains versus 40-60% operational uplift potential for ontology-based platforms.

What are the biggest barriers to enterprise AI adoption?

Gartner’s April 2026 findings show that 56% of US enterprises cite talent gaps as the top barrier, while poor data architecture and regulatory compliance concerns (cited by 40% of enterprises as a reason for delaying AI adoption) compound the challenge of scaling AI beyond proof-of-concept.

What is an AI ontology layer and why does it matter for enterprise AI?

An AI ontology layer is a shared semantic model that connects operational data across business domains so AI can reason causally across supply chain, finance, production, and HR simultaneously rather than pattern-matching within isolated data silos. It is considered the most consequential enabling condition for infrastructure-level AI because it creates the decision-integration foundation that surface AI tools cannot access.