Why APRA’s AI Risk Warning Threatens the Whole Financial Sector

Key Takeaways

- APRA's 30 April 2026 letter formally identified AI vendor concentration and frontier AI-enabled cyber threats as externally originating systemic risks beyond the control of any individual institution.

- With $9.8 trillion in assets under APRA's oversight, a disruption cascading through a dominant AI vendor could simultaneously impair fraud detection, credit decisioning, and compliance monitoring across multiple institutions.

- Frontier AI models are accelerating adversarial cyber capabilities, with CrowdStrike's 2026 Global Threat Report recording an 89% surge in AI-enabled attacks and adversary breakout times falling to just 29 minutes.

- APRA is not drafting new standards but expects AI risk to be managed under existing prudential frameworks including CPS 234, with the compliance runway shorter than a fresh rulemaking process would allow.

- No equivalent prudential mechanism currently exists to cap the proportion of the financial sector's AI infrastructure flowing through a single external provider, leaving a structural gap that compliance by individual institutions cannot resolve.

Australia’s financial regulator has told the sector its artificial intelligence risk management is not keeping pace with its AI adoption. In a letter published on 30 April 2026, the Australian Prudential Regulation Authority (APRA), which oversees institutions holding $9.8 trillion in assets on behalf of depositors, policyholders, and superannuation fund members, identified two structural risks that sit outside the control perimeter of any single institution: AI vendor concentration and frontier AI-enabled cyber threats. Neither risk can be resolved through internal governance alone. Both originate at the infrastructure level, where a small number of external providers and rapidly advancing AI models shape the operating environment for Australia’s banks, insurers, and super funds. This analysis examines what these two risks mean in practice, why APRA has distinguished them from conventional governance gaps, and what the implications are for the stability of the regulated financial sector.

Why APRA’s April 2026 findings represent a new category of systemic concern

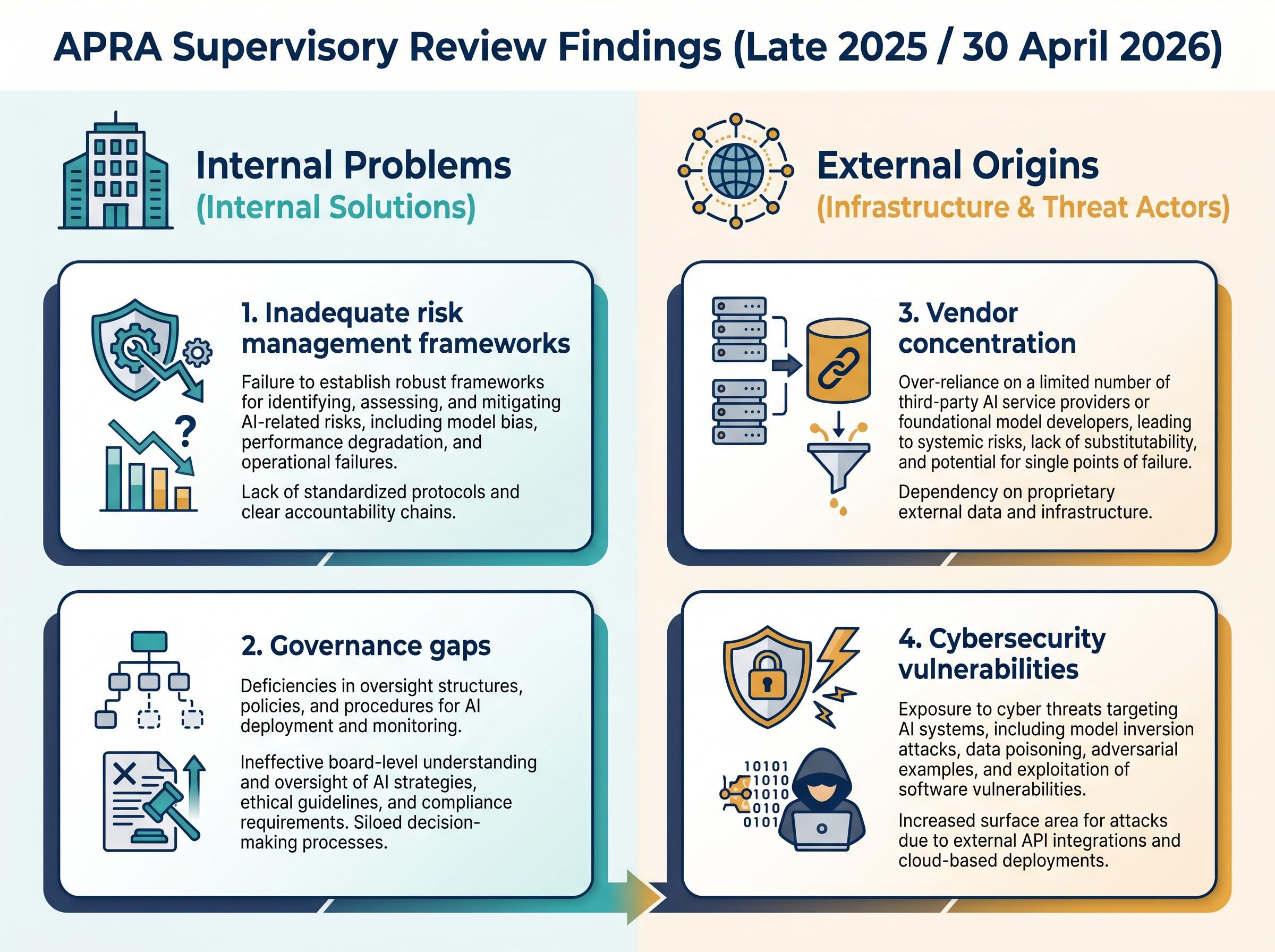

APRA’s supervisory review, conducted across all regulated industries in late 2025 and communicated via formal letter on 30 April 2026, identified four categories of weakness in AI risk management: inadequate risk management frameworks, vendor concentration, cybersecurity vulnerabilities, and governance gaps. Two of these, frameworks and governance, are internal problems with internal solutions. The other two, vendor concentration and cybersecurity vulnerabilities, are different. They originate outside the institution, at the infrastructure and threat-actor level, and that distinction matters.

The institutions under APRA’s supervision span a broad cross-section of the financial system:

- Banks and mutual institutions

- General and reinsurance companies

- Life insurers and private health insurers

- Friendly societies

- The majority of Australia’s superannuation sector

APRA’s stated objective is maintaining the ongoing safety and stability of the financial system amid rapid technological change. The April 2026 letter signals that AI adoption has moved fast enough to test whether existing supervisory frameworks can keep pace.


The $9.8 trillion asset base puts the stakes in context. A risk that compounds across this pool is not a compliance problem for individual boards; it is a systemic stability question. The sections that follow examine each of the two externally originating risks in detail.


What AI vendor concentration risk actually means for financial stability

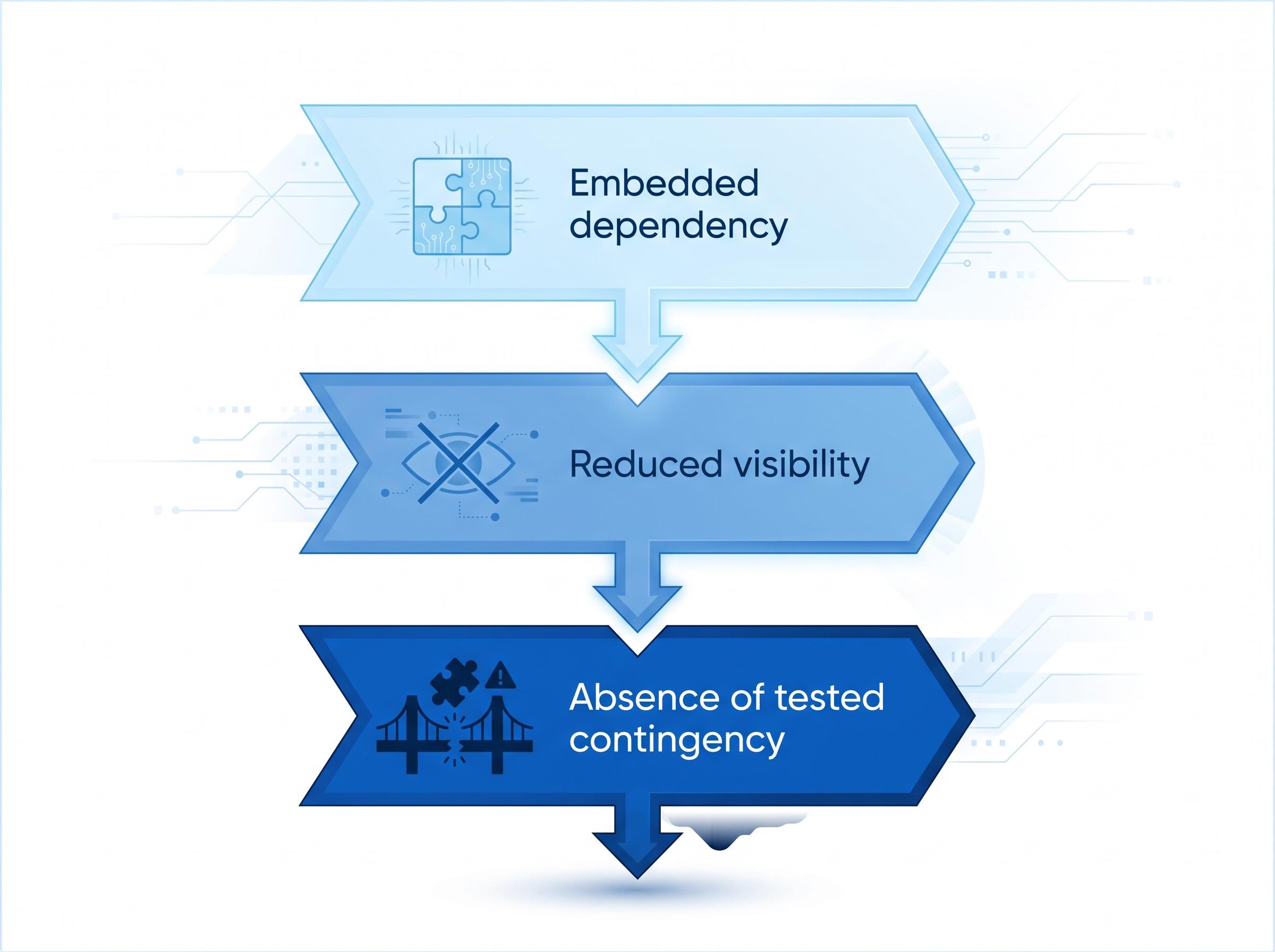

The concentration risk APRA identified operates through a specific three-stage mechanism:

- Embedded dependency. AI capabilities are frequently embedded within broader software platforms and developer tooling, rather than deployed as standalone systems. An institution’s customer relationship management platform, compliance monitoring tool, or fraud detection system may all rely on the same underlying AI provider without that dependency appearing as a distinct line item in a vendor register.

- Reduced visibility. Because AI models are embedded rather than procured independently, institutions face reduced transparency about where and how those models are trained, updated, or constrained. This limits the capacity to evaluate and manage associated risks at the board and risk committee level.

- Absence of tested contingency. APRA found that concentration risk is compounded by the absence of tested exit strategies or fallback arrangements. If a provider fails, exits a market, or changes its model’s behaviour, affected institutions may not have a ready alternative.
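To make the embedded-dependency problem concrete, the sketch below rolls a vendor register up by the AI provider that sits beneath each procured platform. It is a minimal illustration under stated assumptions, not an APRA-prescribed method: the `VendorRecord` fields, the platform and provider names, and the `ai_exposure_by_provider` helper are all hypothetical, and it assumes the institution can obtain the underlying model provider from each software vendor, which is precisely the information that is often missing.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class VendorRecord:
    """One line in a hypothetical vendor register."""
    platform: str                # the product the institution actually procured
    business_function: str       # what the institution uses it for
    ai_provider: Optional[str]   # underlying model provider, or None if undisclosed


def ai_exposure_by_provider(register: List[VendorRecord]) -> Dict[str, List[str]]:
    """Group business functions by the AI provider embedded beneath each platform.

    Records with no disclosed provider are grouped under "UNDISCLOSED" so the
    visibility gap is surfaced rather than silently dropped.
    """
    exposure: Dict[str, set] = defaultdict(set)
    for rec in register:
        provider = rec.ai_provider or "UNDISCLOSED"
        exposure[provider].add(rec.business_function)
    return {provider: sorted(funcs) for provider, funcs in exposure.items()}


# Hypothetical register: three platforms from three different software vendors.
register = [
    VendorRecord("CRM Suite", "customer service", "Provider A"),
    VendorRecord("ComplianceWatch", "compliance monitoring", "Provider A"),
    VendorRecord("FraudShield", "fraud detection", "Provider A"),
    VendorRecord("LoanDesk", "credit decisioning", None),
]

print(ai_exposure_by_provider(register))
```

Three platforms that would appear as separate vendors in a conventional register collapse onto a single AI provider, and the platform with an undisclosed provider is flagged explicitly. The hard part in practice is populating `ai_provider` at all, which is the reduced-visibility stage described above.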

The embedded AI problem: why concentration is harder to see than cloud dependency

Cloud infrastructure concentration attracted regulatory attention years ago because it was visible: institutions knew which cloud provider hosted their workloads. AI concentration is less visible. A compliance platform powered by a specific large language model may not appear in vendor registers as an AI dependency. A customer service tool with embedded generative capabilities may be categorised under its parent software vendor, not the AI provider whose model sits beneath it.

This opacity means boards and risk committees may lack full visibility of their actual AI provider exposure across the institution. For depositors and investors, the practical implication is that a disruption to a single AI provider could simultaneously impair fraud detection, credit decisioning, customer service, and compliance monitoring, not just at one institution, but across multiple institutions if that provider is dominant across the sector.

Deployment patterns across enterprise software help explain this visibility problem. Research tracking infrastructure-level AI adoption estimates that only 12-20% of enterprises achieve meaningful operational AI embedding, which suggests that most AI capabilities reach institutions bundled inside existing platforms rather than as distinctly procured and registered systems.

How frontier AI is changing the calculus for financial sector cyber defence

APRA’s second structural concern is not a future risk. It is a current capability shift. The regulator warned that advanced frontier AI models are anticipated to increase both the likelihood and scale of cyberattacks by enabling malicious actors to identify vulnerabilities more effectively.

Anthropic’s Claude Mythos has been cited as an example of a frontier AI model with the potential to enhance adversarial capabilities, illustrating the class of risk that regulators are tracking as individual frontier systems advance.

APRA’s finding is that the pace at which entities detect and remediate vulnerabilities must increase substantially to match the speed advantage AI provides to threat actors.

The threat elevation operates across three dimensions:

- Speed of vulnerability discovery. Frontier models can scan codebases and network architectures at machine speed, identifying exploitable weaknesses faster than human security teams can patch them.

- Scale of coordinated attack potential. AI-enabled attacks can be orchestrated simultaneously across multiple vectors, overwhelming detection systems designed for sequential threat processing.

- Automation of exploit chains. The capacity to chain multiple exploits together, from initial access to lateral movement to data exfiltration, can be automated using agentic AI capabilities.

The Australian Cyber Security Centre (ACSC) published guidance on agentic AI services around late April 2026, addressing emerging risks consistent with APRA’s framing. The threat environment is described as fast-moving, requiring continuous adaptation of cybersecurity practices rather than periodic review.

CrowdStrike’s 2026 Global Threat Report recorded an 89% surge in AI-enabled attacks and adversary breakout times falling to just 29 minutes, quantifying the detection-to-remediation gap that APRA’s letter describes in qualitative terms.

Understanding why these two risks are structurally linked

Vendor concentration and frontier AI cyber threats may appear as separate line items on a risk register. They are not separate risks. They are a compounding system.

The connection is direct: if a dominant AI vendor is itself compromised by a frontier AI-enabled attack, the blast radius extends to every financial institution relying on that provider. A single point of failure at the infrastructure layer means that an institution’s fraud detection, credit scoring, and compliance monitoring could all degrade simultaneously, not because the institution was attacked, but because its provider was.

This is categorically different from the governance gaps APRA also flagged (board oversight, change management, assurance processes). Those are internal problems with internal solutions. The concentration-plus-cyber risk pairing sits outside the institution’s direct control.

APRA’s letter notes that AI risks span multiple domains at once: operational resilience, cyber and information security, data privacy, and procurement. The table below compares the two structural risks across their defining attributes.

| Risk Type | Key Characteristics | Compounding Factor |
|---|---|---|
| AI Vendor Concentration | External origin; control sits with third-party providers; affects multiple business functions simultaneously | Absence of tested exit strategies or fallback arrangements across the sector |
| Frontier AI Cyber Threats | External origin; control sits with threat actors; offensive capability scales with model advancement | Detection and remediation pace has not kept up with AI-accelerated attack speed |

When these two risks interact, the sector faces a failure mode that no individual institution’s compliance programme can prevent on its own.

What APRA expects regulated institutions to do, and what it is prepared to do if they don’t

APRA is not introducing new prudential standards for AI. Instead, the regulator expects AI risk management to fit within existing frameworks covering:

- Information security (CPS 234)

- Operational risk management

- Governance and board oversight

- Data risk and procurement practices

This distinction matters operationally. The compliance runway is shorter than if new standards were being drafted from scratch. Institutions are expected to manage AI risk under rules that already exist, applied with greater rigour. APRA’s call for a “step change” in AI-related risk management and governance makes the expectation explicit.

On enforcement, APRA has signalled willingness to act if the gaps identified in the 30 April letter persist, though no major enforcement actions had materialised as of early May 2026. Post-letter supervisory engagements are ongoing. The regulator has also indicated it plans to continue engagement with government bodies, regulated entities, and international peer regulators to monitor AI advancements.

Australian financial regulation has shifted meaningfully toward enforcement in recent months: ASIC secured a $10 million Federal Court penalty against Binance in 2026, and purpose-built digital asset legislation was enacted in April 2026. The broader environment in which APRA operates is one of growing cross-regulator willingness to move from guidance to formal sanction.

How Australia’s approach compares to global regulatory peers

APRA’s position on AI vendor concentration is consistent with the direction taken by multiple international regulators during 2025-2026. The Bank of England, the European Central Bank, and US regulators including the OCC and FINRA have all moved to address AI vendor concentration as a systemic risk, though the specific instruments and timelines vary across jurisdictions.

This represents regulatory convergence rather than Australian exceptionalism. Institutions operating across jurisdictions face compounding compliance pressure, as multiple regulators tighten expectations on AI vendor dependency and contingency planning simultaneously.

The Bank of England analysis of AI vendor concentration in financial systems, published in April 2025, explicitly identified the potential for market concentration in AI-related services as a systemic challenge, situating APRA’s April 2026 findings within a broader pattern of regulatory convergence across major financial jurisdictions.


The stability question APRA’s letter leaves open

APRA has named the risks and set expectations. The analytical tension is what comes next. Individual institution compliance with APRA’s expectations can address concentration risk at the firm level, requiring each bank or insurer to map its AI dependencies and develop exit plans. It does not resolve the sector-level exposure if multiple institutions share the same dominant vendor.

The letter does not address whether APRA has tools to manage sector-wide AI vendor concentration the way it manages bank capital or liquidity concentration. Prudential standards can require individual institutions to hold capital buffers or maintain liquidity ratios. No equivalent mechanism exists to limit the proportion of the financial system’s AI infrastructure that flows through a single external provider.
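APRA has not proposed any such metric. Purely as an illustration of what a sector-level view would have to measure, the sketch below aggregates hypothetical exposures across institutions, computes each provider's share, computes a Herfindahl-Hirschman Index (HHI, the sum of squared percentage shares, a standard concentration measure borrowed here from competition analysis), and flags providers above a cap. Everything in it is an assumption: the institution names and counts, the use of "number of AI-dependent functions" as the unit of exposure, and the 40% cap are hypothetical, not regulatory parameters.

```python
from collections import Counter
from typing import Dict, List


def provider_shares(sector_exposure: Dict[str, Dict[str, int]]) -> Dict[str, float]:
    """Each AI provider's share (0-1) of AI-dependent functions across the sector.

    sector_exposure maps institution -> {provider: number of functions relying on it}.
    """
    totals: Counter = Counter()
    for by_provider in sector_exposure.values():
        totals.update(by_provider)  # Counter.update adds counts from a mapping
    grand_total = sum(totals.values())
    return {provider: n / grand_total for provider, n in totals.items()}


def hhi(shares: Dict[str, float]) -> float:
    """Herfindahl-Hirschman Index on a 0-10,000 scale (percentage shares, squared)."""
    return sum((s * 100) ** 2 for s in shares.values())


def over_cap(shares: Dict[str, float], cap: float) -> List[str]:
    """Providers whose sector-wide share exceeds a hypothetical cap (e.g. 0.40)."""
    return sorted(p for p, s in shares.items() if s > cap)


# Hypothetical data: counts of AI-dependent functions per provider at three institutions.
sector = {
    "Bank X": {"Provider A": 6, "Provider B": 2},
    "Insurer Y": {"Provider A": 4, "Provider C": 1},
    "Super Fund Z": {"Provider A": 5, "Provider B": 2},
}

shares = provider_shares(sector)
print(shares, round(hhi(shares)), over_cap(shares, cap=0.40))
```

The design point is that each institution could hold a tested exit plan and Provider A would still carry 75% of sector-wide AI-dependent functions in this toy data. That figure only appears once exposures are aggregated across institutions, which firm-level prudential requirements do not do.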

Commonwealth Bank of Australia’s (CBA) April 2026 deployment of an agentic AI system for real-time fraud detection illustrates the sector’s deepening operational integration with AI at the frontline.

Separately, the Australian Securities and Investments Commission’s (ASIC) Key Issues Outlook 2026, published on 27 January 2026, flags AI-powered cybercrime and risks to public trust in AI-driven financial decisions as priority concerns for the year. The threat landscape APRA described in its April 2026 letter is therefore shared across Australia’s two principal financial regulators.

APRA’s stated objective is maintaining the ongoing safety and stability of the financial system. The open question is whether supervisory tools designed for institutional risk management are sufficient when the risk originates at the infrastructure level, outside Australia, and outside the financial sector entirely.

The $9.8 trillion sector’s stability increasingly depends on infrastructure decisions made by a small number of AI providers headquartered outside Australia. That dependency is now formally on the regulatory record. Whether it can be addressed through existing frameworks remains unresolved.

A regulator has named the risk; now the sector must close the gap

APRA’s 30 April 2026 letter marks a formal shift in how systemic AI risk is categorised in Australia. The regulator has distinguished infrastructure-level, externally originating risks from internal governance gaps, and it has named both with specificity: vendor concentration creates sector-wide single-point-of-failure exposure; frontier AI accelerates the offensive cyber threat in ways institutions cannot control unilaterally.

APRA’s ongoing engagement with international peer regulators suggests the framework will continue to evolve. Institutions that act now on contingency planning and cybersecurity uplift will be better positioned as expectations tighten across jurisdictions.

Readers seeking APRA’s full letter and supervisory expectations can consult the official publication at https://www.apra.gov.au/apra-letter-to-industry-on-artificial-intelligence-ai.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions. Forward-looking statements regarding regulatory developments and institutional responses are subject to change based on evolving policy and market conditions.

Frequently Asked Questions

What is APRA AI risk and why is it significant for Australia's financial sector?

APRA AI risk refers to the Australian Prudential Regulation Authority's formal identification of AI-related threats to financial stability, specifically vendor concentration and frontier AI-enabled cyber threats, affecting institutions managing $9.8 trillion in assets on behalf of depositors, policyholders, and superannuation members.

What is AI vendor concentration risk in the context of APRA's April 2026 letter?

AI vendor concentration risk occurs when multiple financial institutions rely on the same underlying AI provider, often without realising it because AI capabilities are embedded inside broader software platforms, creating a sector-wide single point of failure if that provider is disrupted or compromised.

How does frontier AI change the cybersecurity threat landscape for banks and insurers?

Frontier AI models can scan codebases at machine speed, automate exploit chains, and coordinate attacks across multiple vectors simultaneously. CrowdStrike's 2026 Global Threat Report recorded adversary breakout times falling to just 29 minutes, illustrating the detection-to-remediation gap APRA flagged in its April 2026 letter.

What prudential standards does APRA expect financial institutions to use when managing AI risk?

APRA is not introducing new AI-specific prudential standards; instead it expects institutions to apply existing frameworks covering information security under CPS 234, operational risk management, governance and board oversight, and data risk and procurement practices, with greater rigour than previously applied.

How does APRA's approach to AI vendor concentration compare to other global regulators?

APRA's position is consistent with regulatory convergence underway across major jurisdictions, including the Bank of England, European Central Bank, OCC, and FINRA, all of which have moved to address AI vendor concentration as a systemic risk during 2025-2026, though specific instruments and timelines vary.