Nvidia at 24x or Broadcom at 37x: Two Ways to Bet on AI Chips

Key Takeaways

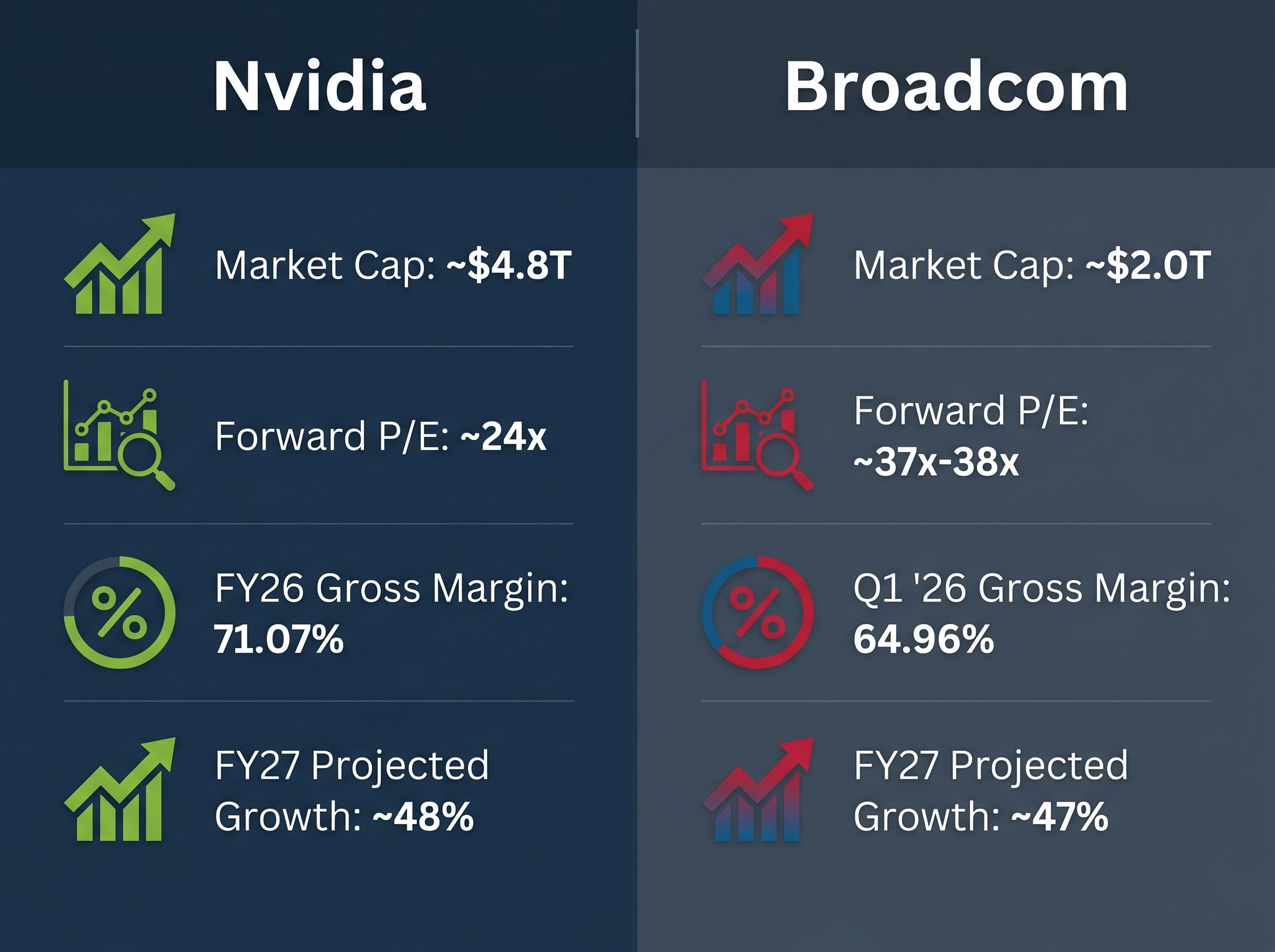

- Nvidia generated $193.7 billion in data centre revenue in FY2026 and trades at approximately 24x forward earnings, a notable compression from prior peak multiples despite continued revenue acceleration.

- Broadcom's AI chip revenue surged 106% year over year in Q1 FY2026, supported by locked-in multi-year contracts with Google and Meta extending through at least 2029.

- The two companies are not direct competitors; Nvidia's GPU deployments and Broadcom's custom ASICs frequently coexist within the same hyperscaler data centre, making a portfolio allocation to both analytically defensible.

- Broadcom's forward P/E of approximately 37x reflects the market pricing in contract-backed revenue certainty, while Nvidia's lower 24x multiple signals de-rating on competitive concerns despite strong business execution.

- Hyperscaler in-housing, led by Amazon's Trainium programme (which has already won chip orders from Meta and OpenAI), represents a structural risk that investors in either name should monitor closely.

Nvidia generated $193.7 billion in data centre revenue in fiscal year 2026. Broadcom’s AI chip revenue surged 106% year over year in Q1 FY2026 alone. These are not rivals in the traditional sense; they are two companies each winning the AI hardware buildout in entirely different ways, and that distinction carries significant investment implications. As of May 2026, both companies carry near-unanimous analyst buy ratings, both are executing against multi-year product roadmaps, and both are benefiting from the same structural tailwind: hyperscale cloud providers spending aggressively on AI infrastructure. But the investment case for each differs meaningfully in terms of revenue visibility, moat durability, valuation, and risk profile. This analysis unpacks how Nvidia’s GPU-and-CUDA flywheel compares to Broadcom’s locked-in custom ASIC model, what the divergence in forward multiples signals about market conviction, and how investors should think about positioning in both names.

Two companies, two completely different bets on how AI compute scales

Nvidia sells general-purpose GPU compute at massive scale. Broadcom co-designs purpose-built silicon for specific hyperscaler workloads. In most cases, these companies are not competing for the same purchase order; Nvidia’s GPU deployments and Broadcom’s ASICs often coexist within the same hyperscaler data centre.

The numbers reflect that structural separation. Nvidia posted $215.9 billion in total revenue for FY2026, with $193.7 billion from data centre alone. Broadcom’s AI revenue hit $8.4 billion in Q1 FY2026, growing 106% year over year, with analyst projections pointing toward $100 billion-plus in annual custom chip revenue by 2027.
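For readers who want to check the scale difference themselves, the following is a minimal Python sketch using only the figures quoted above. The year-ago Broadcom figure is backed out from the stated growth rate and is not a number reported in this article; the function and variable names are illustrative.

```python
# Figures quoted in the article (USD billions).
NVIDIA_TOTAL_REVENUE_FY2026 = 215.9
NVIDIA_DATA_CENTRE_REVENUE_FY2026 = 193.7
BROADCOM_AI_REVENUE_Q1_FY2026 = 8.4
BROADCOM_AI_GROWTH_YOY_PCT = 106.0


def share_of_total(segment: float, total: float) -> float:
    """Return a segment's share of total revenue as a percentage."""
    return segment / total * 100


def implied_prior_value(current: float, growth_pct: float) -> float:
    """Back out the year-ago figure implied by a year-over-year growth rate."""
    return current / (1 + growth_pct / 100)


data_centre_share = share_of_total(
    NVIDIA_DATA_CENTRE_REVENUE_FY2026, NVIDIA_TOTAL_REVENUE_FY2026
)
# Derived value, not a reported one: assumes the 106% applies to the $8.4B figure.
implied_year_ago_ai_revenue = implied_prior_value(
    BROADCOM_AI_REVENUE_Q1_FY2026, BROADCOM_AI_GROWTH_YOY_PCT
)

print(f"Nvidia data centre share of revenue: {data_centre_share:.1f}%")
print(f"Implied Broadcom AI revenue a year earlier: ${implied_year_ago_ai_revenue:.1f}B")
```

On these inputs, data centre accounts for roughly 90% of Nvidia's total revenue, while Broadcom's quarterly AI revenue has approximately doubled from an implied base of around $4 billion.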

The comparison, then, is not about market share. It is about revenue model and moat type.

| Dimension | Nvidia | Broadcom |
|---|---|---|
| Primary AI revenue model | General-purpose GPU sales (Blackwell, Vera Rubin) | Custom ASIC co-development for hyperscalers |
| Key customers | Broad hyperscaler and enterprise base | Google, Meta, and select hyperscalers |
| Revenue visibility | High, but tied to GPU demand cycle | Very high; locked-in multi-year contracts |
| Competitive moat | CUDA ecosystem, full-stack AI platform | Deep co-design relationships, switching costs |
| Key risk | Hyperscaler in-housing, export controls | Customer concentration, long design cycles |

Three structural dimensions the table cannot fully capture:

- Software lock-in depth: Nvidia’s CUDA ecosystem has years of developer tooling embedded across thousands of enterprise customers; Broadcom’s lock-in is hardware-specific and tied to individual hyperscaler architectures

- Design cycle length: Broadcom’s ASIC co-development spans multiple years per customer, creating longer revenue visibility but slower market responsiveness

- Addressable market breadth: Nvidia sells to virtually any organisation deploying AI compute; Broadcom’s custom model limits its addressable market to a handful of the world’s largest technology companies

Investors who frame this as a zero-sum competition will misread both stocks. Wall Street can be simultaneously bullish on both without contradiction precisely because they occupy different lanes.

The scale of hyperscaler AI capital expenditure underpinning both companies’ revenue trajectories is itself a moving target: Amazon, Microsoft, Alphabet, and Meta spent $130 billion in Q1 2026 alone, with full-year 2026 combined guidance reaching approximately $725 billion and a $1 trillion annual run rate projected for 2027.
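To put the capex figures in perspective, the short Python sketch below works through the arithmetic using only the numbers quoted above. It assumes the Q1 figure and the full-year guidance cover the same four companies and the same calendar year, which the underlying company disclosures may not align on exactly; the names used are illustrative.

```python
# Combined hyperscaler capex figures quoted in the article (USD billions).
Q1_2026_CAPEX = 130.0
FY2026_GUIDANCE = 725.0
QUARTERS_IN_YEAR = 4


def annualised(quarterly: float) -> float:
    """Annualise a single quarter by assuming it repeats four times."""
    return quarterly * QUARTERS_IN_YEAR


def required_quarterly_average(
    full_year: float, spent_so_far: float, quarters_elapsed: int
) -> float:
    """Average spend per remaining quarter needed to reach the full-year figure."""
    remaining_quarters = QUARTERS_IN_YEAR - quarters_elapsed
    if remaining_quarters <= 0:
        raise ValueError("no quarters remain in the year")
    return (full_year - spent_so_far) / remaining_quarters


q1_run_rate = annualised(Q1_2026_CAPEX)
avg_remaining = required_quarterly_average(FY2026_GUIDANCE, Q1_2026_CAPEX, 1)
step_up_pct = (avg_remaining / Q1_2026_CAPEX - 1) * 100

print(f"Q1 annualised run rate: ${q1_run_rate:.0f}B")
print(f"Average needed per remaining quarter: ${avg_remaining:.1f}B")
print(f"Step-up versus Q1: {step_up_pct:.1f}%")
```

Under those assumptions, Q1 annualises to about $520 billion, so reaching roughly $725 billion would require average quarterly spend of about $198 billion across the remaining three quarters.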

What Nvidia’s $215.9 billion year reveals about the durability of the GPU moat

The headline revenue figure is extraordinary, but the mechanism that produced it is what matters to investors evaluating durability. Nvidia’s CUDA ecosystem, the software layer that sits on top of its GPUs, represents years of accumulated developer familiarity, tooling, and integration that would take any competitor years to replicate. Every enterprise AI deployment built on CUDA deepens the switching cost.

That software lock-in translates directly into pricing power. Nvidia reported a gross margin of 71.07% in FY2026, a level that reflects how little pricing pressure the company faces from alternative compute architectures. The market cap sits at approximately $4.8 trillion, with a 52-week range of $110.82 to $216.82.

Analyst consensus: 56 of 59 analysts polled by S&P Global in April 2026 rated Nvidia a buy or strong buy, with a 12-month price target implying approximately 24% upside from the survey-period price.

The forward P/E has compressed to approximately 24x from the roughly 32x level cited in earlier 2026 analyst data, even as revenue growth has accelerated. That compression changes the risk-reward calculus for new investors: the market has de-rated the stock on competitive concerns while the business continues to execute.

The Vera Rubin platform and what comes after Blackwell

Nvidia launched the Vera Rubin platform at CES in January 2026, comprising seven new chips designed for agentic AI workloads. The platform represents the next generational step in Nvidia’s cadence following Hopper and Blackwell, with full production now underway.

Major hyperscalers have been identified as early adopters for AI training deployments on the new architecture. Analyst consensus projects approximately 48% revenue growth for FY2027, with average estimates around $315 billion, suggesting the market expects Vera Rubin to sustain the company’s growth trajectory through the next cycle.
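The growth rate and the dollar estimate above can be cross-checked against the FY2026 revenue base. The Python sketch below is a simple consistency check rather than a forecast; it uses only figures quoted in this article, and the helper names are illustrative.

```python
# Figures quoted in the article (USD billions and percent).
NVIDIA_REVENUE_FY2026 = 215.9
CONSENSUS_REVENUE_FY2027 = 315.0
CONSENSUS_GROWTH_FY2027_PCT = 48.0


def implied_growth_pct(base: float, estimate: float) -> float:
    """Growth rate implied by moving from a base figure to an estimate."""
    return (estimate / base - 1) * 100


def implied_revenue(base: float, growth_pct: float) -> float:
    """Revenue implied by applying a growth rate to a base figure."""
    return base * (1 + growth_pct / 100)


growth_from_estimate = implied_growth_pct(
    NVIDIA_REVENUE_FY2026, CONSENSUS_REVENUE_FY2027
)
revenue_from_growth = implied_revenue(
    NVIDIA_REVENUE_FY2026, CONSENSUS_GROWTH_FY2027_PCT
)

print(f"Growth implied by the $315B estimate: {growth_from_estimate:.1f}%")
print(f"Revenue implied by 48% growth: ${revenue_from_growth:.1f}B")
```

The two quoted figures land within a few percentage points of each other (about 46% versus 48%, or roughly $315 billion versus $320 billion). The gap may reflect rounding or differences between estimate sources; the article does not specify which.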

Understanding ASICs: why Broadcom’s custom chip model is structurally different from GPU competition

An application-specific integrated circuit (ASIC) is a chip designed for a single, specific workload rather than general-purpose compute. Where Nvidia builds GPUs that can run any AI model, Broadcom works directly with a hyperscaler customer to co-design silicon optimised for that customer’s proprietary workloads. The result is lower cost per inference at scale, reduced power consumption for targeted tasks, and reduced dependence on external GPU suppliers.

The technical case for why hyperscalers pursue both architectures simultaneously is well documented in industry analysis of GPU versus ASIC tradeoffs for LLM inference, with research from McKinsey projecting that ASICs could handle up to 90% of AI workloads as inference displaces training as the dominant compute task at scale.

The co-development lifecycle follows a predictable three-step pattern:

- Design brief and specification: The hyperscaler defines the workload requirements; Broadcom engineers the chip architecture to meet them

- Multi-year development and tape-out: The chip moves through design, fabrication, and testing over a period typically spanning two to three years

- Production and multi-generation refresh cycle: Once deployed, the customer and Broadcom iterate on subsequent generations, deepening the integration with each cycle

Once a hyperscaler has invested years of engineering into a custom chip with Broadcom, switching to an alternative supplier would require restarting the entire design process. That creates structural switching costs that function differently from Nvidia’s software lock-in but are equally durable.

CEO Hock Tan noted during Broadcom’s Q1 FY2026 earnings call that agentic AI and generative AI are driving demand for VMware cloud infrastructure, reinforcing the breadth of Broadcom’s AI exposure beyond custom silicon alone.

Broadcom’s Q1 FY2026 total revenue grew 29% year over year. Gross margin stood at 64.96%, with a dividend yield of 0.60%. Readers who understand why ASICs create durable customer relationships will understand why the company’s multi-year contracts are worth more than their headline dollar value.

Broadcom’s locked-in partnerships with Google and Meta redefine what revenue visibility means

In April 2026, the abstract ASIC model became concrete through two partnership expansions:

- Meta: Multi-year co-development agreement extended through 2029, including 2nm AI accelerators for Meta’s MTIA (Meta Training and Inference Accelerator) chips

- Google: Long-term agreement to supply future generations of custom AI chips, extending Broadcom’s existing deep collaboration on Tensor Processing Units (TPUs)

These contracts are not simply revenue events. They represent structural commitments from the world’s largest AI spenders to Broadcom’s roadmap through the end of the decade. Analyst consensus projects approximately 64% revenue growth for FY2026 and approximately 47% for FY2027, with longer-term projections pointing toward $100 billion-plus in annual custom chip revenue by 2027.

The locked-in nature of this revenue stands in contrast to Nvidia’s exposure to demand cycle fluctuations, where a slowdown in hyperscaler GPU capital expenditure spending could materially affect quarterly results.

S&P Global data shows 44 of 47 analysts rated Broadcom a buy or strong buy, with an average price target approximately 14% above the prevailing share price at the time of the survey. The market cap sits at approximately $2.0 trillion.

What a 37x forward multiple on Broadcom actually reflects

Broadcom’s forward P/E of approximately 37x-38x appears elevated next to Nvidia’s compressed 24x. The gap, however, reflects revenue predictability rather than speculative growth. The market is pricing Broadcom’s multi-year contract base and the near-certainty of its design-win revenue against Nvidia’s larger but more cycle-exposed top line.
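One rough way to compare the two multiples is to express them as earnings yields and to scale each by expected growth. The Python sketch below does this with the figures quoted in this article. It rests on a loud assumption: it uses projected FY2027 revenue growth as a stand-in for earnings growth, whereas a conventional PEG ratio uses earnings growth, so the output is illustrative only and is not the method used by the analysts cited here.

```python
# Approximate figures quoted in the article.
FORWARD_PE = {"Nvidia": 24.0, "Broadcom": 37.0}
# Assumption: FY2027 revenue growth used as a proxy for earnings growth.
PROJECTED_GROWTH_PCT = {"Nvidia": 48.0, "Broadcom": 47.0}


def earnings_yield_pct(forward_pe: float) -> float:
    """Forward earnings yield: the inverse of the forward P/E, in percent."""
    return 100 / forward_pe


def growth_adjusted_multiple(forward_pe: float, growth_pct: float) -> float:
    """PEG-style ratio: forward P/E divided by the growth rate in percent."""
    return forward_pe / growth_pct


def premium_pct(higher: float, lower: float) -> float:
    """Percentage by which one multiple exceeds another."""
    return (higher / lower - 1) * 100


broadcom_premium = premium_pct(FORWARD_PE["Broadcom"], FORWARD_PE["Nvidia"])
print(f"Broadcom multiple premium over Nvidia: {broadcom_premium:.1f}%")

for name, pe in FORWARD_PE.items():
    ratio = growth_adjusted_multiple(pe, PROJECTED_GROWTH_PCT[name])
    print(
        f"{name}: earnings yield {earnings_yield_pct(pe):.2f}%, "
        f"growth-adjusted multiple {ratio:.2f}"
    )
```

On these inputs Broadcom's multiple sits a little over 50% above Nvidia's while projected growth is nearly identical, which is consistent with the argument that the gap is paying for predictability rather than faster growth.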

Jefferies raised its Broadcom price target to $500 in January 2026. Morgan Stanley raised its target to $443 from $409 in December 2025. Some analysts characterise Broadcom as undervalued relative to its growth trajectory despite the elevated headline multiple.

The threat no one on Wall Street is fully pricing: hyperscaler in-housing and the Amazon signal

Amazon quintupled its AI chip market share in 2026, driven by its Trainium chip programme and triple-digit growth in AWS AI services.

That statistic complicates the bullish consensus on both Nvidia and Broadcom. In April 2026, Meta signed a deal for millions of Amazon AI CPUs, a specific customer defection signal that illustrates the multi-vendor diversification strategy large hyperscalers are now executing. Meta is simultaneously a Broadcom ASIC partner and an Amazon Trainium customer.

The competitive threat extends further than internal deployment. The Amazon Trainium external sales programme is expected to begin within two years, with OpenAI already partnered on Trainium workloads and Anthropic committing to purchase $100 billion worth of chips. Together, these moves signal that Amazon intends to become a direct third-party competitor to both Nvidia and Broadcom.

The competitive pressure comes from several directions:

- Amazon Trainium: The most advanced in-house programme, with demonstrated customer wins including Meta; currently the most material threat to both Nvidia and Broadcom

- Microsoft custom silicon: Part of a broader structural trend of large cloud providers reducing dependence on Nvidia’s general-purpose GPUs; directionally significant

- Intel Xeon 6: Launched to recapture data centre compute share; a secondary but relevant pressure point on Nvidia’s dominance

- AMD: Remains a credible alternative in AI compute, particularly for workloads where Nvidia’s premium pricing creates switching incentives

Citi lowered its Nvidia price target to $210 from $220 in September 2025, citing intensifying AI chip rivalry. A Seeking Alpha analyst downgraded Nvidia to Hold in January 2026. Neither Nvidia nor Broadcom can assume captive demand from the largest cloud buyers indefinitely.

For investors wanting to stress-test the bull case before sizing a position in either name, our deep-dive into the hardware bubble risk scenario examines how escalating inference costs, generative AI profitability pressures, and potential hyperscaler capex deceleration could transmit into semiconductor stock corrections, with specific analysis of what forward guidance from Microsoft, Amazon, Alphabet, and Meta would need to show to validate continued spending.

Nvidia at 24x or Broadcom at 37x: framing the choice for US investors in May 2026

The valuation gap between the two companies is the most actionable data point in this comparison. Nvidia’s 24x forward P/E represents a significant de-rating from its prior peak multiple, making it more accessible to value-sensitive investors. Broadcom’s 37x reflects the premium the market assigns to contract-backed revenue predictability.

| Investor Profile | Nvidia | Broadcom |
|---|---|---|
| Time horizon | 12-18 months (growth capture) | 2-4 years (contract duration) |
| Growth preference | ~48% FY2027 projected growth, $315B target | ~47% FY2027 projected growth, locked-in base |
| Risk tolerance | Higher (cycle-exposed demand) | Lower (contract-backed visibility) |
| Valuation sensitivity | ~24x forward P/E, $4.8T market cap | ~37x forward P/E, $2.0T market cap |
| Portfolio role | Scale and ecosystem exposure | Revenue durability and moat depth |

Jefferies raised both price targets in January 2026: Nvidia to $275, Broadcom to $500. Given that both companies are net beneficiaries of the same AI infrastructure buildout, a portfolio allocation to both is analytically defensible. Understanding what each multiple is actually paying for determines whether either stock is mispriced at current levels.

Investors weighing whether 24x represents genuine value or a structural discount may find our dedicated guide to Nvidia’s forward valuation and capital return thesis useful. It examines how Bank of America analysts argue that a dividend yield increase to 0.5%-1.0% could expand the institutional buyer base and catalyse a multiple re-rating independent of the AI demand story.

The AI hardware buildout has two winners in 2026, not one

Nvidia and Broadcom are not in a zero-sum competition. Both are structurally positioned to benefit from the multi-year AI infrastructure investment cycle, but through meaningfully different mechanisms. Nvidia offers unmatched scale and ecosystem depth with a compressed valuation. Broadcom offers revenue visibility and locked-in hyperscaler relationships at a premium multiple.

The risk both share is real: hyperscaler in-housing and the diversification trend represented by Amazon and Meta’s multi-vendor strategies are structural headwinds that investors in either name should monitor closely.

Investors may benefit from examining their existing AI hardware exposure, assessing whether it is weighted toward GPU cycle risk or ASIC contract durability, and considering whether the current valuation gap between the two companies represents a relative value opportunity.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions. Past performance does not guarantee future results. Financial projections are subject to market conditions and various risk factors.

Frequently Asked Questions

What is the difference between Nvidia and Broadcom in AI chips?

Nvidia sells general-purpose GPUs used across a broad range of AI workloads, while Broadcom co-designs custom ASICs built specifically for individual hyperscaler customers like Google and Meta, meaning the two companies largely occupy different roles within the same data centre.

What is an ASIC and why do hyperscalers use them alongside GPUs?

An application-specific integrated circuit (ASIC) is a chip engineered for one specific workload rather than general-purpose compute, offering lower cost per inference and reduced power consumption at scale; hyperscalers use both GPUs and ASICs because each architecture serves different tasks within large AI deployments.

How does Broadcom's revenue visibility compare to Nvidia's?

Broadcom's revenue is backed by multi-year co-development contracts with customers including Google and Meta extending through 2029, giving it high predictability; Nvidia's revenue, while larger in absolute scale at $215.9 billion for FY2026, is more exposed to fluctuations in the GPU demand cycle.

What forward P/E multiples are Nvidia and Broadcom trading at in 2026?

As of May 2026, Nvidia trades at approximately 24x forward earnings, a significant compression from its earlier peak multiple, while Broadcom trades at approximately 37x-38x, reflecting the premium the market assigns to its contract-backed revenue predictability.

What is the biggest risk facing both Nvidia and Broadcom in 2026?

The most material shared risk is hyperscaler in-housing, where large cloud providers including Amazon are building and selling their own custom AI chips; Amazon quintupled its AI chip market share in 2026 through its Trainium programme and has already attracted customers including Meta and OpenAI.