The CPU-to-GPU Ratio Shift Repricing AI Semiconductor Stocks

Key Takeaways

- Three simultaneous May 2026 earnings reports from Arm, AMD, and Intel confirm that CPU for AI is a structural shift in data centre procurement, not a cyclical trend.

- Arm's AGI CPU attracted more than $2 billion in forward demand against confirmed supply of just $1 billion, signalling the repricing of CPU companies may still be in early stages.

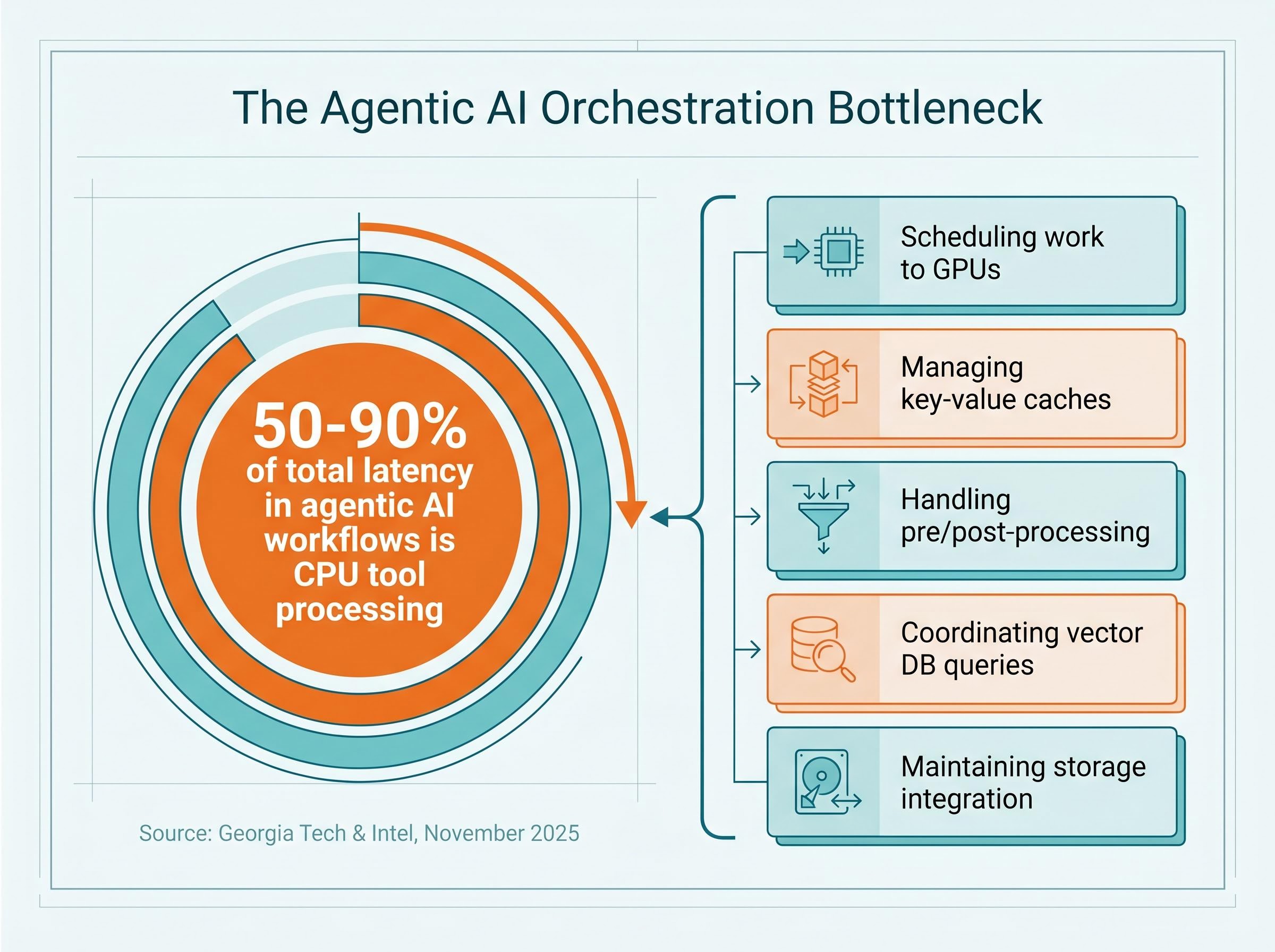

- Georgia Tech and Intel research found that CPU tool processing accounts for 50-90% of total latency in agentic AI workflows, establishing CPUs as a co-requirement alongside GPUs rather than a secondary component.

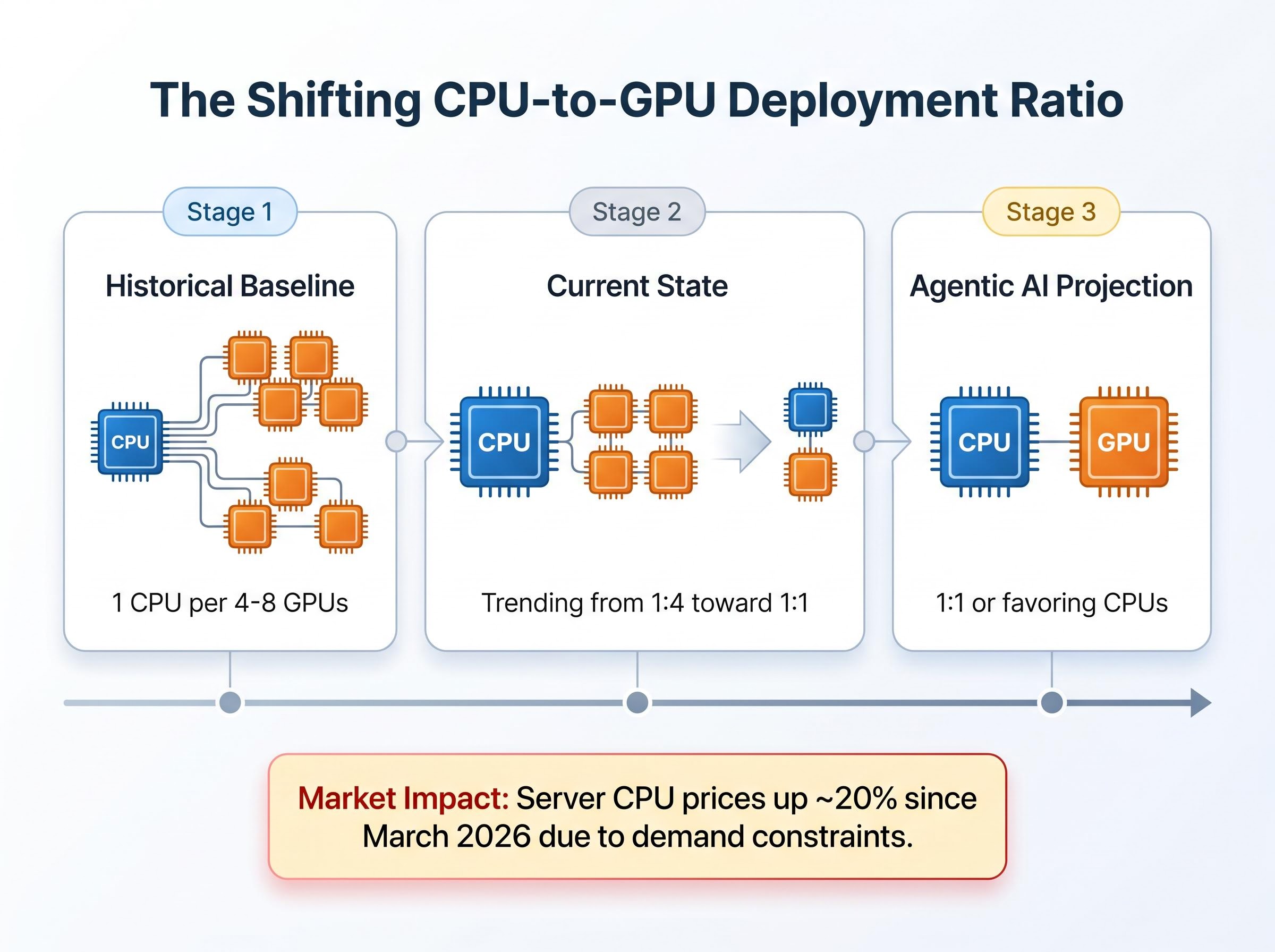

- The CPU-to-GPU deployment ratio is compressing from a historical 1 CPU per 4-8 GPUs toward 1:1, translating AI infrastructure investment into incremental CPU revenue across AMD, Intel, and Arm architectures.

- AMD raised its server CPU total addressable market forecast to imply a market above $120 billion by 2030, with analyst price targets upgraded to the $485-579 range following its 38% year-over-year revenue surge.

Three semiconductor earnings reports landed within days of each other in early May 2026, and they tell the same story from different angles. The AI hardware buildout that Wall Street has spent two years framing as a GPU narrative is now, unmistakably, a CPU narrative too. Arm’s AGI CPU drew more than $2 billion in forward demand against a confirmed supply position of just $1 billion. Intel’s CFO told investors that CPU demand is becoming a “significant part of the AI TAM.” AMD’s EPYC server revenue anchored a 38% year-over-year revenue surge.

These are not isolated data points. They are the first earnings cycle to confirm, simultaneously and in dollar terms, that AI inference and agentic workloads are reshaping data centre hardware procurement in ways that extend well beyond the GPU allocation decisions investors have been tracking.

What follows is an examination of what is driving CPU demand in AI infrastructure, why the shift appears structural rather than cyclical, and what it means for how investors evaluate semiconductor companies going forward.

Three earnings reports, one structural signal

The convergence matters more than any single result. AMD reported Q1 2026 revenue of $10.3 billion, up 38% year-over-year, with its data centre segment delivering $5.8 billion on the strength of EPYC CPU shipments. Intel’s Data Centre and AI (DCAI) segment posted $5.1 billion in revenue, up 22% year-over-year, and the company’s Q2 guidance of $13.8-14.8 billion signals continued momentum. Arm closed fiscal Q4 FY2026 at $1.49 billion in quarterly revenue, but the sharper signal sits in its forward book: AGI CPU demand exceeding $2 billion across FY2027-2028, more than double the initial launch projection, against confirmed supply capacity of just $1 billion.

The Arm figure carries particular weight because it reflects forward orders, not trailing revenue. Customers are committing capital to CPU capacity that does not yet exist at the scale they need.

Intel CFO David Zinsner, Q1 2026 earnings call: “As you think about the growth rate now going forward, it’s going to become a significant part of the AI TAM.”

| Company | Latest Quarter Revenue | Year-Over-Year Growth | CPU-for-AI Signal |
|---|---|---|---|
| AMD | $10.3B (Q1 2026) | 38% | Data centre segment of $5.8B driven by EPYC CPUs |
| Intel | $5.1B (DCAI segment, Q1 2026) | 22% | CFO identifies CPU as growing share of AI TAM |
| Arm | $1.49B (fiscal Q4 FY2026) | Not stated (full-year revenue: $4.92B) | AGI CPU forward demand of $2B+ vs $1B supply |

Finance readers typically process these three reports in isolation. The concurrent timing makes the cross-company pattern the real signal.

The ripple effects from AMD’s Q1 result extended well beyond AMD itself. The report triggered a sector-wide semiconductor rally that pushed Nvidia up 5.77%, Samsung up 14%, SK Hynix up 11%, and Super Micro Computer up 24% in a single session. That cross-company price reaction reflects how much of the AI supply chain now depends on confirmation of the same CPU-plus-GPU infrastructure thesis.

Why AI inference runs on more than a GPU

The prevailing assumption is straightforward: GPUs do AI. That framing was accurate when inference meant a single forward pass through a neural network. It is no longer sufficient.

Modern AI inference, particularly in agentic systems, is a multi-step distributed process. A single user query can trigger planning, tool invocation, memory retrieval, validation, and iterative reasoning across multiple model calls. The GPU handles the matrix arithmetic inside each model call. Everything between those calls falls to the CPU.

The orchestration functions CPUs perform in these systems include:

- Scheduling work to GPUs and managing job queues across accelerator clusters

- Managing key-value caches that store context between inference steps

- Handling pre-processing and post-processing of model inputs and outputs

- Coordinating vector database queries for retrieval-augmented generation

- Maintaining storage integration and keeping accelerators continuously fed with data
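The division of labour described above can be illustrated with a toy accounting model. The sketch below is illustrative only: the step names loosely follow the functions listed, but the `Step` type, the `PIPELINE` contents, and every duration are hypothetical placeholders rather than measurements from any real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    device: str    # "cpu" or "gpu"
    millis: float  # hypothetical duration, not a measurement


def latency_by_device(steps):
    """Sum step durations per device for one sequential request."""
    totals = {"cpu": 0.0, "gpu": 0.0}
    for step in steps:
        totals[step.device] += step.millis
    return totals


def cpu_share(steps):
    """Fraction of end-to-end latency spent in CPU-side steps."""
    totals = latency_by_device(steps)
    total = totals["cpu"] + totals["gpu"]
    return totals["cpu"] / total if total else 0.0


# One hypothetical agentic request: two model calls with CPU work between them.
PIPELINE = [
    Step("plan (model call)", "gpu", 40.0),
    Step("schedule and queue", "cpu", 5.0),
    Step("vector database lookup", "cpu", 60.0),
    Step("tool invocation", "cpu", 120.0),
    Step("post-process tool output", "cpu", 15.0),
    Step("answer (model call)", "gpu", 60.0),
]

if __name__ == "__main__":
    print(latency_by_device(PIPELINE))
    print(f"CPU share of latency: {cpu_share(PIPELINE):.0%}")
```

Changing the placeholder durations shows how quickly the CPU share moves once tool calls and retrieval sit between model calls.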

Research from Georgia Tech and Intel, published in November 2025, quantified the bottleneck: CPU tool processing accounts for 50-90% of total latency in agentic AI workflows. GPUs sit idle, waiting for CPU orchestration to complete before the next inference step can begin.

Georgia Tech’s CPU bottleneck research, published as “Characterizing CPU-Induced Slowdowns in Multi-GPU LLM Inference,” found that multi-GPU systems routinely underperform not because GPUs are saturated but because CPUs fail to keep accelerators adequately fed. That finding places the orchestration constraint at the centre of AI infrastructure design rather than at its periphery.
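One way to see why a 50-90% CPU share of latency matters is an Amdahl's-law style calculation. The sketch below is a simplification, not the researchers' method: it assumes CPU and GPU phases run strictly in sequence and that only the GPU portion gets faster, whereas real systems overlap some work. The function names are hypothetical.

```python
def end_to_end_speedup(cpu_fraction, gpu_speedup):
    """Overall speedup when only the GPU portion of latency is accelerated.

    cpu_fraction: share of total latency spent on the CPU (0.0 to 1.0).
    gpu_speedup:  factor by which the GPU portion gets faster (> 0).
    """
    if not 0.0 <= cpu_fraction <= 1.0:
        raise ValueError("cpu_fraction must be between 0 and 1")
    if gpu_speedup <= 0:
        raise ValueError("gpu_speedup must be positive")
    gpu_fraction = 1.0 - cpu_fraction
    return 1.0 / (cpu_fraction + gpu_fraction / gpu_speedup)


def speedup_ceiling(cpu_fraction):
    """Upper bound on overall speedup as GPU time approaches zero."""
    if not 0.0 <= cpu_fraction <= 1.0:
        raise ValueError("cpu_fraction must be between 0 and 1")
    if cpu_fraction == 0.0:
        return float("inf")
    return 1.0 / cpu_fraction


if __name__ == "__main__":
    for share in (0.5, 0.9):  # the range cited in the research
        print(
            f"CPU share {share:.0%}: 2x faster GPUs give "
            f"{end_to_end_speedup(share, 2.0):.2f}x overall, "
            f"ceiling {speedup_ceiling(share):.2f}x"
        )
```

Under these assumptions, a workflow that spends half its time on the CPU can never run more than twice as fast through GPU upgrades alone, which is the arithmetic behind treating CPUs as a co-requirement.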

How agentic AI raises the stakes for CPU performance

Agentic workflows require sustained, always-on CPU availability across multi-step reasoning cycles. Unlike single-pass inference, where CPU involvement is brief, an agentic pipeline may execute dozens of sequential CPU-dependent operations before returning a result. The hardware requirements this creates are specific:

- High single-core clock speed to minimise orchestration latency per step

- High core count to support parallel agent execution

- Large caches and fast memory access for context and state management

- Strong PCIe I/O connectivity for APIs, databases, and networking

Smaller, faster models and complex multi-agent pipelines both push the required CPU-to-GPU ratio higher, not lower. The orchestration bottleneck is the conceptual unlock for the investment thesis: GPU demand and CPU demand are not competing claims on the same budget. They are complementary requirements of the same system.

The ratio shift that is repricing server infrastructure

The complementary relationship between CPUs and GPUs is now visible in procurement data. Intel’s Q1 2026 earnings call disclosed the ratio evolution in concrete terms:

- Historical baseline: 1 CPU per 4-8 GPUs in data centre deployments

- Current state: The ratio has already compressed toward 1:4 and is trending toward 1:1

- Agentic AI projection: Ratios could converge to 1:1 or favour CPUs further as orchestration complexity scales

This ratio shift, not just absolute growth in AI spending, is the mechanism that translates infrastructure investment into incremental CPU revenue. Data centre budgets that were previously dominated by GPU line items are now allocating meaningfully more to CPU capacity.
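The mechanism can be shown with simple arithmetic. The sketch below holds a GPU fleet constant and varies only the deployment ratio; the fleet size of 8,000 GPUs and the function name `cpus_required` are hypothetical, chosen purely for illustration.

```python
import math


def cpus_required(gpu_count, gpus_per_cpu):
    """CPUs needed to serve a GPU fleet at a given GPUs-per-CPU ratio."""
    if gpu_count < 0 or gpus_per_cpu <= 0:
        raise ValueError("gpu_count must be >= 0 and gpus_per_cpu must be > 0")
    return math.ceil(gpu_count / gpus_per_cpu)


GPU_FLEET = 8_000  # hypothetical fleet size, not a figure from any company

if __name__ == "__main__":
    for label, gpus_per_cpu in [("1:8", 8), ("1:4", 4), ("1:1", 1)]:
        print(f"ratio {label}: {cpus_required(GPU_FLEET, gpus_per_cpu):,} CPUs")
```

With the GPU count fixed, moving from 1:8 to 1:1 multiplies the CPU count by eight, so CPU unit demand can grow even if GPU purchases do not.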

Supply constraints have already produced tangible price effects. Server CPU prices have risen approximately 20% since March 2026, according to Intel commentary, reflecting demand that exceeds current manufacturing capacity. TrendForce confirmed the directional shift toward 1:1 ratios in an April 2026 assessment.

Intel’s strategic response: Intel confirmed in October 2025 that it is deprioritising consumer CPU production to meet data centre demand, a supply-side decision that validates the strength of the demand signal.

The pricing data matters because it confirms the demand is structural, not aspirational. Customers are paying more for CPUs and suppliers are reallocating production capacity to serve them.

AMD’s revised server CPU total addressable market forecast, raised from 18% to 35% annual growth and implying a market above $120 billion by 2030, is the clearest quantification yet of how the agentic AI hardware investment map is being redrawn. Behind the revision, sequential reasoning and agent orchestration tasks are placing 35-45% of inference workloads on CPU-bound paths rather than GPU-bound ones.
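The gap between an 18% and a 35% annual growth rate compounds quickly. The sketch below compares the two rates over a five-year horizon; the horizon and the helper names are assumptions for illustration, since the forecast's base year and starting market size are not stated here.

```python
def compound(base, annual_growth, years):
    """Value of `base` after growing at `annual_growth` for `years` years."""
    return base * (1.0 + annual_growth) ** years


def growth_rate_multiplier(old_rate, new_rate, years):
    """How much larger the end value is under new_rate than under old_rate."""
    return ((1.0 + new_rate) / (1.0 + old_rate)) ** years


if __name__ == "__main__":
    YEARS = 5  # hypothetical horizon; the base year is not given in the text
    ratio = growth_rate_multiplier(0.18, 0.35, YEARS)
    print(f"After {YEARS} years, 35% growth ends {ratio:.2f}x above 18% growth")
```

On that assumed horizon, the higher rate leaves the market roughly twice as large as the lower one, regardless of the starting size.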

Arm, AMD, and Intel: three architectures competing for the control plane

The question is no longer whether CPUs matter in AI infrastructure. It is which architectures capture the demand.

Arm’s Neoverse platform has emerged as the hyperscaler-preferred control-plane architecture. By March 2026, more than 1.25 billion Neoverse-based data centre cores were deployed, up from 1 billion just one month earlier. AWS Trainium systems rely on Arm-based Graviton processors to coordinate large accelerator clusters. Nvidia’s latest rack architectures integrate dozens of Arm-based CPUs alongside GPUs. Arm’s data centre business is projected to reach $15 billion, as cited at the Arm Everywhere event.

AMD leads on efficiency. The 5th Gen EPYC processor offers up to 2.26x uplift on SPECpower (operations per watt) compared to Nvidia Grace Superchip-based systems, a direct competitive claim relevant to hyperscalers facing power density constraints. AMD’s roadmap, the “Venice” CPU and “Helios” rack-scale architecture, signals confidence in sustained demand through the next product cycle. Analyst price targets following Q1 results were raised to the $485-579 range.

Intel’s Xeon server CPUs benefit from the structural tailwind, though the company operates from a position of catching up on both architecture and supply. The production pivot from consumer to data centre chips is a credibility signal, and Q2 guidance of $13.8-14.8 billion reflects continued momentum.

| Company | Architecture Positioning | Key AI CPU Product | Differentiation | Forward Data Point |
|---|---|---|---|---|
| Arm | Hyperscaler control plane | Neoverse / AGI CPU | Scale: 1.25B deployed cores | $15B data centre business projection |
| AMD | Efficiency leader | 5th Gen EPYC / Venice | 2.26x SPECpower vs Grace | Analyst targets: $485-579 |
| Intel | Incumbent scale | Xeon for AI workloads | Production pivot to data centre | Q2 guidance: $13.8-14.8B |

What the GPU-only investment framework misses

For two years, the dominant AI investment thesis has been that AI equals GPU spending. That framework systematically underweights the orchestration infrastructure required to operate the GPU clusters being built.

The scale of commitment is without modern precedent: major US technology firms are on track to spend up to $700 billion on hardware in 2026. The AI infrastructure investment cycle is already compressing free cash flow sharply enough that Wall Street has imposed a roughly twelve-month horizon for monetisation evidence. That constraint shapes how quickly CPU vendors can be repriced as their AI relevance becomes consensus.

The blind spots are specific:

- Inference efficiency cannot be optimised at the GPU level alone; system balance between CPU and GPU is required, and that architectural reality has revenue implications for CPU vendors

- Analysis of real inference pipelines, including financial anomaly detection use cases, shows that CPU-dominated processing can consume more time than GPU-based model inference itself

- The CPU-to-GPU ratio shift means that incremental AI infrastructure spending now flows to both GPU and CPU vendors, not just GPU vendors

- Power and density constraints at hyperscale data centres increasingly favour CPU architectures optimised for efficiency, a competitive dimension the GPU-only framework ignores

Some repricing is underway. AMD’s analyst price target upgrades to $485-579 reflect recognition of CPU-driven AI revenue. Intel’s CFO framing CPU demand as a growing share of the AI total addressable market (TAM) is a direct challenge to GPU-only valuation models.

The supply constraint as market signal: Arm’s AGI CPU demand more than doubled initial projections, yet confirmed supply of $1 billion covers no more than half of the $2 billion-plus in demand. When demand outstrips supply before a product is fully ramped, it suggests the repricing of CPU companies may still be early.

For investors already long GPU names, the question is whether CPU exposure is additive or redundant. The architecture provides the answer: CPUs and GPUs are not substitutes in AI systems. They are co-requirements.

The AI hardware story is wider than the market priced

The May 2026 earnings cycle may serve as a reference point: the first time CPU demand for AI appeared simultaneously in the reported financials of three major semiconductor companies. The AI infrastructure buildout appears to be entering a phase where CPU investment grows faster than the headline GPU narrative has suggested, and the companies building AI-optimised CPU architectures are at an inflection point.

Arm’s data centre segment is projected to become its largest business unit. Intel’s Q2 guidance of $13.8-14.8 billion reflects continued data centre demand momentum. AMD’s “Venice” and “Helios” roadmap signals product-cycle confidence in sustained AI CPU demand.

Investors tracking the development of this thesis should monitor:

- CPU-to-GPU ratio disclosures in future earnings calls from Intel, AMD, and hyperscaler customers

- Server CPU pricing trends as a real-time indicator of supply-demand balance

- Arm AGI CPU supply ramp progress against the $2 billion forward demand figure

- Hyperscaler capital expenditure breakdowns that separate CPU and GPU procurement

Those who update their semiconductor investment framework to account for CPU demand before it is fully reflected in consensus estimates and valuations are working from a more complete picture of where AI infrastructure spending actually flows.

Investors who find the CPU repricing thesis compelling should stress-test it against the bearish case. Our full explainer on AI hardware bubble risks examines how escalating inference costs, derivative market complacency, and potential hyperscaler capex deceleration could unwind semiconductor valuations. It walks through the specific vulnerability scenarios and the forward guidance signals from Microsoft, Amazon, Alphabet, and Meta that would confirm or refute them.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions. Past performance does not guarantee future results. Financial projections referenced in this article are subject to market conditions and various risk factors.

Frequently Asked Questions

What is CPU for AI and why does it matter in data centre infrastructure?

CPU for AI refers to the growing role of central processing units in orchestrating artificial intelligence workloads, particularly agentic systems that require scheduling, memory management, and coordination between multiple model calls. Research from Georgia Tech and Intel found that CPU tool processing accounts for 50-90% of total latency in agentic AI workflows, making CPUs a critical co-requirement alongside GPUs in modern AI infrastructure.

How is the CPU-to-GPU ratio in data centres changing because of agentic AI?

Intel disclosed during its Q1 2026 earnings call that the historical ratio of one CPU per 4-8 GPUs has already compressed toward 1:4 and is trending toward 1:1, with agentic AI workloads potentially pushing the ratio to favour CPUs further as orchestration complexity scales. Server CPU prices have risen approximately 20% since March 2026, reflecting demand that exceeds current manufacturing capacity.

What did Arm, AMD, and Intel report about AI CPU demand in early 2026?

AMD reported Q1 2026 revenue of $10.3 billion, up 38% year-over-year, with its data centre segment delivering $5.8 billion driven by EPYC CPU shipments. Intel's Data Centre and AI segment posted $5.1 billion, up 22%, while Arm disclosed forward AGI CPU demand exceeding $2 billion for FY2027-2028, more than double its initial launch projection, against confirmed supply capacity of just $1 billion.

How does agentic AI differ from standard AI inference in terms of hardware requirements?

Standard AI inference involves a single forward pass through a neural network where GPU involvement is dominant and CPU involvement is brief. Agentic AI pipelines execute dozens of sequential CPU-dependent operations, including planning, tool invocation, memory retrieval, and validation, before returning a result, requiring high single-core clock speeds, high core counts, large caches, and strong PCIe connectivity from CPUs.

What metrics should investors monitor to track the CPU for AI investment thesis?

Investors should watch CPU-to-GPU ratio disclosures in future earnings calls from Intel, AMD, and hyperscaler customers, server CPU pricing trends as a real-time indicator of supply and demand balance, progress on Arm's AGI CPU supply ramp against its $2 billion forward demand figure, and hyperscaler capital expenditure breakdowns that separately identify CPU and GPU procurement.