AMD Surges 19% as Q1 Earnings Smash Estimates on AI Demand

Key Takeaways

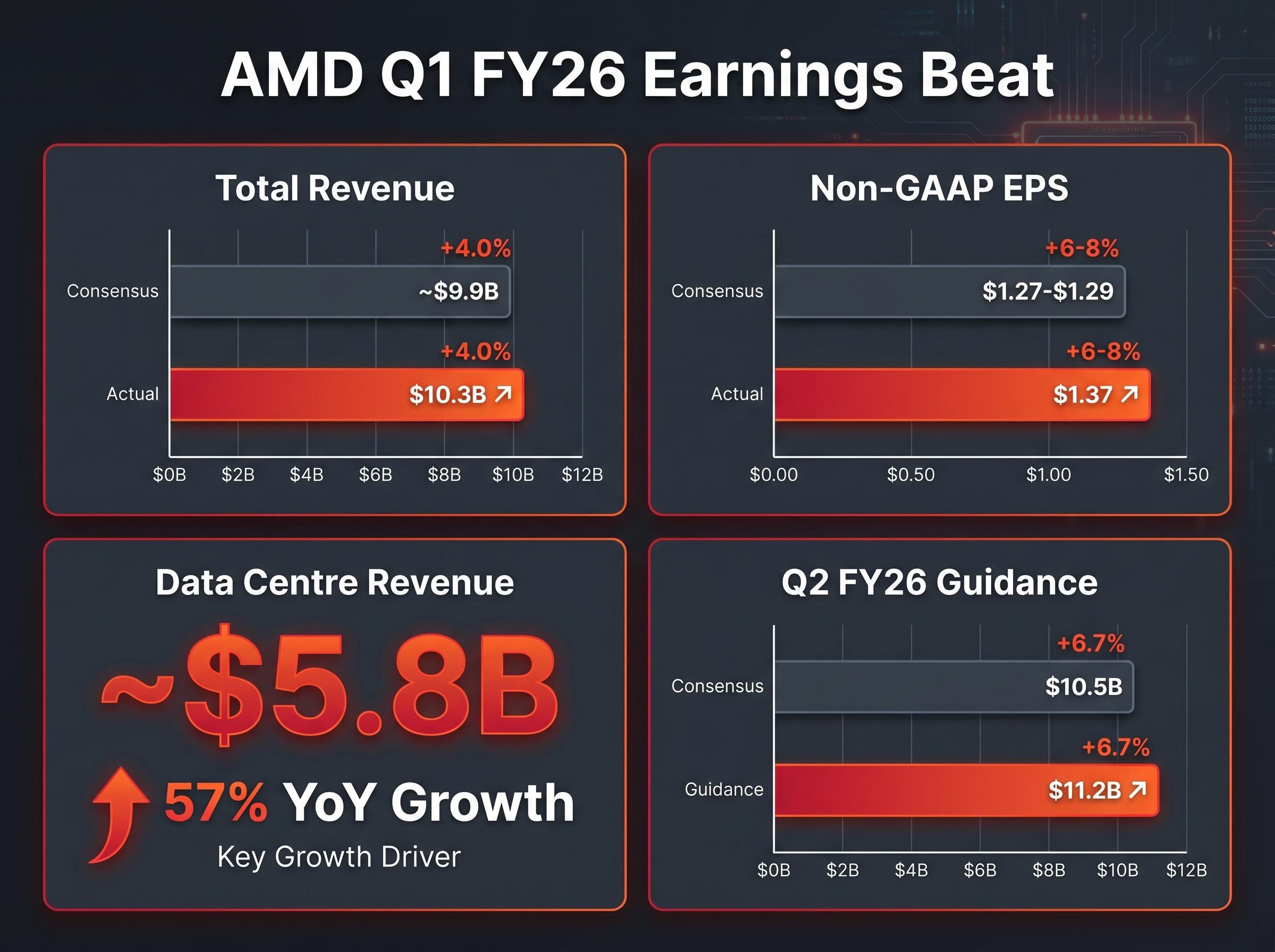

- AMD reported Q1 FY26 revenue of $10.3 billion, beating analyst consensus by approximately 4%, with data centre revenue of $5.8 billion representing 57% year-over-year growth and confirming broad-based AI chip demand.

- Q2 FY26 guidance of $11.2 billion came in roughly 7% above the $10.5 billion analyst consensus. It was the most market-moving figure in the report because forward guidance signals management visibility into future demand.

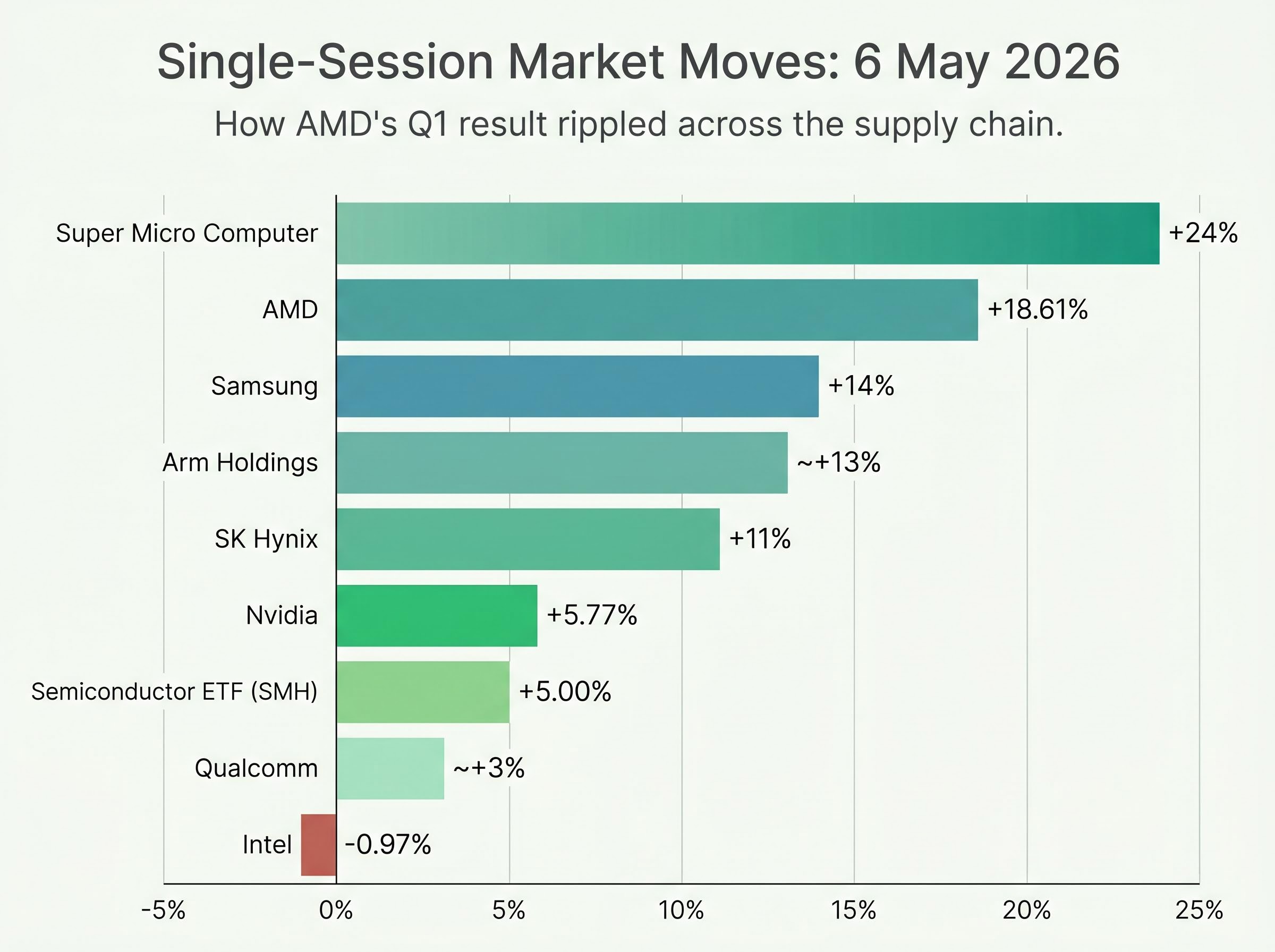

- The 18.61% single-session gain rippled across the semiconductor sector, with Nvidia rising 5.77%, Samsung climbing 14%, SK Hynix gaining 11%, and Super Micro Computer surging 24%, evidence that AI demand extends across the supply chain.

- Goldman Sachs upgraded AMD to Buy and Morgan Stanley raised its price target to $360 from $255, while Deutsche Bank maintained a cautious stance, citing stretched forward valuations and Nvidia Blackwell competition risk.

- HBM memory supply from Samsung and SK Hynix represents a structural bottleneck for AMD's GPU ramp, and MI325X volume shipments in H2 2026 will be the key product-level signal for whether AMD maintains its inference cost advantage into 2027.

AMD shares surged 18.61% on 6 May 2026, closing at $421.39 and adding roughly $107 billion in market capitalisation in a single session. The move ranks among the largest single-day value additions in semiconductor history, and it was earned. The catalyst was a Q1 FY26 earnings report that beat analyst expectations on every major metric: revenue, earnings per share, gross margin, and forward guidance. The result arrived as investors were already debating whether AI chip spending reflected a durable shift in demand or a sentiment bubble. What follows unpacks what AMD’s numbers actually show, why the sector-wide rally that followed reflects more than momentum trading, and what risks remain for investors betting that the AI infrastructure cycle will be sustained.

AMD’s Q1 numbers were not a narrow beat; they were a statement

Start with revenue. AMD reported $10.3 billion for Q1 FY26, up 38% year-over-year and approximately 4% above analyst consensus. Non-GAAP earnings per share came in at $1.37, against estimates of $1.27-$1.29. Non-GAAP gross margin held at 53%.

In the earnings release, CEO Lisa Su cited accelerating demand for AI infrastructure as the primary driver of record data centre segment performance.

The engine behind those headline figures was the data centre segment: approximately $5.8 billion in revenue, representing 57% year-over-year growth and 56% of total AMD revenue. This was not a single-product anomaly. It was broad-based demand across GPUs and server processors.

Then came the number that moved the stock. Q2 FY26 revenue guidance of $11.2 billion, implying 46% year-over-year growth, landed well above the $10.5 billion consensus. Forward guidance carries the most analytical weight because it reflects management’s visibility into the pipeline, not just what has already shipped.
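The growth rates above can be cross-checked with simple arithmetic: dividing each figure by one plus its year-over-year growth rate gives the prior-year base it implies. The sketch below uses only the figures quoted in this article; the helper name `implied_prior` is illustrative, and its outputs are derived estimates rather than reported results.

```python
# Back-of-envelope check on the growth rates quoted in the article.
# All inputs are the article's figures, in billions of US dollars.

def implied_prior(current: float, yoy_growth: float) -> float:
    """Prior-year value implied by a current value and a YoY growth rate."""
    return current / (1.0 + yoy_growth)

q1_revenue, q1_growth = 10.3, 0.38    # Q1 FY26 revenue, up 38% YoY
dc_revenue, dc_growth = 5.8, 0.57     # Q1 FY26 data centre revenue, up 57% YoY
q2_guidance, q2_growth = 11.2, 0.46   # Q2 FY26 guidance, implying 46% YoY

print(f"Implied Q1 FY25 revenue:      ${implied_prior(q1_revenue, q1_growth):.2f}B")
print(f"Implied Q1 FY25 data centre:  ${implied_prior(dc_revenue, dc_growth):.2f}B")
print(f"Implied Q2 FY25 revenue:      ${implied_prior(q2_guidance, q2_growth):.2f}B")
print(f"Data centre share of Q1 FY26: {dc_revenue / q1_revenue:.1%}")
```

The implied bases come out at about $7.46 billion, $3.69 billion and $7.67 billion, and the data centre share at about 56.3%, in line with the 56% figure above.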

- Total revenue: $10.3 billion (approximately 4% above consensus)

- Non-GAAP EPS: $1.37 (vs. $1.27-$1.29 estimates)

- Non-GAAP gross margin: 53%

- Data centre revenue: approximately $5.8 billion (up 57% YoY)

- Q2 FY26 guidance: $11.2 billion (vs. $10.5 billion consensus)

CEO Lisa Su, AMD earnings call: “We delivered an outstanding first quarter, driven by accelerating demand for AI infrastructure… Customer engagement around MI450 Series and Helios is strengthening…”

| Metric | AMD Result | Analyst Estimate | Beat Magnitude |
|---|---|---|---|
| Total Revenue | $10.3B | ~$9.9B | ~4% above |
| Non-GAAP EPS | $1.37 | $1.27-$1.29 | ~6-8% above |
| Data Centre Revenue | ~$5.8B | Not separately guided | n/a (57% YoY growth) |
| Q2 FY26 Guidance | $11.2B | $10.5B | ~7% above |
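The beat magnitudes in the table are each actual divided by its estimate, minus one. A minimal sketch, taking the table's approximate ~$9.9 billion revenue consensus at face value (the helper `beat_pct` is illustrative):

```python
# Recompute the "Beat Magnitude" column from actuals and estimates.
# The ~$9.9B revenue consensus is the approximate figure shown in the table.

def beat_pct(actual: float, estimate: float) -> float:
    """Percentage by which an actual figure exceeds an estimate."""
    return (actual / estimate - 1.0) * 100.0

rows = {
    "Total revenue ($B)": (10.3, 9.9),
    "Non-GAAP EPS vs high estimate ($)": (1.37, 1.29),
    "Non-GAAP EPS vs low estimate ($)": (1.37, 1.27),
    "Q2 FY26 guidance ($B)": (11.2, 10.5),
}

for label, (actual, estimate) in rows.items():
    print(f"{label:36s} {beat_pct(actual, estimate):+.1f}%")
```

The guidance beat works out to about 6.7%, which the table rounds to ~7%; the EPS beat spans roughly 6.2% to 7.9% depending on which end of the estimate range is used.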


Why data centre revenue is the only number that matters for the AI thesis

The $5.8 billion figure is not just large. It is structurally significant, and understanding why requires following the money from chips to workloads to procurement logic.

AMD shipped record volumes of MI300X Instinct GPUs in Q1, with more than $5 billion in Instinct GPU revenue alone. The MI300X is AMD’s flagship data centre GPU, a processor designed specifically for artificial intelligence workloads in cloud computing environments. Its Q1 performance represented the bulk of data centre segment revenue and confirmed that hyperscaler demand is broadening, not narrowing.

The broader backdrop to AMD’s Q1 beat is the scale of hyperscaler capital expenditure now flowing into AI infrastructure: the four largest cloud platforms are projected to invest $650 billion in 2026, a concentration of hardware spending that creates durable demand visibility for GPU and memory suppliers operating across the supply chain.

Su confirmed that inference, the process of running trained AI models to generate outputs, now represents over 40% of AMD’s data centre revenue mix and is growing faster than training workloads. The economic rationale is direct: MI300X delivers approximately 40% lower cost-per-token on inference workloads versus comparable Nvidia offerings, according to AMD’s benchmarking data.
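At its simplest, cost per token is the hourly cost of running the hardware divided by the tokens it produces in that hour, so a 40% advantage can come from a lower price, higher throughput, or a mix of both. The sketch below illustrates only that arithmetic. Every price and throughput in it is a hypothetical placeholder, not AMD or Nvidia benchmark data, and it is not AMD’s benchmarking methodology.

```python
# Cost per token = hourly hardware cost / tokens generated per hour.
# All prices and throughputs are HYPOTHETICAL placeholders, not vendor data.

SECONDS_PER_HOUR = 3600

def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Dollars spent per one million generated tokens."""
    tokens_per_hour = tokens_per_second * SECONDS_PER_HOUR
    return hourly_cost_usd / tokens_per_hour * 1_000_000

def relative_saving(challenger: float, baseline: float) -> float:
    """Fractional reduction of the challenger's cost versus the baseline."""
    return 1.0 - challenger / baseline

# Hypothetical baseline: $10 per hour at 1,000 tokens per second.
baseline = cost_per_million_tokens(10.0, 1000.0)

scenarios = {
    "cheaper hardware, same throughput": cost_per_million_tokens(6.0, 1000.0),
    "same price, higher throughput": cost_per_million_tokens(10.0, 1000.0 / 0.6),
    "somewhat cheaper and faster": cost_per_million_tokens(8.0, 4000.0 / 3),
}

for name, cost in scenarios.items():
    print(f"{name:34s} ${cost:.2f}/M tokens, {relative_saving(cost, baseline):.0%} lower")
```

Each hypothetical scenario lands at the same 40% saving, which is why a cost-per-token figure on its own does not reveal whether the edge comes from pricing or performance.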

Hyperscaler breadth removes a structural concern analysts had previously flagged: that AMD’s AI revenue depended on too few customers. Four of the largest hyperscale buyers are now deploying AMD AI silicon at scale:

- Microsoft: Expanded MI300X deployment for inference workloads

- Meta: Multi-billion dollar commitment for 2026 AMD AI deployments

- Google: Training and inference mixed deployments confirmed

- Oracle: Training and inference mixed deployments confirmed

What inference economics mean for AMD’s competitive position

The distinction between AI training (teaching a model) and AI inference (running a trained model) matters because the two workloads have different cost structures. Nvidia maintains dominance in training via its H100, H200, and Blackwell GPU families. AMD is closing the gap specifically on inference, where cost-per-token economics favour the MI300X.

The EPYC CPU combined with the MI300X is positioned as an optimised inference stack, giving AMD a systems-level argument rather than a GPU-only one. That said, AMD’s market share in AI GPUs remains approximately 10-15% versus Nvidia’s estimated 80-85%. The growth story is about share expansion from a low base, not displacement.

The next-generation roadmap matters here. MI325X is in the sampling phase with volume shipments expected in H2 2026. The MI450 Series and Helios platform are in active customer engagement, per Su’s earnings call commentary.

The sector-wide rally tells a story beyond AMD’s own results

AMD’s result did not stay contained. It rippled across the semiconductor supply chain, spanning US chip designers, Korean memory manufacturers, and AI server assemblers in a single session.

Nvidia rose 5.77% to $207.83, pushing its market capitalisation to $5.051 trillion. Arm Holdings gained approximately 13%. Super Micro Computer surged 24% after issuing above-consensus Q4 revenue and earnings projections tied to AI server demand. Qualcomm added approximately 3%.

The Korean memory rally carried its own logic. Samsung climbed 14%, reaching a $1 trillion market capitalisation, only the second Asian-headquartered company to cross that threshold. SK Hynix rose 11%. Both companies manufacture High Bandwidth Memory (HBM), a specialised memory chip that is a direct physical input into AMD’s MI300X and Nvidia’s AI GPU architectures. Their rally was not coincidence; it was supply-chain confirmation that AI chip demand is pulling through memory orders at scale.

Asian semiconductor valuations tell a striking story relative to the US names. As of early May 2026, Samsung and SK Hynix traded at roughly 12x forward earnings, approximately half the Nasdaq 100 multiple, even though their earnings were growing nearly three times faster than those of their American peers. Institutional capital began closing that divergence on 6 May.
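One way to see why this gap is striking is a PEG-style ratio (forward P/E divided by expected earnings growth), a simplification swapped in here for illustration rather than a metric the article’s sources cite. The sketch uses the relative figures above; the absolute growth rate is a hypothetical placeholder that cancels out of the result.

```python
# PEG-style comparison: forward P/E per percentage point of earnings growth.
# Relative inputs come from the article; us_growth is a HYPOTHETICAL
# placeholder and does not affect the final ratio.

def peg(forward_pe: float, growth_pct: float) -> float:
    """Forward P/E divided by expected annual earnings growth (in %)."""
    return forward_pe / growth_pct

korea_pe = 12.0
nasdaq_pe = 2 * korea_pe        # "approximately half the Nasdaq 100 multiple"
us_growth = 15.0                # hypothetical US growth, % per year
korea_growth = 3 * us_growth    # "nearly three times faster"

ratio = peg(korea_pe, korea_growth) / peg(nasdaq_pe, us_growth)
print(f"Korean memory PEG as a fraction of the US PEG: {ratio:.2f}")
```

Half the multiple at three times the growth implies a growth-adjusted valuation of about one-sixth the US benchmark, whatever absolute growth rate is assumed.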

| Company / ETF | Session Move | Sector Role |
|---|---|---|
| AMD | +18.61% | AI GPU designer |
| Nvidia | +5.77% | AI GPU market leader |
| Arm Holdings | ~+13% | Chip architecture licensor |
| Qualcomm | ~+3% | Mobile and edge AI chipmaker |
| Samsung | +14% | HBM memory supplier |
| SK Hynix | +11% | HBM memory supplier |
| Super Micro Computer | +24% | AI server assembler |
| Intel | -0.97% | Legacy CPU / AI laggard |
| Semiconductor ETF (SMH) | +5.00% | Broad sector benchmark |

Intel fell 0.97% on a day AMD gained 18.61%, suggesting the market is rotating within semiconductors rather than lifting the sector uniformly.

What Wall Street analysts said, and where they disagree

Goldman Sachs upgraded AMD to Buy from Neutral on 6 May, citing explosive AI data centre growth, including 57% year-over-year segment revenue growth, as evidence of sustained market share gains. Morgan Stanley reiterated its Overweight rating and raised its price target to $360 from $255, characterising Q2 guidance as validation of a multi-year cycle.

JPMorgan maintained its Overweight rating. The firm’s commentary was blunt: “AMD’s $5.8B data centre revenue confirms it’s no longer a #2 player; MI300X ramp exceeds expectations.”

The bullish consensus across Goldman, Morgan Stanley, and JPMorgan frames AMD’s rally as reflecting the early innings of an estimated $1 trillion-plus annual AI capital expenditure cycle projected to run through 2026-2028. That is a structural thesis, not a momentum call.

The bear case investors should not ignore

Deutsche Bank maintained a cautious stance, warning that valuation is stretched on a forward price-to-earnings basis and that sentiment may be running ahead of fundamentals.

Inference cost economics sit at the centre of the bear case that Deutsche Bank and others have articulated: if generative AI applications remain structurally unprofitable at scale, the $635 billion to $700 billion in hyperscaler hardware commitments for 2026 could slow, and AMD’s inference-heavy data centre revenue would be the first exposed to any procurement slowdown.

The bear case rests on three specific risks:

- Stretched valuation at elevated forward P/E. AMD’s 18.61% single-day move, which lifted its market capitalisation to roughly $687 billion, represents one of the largest dollar-value single-session gains in semiconductor history. Moves of this magnitude at elevated multiples increase downside sensitivity to any subsequent guidance disappointment.

- Nvidia Blackwell reassertion risk. If Nvidia’s Blackwell platform proves more cost-competitive on inference than current analyst models assume, AMD’s primary competitive advantage narrows.

- Inference demand sensitivity. Enterprise AI adoption could stall, or inference workload growth could cool faster than the current pipeline suggests. Compounding this, AMD management flagged HBM supply constraints at Samsung and SK Hynix as a potential bottleneck for further GPU ramp acceleration, a supply-side risk that is structural rather than sentiment-driven.
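The $687 billion market capitalisation in the first risk above and the roughly $107 billion one-day gain cited at the top of the article reconcile only if $687 billion is the post-move closing value. A quick check under that assumption (the helper `one_day_gain` is illustrative):

```python
# Reconcile the one-day dollar gain with the closing market capitalisation.
# Assumption: $687B is AMD's post-move (closing) market cap on 6 May 2026.

def one_day_gain(closing_cap: float, pct_move: float) -> float:
    """Dollar value added in a session, given the closing cap and % move."""
    previous_cap = closing_cap / (1.0 + pct_move)  # cap at the prior close
    return closing_cap - previous_cap

closing_cap = 687.0  # $B
move = 0.1861        # 18.61%

print(f"Implied previous-close market cap: ${closing_cap / (1 + move):.0f}B")
print(f"Implied one-day gain:              ${one_day_gain(closing_cap, move):.0f}B")
print(f"Implied previous share price:      ${421.39 / (1 + move):.2f}")
```

This gives a gain of about $108 billion, consistent with the reported figure after rounding. Had $687 billion been the pre-move value, an 18.61% rise would have added about $128 billion (687 × 0.1861), well above what was reported.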


AMD’s forward guidance frames what comes next for AI infrastructure investors

Q2 guidance of $11.2 billion implies data centre revenue of roughly $6.3 billion to $6.7 billion if the revenue mix holds at approximately 56-60% data centre; approaching $7 billion would require the segment’s share to rise to about 62.5%. That is the number to watch at the next earnings report.
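The guidance arithmetic is simple to check: multiply the $11.2 billion guide by an assumed data centre share, or divide $7 billion by the guide to find the share needed to reach it. A short sketch using the article’s 56-60% mix assumption (the helper `implied_dc` is illustrative):

```python
# Data centre revenue implied by Q2 FY26 guidance at different revenue mixes.
# The 56-60% range is the article's assumption, not company guidance.

Q2_GUIDANCE_B = 11.2  # total Q2 FY26 revenue guidance, $B

def implied_dc(total_b: float, dc_share: float) -> float:
    """Data centre revenue implied by total revenue and segment share."""
    return total_b * dc_share

for share in (0.56, 0.58, 0.60):
    print(f"Mix {share:.0%}: ${implied_dc(Q2_GUIDANCE_B, share):.2f}B")

# Segment share needed for data centre revenue to reach $7B.
print(f"Mix needed for $7B: {7.0 / Q2_GUIDANCE_B:.1%}")
```

A 62.5% mix would sit several points above the roughly 56% data centre share AMD reported in Q1.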

AMD management characterised Q2 guidance as “conservative given pipeline visibility,” flagging upside potential if supply chain conditions and demand signals hold through the quarter.

The single most important external variable for AMD’s execution against that guidance is HBM memory supply. SK Hynix and Samsung capacity is a shared input for both AMD and Nvidia, meaning competition for memory allocation intensifies as both companies ramp GPU production simultaneously.

Samsung and SK Hynix HBM supply constraints have intensified significantly ahead of AMD’s Q2 ramp. Both manufacturers have flagged record-low order fulfilment rates and fully booked HBM4 production capacity. The bottleneck applies equally to Nvidia and caps how quickly either company can accelerate GPU shipments.

Three markers will determine whether AMD maintains its inference cost advantage into 2027, when Nvidia’s next-generation Blackwell successors are expected to close the gap:

- Q2 data centre revenue versus the roughly $6.3-6.7 billion implied by guidance at a 56-60% mix, as the near-term execution test

- MI325X volume ramp in H2 2026, the product-level competitiveness signal

- HBM supply availability across Samsung and SK Hynix, the external constraint neither AMD nor Nvidia fully controls

Broader context supports the demand backdrop. The US trade deficit widened in March 2026 with import growth concentrated in AI-related capital equipment, corroborating the scale of infrastructure spending already underway.

AMD’s quarter has redrawn the AI chip investment map, at least for now

AMD’s Q1 results matter not because they confirm AMD is winning the AI chip race outright, but because they confirm the race has a genuine second competitor. That structural reality changes the risk profile for investors who assumed Nvidia was the only viable entry point into the AI infrastructure cycle.

The bear case is not irrational. AMD trades at a demanding multiple, and the variables that will determine whether the thesis holds (HBM supply, Blackwell competition, and inference demand durability) are genuinely unresolved.

The relevant question is no longer whether AMD belongs in AI infrastructure discussions. It is whether current valuations already price in the multi-year cycle the bulls are projecting.

For investors who want to stress-test the multi-year AI hardware thesis beyond AMD’s quarterly results, our deep-dive into software monetisation lag examines how the gap between application revenue generation and hardware deployment timelines could constrain procurement budgets from 2027 onward, a risk that sits upstream of any single company’s guidance.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions. Past performance does not guarantee future results. Financial projections are subject to market conditions and various risk factors.

Frequently Asked Questions

What did AMD report in its Q1 FY26 earnings results?

AMD reported Q1 FY26 revenue of $10.3 billion, up 38% year-over-year and approximately 4% above analyst consensus, with non-GAAP EPS of $1.37 against estimates of $1.27-$1.29 and data centre revenue of approximately $5.8 billion, up 57% year-over-year.

Why did AMD stock surge 18.61% on 6 May 2026?

AMD shares surged 18.61% after the company delivered a broad earnings beat across revenue, EPS, gross margin, and forward guidance, with Q2 FY26 revenue guidance of $11.2 billion coming in roughly 7% above the $10.5 billion analyst consensus.

How does AMD compete with Nvidia in AI chips?

AMD competes primarily on inference workloads, where its MI300X GPU delivers approximately 40% lower cost-per-token versus comparable Nvidia offerings according to AMD benchmarking data, though AMD's overall AI GPU market share remains approximately 10-15% versus Nvidia's estimated 80-85%.

What is HBM memory and why does it matter for AMD's GPU outlook?

High Bandwidth Memory (HBM) is a specialised memory chip that is a direct physical input into AMD's MI300X and Nvidia's AI GPU architectures; AMD management flagged HBM supply constraints from Samsung and SK Hynix as a potential bottleneck that could limit how quickly AMD can accelerate GPU shipments in 2026.

Which hyperscalers are deploying AMD AI silicon at scale?

Four major hyperscalers are now deploying AMD AI silicon at scale: Microsoft has expanded MI300X deployment for inference workloads, Meta has made a multi-billion dollar commitment for 2026, and both Google and Oracle have confirmed mixed training and inference deployments.