Semiconductor Stocks Are Surging on AI Capex That Has Yet to Pay Off

Key Takeaways

- The Phlx Semiconductor Index has surged approximately 66.3% year-to-date through early May 2026, driven by a market narrative that AI agent deployments are creating persistent, compounding inference demand across the semiconductor stack.

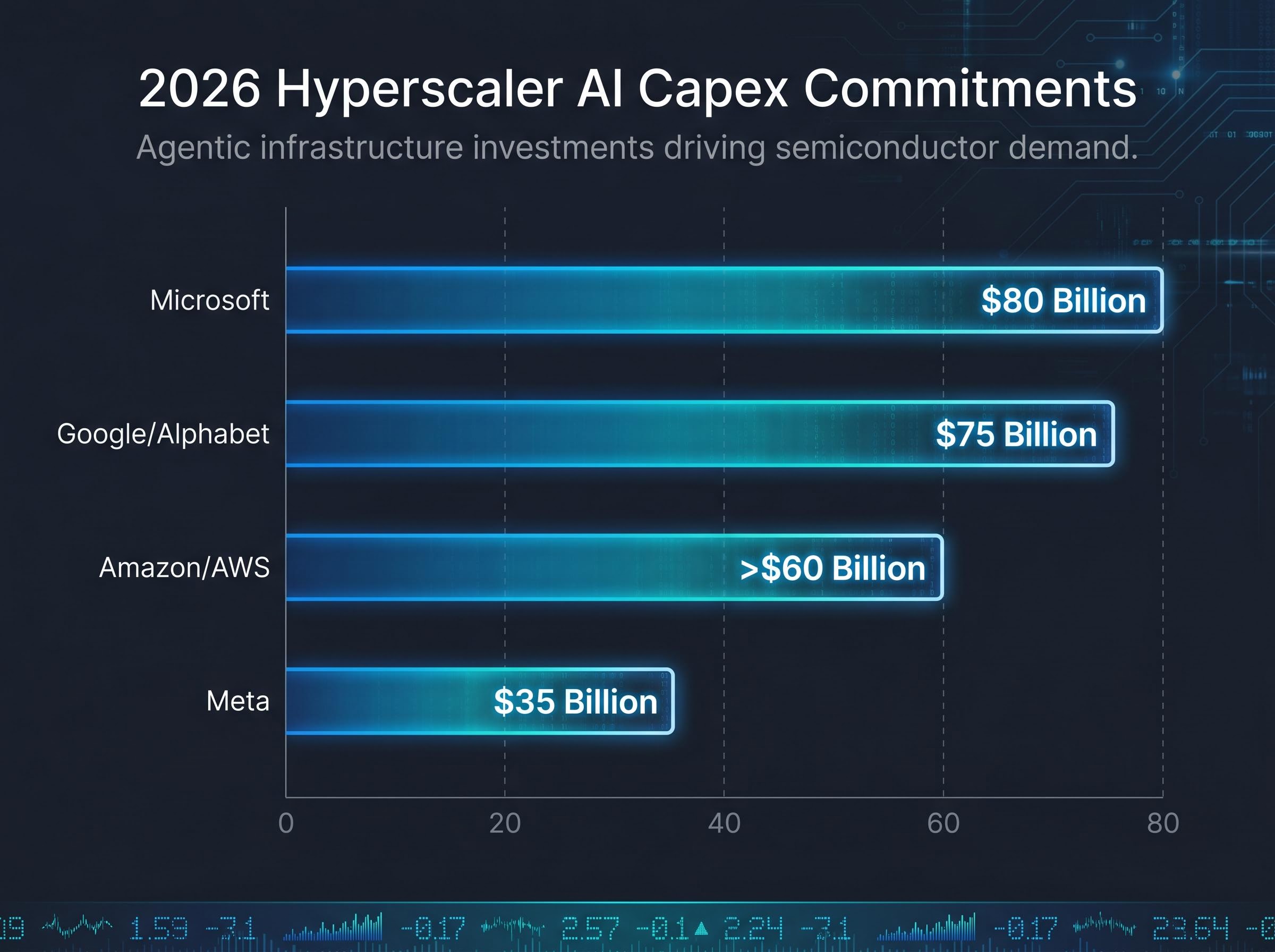

- Hyperscalers including Microsoft, Google, Amazon, and Meta have issued combined 2026 capex guidance of approximately $725 billion, with spending explicitly directed toward agent-driven inference infrastructure.

- Micron and AMD have significantly outpaced Nvidia year-to-date, with Micron's HBM revenue growing approximately 300% year-over-year as high-bandwidth memory emerges as a critical bottleneck component in AI chip stacks.

- Morningstar analyst Dennis Li has identified an 18-24 month capex-to-revenue lag as the central risk, while Gartner estimates that only about 20% of current AI agent pilots will be scalable to production by 2027.

- Geopolitical risks including the MATCH Act and China's Order No. 834 are unevenly distributed across the sector, with ASML's approximately 29% China revenue exposure creating a materially higher risk profile than Nvidia's approximately 15%.

The Phlx Semiconductor Index (SOX) has surged approximately 66.3% year-to-date through early May 2026, a pace that dwarfs the broader S&P 500 and demands an explanation beyond simple momentum. The explanation being offered by markets centres on a single narrative: artificial intelligence is entering a new phase, one defined by autonomous agents rather than model training, and that shift requires a fundamentally larger and more persistent layer of compute infrastructure. For investors, the question is whether that narrative represents a durable investment thesis or a hype cycle that has pulled valuations well ahead of fundamentals. What follows examines the AI agent demand story driving semiconductor stocks, stress-tests it against valuation data and institutional scepticism, weighs the geopolitical risks that could disrupt the momentum, and offers a framework for evaluating whether the sector at current prices represents opportunity or overreach.

From model training to agent deployment: the demand story reshaping the sector

Why inference is more infrastructure-intensive than training

The distinction matters because it changes the shape of semiconductor demand. Model training is a capital event concentrated in time: a company builds or rents a cluster of GPUs, trains a model over weeks or months, then deploys it. Inference, by contrast, is the ongoing computational cost of actually using that model. AI agents performing multi-step tasks (browsing, coding, reasoning chains, coordinating with other agents) create compound inference demand that runs continuously, serving millions of concurrent tasks rather than completing a single training run.

This shift from periodic to persistent compute demand is the core of the bull case. If inference workloads scale as projected, the semiconductor infrastructure required is not a one-time buildout but a recurring, expanding obligation.

What hyperscalers are actually committing to

The market’s primary evidence for this thesis comes from the capital expenditure commitments of the four largest cloud providers, all of which have directed spending explicitly toward inference and agent-related infrastructure in 2026:

- Microsoft: approximately $80 billion in capex, focused on inference efficiency infrastructure tied to AI agent integration across Copilot and enterprise products

- Google/Alphabet: approximately $75 billion directed toward TPU buildout for agentic search applications, with demand reportedly outpacing current supply

- Amazon/AWS: over $60 billion, with emphasis on Trainium and Inferentia custom silicon for agent workloads

- Meta: approximately $35 billion, with inference spending reported up approximately 2x year-over-year, tied to Llama-based agent deployments

Nvidia has projected approximately 150% year-over-year data centre revenue growth in 2026, citing AI agent deployments as the primary accelerant for inference demand.

The directional consistency across all four hyperscalers is itself a data point. Whether or not each figure proves exact, the scale and stated rationale point to a capex cycle that the industry is framing as structurally different from prior investment waves. AMD has cited hyperscaler commitments exceeding $10 billion for 2026 inference infrastructure as driving MI300X and MI325X order growth.

The scale behind those figures becomes clearer when the four providers are aggregated: hyperscaler capex commitments for 2026 reached approximately $725 billion in combined guidance, with Q1 2026 alone accounting for $130 billion, a pace that implies the annual run rate could approach $1 trillion by 2027 if current trajectories hold.
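The run-rate arithmetic behind those two figures can be sketched in a few lines. This is a rough illustration rather than a forecast: it uses only the $725 billion guidance and $130 billion Q1 figures quoted above, and it assumes (hypothetically) that the remaining spend is spread evenly across Q2 to Q4. The function names are illustrative.

```python
# Figures quoted in the article, in billions of US dollars.
GUIDANCE_2026_BN = 725.0  # combined 2026 capex guidance
Q1_2026_BN = 130.0        # capex attributed to Q1 2026


def annualised(quarterly_bn: float) -> float:
    """Annualise a quarterly figure by assuming four identical quarters."""
    return quarterly_bn * 4


def implied_remaining_quarterly(guidance_bn: float, spent_bn: float, quarters_left: int) -> float:
    """Average quarterly spend needed over the remaining quarters to reach guidance."""
    return (guidance_bn - spent_bn) / quarters_left


q1_run_rate = annualised(Q1_2026_BN)
q2_to_q4_avg = implied_remaining_quarterly(GUIDANCE_2026_BN, Q1_2026_BN, 3)
q2_to_q4_run_rate = annualised(q2_to_q4_avg)

print(f"Q1 annualised run rate:        ${q1_run_rate:,.0f}bn")
print(f"Implied Q2-Q4 quarterly spend: ${q2_to_q4_avg:,.1f}bn")
print(f"Implied Q2-Q4 annualised rate: ${q2_to_q4_run_rate:,.0f}bn")
```

On these assumptions, Q1 annualises to $520 billion, so meeting the full-year guidance requires spending to accelerate to roughly $198 billion per quarter for the rest of 2026, an annualised pace of about $793 billion.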

Which stocks are actually winning, and by how much

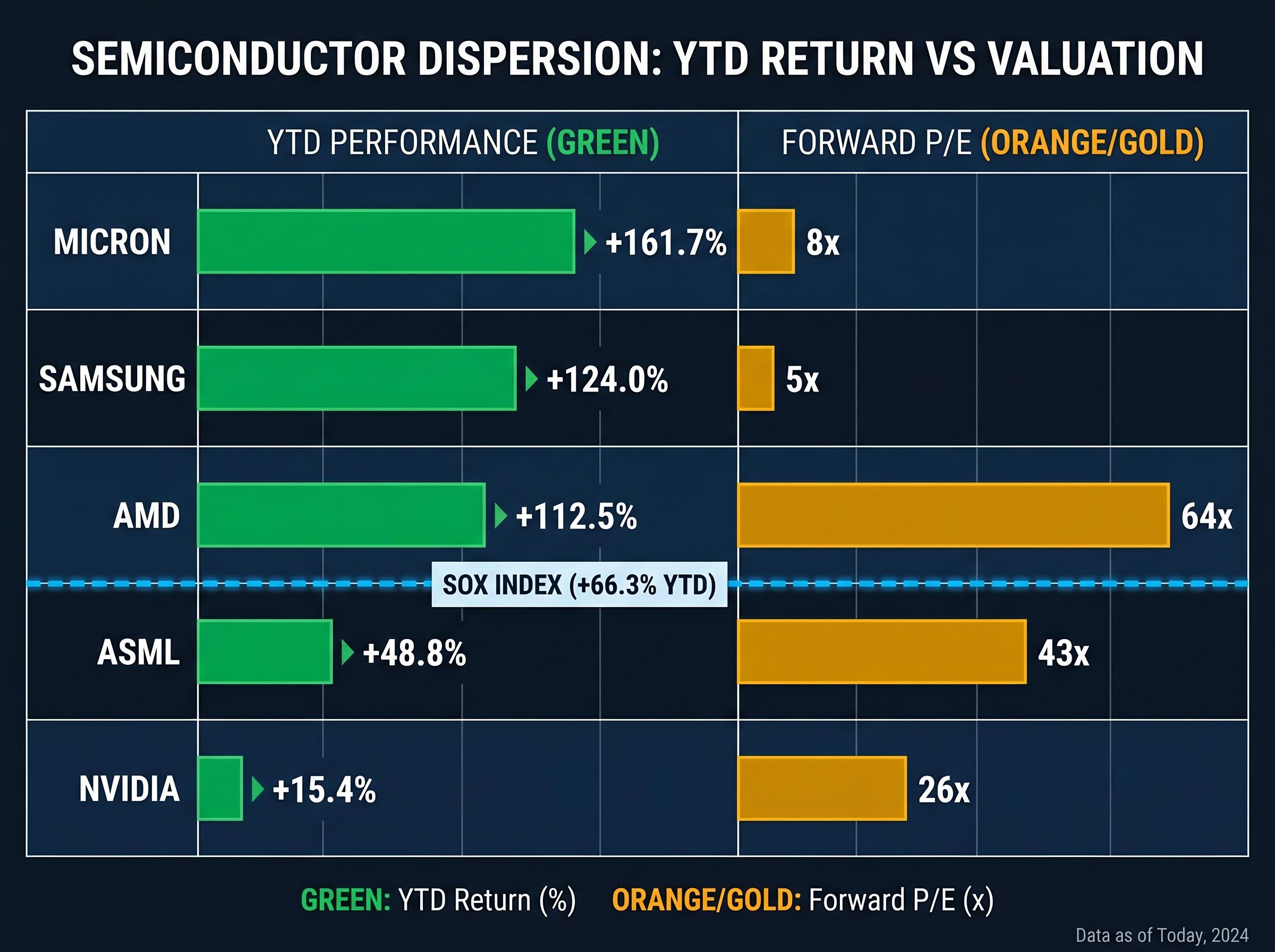

The SOX index’s 66.3% gain tells a sector story, but the individual stock returns tell a more specific one. The dispersion across names is wide, and it contains a genuine analytical insight about where the market believes incremental value is accumulating.

| Stock | YTD Performance | Forward P/E | Consensus Rating | Primary AI Demand Exposure |
|---|---|---|---|---|
| Nvidia | ~+15.4% | ~26x | Buy (81%) | Data centre GPUs, Blackwell architecture ramp |
| AMD | ~+112.5% | ~64x | Strong Buy (88%) | MI300X/MI325X inference accelerators |
| Micron | ~+161.7% | ~8x | Buy (75%) | HBM memory for AI chip stacks |
| ASML | ~+48.8% | ~43x | Hold (55%) | EUV lithography monopoly |
| Samsung | ~+124.0% | ~5x | Buy (70%) | Memory pricing recovery, AI-driven demand |

The most striking feature is not the top of the table but the relative positioning of Nvidia. At +15.4% year-to-date, it trails every other name listed, despite being the sector’s most prominent AI beneficiary. A higher entering valuation base partially explains this: when a stock already prices in dominance, the incremental return accrues to the names catching up.

Why AMD and Micron are outrunning Nvidia in 2026

AMD’s +112.5% gain reflects the MI300X and MI325X ramp, which has positioned the company as a credible second supplier for inference workloads at a moment when hyperscalers are actively seeking supply diversification. Micron’s +161.7% reflects something even more specific: high-bandwidth memory (HBM) has become a bottleneck component in AI chip stacks, and Micron has reported HBM revenue growing approximately 300% year-over-year, with $20 billion in projected 2026 AI-related sales.

The valuation gap between AMD at approximately 64x forward P/E and Micron at approximately 8x represents a fundamentally different risk proposition for what is, at its core, thematic exposure to the same AI infrastructure buildout.

Asian semiconductor stocks were trading at approximately 12x forward P/E as of early May 2026, roughly half the Nasdaq 100’s multiple, even though their earnings growth forecasts were running nearly three times faster than those of their US peers. That valuation gap has attracted record single-session foreign inflows into Korean equities, concentrated in Samsung and SK Hynix.

What AI agents actually are, and why it matters for semiconductor demand

AI agents are software systems that can autonomously plan, reason across multiple steps, use tools, and execute tasks without continuous human instruction. Unlike earlier AI applications such as chatbots or image generators, which respond to a single prompt and return a single output, agents chain multiple reasoning steps together, often calling external tools (databases, APIs, other agents) as they work.

This distinction matters for semiconductor demand because each step in an agent’s reasoning chain requires a separate inference computation. A single agent task might involve dozens of sequential inferences, each drawing on the three compute layers that underpin agent architecture:

- Inference processing: GPUs and accelerators performing real-time reasoning at each step of the agent’s task, requiring persistent rather than batch compute capacity

- High-bandwidth memory: HBM retaining the agent’s working context across reasoning steps, a requirement that scales with task complexity

- Networking silicon: Chips coordinating communication between agents and between agents and external tools, creating a networking demand layer that earlier AI workloads did not generate
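The compounding effect described above comes down to a simple multiplication: tasks per day times model calls per task. The sketch below is a toy model only. The task volume and the 24-step agent are hypothetical placeholders chosen to illustrate "dozens of sequential inferences", not measured figures, and the `Workload` class is invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """A toy description of an inference workload (all values hypothetical)."""
    name: str
    tasks_per_day: int        # user requests or agent tasks started per day
    inferences_per_task: int  # model calls needed to complete one task


def daily_inferences(w: Workload) -> int:
    """Total model calls per day: tasks multiplied by calls per task."""
    return w.tasks_per_day * w.inferences_per_task


# Same task volume, different number of reasoning steps per task.
chatbot = Workload("single-prompt chatbot", tasks_per_day=1_000_000, inferences_per_task=1)
agent = Workload("multi-step agent", tasks_per_day=1_000_000, inferences_per_task=24)

multiplier = daily_inferences(agent) / daily_inferences(chatbot)
print(f"{chatbot.name}: {daily_inferences(chatbot):,} inferences/day")
print(f"{agent.name}: {daily_inferences(agent):,} inferences/day")
print(f"Demand multiplier at equal task volume: {multiplier:.0f}x")
```

The point of the sketch is that compute demand scales with reasoning depth as well as user count, which is why the same number of users can imply a much larger inference footprint once they shift from prompts to agents.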

Gartner estimated in April 2026 that only approximately 20% of current AI agent pilots will be scalable to production by 2027, characterising the current environment as one in which enthusiasm is running ahead of demonstrated deployability.

Concrete deployment evidence does exist. Salesforce reported that its Agentforce platform added approximately $500 million in annual recurring revenue, while UiPath cited approximately 40% year-over-year revenue growth driven by RPA-based agent deployments. A McKinsey survey from April 2026 found approximately 15% of Fortune 500 companies reported active AI agent pilots. The question is whether these early signals represent the beginning of a broad adoption curve or isolated successes.

Capex commitments versus proven returns: where the sceptical case rests

The infrastructure spending is real. Whether it converts to revenue on the timeline the market is pricing in is the central unresolved question.

Morningstar analyst Dennis Li has articulated the bear case most precisely. His assessment, current as of 8 May 2026, centres on an 18-24 month capex-to-revenue lag: the period between when hyperscalers deploy infrastructure and when application-layer companies generate sufficient economic value to justify those ongoing outlays. Li argues the rally is pricing in monetisation that has not yet been demonstrated.

Two specific uncertainties underpin this thesis: the timeline for AI productivity gains to materialise at enterprise scale, and the degree to which productivity gains convert to corporate profitability rather than simply reducing headcount costs.

The McKinsey AI productivity paradox research published in April 2026 found that most enterprise AI investments are concentrated in pilots targeting incremental efficiency gains rather than structural business transformation, a pattern that maps directly onto the Solow Paradox dynamic where broad technology adoption precedes measurable aggregate productivity improvement by years.

The sceptical case draws on several institutional sources:

- Morningstar/Dennis Li: The 18-24 month capex-to-revenue lag means markets are pricing in monetisation that remains unproven at scale

- Gartner: Only 20% of current AI agent pilots will be scalable to production by 2027, suggesting deployment timelines may be optimistic

- Harvard Business Review: A 60% abandonment rate among enterprise agent pilots, with productivity gains not converting to measurable profit improvements until approximately 2028, drawing parallels to the historical productivity paradox

Paul Tudor Jones framed the AI bull market runway as one to two years on 6 May 2026, drawing an explicit parallel to 1999. In the same week, Arm Holdings’ royalty miss of $22 million sent the SOX down 2.86%, a live test of how thin the margin between structural demand and sentiment-driven correction has become.

Goldman Sachs has flagged a bear case in which the capex cycle peaks by Q4 2026, a scenario that would compress the window for monetisation to justify current valuations. For investors considering entry at AMD’s approximately 64x forward P/E or ASML’s approximately 43x, the embedded assumption is that revenue materialises within a relatively tight window.
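How tight that window is can be seen by projecting the 18-24 month lag forward from a deployment date. The sketch below is illustrative only: the deployment months are hypothetical examples, and `add_months` and `revenue_window` are helper names invented here, not part of any analyst's model.

```python
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date forward by whole months, returning the first day of that month."""
    total = d.year * 12 + (d.month - 1) + months
    return date(total // 12, total % 12 + 1, 1)


def revenue_window(deployed: date, lag_min: int = 18, lag_max: int = 24) -> tuple[date, date]:
    """Earliest and latest month in which deployed capex would be expected to show up as revenue."""
    return add_months(deployed, lag_min), add_months(deployed, lag_max)


# Hypothetical deployment months across 2026.
for deployed in (date(2026, 1, 1), date(2026, 5, 1), date(2026, 10, 1)):
    start, end = revenue_window(deployed)
    print(f"deployed {deployed:%b %Y} -> revenue expected {start:%b %Y} to {end:%b %Y}")
```

Under these assumptions, infrastructure deployed in May 2026 would not be expected to produce returns until between November 2027 and May 2028, and spending made in October 2026 would not be tested until April to October 2028, well after the capex peak in Goldman's bear case.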

Past performance does not guarantee future results. Financial projections are subject to market conditions and various risk factors.

Geopolitical fault lines: how US-China tensions are reshaping sector risk

Two regulatory developments represent the most concrete geopolitical risks to the semiconductor sector’s rally. The MATCH Act, which completed House markup on approximately 22 April 2026, proposes expanded restrictions on advanced semiconductor exports to China. Citi analysts estimated on 9 May 2026 that passage through the Senate could introduce 10-15% downside to affected stocks. Separately, China’s Order No. 834, effective 7 April 2026, mandates 50% local equipment sourcing, creating supply chain disruption risks for companies with significant Chinese manufacturing exposure.

| Stock | China Revenue Share | Key Regulatory Risk | Analyst Downside Estimate |
|---|---|---|---|
| Nvidia | ~15% | H200 tariffs (~25%) and volume caps (~50%) | Offset by US hyperscaler demand |
| ASML | ~29% | Potential DUV lithography export ban expansion | UBS downgrade to Sell (3 May 2026) |
| Samsung | ~20-30% | Order No. 834 fab sourcing requirements | Brief 2-3% dip on MATCH Act news, recovered |
| Micron | ~20-30% | Order No. 834 fab sourcing requirements | Brief 2-3% dip on MATCH Act news, recovered |

The risk is concentrated, not uniform. ASML’s approximately 29% China revenue exposure creates a materially different risk profile than Nvidia’s approximately 15%. UBS specifically cited this exposure when downgrading ASML to Sell on 3 May 2026, even as the stock had delivered +48.8% year-to-date returns.
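To put a 10-15% downside in context against a large year-to-date gain, note that the two percentages compound rather than subtract. The sketch below applies Citi's estimated range to ASML's quoted YTD return. Applying the sector-level range to ASML specifically is an assumption made here for illustration, and `ytd_after_drawdown` is a hypothetical helper.

```python
def ytd_after_drawdown(ytd_gain_pct: float, drawdown_pct: float) -> float:
    """YTD return (in %) after a further decline of drawdown_pct from the current price."""
    growth = 1 + ytd_gain_pct / 100      # price today relative to the start of the year
    remaining = 1 - drawdown_pct / 100   # fraction of today's price left after the decline
    return (growth * remaining - 1) * 100


ASML_YTD_PCT = 48.8  # approximate YTD gain quoted in the article

for downside in (10.0, 15.0):  # the Citi range for affected stocks
    result = ytd_after_drawdown(ASML_YTD_PCT, downside)
    print(f"{downside:.0f}% decline -> YTD return becomes {result:+.1f}%")
```

On these numbers a 10% decline would leave the stock up about 33.9% for the year and a 15% decline about 26.5%, which is a larger give-back in percentage points than the headline downside figure suggests.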

What would change the market’s current risk calculus

Two scenarios would most materially shift sentiment: MATCH Act passage through the Senate, and Chinese retaliatory measures expanding beyond Order No. 834. The US-China leadership summit anticipated in the week of 12 May 2026 represents a near-term event that could either reduce or amplify these risks. The market’s current posture, reflected in the SOX index’s 66.3% year-to-date gain despite these tensions, prices in a view that US hyperscaler demand is sufficient to offset China revenue headwinds. That assumption remains untested against a genuine escalation.

Separating signal from noise: a framework for evaluating the rally at current valuations

The bull case rests on hyperscaler capex commitments that are real, directionally consistent, and explicitly tied to agent-driven inference demand. Bank of America characterised AI agent deployment on 10 May 2026 as creating a “new moat” for sector leaders, while ARK Invest projected the semiconductor sector reaching $3 trillion in total market capitalisation. The bear case rests on the monetisation gap: the 18-24 month lag between infrastructure deployment and revenue generation, a 60% pilot abandonment rate, and the possibility that the capex cycle peaks before application-layer returns materialise.

- Bull case assumes: Hyperscaler capex sustains or accelerates through H2 2026; enterprise agent deployments convert from pilots to production at scale; HBM demand continues to outstrip supply; geopolitical risks remain contained

- Bear case assumes: Monetisation lags force hyperscalers to moderate capex by Q4 2026; pilot abandonment rates hold at 60%; MATCH Act passage or Chinese retaliation compresses sector multiples by 10-15%

The valuation dispersion across the sector offers different entry points for different risk tolerances. AMD at approximately 64x forward P/E prices in aggressive growth execution; Micron at approximately 8x prices in cyclicality risk despite strong HBM momentum. The S&P 500 forward P/E of approximately 20.8x provides the broader market benchmark.
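One way to compare these multiples on a common scale is to invert them into a forward earnings yield and express each as a ratio to the S&P 500 benchmark. The sketch below uses only the approximate forward P/E figures quoted in this article. It says nothing about growth rates or earnings quality, so it is a framing aid rather than a valuation model.

```python
# Approximate forward P/E multiples quoted in the article.
FORWARD_PE = {
    "AMD": 64.0,
    "ASML": 43.0,
    "Nvidia": 26.0,
    "Micron": 8.0,
    "Samsung": 5.0,
}
SP500_FORWARD_PE = 20.8


def earnings_yield_pct(pe: float) -> float:
    """Forward earnings yield in percent: the inverse of the P/E multiple."""
    return 100.0 / pe


def relative_multiple(pe: float, benchmark_pe: float) -> float:
    """How many times the benchmark multiple a stock trades at."""
    return pe / benchmark_pe


# Print from the most expensive multiple to the cheapest.
for name, pe in sorted(FORWARD_PE.items(), key=lambda kv: kv[1], reverse=True):
    print(
        f"{name:8s} P/E {pe:5.1f}x  "
        f"yield {earnings_yield_pct(pe):5.2f}%  "
        f"vs S&P 500 {relative_multiple(pe, SP500_FORWARD_PE):.2f}x"
    )
```

On this framing AMD trades at roughly three times the market multiple with an earnings yield near 1.6%, while Micron trades at well under half the market multiple with a yield of 12.5%.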

Four indicators are worth monitoring to evaluate whether the demand thesis is converting on schedule:

- Hyperscaler capex trajectory into H2 2026: Any moderation in guidance from Microsoft, Google, Amazon, or Meta would be the earliest signal of a capex cycle peak

- Enterprise agent pilot-to-production conversion rates: The Gartner 20% scalability estimate is the current baseline; movement above or below it recalibrates the monetisation timeline

- HBM pricing trends: As a bottleneck component, HBM pricing is a real-time demand signal; sustained premiums support the bull case, while softening suggests demand is peaking

- MATCH Act legislative progress: Senate passage would introduce the most concrete downside catalyst for names with significant China exposure

These statements are speculative and subject to change based on market developments and company performance.

Infrastructure is real, but the profit proof is still pending

The semiconductor sector’s rally in 2026 is built on infrastructure commitments that are large, specific, and directionally consistent across the world’s largest technology companies. That foundation is genuine.

What remains unproven is the second half of the thesis: that application-layer monetisation will arrive on a timeline that justifies valuations already pricing it in. The gap between committed capex and demonstrated returns is the single most important variable for the sector over the next 12-18 months.

If agent deployment scales as projected, the sector’s structural position in the AI compute stack makes current valuations defensible. If monetisation lags, the capex cycle peak that Goldman Sachs has flagged for Q4 2026 creates a meaningful correction risk. The asymmetry cuts both ways.

Investors positioned in the sector, or considering entry, would benefit from defining their monitoring criteria now rather than after the market has already repriced.

The 18-24 month capex-to-revenue lag identified by Morningstar remains the most important unresolved question facing semiconductor stocks at current valuations.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions.

Frequently Asked Questions

What is the Phlx Semiconductor Index (SOX) and why is it relevant to AI investing?

The Phlx Semiconductor Index (SOX) tracks the performance of major semiconductor companies and serves as a benchmark for the sector. Its 66.3% year-to-date gain through May 2026 reflects the market's pricing of AI infrastructure demand as a structural growth driver.

Why are AI agents driving higher semiconductor demand than earlier AI applications?

AI agents chain multiple reasoning steps together, requiring a separate inference computation at each step, which creates persistent and compounding compute demand. Earlier applications like chatbots returned a single output per prompt, whereas agents can require dozens of sequential inferences per task.

What is the capex-to-revenue lag and why does it matter for semiconductor stocks?

The capex-to-revenue lag refers to the 18-24 month gap between when hyperscalers deploy AI infrastructure and when application-layer companies generate sufficient economic returns to justify those outlays. Morningstar analyst Dennis Li has argued this lag means current semiconductor valuations are pricing in monetisation that has not yet been demonstrated at scale.

Which semiconductor stocks have performed best year-to-date in 2026?

Micron has led with approximately 161.7% gains, followed by Samsung at approximately 124.0% and AMD at approximately 112.5%, while Nvidia has lagged the group at approximately 15.4% despite being the sector's most prominent AI beneficiary.

What geopolitical risks could affect semiconductor stocks in 2026?

The MATCH Act, which proposes expanded export restrictions on advanced semiconductors to China, could introduce 10-15% downside to affected stocks if it passes the Senate, according to Citi analysts. China's Order No. 834 also mandates 50% local equipment sourcing, creating supply chain risks for companies with significant Chinese manufacturing exposure.