Why Big Tech AI Spending Is Crushing Earnings Despite Revenue Growth

Key Takeaways

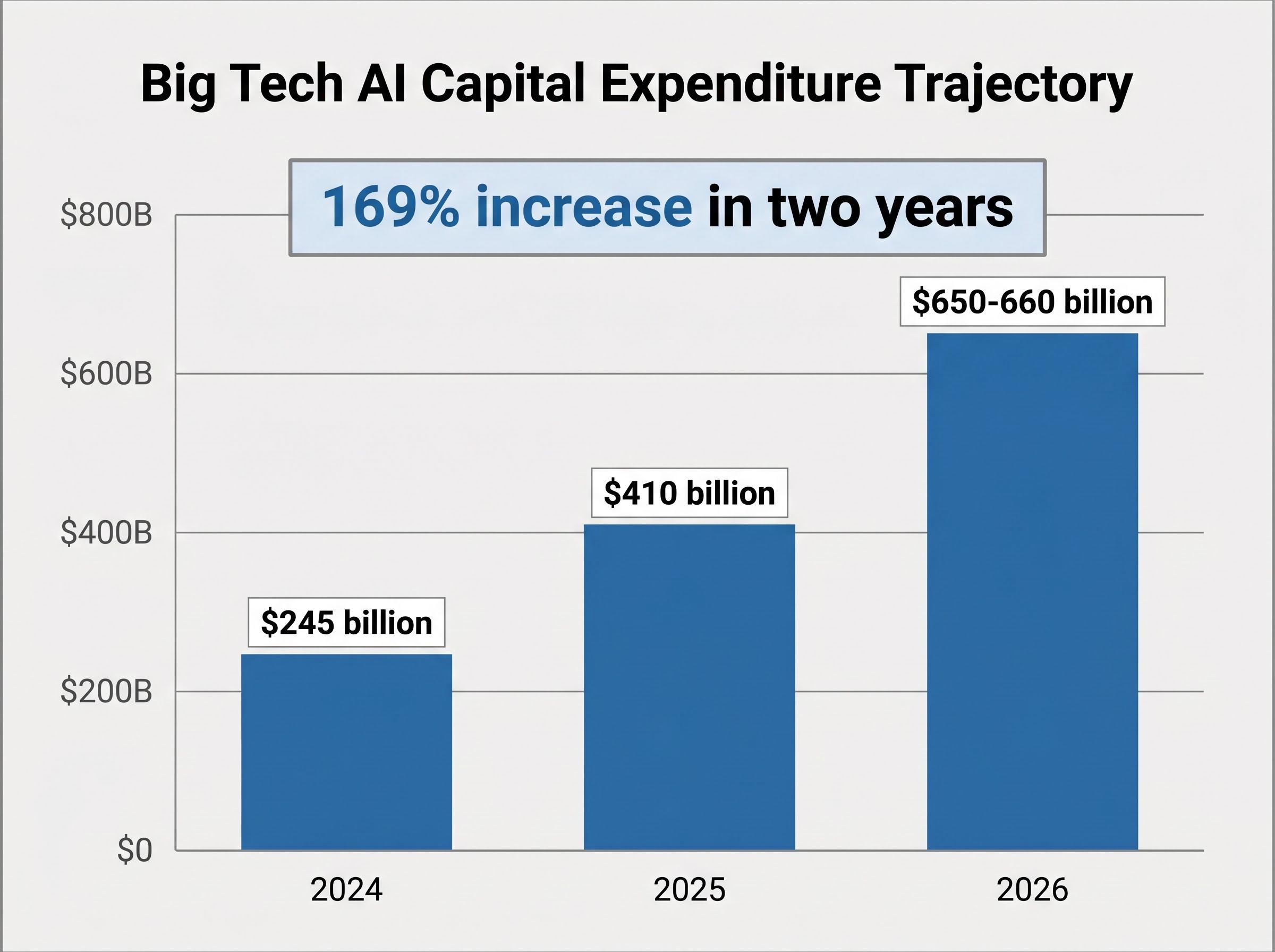

- Amazon, Alphabet, Meta, and Microsoft are collectively spending approximately $650-660 billion on AI infrastructure in 2026, a 169% increase from 2024 levels, creating a front-loaded investment cycle that is compressing near-term earnings across all four companies simultaneously.

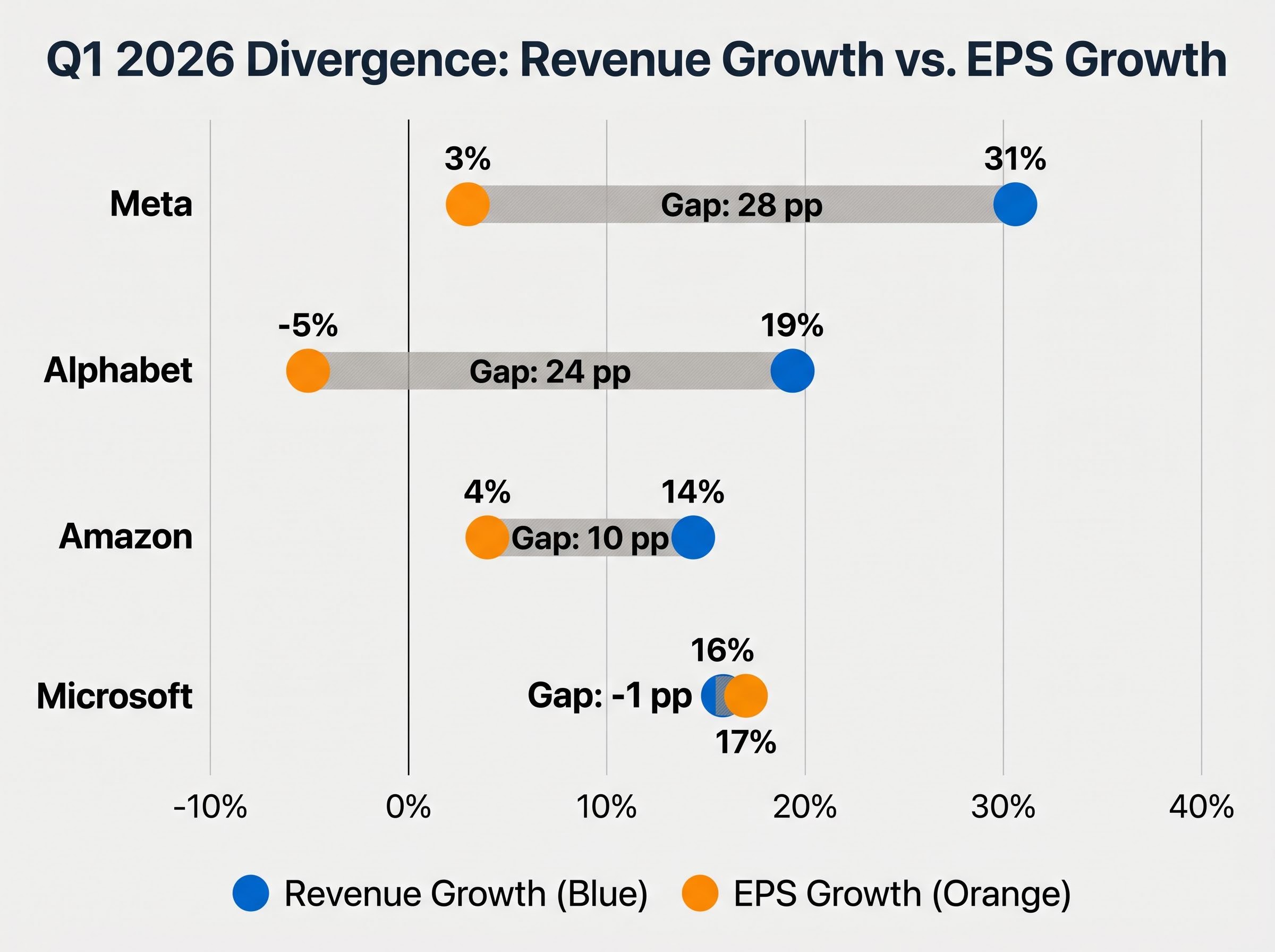

- Meta is projected to grow Q1 2026 revenue by 31% but EPS by only 3%, while Alphabet faces an estimated 5% EPS decline despite 19% revenue growth, illustrating how accelerated depreciation and capex-to-revenue timing gaps suppress reported profits.

- Microsoft stands as the outlier with EPS growth roughly matching revenue growth at approximately 17% and 16% respectively, providing evidence that monetisation running alongside capex can absorb the earnings drag.

- Management teams across the Big Four frame the spending as strategically non-negotiable, with Amazon CEO Andy Jassy stating that customer commitments already cover nearly the entirety of the planned $200 billion 2026 spend and citing an AI chip division with a $50 billion annualised revenue run-rate.

- Investors should prioritise cloud segment growth rates, capacity utilisation commentary, and free cash flow guidance over headline EPS when evaluating these reports, as the ROI inflection is broadly expected in the late 2026 to 2027 period when inference workloads overtake training as the dominant compute demand.

Four of America’s largest technology companies are preparing to report earnings on the same Wednesday, 29 April 2026, and one story threads through most of them: revenue is growing at double-digit rates while earnings-per-share growth is far weaker, and in one case negative. Meta is projected to grow revenue 31% year over year in Q1 2026, yet EPS is expected to grow just 3%. Amazon is forecast at 14% revenue growth and only 4% EPS growth. The source of that divergence is not weak business performance. It is a deliberate, record-breaking wave of AI capital expenditure weighing on near-term earnings at all four companies simultaneously, with Microsoft the only one currently absorbing the drag.

What follows explains exactly how that compression works, why management teams consider the spending strategically non-negotiable, what the timeline for return on investment looks like, and how investors should interpret the revenue-versus-earnings gap when evaluating Big Tech valuations in 2026.

The $650 billion question: what Big Tech is spending on AI in 2026

The combined AI infrastructure budget for Amazon, Alphabet, Meta, and Microsoft in 2026 is approximately $650-660 billion. That figure is up from roughly $410 billion in 2025 and $245 billion in 2024, an acceleration that has nearly tripled the annual outlay in just two years.

The trajectory in context: Big Tech AI capital expenditure has moved from $245 billion (2024) to $410 billion (2025) to $650-660 billion (2026), a 169% increase in two years.
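The percentages quoted above follow directly from the three dollar figures. A minimal Python sketch of that arithmetic, using only the numbers in this section (the variable and function names are illustrative):

```python
# Combined Big Four AI capex in USD billions, as quoted in this article.
CAPEX_2024 = 245
CAPEX_2025 = 410
CAPEX_2026_RANGE = (650, 660)  # guidance range, low and high


def pct_increase(old: float, new: float) -> float:
    """Return the percentage increase from old to new."""
    return (new - old) / old * 100


growth_2025 = pct_increase(CAPEX_2024, CAPEX_2025)
growth_2026 = [pct_increase(CAPEX_2025, v) for v in CAPEX_2026_RANGE]
two_year_growth = [pct_increase(CAPEX_2024, v) for v in CAPEX_2026_RANGE]
two_year_multiple = [v / CAPEX_2024 for v in CAPEX_2026_RANGE]

print(f"2024 to 2025: +{growth_2025:.0f}%")
print(f"2025 to 2026: +{growth_2026[0]:.0f}% to +{growth_2026[1]:.0f}%")
print(f"2024 to 2026: +{two_year_growth[0]:.0f}% to +{two_year_growth[1]:.0f}%")
print(f"Two-year multiple: {two_year_multiple[0]:.2f}x to {two_year_multiple[1]:.2f}x")
```

On these inputs the 169% figure corresponds to the top of the 2026 range ($660 billion); the bottom of the range works out to roughly 165%.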

Each company’s commitment reflects a distinct set of priorities.

| Company | 2026 Capex Guidance | Year-over-Year Change | Primary Use of Funds |
|---|---|---|---|
| Amazon | $200 billion | ~50% increase; ~$50B above prior estimates | AWS AI workloads, data centre expansion |
| Alphabet | $175-185 billion | Nearly double 2025 spend | DeepMind models, Google Cloud infrastructure |
| Microsoft | ~$145 billion (run-rate) | Continued acceleration | Azure, OpenAI partnership, Copilot expansion |
| Meta | $115-135 billion | Significant increase from 2025 | Llama models, AI-powered ad infrastructure |
| Big Four Combined | ~$650-660 billion | ~60% increase from 2025 | n/a |

For comparative scale, Apple plans approximately $14 billion in AI-related capex for 2026, relying instead on partner compute infrastructure. Chinese AI firms, led by Alibaba and others, spent an estimated $57 billion in 2025. Set against that 2025 figure, the US Big Four’s 2026 budgets exceed China’s AI infrastructure spend more than tenfold.
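As a rough cross-check of the table and the China comparison, the sketch below sums the company-level guidance ranges and divides by the $57 billion estimate. It treats Microsoft’s run-rate as a point estimate and, like the article, compares 2026 US guidance with 2025 Chinese spending; the names are illustrative.

```python
# 2026 capex guidance in USD billions as (low, high), taken from the table above.
guidance = {
    "Amazon": (200, 200),
    "Alphabet": (175, 185),
    "Microsoft": (145, 145),  # run-rate figure, treated here as a point estimate
    "Meta": (115, 135),
}
CHINA_2025_SPEND = 57  # estimated Chinese AI infrastructure spend in 2025

low_total = sum(low for low, _ in guidance.values())
high_total = sum(high for _, high in guidance.values())
ratio_low = low_total / CHINA_2025_SPEND
ratio_high = high_total / CHINA_2025_SPEND

print(f"Sum of company guidance: ${low_total}-{high_total} billion")
print(f"Multiple of China's 2025 spend: {ratio_low:.1f}x to {ratio_high:.1f}x")
```

The summed range ($635-665 billion) brackets the combined figure of roughly $650-660 billion, and even its low end is more than eleven times the Chinese estimate.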

How AI infrastructure spending actually works: data centres, chips, and power

Where does a single capex dollar actually go? The hundreds of billions are concentrated across five major categories:

- Data centres: Over $200 billion in commitments across the hyperscalers for new and expanded facilities. Amazon’s recent Louisiana data centre investments are one named example.

- Nvidia GPUs: High-end graphics processing units, including Nvidia’s Rubin platform architecture, remain the primary hardware for frontier AI model training.

- Custom silicon: Proprietary chips designed to lower long-run inference costs (covered below).

- Cooling and power infrastructure: Advanced liquid cooling systems and dedicated power supply required for high-density AI compute clusters.

- Power supply build-out: Securing the electricity these facilities consume, which is creating measurable strain on the US grid.

That last category carries real-world consequences beyond balance sheets. AI data centres are driving record US power consumption forecasts through 2027-2030, and electrical equipment shortages, particularly transformers, are emerging as a near-term constraint on construction timelines. Once a campus is under construction and power contracts are signed, the cost is committed regardless of whether AI revenue materialises on schedule.

Lawrence Berkeley National Laboratory forecasts project US data centre electricity demand growing from 176 TWh in 2023 to between 325 and 580 TWh by 2028. The width of that range reflects uncertainty about how quickly AI workloads will scale and how much grid infrastructure will need to be built to support them.
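To put that range in annual terms, the implied compound growth rate can be worked out from the endpoints. This is a back-of-the-envelope sketch that assumes smooth compounding between 2023 and 2028, which the forecast itself does not claim.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate taking start to end over the given years."""
    return (end / start) ** (1 / years) - 1


BASE_TWH = 176                  # US data centre consumption in 2023
FORECAST_2028_TWH = (325, 580)  # low and high ends of the 2028 range
YEARS = 2028 - 2023

low_cagr, high_cagr = (cagr(BASE_TWH, twh, YEARS) for twh in FORECAST_2028_TWH)
print(f"Implied annual growth: {low_cagr:.1%} to {high_cagr:.1%}")
```

Under that assumption the range implies growth of roughly 13% a year at the low end and roughly 27% a year at the high end.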

Why custom silicon matters to the ROI story

All four companies are running a dual-track chip strategy: procuring Nvidia GPUs for the most demanding training workloads while developing proprietary accelerators to reduce per-unit inference costs over time. Google’s Tensor Processing Units (TPUs), backed by a five-year chip agreement with Broadcom, and Microsoft’s Maia chips are the most prominent examples.

This matters because inference, the process of running trained AI models to produce outputs, is expected to overtake training as the dominant workload by late 2026 into 2027. Custom silicon designed for inference is the mechanism through which margins could eventually recover.

The earnings squeeze explained: how capex turns into EPS pressure

The mechanical chain from a capital expenditure decision to a suppressed earnings-per-share figure runs through three linked stages.

First, accelerated depreciation. Data centre buildouts and server infrastructure generate large depreciation charges that flow directly through the income statement. Even when cloud and AI revenues are growing strongly, these charges create an earnings drag that compresses reported profits.

Second, the spend-to-revenue timing gap. Infrastructure spending precedes monetisation. The 2026 cycle is characterised by analysts and management alike as a front-loaded investment year where capex runs ahead of expected revenue yield.

Third, debt financing. Some of this 2026 capex is debt-funded, adding an interest burden on top of the depreciation drag.
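The three stages can be seen in a toy income statement. Every number below is hypothetical and chosen only to show the mechanism. The sketch also uses straight-line depreciation over a five-year life for simplicity, which is not any company’s actual accounting policy.

```python
def straight_line_depreciation(capex_by_year, useful_life):
    """Annual depreciation when each year's capex is spread evenly over
    useful_life years, starting in the year the money is spent."""
    n_years = len(capex_by_year)
    expense = [0.0] * n_years
    for spent_in, amount in enumerate(capex_by_year):
        for year in range(spent_in, min(spent_in + useful_life, n_years)):
            expense[year] += amount / useful_life
    return expense


# Hypothetical inputs (not company data).
revenue = [100.0, 120.0]   # revenue grows 20%
capex = [30.0, 60.0]       # capex doubles, running ahead of revenue (stage 2)
CASH_COST_RATIO = 0.70     # cash operating costs as a share of revenue
DEBT_FUNDED_SHARE = 0.25   # share of capex financed with debt
INTEREST_RATE = 0.05
USEFUL_LIFE = 5

# Stage 1: depreciation on cumulative capex hits the income statement.
depreciation = straight_line_depreciation(capex, USEFUL_LIFE)

pretax_income = []
debt = 0.0
for rev, spend, dep in zip(revenue, capex, depreciation):
    debt += spend * DEBT_FUNDED_SHARE  # stage 3: borrowing accumulates
    interest = debt * INTEREST_RATE
    cash_costs = rev * CASH_COST_RATIO
    pretax_income.append(rev - cash_costs - dep - interest)

revenue_growth = revenue[1] / revenue[0] - 1
income_growth = pretax_income[1] / pretax_income[0] - 1
print(f"Revenue growth: {revenue_growth:+.1%}")
print(f"Pre-tax income growth: {income_growth:+.1%}")
```

In this toy case revenue rises 20% while pre-tax income falls, because the second year carries depreciation on both years’ capex plus interest on a larger debt balance.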

The result is a striking divergence between top-line revenue growth and bottom-line earnings growth.

| Company | Q1 2026 Revenue Growth (Consensus) | Q1 2026 EPS Growth (Consensus) | Gap (Percentage Points) |
|---|---|---|---|
| Meta | 31% | 3% | 28 pp |
| Alphabet | 19% | ~-5% | ~24 pp |
| Amazon | 14% | 4% | 10 pp |
| Microsoft | 16% | 17% | -1 pp (outlier) |

Alphabet is projected to report an EPS decline of approximately 5% despite 19% revenue growth in Q1 2026, one of the starkest illustrations of how depreciation from front-loaded AI infrastructure investment can suppress earnings even during a period of strong business momentum.

Microsoft stands as the outlier. Its 17% projected EPS growth roughly matches its 16% revenue growth, providing evidence that when monetisation is running alongside capex (through products like Copilot and Azure AI), the drag can be absorbed.
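The gap column is simply revenue growth minus EPS growth, in percentage points. A short sketch that recomputes it from the consensus figures in the table (Alphabet’s EPS figure is approximate in the source, so its gap is too):

```python
# Q1 2026 consensus growth in percent as (revenue, EPS), from the table above.
consensus = {
    "Meta": (31, 3),
    "Alphabet": (19, -5),  # EPS decline is approximate
    "Amazon": (14, 4),
    "Microsoft": (16, 17),
}

# Gap in percentage points: positive means EPS growth lags revenue growth.
gaps = {name: rev - eps for name, (rev, eps) in consensus.items()}

# Rank from the widest gap to the narrowest.
ranked = sorted(gaps.items(), key=lambda item: item[1], reverse=True)
for name, gap in ranked:
    print(f"{name:<10} {gap:+d} pp")
```

A negative gap, as in Microsoft’s case, means EPS is growing slightly faster than revenue.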

Why these companies say the spending is non-negotiable

Management teams across all four companies frame AI capex not simply as a growth bet but as a defensive necessity. The argument is that the window for establishing AI infrastructure advantage is narrow, and under-investing now risks ceding that advantage permanently.

Amazon CEO Andy Jassy offered the most specific counter-narrative to investor concern. Following Amazon’s 5 February 2026 earnings call, where the $200 billion capex figure was disclosed, Jassy stated that customer commitments already cover nearly the entirety of the planned 2026 spend. He pointed to Amazon’s AI chip division, which reportedly has an annualised revenue run-rate of $50 billion, growing at over 100% per year.

The US-versus-China competitive framing reinforces the argument. In 2025, Chinese AI firms invested an estimated $57 billion in infrastructure, while the US Big Four are on course to deploy $650-660 billion across 2026. US companies view maintaining that scale gap as strategically non-negotiable, particularly given chip export controls that limit Chinese access to leading-edge accelerators.

A RAND Corporation analysis of China’s AI computing infrastructure buildout and US export controls describes the structural asymmetry behind that gap: Beijing’s industrial policy targets 300 EFLOPS of national computing capacity, while US chip export restrictions constrain Chinese developers’ access to leading-edge accelerators. That asymmetry is what makes the difference in scale between the two nations’ AI investments strategically significant rather than merely numerical.

Where analyst scepticism is focused

Scepticism remains, however, and a number of analysts have identified three recurring risk factors:

- Adoption pace lag: AI uptake by enterprise customers may not accelerate fast enough to justify the infrastructure built ahead of demand.

- Rising compute costs: The cost of training and running frontier models continues to increase, compressing returns even as revenue grows.

- Margin compression: Capex growing at 50% or more year over year risks outpacing cloud revenue growth, squeezing segment margins in AWS, Google Cloud, and Azure.

February’s earnings season captured this tension plainly. Amazon shares shed roughly 11% in the session after its 5 February 2026 report, even though AWS posted 24% revenue growth. The sell-off suggested investors were prioritising near-term capital discipline and earnings predictability over confidence in long-cycle infrastructure commitments.

Microsoft offers a useful point of comparison. Reported figures indicate its AI revenue expansion is now running ahead of capex growth, illustrating what a credible path to ROI looks like once monetisation is genuinely under way.

When does the investment pay off? The ROI timeline investors should understand

Management across the Big Four broadly expects the return on investment to accelerate in the late 2026 to 2027 timeframe. The structural hinge is the shift from training-dominant workloads to inference-dominant workloads. Inference revenue, generated from API calls, embedded AI features, and enterprise deployments, scales more linearly with usage than training, offering a clearer path to monetisation.

- 2026 (current phase): Front-loaded investment year. Capex runs ahead of revenue. EPS is compressed by depreciation and interest charges. Management and analysts characterise this as an investment and validation year.

- Late 2026 to early 2027: Inference workloads begin to overtake training as the dominant compute demand. Early monetisation signals emerge from products like Copilot, AI-enhanced advertising, and cloud contract expansion.

- 2027 and beyond: Depreciation charges from current buildouts begin to be absorbed. Margin recovery becomes possible if cloud and AI revenue growth sustains at forecast rates.

Executives across the Big Four have described 2026 as a validation phase for AI infrastructure, a year in which the investment thesis needs to produce early evidence of progress, even as the full financial payback lies further out.

Societe Generale’s analysis of hyperscaler free cash flow trajectory through late 2026 adds a specific structural warning to the ROI timeline: free cash flow across the Big Four could turn negative before recovering in Q1 2027, a sequence that implies the depreciation and interest drag visible in Q1 EPS figures is likely to intensify before it abates.

The most concrete near-term evidence that the thesis can work comes from Microsoft’s Copilot. According to Bloomberg reporting, Copilot standalone product sales have significantly outperformed internal targets, with Office average selling price acceleration supporting near-term monetisation.

Alphabet’s 14% stake in Anthropic, with a reported potential additional $40 billion investment and a possible 2026 IPO, illustrates how AI infrastructure spending creates optionality beyond direct revenue. Not all returns will appear on the income statement.

How to read the revenue-versus-earnings gap as an investor

For capital expenditure-heavy growth companies in the middle of an investment cycle, headline EPS is not the most informative metric. Free cash flow trajectory and segment revenue growth rates, specifically AWS, Google Cloud, and Azure, are stronger signals of underlying business health during this phase.

Four specific signals are worth monitoring during the 29 April earnings calls:

- Cloud segment revenue growth rate: Is AWS, Google Cloud, or Azure accelerating or decelerating relative to Q4 2025?

- Management commentary on capacity utilisation: Are the data centres being built filling up with paying customers?

- Free cash flow guidance: Is management maintaining or revising its full-year free cash flow outlook?

- Any capex revision language: Has any company adjusted its February 2026 capex guidance, in either direction?

No earnings pre-announcements or guidance updates have emerged between February 2026 and the 29 April reporting date. Meta’s 10% workforce reduction, approximately 8,000 positions, offers a margin management signal that may complement capex commentary.

The broader market stakes on 29 April

These four companies together account for over 18% of the S&P 500 by index weight. Their results therefore act as a de facto catalyst for the whole earnings season, with implications well beyond individual stock performance.

The concentration of four mega-cap names on a single Wednesday has prompted an explicit warning from JPMorgan: options markets are pricing implied moves across the Magnificent Seven stocks at above-average levels. That reflects not just earnings uncertainty but also geopolitical risk, with Iran-related oil price pressure amplifying volatility premiums heading into results day.

Equity markets have experienced turbulence in 2026 from software and AI sector rotation, the Iran conflict, and elevated oil prices. That backdrop makes the 29 April reports particularly sensitive for index-level positioning. The tariff environment and enterprise IT budget trends are the primary external variables that could cause results to diverge from consensus, independent of AI capex dynamics.

Where this leaves investors heading into 29 April

The revenue-versus-earnings divergence visible in Q1 2026 Big Tech previews is not a sign of business deterioration. It is the predictable accounting consequence of four companies simultaneously deploying record capital expenditure ahead of the monetisation cycle they are building toward.

Whether that investment pays off depends on how quickly inference workloads scale, how effectively each company converts AI infrastructure into billable products (Copilot is the clearest proof point so far), and whether cloud revenue growth absorbs the depreciation drag before investor patience thins. The 29 April earnings calls will be the first major opportunity in 2026 to assess whether the timeline is holding.

Investors following these reports should focus less on the headline EPS number and more on the signals that matter at this stage of the capex cycle: cloud segment growth rates, management commentary on capacity utilisation, and any revision to full-year capex guidance.

For investors trying to translate the ROI timeline into a concrete re-entry or positioning decision, our full explainer on SocGen’s hyperscaler free cash flow timing framework details why Societe Generale’s Manish Kabra identifies a positive free cash flow inflection and moderation in capex-to-revenue ratios in Q1 2027 as the specific fundamental signals that would confirm the investment cycle has turned, along with his interim recommendation to reduce technology concentration through the equal-weighted S&P 500.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions. Past performance does not guarantee future results. Financial projections are subject to market conditions and various risk factors.

Frequently Asked Questions

What is big tech AI spending and why is it so high in 2026?

Big tech AI spending refers to the capital expenditure Amazon, Alphabet, Meta, and Microsoft are deploying on data centres, AI chips, and power infrastructure to build out AI capabilities. In 2026, their combined budget is approximately $650-660 billion, up from $410 billion in 2025, driven by competitive pressure to establish AI infrastructure advantage before the monetisation window narrows.

Why is EPS growth so much lower than revenue growth for Meta and Alphabet in Q1 2026?

The gap is caused by accelerated depreciation on data centre buildouts, the timing delay between infrastructure spending and revenue generation, and in some cases debt-funded capex adding an interest burden. Meta is projected to grow revenue 31% but EPS only 3%, while Alphabet is expected to see EPS decline roughly 5% despite 19% revenue growth.

When will Big Tech AI infrastructure investment start paying off for investors?

Management teams across the Big Four broadly expect return on investment to accelerate in the late 2026 to 2027 timeframe, as inference workloads overtake training and depreciation from current buildouts begins to be absorbed. Microsoft's Copilot is cited as the clearest early proof point that monetisation can run alongside capex.

What should investors watch for in the 29 April 2026 Big Tech earnings calls?

Investors should focus on cloud segment revenue growth rates for AWS, Google Cloud, and Azure, management commentary on data centre capacity utilisation, free cash flow guidance, and any revision to the capex figures disclosed in February 2026. Headline EPS is less informative than these signals during a front-loaded investment cycle.

How does Big Tech AI capex compare to China's AI infrastructure spending?

The US Big Four are on course to deploy $650-660 billion on AI infrastructure in 2026, while Chinese AI firms invested an estimated $57 billion in 2025. US companies view maintaining that scale gap as strategically non-negotiable, particularly given chip export controls that limit Chinese access to leading-edge accelerators.