How Broadcom’s $100B OpenAI Deal Reshapes AI Infrastructure

Key Takeaways

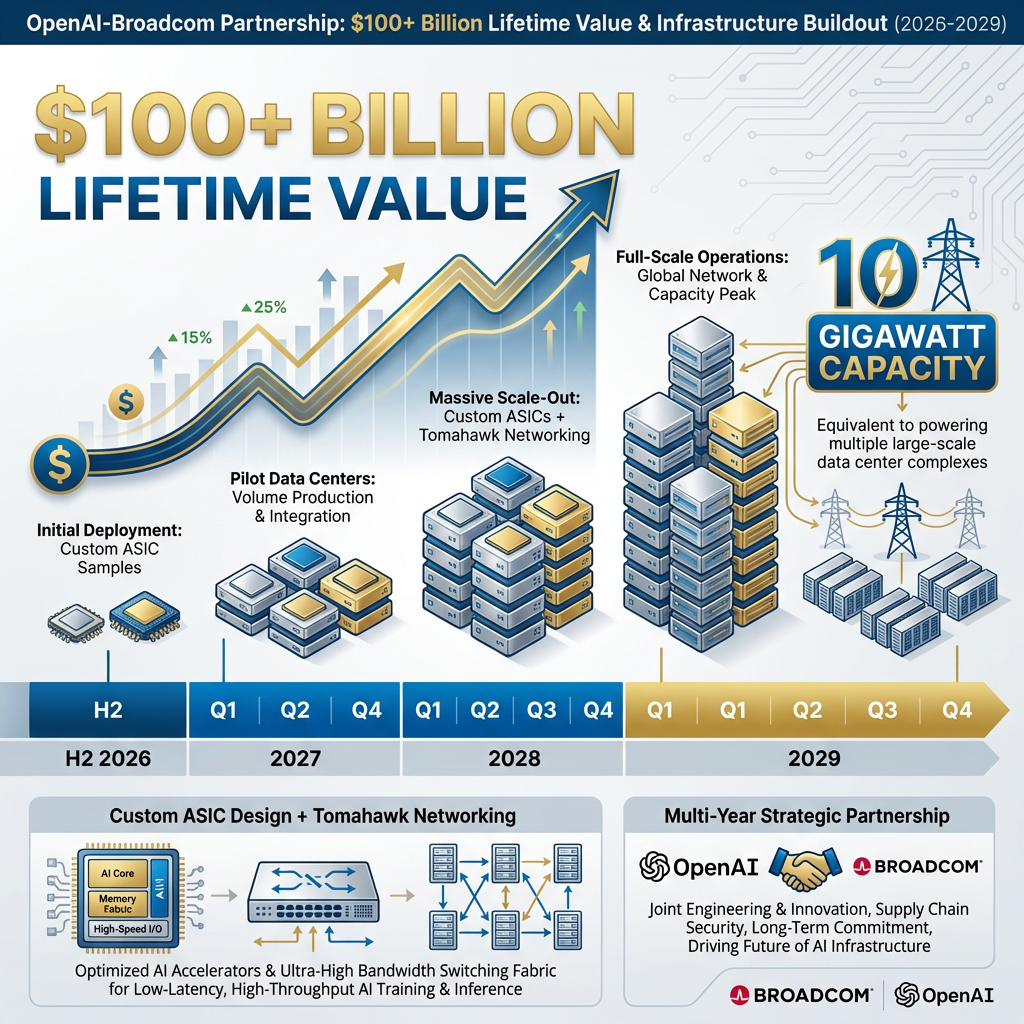

- Broadcom secured the largest custom AI chip deal in history with OpenAI, a 10-gigawatt partnership projected to generate over $100 billion in lifetime value with deliveries spanning 2026-2029.

- Broadcom reported $8.4 billion in AI revenue for Q1 FY2026, representing 106% year-over-year growth, with Q2 FY2026 guidance set at $17.5-17.7 billion in total revenue.

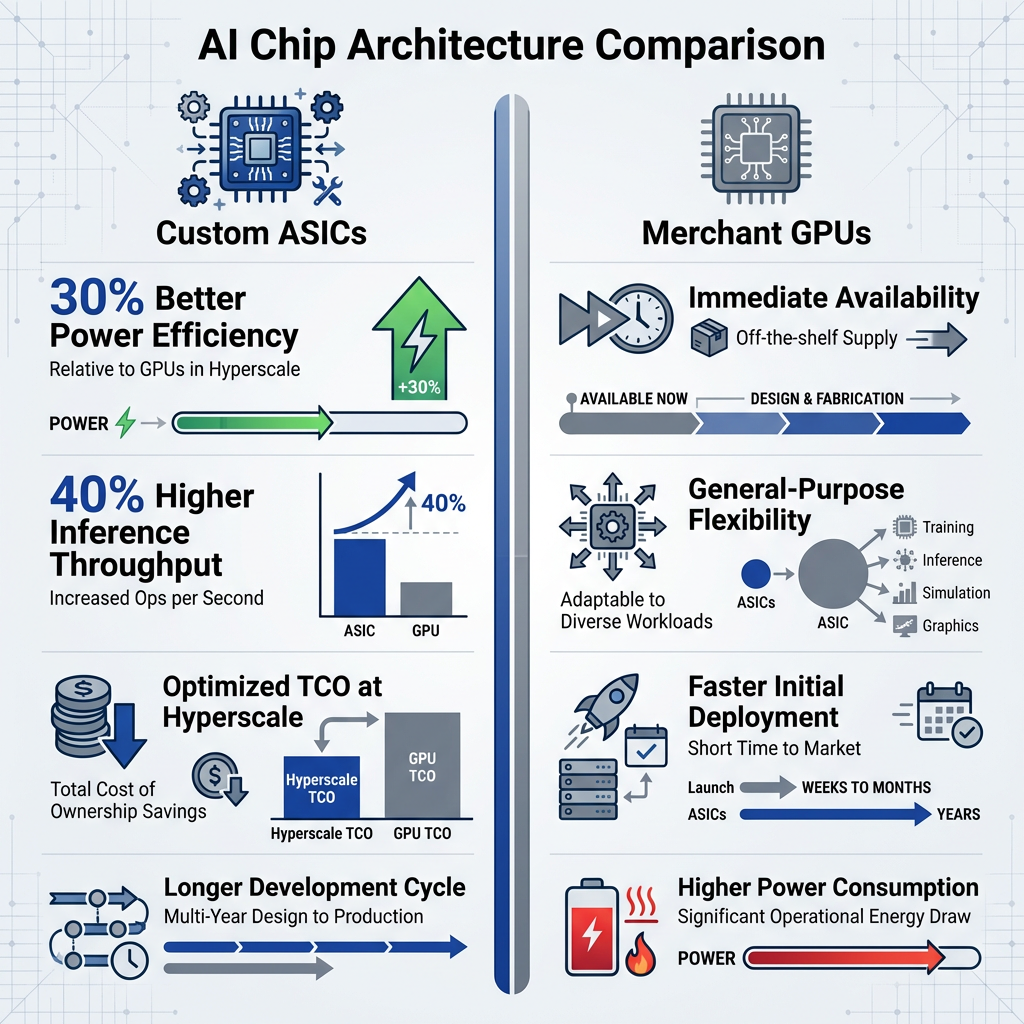

- Custom ASICs deliver up to 30% better power efficiency and 40% higher inference throughput compared to Nvidia merchant GPUs, driving hyperscaler adoption at unprecedented scale.

- Broadcom's 47.3% net margin far exceeds AMD's 14.7%, reflecting the pricing power that comes from deep custom chip partnerships rather than competing in the merchant GPU market.

- Wall Street analysts project the custom AI chip market could reach $100 billion annually by 2027, with ASIC shipments forecast to triple from 2024 to 2027 as hyperscalers shift away from general-purpose solutions.

Broadcom has secured the largest custom AI chip partnership in industry history. The company announced a 10-gigawatt deal with OpenAI in mid-April 2026, a partnership projected to generate more than $100 billion in lifetime value. This agreement cements Broadcom’s position as the dominant player in hyperscaler AI infrastructure, validating the custom ASIC approach over merchant GPU solutions at unprecedented scale.

The OpenAI win follows a pattern of strategic relationships with the world’s largest AI operators. Broadcom reported $8.4 billion in AI revenue for Q1 FY2026, representing 106% year-over-year growth. Analysts project the company will capture roughly 60% of the custom ASIC market by 2027, driven by efficiency advantages that hyperscalers cannot achieve with off-the-shelf alternatives. This analysis examines how Broadcom built this commanding position and what the OpenAI partnership signals for AI infrastructure through the end of the decade.

What Are Custom AI Chips and Why Hyperscalers Want Them

Custom ASICs are purpose-built chips designed from the ground up for specific workloads. Unlike merchant GPUs, which are general-purpose processors sold identically to all customers, ASICs are tailored to a company’s exact requirements. At hyperscaler scale, this distinction translates directly to operational advantage.

The performance delta is substantial. Broadcom’s custom ASICs deliver up to 30% better power efficiency compared to Nvidia GPUs and 40% higher inference throughput for optimised workloads. For companies running AI training and inference across millions of servers, these efficiency gains compound into hundreds of millions of dollars in reduced energy costs and improved computational capacity. The custom approach avoids paying for unused general-purpose capabilities inherent in merchant solutions.
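The energy claim can be made concrete with simple arithmetic. One subtlety: if “30% better power efficiency” is read as 30% more work per unit of energy, the same workload needs 1/1.3 of the energy, a saving of roughly 23% rather than 30%. The sketch below is a hypothetical illustration only; the 1-gigawatt fleet, the $80-per-megawatt-hour electricity price and the constant average power draw are assumptions chosen for the example, not figures from Broadcom or its customers, and it ignores cooling overhead and hardware costs.

```python
# Hypothetical illustration only: the fleet size and electricity price below
# are assumptions, not figures reported by Broadcom or its customers.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours; ignores leap years


def energy_for_same_work(baseline_mwh: float, perf_per_watt_gain: float) -> float:
    """Energy needed for an unchanged workload when performance per watt
    improves by `perf_per_watt_gain` (0.30 means 30% more work per joule)."""
    return baseline_mwh / (1.0 + perf_per_watt_gain)


def annual_energy_saving_usd(avg_power_mw: float, price_per_mwh: float,
                             perf_per_watt_gain: float) -> float:
    """Yearly electricity cost avoided by running the same work on more
    efficient chips, assuming a constant average power draw."""
    baseline_mwh = avg_power_mw * HOURS_PER_YEAR
    custom_mwh = energy_for_same_work(baseline_mwh, perf_per_watt_gain)
    return (baseline_mwh - custom_mwh) * price_per_mwh


if __name__ == "__main__":
    gain = 0.30  # "up to 30% better power efficiency", read as performance per watt
    print(f"Energy reduction for the same work: {1 - 1 / (1 + gain):.1%}")  # 23.1%
    # Assumed: a 1 GW (1,000 MW) fleet paying $80 per MWh.
    saving = annual_energy_saving_usd(1_000.0, 80.0, gain)
    print(f"Annual saving: ${saving / 1e6:,.0f} million")  # $162 million
```

Under these assumed inputs the saving lands in the low hundreds of millions of dollars a year, in line with the order of magnitude described above; because the result scales linearly, halving either the fleet size or the electricity price halves the saving.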

The peer-reviewed IEEE study of Google’s Tensor Processing Unit provides quantitative data from production data centre environments: the first-generation TPU delivered 15-30x higher inference throughput and substantially better performance per watt than the contemporary CPUs and GPUs it was benchmarked against.

Custom ASICs:

- Tailored to specific workload requirements

- Up to 30% better power efficiency and 40% higher inference throughput than general-purpose alternatives for target workloads

- Optimised total cost of ownership at hyperscale

- Requires deep design partnership and longer development cycles

Merchant GPUs:

- Off-the-shelf availability with immediate deployment

- General-purpose flexibility across varied applications

- Faster time to initial deployment

- Higher power consumption per equivalent workload

Broadcom’s role in this ecosystem centres on design expertise rather than manufacturing. The company provides the intellectual property and engineering capability that enables hyperscalers to create custom solutions. TSMC fabricates the actual silicon, creating a design-to-manufacture partnership model. This structure allows hyperscalers to control their AI compute destiny whilst leveraging Broadcom’s decades of semiconductor design experience and IP portfolio.



The OpenAI Deal: Anatomy of a $100 Billion Partnership

The 10-gigawatt capacity commitment represents an extraordinary infrastructure buildout. For context, 10 gigawatts is comparable to the combined power draw of multiple large-scale data centre complexes. Deliveries will span from H2 2026 through 2029, indicating a multi-year strategic partnership rather than a transactional purchase.
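To translate the 10-gigawatt figure into annual energy, here is a minimal sketch. It assumes that “10 gigawatts” refers to the power draw of the deployed systems; the 70% utilisation case is an illustrative assumption, not a term of the deal.

```python
# Rough scale check for a 10 GW commitment. Assumptions (not from the deal
# terms): "10 gigawatts" is the power draw of the deployed systems, and the
# 70% utilisation case is purely illustrative.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours; ignores leap years


def annual_energy_twh(capacity_gw: float, utilisation: float) -> float:
    """Energy consumed in one year by `capacity_gw` of load at the given
    average utilisation (0.0 to 1.0), in terawatt-hours."""
    if not 0.0 <= utilisation <= 1.0:
        raise ValueError("utilisation must be between 0 and 1")
    gwh = capacity_gw * HOURS_PER_YEAR * utilisation  # GW x hours = GWh
    return gwh / 1_000.0  # 1 TWh = 1,000 GWh


if __name__ == "__main__":
    print(f"{annual_energy_twh(10.0, 1.0):.1f} TWh per year at full load")         # 87.6
    print(f"{annual_energy_twh(10.0, 0.7):.1f} TWh per year at 70% utilisation")   # 61.3
```

Because deliveries are staggered from H2 2026 through 2029, the full 10 GW would not be drawing power in the early years; these figures describe an eventual run-rate rather than any single year of the contract.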

The $100 billion+ lifetime value projection positions this single partnership as potentially generating more revenue than Broadcom’s entire historical AI business. This validates the custom ASIC model at the highest tier of AI infrastructure deployment.

The partnership’s strategic implications for OpenAI are substantial. Moving to custom silicon reduces the company’s dependence on Nvidia, potentially improving margins on compute whilst providing differentiated infrastructure unavailable to competitors. The integration of Broadcom’s Tomahawk networking creates a complete stack optimised for both AI training and inference workloads. This vertical integration mirrors the approach taken by Google with TPUs and Meta with custom AI accelerators.

The deal signals a broader industry inflection point. If the leading AI research laboratory is choosing custom ASICs over merchant GPUs for its next-generation infrastructure, other hyperscalers operating at similar scale will likely accelerate their own custom silicon programmes. The OpenAI partnership demonstrates that custom approaches have matured beyond early adopters into the mainstream of AI infrastructure strategy.

Broadcom’s Hyperscaler Customer Base: Beyond OpenAI

Counterpoint Research identifies Broadcom as the primary custom ASIC design partner for virtually every major hyperscaler. This isn’t concentrated exposure to a single customer but systemic adoption by the companies building AI infrastructure at the largest scale globally.

| Hyperscaler | Partnership Focus | Notable Details |
|---|---|---|
| Google | TPU design collaboration | Longstanding partnership, multiple TPU generations |
| Meta | Custom AI chips | Works alongside AMD’s 6-GW GPU deployment |
| Anthropic | TPU infrastructure deal | 3.5 GW capacity, deliveries starting 2027 |
| OpenAI | Custom ASICs + networking | 10 GW capacity, $100B+ lifetime value |
| Amazon | Trainium design partnership | Custom ASIC for AWS AI services |
| Microsoft | Maia chip collaboration | Custom silicon for Azure AI infrastructure |
| ByteDance | Custom ASIC design | Supporting TikTok’s AI recommendation systems |
| Apple | Custom AI accelerators | Integrated with Apple Silicon roadmap |

This diversification provides both risk mitigation and multiple growth vectors. No single customer dominates Broadcom’s AI revenue, though certain partnerships represent larger deployments than others. The market is diversifying beyond the early Google and AWS duopoly, with Meta and Microsoft significantly ramping their custom silicon deployments. This broadening adoption validates that custom ASICs have moved from niche technology to essential infrastructure for hyperscale AI operations.

Competitive Analysis: Broadcom vs. Nvidia, AMD, and In-House Solutions

Broadcom competes across three distinct dimensions. Against Nvidia’s merchant GPUs, the company offers efficiency advantages. Against AMD, Broadcom demonstrates superior margin structure. Against potential hyperscaler in-house development, Broadcom provides deep expertise that makes partnership more attractive than independent chip design.

Nvidia competitive comparison:

- Efficiency edge: Broadcom ASICs deliver up to 30% better power efficiency for target workloads

- Throughput advantage: 40% higher inference throughput for custom-optimised applications

- Cost structure: Custom approach avoids paying for unused general-purpose capabilities

- Strategic alignment: Hyperscalers seeking differentiation favour custom over commodity solutions

The AMD comparison centres on business model quality rather than direct technical competition. Broadcom’s 47.3% net margin compared to AMD’s 14.7% reflects fundamentally different competitive positions. AMD competes more directly with Nvidia on merchant GPUs whilst Broadcom’s custom relationships command pricing power that flows through to industry-leading profitability. This margin advantage enables sustained research and development investment that reinforces technical leadership.

Broadcom’s commanding position reflects broader semiconductor sector momentum and valuation dynamics, where custom ASIC providers are capturing pricing power whilst merchant GPU suppliers face increasing competitive pressure.

The in-house development question addresses why hyperscalers partner with Broadcom rather than designing chips independently. Broadcom provides decades of semiconductor IP, design expertise, and execution capability that would require years to replicate internally. The company’s relationship with TSMC ensures access to leading-edge process nodes and advanced packaging technologies. For hyperscalers, partnership accelerates time to deployment whilst reducing technical risk compared to building internal chip design teams from scratch.

Emerging competitive pressure includes the Google-MediaTek alliance, which adds another player to the custom ASIC landscape. Marvell’s share stands at 8% despite growth in its shipments, though its presence indicates the market can support multiple participants. According to Counterpoint Research, Broadcom’s projected 60% market share by 2027 reflects sustainable competitive advantages built on technical superiority, customer relationships, and execution capability rather than temporary market positioning.

Counterpoint Research’s custom AI accelerator market analysis provides the industry-standard methodology for market share projections and CAGR forecasts that underpin the hyperscaler ASIC segment’s growth trajectory through 2027.


Financial Performance and Market Outlook Through 2027

Broadcom reported $8.4 billion in AI revenue for Q1 FY2026, representing 106% year-over-year growth. This acceleration demonstrates the business is scaling rapidly as hyperscaler deployments expand. The strong performance drove a 30% post-earnings stock surge as investors recognised the OpenAI deal’s significance and the broader momentum in custom AI chip adoption.

Broadcom’s 30% post-earnings surge reflects strong execution, but with a market capitalisation of approximately $1.9 trillion, investors evaluating positions should weigh technology sector valuations and entry points carefully.

Key financial metrics:

- Q2 FY2026 guidance: $17.5-17.7 billion revenue (24% year-over-year growth)

- EPS trajectory: 32% year-over-year growth

- AI segment outlook: $30 billion revenue with $17 billion operating income

- Margin strength: 47.3% net margin demonstrates pricing power

- Current valuation: Approximately $1.9 trillion market cap, trading in the $390-406 range

The forward opportunity is substantial. Wall Street analysts project that annual custom AI chip revenue will exceed $100 billion by 2027, and that the addressable market could reach $75 billion within three years. According to MarketBeat analyst Thomas Hughes, custom chips could more than double the business if this addressable market is captured. ASIC shipments are projected to triple from 2024 to 2027, driven by hyperscaler infrastructure buildouts and the ongoing shift from merchant GPUs to custom silicon.
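A tripling of ASIC shipments over three years implies a steep compound growth rate. A minimal sketch, assuming 2024 is the base year (three compounding periods) and that “triple” means 2027 shipments are three times 2024 shipments; the index value of 1.0 is a placeholder, not a unit count.

```python
# Implied annual growth rate behind "ASIC shipments triple from 2024 to 2027".
# Assumption: 2024 is the base year, giving three compounding periods.


def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years`."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end and years must all be positive")
    return (end / start) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    rate = implied_cagr(1.0, 3.0, 2027 - 2024)
    print(f"Implied ASIC shipment CAGR: {rate:.1%}")  # 44.2%
```

For comparison, a 29% annual rate compounds to only about 2.1x over three years (1.29 cubed is roughly 2.15), so a tripling implies growth well above that pace.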

What the OpenAI Win Means for AI Infrastructure’s Future

The OpenAI partnership validates Broadcom’s thesis that custom ASICs will displace merchant GPUs for the largest AI workloads. Combined with existing hyperscaler relationships spanning Google, Meta, Amazon, Microsoft, and others, Broadcom appears positioned as the central infrastructure partner for the next wave of AI scaling. The company’s projected 60% market share, deep customer relationships, and efficiency advantages (up to 30% better power efficiency and 40% higher inference throughput) create a defensible competitive position.

The broader industry implications point to acceleration in the shift from merchant GPUs to custom ASICs. The AI chip market is projected to grow at a 29% CAGR through 2030, with custom ASICs reaching $100 billion+ annually by 2027. This would represent a fundamental restructuring of AI compute infrastructure, moving from general-purpose solutions towards purpose-built accelerators optimised for specific workloads. Training and inference requirements are diverging, creating opportunities for tailored solutions that merchant approaches cannot match.

Broadcom’s combination of technical superiority, customer diversification, margin strength, and execution capability positions the company at the centre of AI infrastructure buildout through the end of the decade. The OpenAI deal represents the most significant single win, but the pattern of hyperscaler adoption suggests it is part of a sustained trend rather than an isolated event. As AI models scale and compute requirements compound, the efficiency advantages of custom silicon become increasingly material to hyperscaler economics and competitive positioning.

Whilst Broadcom’s OpenAI partnership demonstrates confidence in custom silicon economics, investors should consider broader AI infrastructure investment risks including deployment timelines, utilisation rates, and return on capital assumptions embedded in these multi-billion dollar commitments.

This article is for informational purposes only and should not be considered financial advice. Investors should conduct their own research and consult with financial professionals before making investment decisions.

Frequently Asked Questions

What are custom AI chips and how do they differ from standard GPUs?

Custom AI chips, or ASICs, are purpose-built processors designed for a specific company's workloads, delivering up to 30% better power efficiency and 40% higher inference throughput compared to general-purpose GPUs like those sold by Nvidia.

What is the Broadcom and OpenAI chip deal and why does it matter?

Broadcom announced a 10-gigawatt custom ASIC partnership with OpenAI in April 2026, projected to generate over $100 billion in lifetime value, making it the largest custom AI chip deal in industry history and validating the shift away from merchant GPU solutions.

How much AI revenue did Broadcom generate in Q1 FY2026?

Broadcom reported $8.4 billion in AI revenue for Q1 FY2026, representing 106% year-over-year growth, driven by expanding hyperscaler deployments and the growing adoption of custom ASICs.

Which major companies use Broadcom for custom AI chip design?

Broadcom serves as the primary custom ASIC design partner for Google, Meta, Amazon, Microsoft, Apple, Anthropic, OpenAI, and ByteDance, giving it broad exposure across virtually every major AI infrastructure operator globally.

What is Broadcom's projected AI chip market share by 2027?

Analysts at Counterpoint Research project Broadcom will capture approximately 60% of the custom ASIC market by 2027, underpinned by deep hyperscaler relationships, superior efficiency advantages, and a strong semiconductor IP portfolio.